The 2026 Roadmap: From Ideation to Building

Three weeks ago, I started writing about the regulated worker’s AI stack. Ten posts later, I have a clearer picture of both the problems and the potential solutions.

Now comes the hard part: deciding what to actually build.

This post synthesizes the ideation phase into concrete priorities. Some of these will become features in Claude Buddy. Some are bigger than one tool. Some are best served by documentation and frameworks rather than code.

Let’s inventory what emerged and decide where to focus.

What We Explored

Week 1: The Regulated Worker’s AI Stack

The Compliance Tax introduced “compliant velocity”—the realistic productivity multiplier when you account for governance overhead. Not 10x, but 3x. Still transformational.

Your AI Toolkit is Probably Illegal provided an audit framework: data residency, retention, discoverability, access controls. Most consumer AI tools fail these checks.

The Reference Architecture proposed a 4-layer model: Foundation Models → Development Tools → Productivity Tools → Personal Tools, with hard boundaries between Layers 3 and 4.

Week 2: The Identity Problem

Non-Human Identities framed what AI agents need: unique identity, permission scopes, audit trails, lifecycle governance.

The Agent Impersonation Problem exposed how AI currently operates under borrowed human credentials, and proposed three models: borrowed → supervised → autonomous identity.

The AI Context Portability Problem explored knowledge ownership when AI assistants accumulate institutional context. Gray zones everywhere.

Week 3: Building Toward Trust

The Trust Ladder defined five levels of AI autonomy (0-4) with graduation criteria for moving between them.

OpenClaw and the Trust Ladder applied the framework to a real-world case: 145,000 stars’ worth of demand for AI agents, with critical trust infrastructure still missing.

Validation Hooks provided the technical foundation: pre-execution gates, post-execution verification, confidence scoring.

Buildable Projects Identified

From the nine preceding posts, several buildable projects emerged:

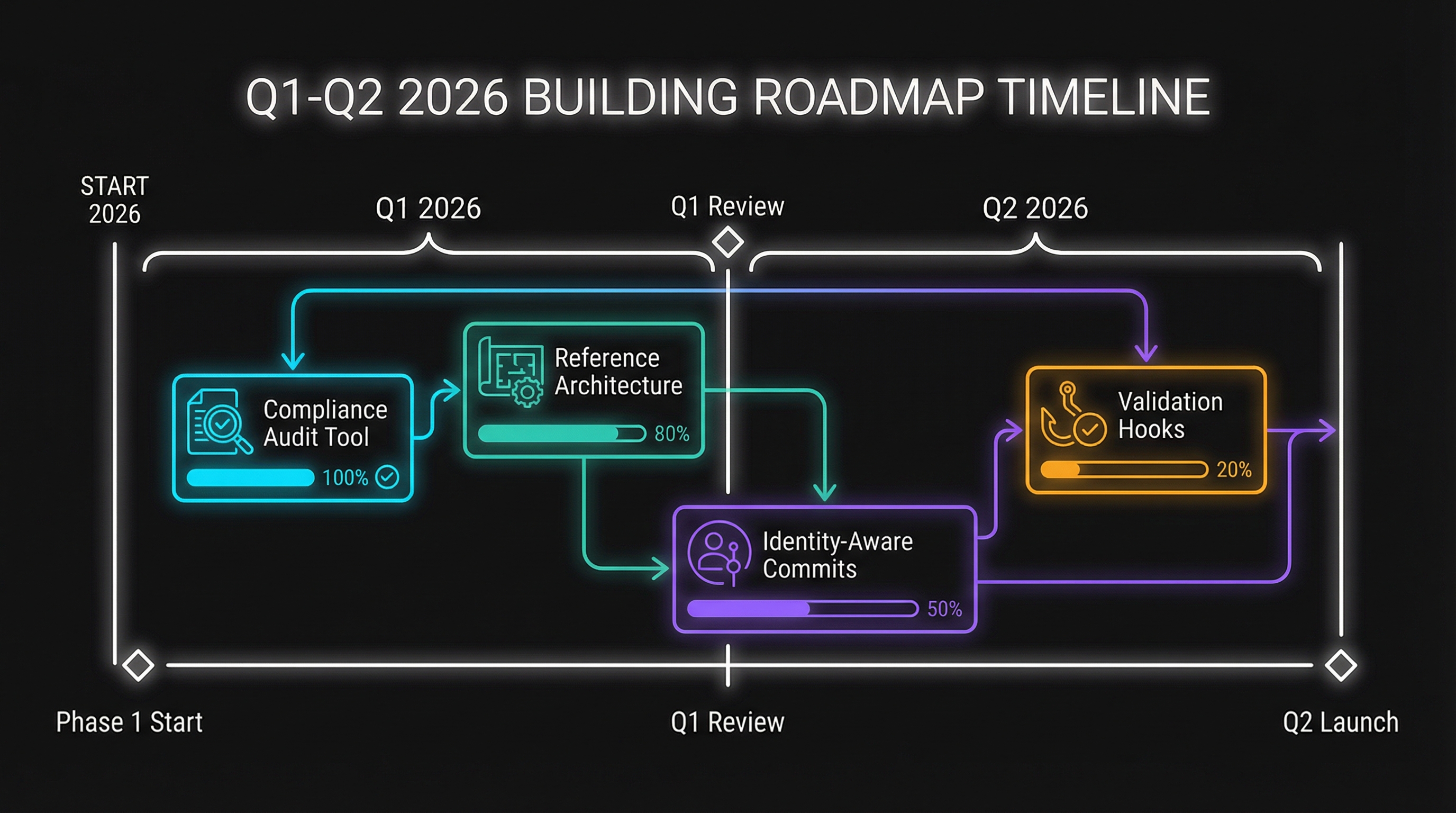

Q1 — Foundations

- Compliant Velocity Metrics (Post 1) — Low complexity, medium impact

- AI Tool Compliance Audit Template (Post 2) — Low complexity, high impact

- Reference Architecture Documentation (Post 3) — Medium complexity, high impact

- AI Knowledge Policy Template (Post 6) — Low complexity, medium impact

Q2 — Implementation

- Identity-Aware Commits (Post 5) — Medium complexity, medium impact

- Trust Ladder Assessment Tool (Post 7) — Medium complexity, high impact

- Validation Hooks for Claude Buddy (Post 9) — High complexity, very high impact

Q1 2026 Focus: Foundations

The first quarter is about establishing foundations—documentation, templates, and lightweight tooling that others can use immediately.

1. Reference Architecture Documentation

What: Turn the 4-layer model into a comprehensive guide that organizations can adopt. Include decision trees, implementation patterns, and vendor evaluation criteria.

Deliverable: A standalone document or microsite explaining the architecture, with practical guidance for implementation.

Why first: Everything else builds on having a clear mental model. This is the foundation.
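One decision-tree question the guide will need to answer is whether a proposed integration crosses the boundary between Layers 3 and 4. Here is a minimal sketch of how that check could be encoded. It assumes the boundary is a line between the two layers, so any data flow with one end in Layers 1 to 3 and the other in Layer 4 crosses it. The `Layer` enum and `crosses_hard_boundary` function are hypothetical names, not part of any published guide.

```python
from enum import IntEnum


class Layer(IntEnum):
    """The four layers of the reference architecture, bottom to top."""
    FOUNDATION_MODELS = 1
    DEVELOPMENT_TOOLS = 2
    PRODUCTIVITY_TOOLS = 3
    PERSONAL_TOOLS = 4


# Assumption: the hard boundary sits between Layer 3 and Layer 4, so any data
# flow with one end in Layers 1-3 and the other end in Layer 4 crosses it.
FIRST_LAYER_ABOVE_BOUNDARY = Layer.PERSONAL_TOOLS


def crosses_hard_boundary(source: Layer, target: Layer) -> bool:
    """True if moving data between source and target crosses the 3/4 boundary."""
    low, high = sorted((source, target))
    return low < FIRST_LAYER_ABOVE_BOUNDARY <= high
```

Under this sketch, a personal note-taking assistant reading from an enterprise productivity suite (Layer 3 to Layer 4) is flagged, while a development tool calling a foundation model (Layer 2 to Layer 1) is not.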

2. AI Tool Compliance Audit Template

What: A structured template and checklist for evaluating AI tools against the four criteria (residency, retention, discoverability, access controls).

Deliverable: Spreadsheet template, assessment guide, and scoring rubric that compliance teams can use immediately.

Why prioritize: High impact, low effort. Every regulated organization needs this. Generic enough to be widely useful.
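The spreadsheet will be the primary format, but the scoring rubric is easier to pin down as code first. This is a minimal sketch assuming a three-point scale (fail, partial, pass) per criterion and a rule that any single fail blocks approval; the scale, the `ToolAudit` class, and the approval rule are all hypothetical choices, not a finished rubric.

```python
from dataclasses import dataclass
from enum import IntEnum


class Rating(IntEnum):
    FAIL = 0
    PARTIAL = 1
    PASS = 2


# The four audit criteria from the series.
CRITERIA = ("data_residency", "retention", "discoverability", "access_controls")


@dataclass
class ToolAudit:
    tool: str
    ratings: dict[str, Rating]

    def __post_init__(self) -> None:
        # Refuse incomplete audits rather than silently scoring them.
        missing = [c for c in CRITERIA if c not in self.ratings]
        if missing:
            raise ValueError(f"{self.tool}: unrated criteria {missing}")

    def score(self) -> int:
        """Total score from 0 (fails everything) to 8 (passes everything)."""
        return sum(int(self.ratings[c]) for c in CRITERIA)

    def approved(self) -> bool:
        """Assumption: a FAIL on any single criterion blocks approval."""
        return all(self.ratings[c] != Rating.FAIL for c in CRITERIA)
```

Keeping the total score separate from the approval gate matters: a tool can score well overall and still be unusable because it fails one criterion outright.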

3. AI Knowledge Policy Template

What: Sample policy language for organizations to define what knowledge can and cannot be shared with AI systems.

Deliverable: Editable policy document with sections for different knowledge categories, clear examples, and implementation guidance.

Why prioritize: Fills an immediate gap. Most organizations have no policy on this. Providing a starting point accelerates adoption.
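The policy document itself will be prose, but its core is a table of knowledge categories and permitted destinations. Here is a minimal sketch of that table in machine-readable form. The four categories, the two destination classes, and the `may_share` helper are hypothetical placeholders that an organization would replace with its own classification scheme.

```python
from enum import Enum


class Knowledge(Enum):
    PUBLIC = "public"              # already published material
    INTERNAL = "internal"          # internal process docs, non-sensitive code
    CONFIDENTIAL = "confidential"  # client information, unreleased strategy
    RESTRICTED = "restricted"      # regulated data such as personal records


class Destination(Enum):
    APPROVED_ENTERPRISE_AI = "approved_enterprise_ai"
    PERSONAL_AI = "personal_ai"


# Hypothetical policy table; any pair not listed is denied by default.
ALLOWED = {
    (Knowledge.PUBLIC, Destination.APPROVED_ENTERPRISE_AI),
    (Knowledge.PUBLIC, Destination.PERSONAL_AI),
    (Knowledge.INTERNAL, Destination.APPROVED_ENTERPRISE_AI),
    (Knowledge.CONFIDENTIAL, Destination.APPROVED_ENTERPRISE_AI),
}


def may_share(category: Knowledge, destination: Destination) -> bool:
    """Default-deny lookup: only explicitly allowed pairs are permitted."""
    return (category, destination) in ALLOWED
```

Default-deny is the important design choice: a new category or a new class of AI system is blocked until someone makes an explicit decision about it.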

4. Compliant Velocity Metrics Dashboard

What: A simple way to track actual productivity gains while accounting for compliance overhead.

Deliverable: Metrics definitions, measurement approaches, and a basic dashboard concept (potentially as a Claude Buddy feature).

Why prioritize: Helps organizations demonstrate AI value realistically. Counters the “10x or nothing” narrative with evidence.
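The core metric can be sketched in a few lines. This assumes compliant velocity is the ratio of the time a task would take without AI to the time it actually takes with AI, counting compliance overhead (review, documentation, approvals) as time spent. The field names and the hours-based model are assumptions for illustration, not settled metric definitions.

```python
from dataclasses import dataclass


@dataclass
class TaskMeasurement:
    baseline_hours: float    # estimated time to do the task without AI
    ai_hours: float          # hands-on time with AI assistance
    compliance_hours: float  # review, documentation, approvals, audit prep

    def raw_multiplier(self) -> float:
        """The headline number: ignores governance overhead."""
        return self.baseline_hours / self.ai_hours

    def compliant_velocity(self) -> float:
        """The realistic number: governance overhead counts as time spent."""
        return self.baseline_hours / (self.ai_hours + self.compliance_hours)


def aggregate_compliant_velocity(tasks: list[TaskMeasurement]) -> float:
    """Ratio of total baseline time to total actual time across tasks."""
    total_baseline = sum(t.baseline_hours for t in tasks)
    total_actual = sum(t.ai_hours + t.compliance_hours for t in tasks)
    return total_baseline / total_actual
```

With a hypothetical task of `TaskMeasurement(10, 1, 2)`, `raw_multiplier()` is 10.0 while `compliant_velocity()` is about 3.3, which is the gap between the headline and the realistic figure. The aggregate uses a ratio of sums rather than an average of ratios, so a handful of small tasks with extreme multipliers can’t dominate the result.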

Q2 2026 Focus: Implementation

Second quarter shifts to building features—actual code in Claude Buddy that implements the concepts from the series.

5. Validation Hooks System

What: Implement the full validation architecture in Claude Buddy: pre-hooks, post-hooks, confidence scoring, escalation.

Deliverable: A Claude Buddy feature that enables configurable validation pipelines using the patterns described in Post 9.

Why high priority: This is the technical enabler for everything else. You can’t climb the trust ladder without validation infrastructure.

Implementation approach:

- Phase 1: Pre-hook framework (scope validation, pattern blocklist)

- Phase 2: Post-hook framework (output validation, diff analysis)

- Phase 3: Confidence scoring and escalation

- Phase 4: Metrics and graduation support
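Phases 1 through 3 fit together roughly like this. It is a minimal sketch, not the Claude Buddy design: pre-hooks can block an action before it runs, post-hooks score the result, and the lowest score decides whether the change is accepted or escalated to a human. The `Action` shape, the hook factories, the diff-size heuristic, and the 0.8 threshold are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    """A change the agent proposes to make (hypothetical shape)."""
    description: str
    paths: list[str]   # files the agent intends to touch
    command: str       # shell command the agent intends to run


@dataclass
class HookResult:
    allowed: bool
    reason: str = ""


def scope_hook(allowed_prefixes: list[str]) -> Callable[[Action], HookResult]:
    """Pre-hook: block actions that touch files outside the allowed prefixes."""
    def check(action: Action) -> HookResult:
        for path in action.paths:
            if not any(path.startswith(prefix) for prefix in allowed_prefixes):
                return HookResult(False, f"out of scope: {path}")
        return HookResult(True)
    return check


def blocklist_hook(patterns: list[str]) -> Callable[[Action], HookResult]:
    """Pre-hook: block commands matching any regex in the blocklist."""
    compiled = [re.compile(p) for p in patterns]

    def check(action: Action) -> HookResult:
        for rx in compiled:
            if rx.search(action.command):
                return HookResult(False, f"blocked pattern: {rx.pattern}")
        return HookResult(True)
    return check


def diff_size_hook(max_changed_lines: int) -> Callable[[Action, str], float]:
    """Post-hook: confidence falls as the diff grows past a size budget."""
    def score(action: Action, diff: str) -> float:
        changed = sum(
            1 for line in diff.splitlines()
            if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
        )
        return 1.0 if changed <= max_changed_lines else max_changed_lines / changed
    return score


def run_pipeline(action, execute, pre_hooks, post_hooks, threshold=0.8):
    """Pre-hooks gate execution; the lowest post-hook score decides escalation."""
    for hook in pre_hooks:
        result = hook(action)
        if not result.allowed:
            return "blocked", result.reason
    diff = execute(action)
    confidence = min((hook(action, diff) for hook in post_hooks), default=1.0)
    if confidence < threshold:
        return "escalate", f"confidence {confidence:.2f} is below {threshold}"
    return "accepted", diff
```

Taking the minimum across post-hooks is a deliberately conservative choice: one weak signal is enough to pull a human in, which suits regulated settings better than averaging scores together.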

6. Trust Ladder Assessment Tool

What: A Claude Buddy feature that helps organizations assess their current trust level for each task category and identify the graduation criteria for moving up.

Deliverable: Assessment interface in Claude Buddy that tracks task types, measures accuracy, and recommends trust level adjustments.

Why prioritize: Operationalizes the trust ladder framework. Without measurement, trust calibration is just theory.
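The measurement loop at the heart of the assessment could look something like this. It is a minimal sketch assuming that graduation is driven by observed accuracy per task category over a minimum number of attempts. The 50-sample minimum and the 95% promotion and 85% demotion thresholds are hypothetical defaults, and the function only recommends a level; a human still decides.

```python
from dataclasses import dataclass

MIN_LEVEL, MAX_LEVEL = 0, 4  # the five trust levels, 0 through 4


@dataclass
class CategoryRecord:
    """Outcome history for one task category at its current trust level."""
    category: str
    level: int
    attempts: int = 0
    correct: int = 0

    def record(self, was_correct: bool) -> None:
        self.attempts += 1
        if was_correct:
            self.correct += 1

    @property
    def accuracy(self) -> float:
        return self.correct / self.attempts if self.attempts else 0.0


def recommend_level(record: CategoryRecord, min_samples: int = 50,
                    promote_at: float = 0.95, demote_below: float = 0.85) -> int:
    """Recommend (never apply) a level change based on observed accuracy."""
    if record.attempts < min_samples:
        return record.level  # not enough evidence to move in either direction
    if record.accuracy >= promote_at:
        return min(record.level + 1, MAX_LEVEL)
    if record.accuracy < demote_below:
        return max(record.level - 1, MIN_LEVEL)
    return record.level
```

The gap between the promotion and demotion thresholds is intentional: it keeps a category from bouncing between levels when its accuracy hovers near a single cutoff.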

7. Identity-Aware Commits

What: Implement the supervised identity model from Post 5 for git operations in Claude Buddy.

Deliverable: Commits that clearly attribute AI vs. human contribution, with configurable identity modes.

Implementation approach:

- Agent identity in commit metadata

- Authorization tracking (explicit/batch/policy)

- Audit export for compliance reporting

- Integration with existing git workflows
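Git already has a home for this kind of metadata: trailers, the `Key: value` lines at the end of a commit message, which `git interpret-trailers` can parse and recent versions of `git commit` can add via `--trailer`. Here is a minimal sketch of building and reading such messages, assuming three hypothetical trailer keys (`AI-Agent`, `Authorized-By`, `Authorization-Mode`). These are not an established convention, and the simple parser only handles the single-line trailers this code writes.

```python
from dataclasses import dataclass
from enum import Enum


class Authorization(Enum):
    EXPLICIT = "explicit"  # a human approved this specific change
    BATCH = "batch"        # a human approved a batch of changes
    POLICY = "policy"      # the change falls under a pre-approved policy


@dataclass
class AgentAttribution:
    agent_id: str          # identity of the agent that made the change
    human_principal: str   # the human the agent acted on behalf of
    authorization: Authorization


def build_commit_message(subject: str, body: str, attr: AgentAttribution) -> str:
    """Append hypothetical attribution trailers as the final paragraph."""
    trailers = [
        f"AI-Agent: {attr.agent_id}",
        f"Authorized-By: {attr.human_principal}",
        f"Authorization-Mode: {attr.authorization.value}",
    ]
    parts = [subject] + ([body] if body else []) + ["\n".join(trailers)]
    return "\n\n".join(parts) + "\n"


def parse_trailers(message: str) -> dict[str, str]:
    """Read 'Key: value' lines from the last paragraph, e.g. for audit export."""
    last_block = message.strip().split("\n\n")[-1]
    trailers = {}
    for line in last_block.splitlines():
        key, sep, value = line.partition(": ")
        if sep:
            trailers[key] = value
    return trailers
```

Because trailers live in the commit message, the attribution travels with the history through clones, mirrors, and exports, with no separate database to keep in sync.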

What I’m NOT Building (Yet)

Some projects from the series are important but not immediate priorities:

Autonomous Identity Framework

Full autonomous identity (Model 3 from Post 5) requires more than one tool can provide. This needs industry standards, platform support, and legal clarity. I’ll monitor developments but won’t build custom solutions prematurely.

Layer 3 Productivity Tools

The gap in enterprise-grade productivity AI tools is real, but building new productivity applications is beyond Claude Buddy’s scope. This is a market opportunity, not a feature request.

Regulatory Compliance Certification

Formal compliance certifications for AI tooling (for example, SOC 2 or ISO 27001 audits scoped to AI systems) require organizational resources beyond what I can provide. I’ll document what’s needed; others will need to execute.

Community Input Requested

This roadmap reflects my priorities, but I don’t have perfect visibility into what matters most to others. I’d love input on:

Prioritization: Should something move up or down? Is there a critical gap I’ve missed?

Use cases: What specific scenarios would these tools need to support? What edge cases am I not seeing?

Integration: How would these features need to integrate with existing tools and workflows?

Alternatives: Are there existing solutions for some of these that I should recommend rather than build?

The Building Phase

Three weeks of ideation produced:

- A mental model (the 4-layer architecture)

- A measurement framework (compliant velocity, trust ladder)

- A technical pattern (validation hooks)

- Identified gaps (identity, knowledge ownership)

Now the work shifts from thinking to building. The next two quarters will produce actual artifacts (documentation, templates, and code) that make these ideas actionable.

Some of this will work. Some will need iteration. Some may prove to be wrong approaches to real problems. That’s fine. The point is to move from theory to practice, from ideation to implementation.

The regulated worker’s AI stack won’t build itself. But with clear priorities and concrete deliverables, it becomes tractable.

Series Index

For reference, here are all ten posts in this series:

Week 1: The Regulated Worker’s AI Stack

- The Compliance Tax — Why 3x is transformational

- Your AI Toolkit is Probably Illegal — Audit framework

- The Reference Architecture — 4-layer model

Week 2: The Identity Problem

- Non-Human Identities — What agents need

- The Agent Impersonation Problem — Borrowed vs. supervised identity

- The AI Context Portability Problem — Knowledge ownership

Week 3: Building Toward Trust

- The Trust Ladder — 5 levels of AI autonomy

- OpenClaw and the Trust Ladder — Real-world trust analysis

- Validation Hooks — Technical implementation

- The 2026 Roadmap — This post

This concludes the ideation series. Building updates will follow as work progresses on the Q1 and Q2 priorities.

Have thoughts on the roadmap? Want to collaborate on any of these projects? Find me on X or LinkedIn.