Non-Human Identities: When AI Agents Need Employee Badges

Here’s a question that keeps me up at night: who approved that action?

When a human employee makes a decision—approves a loan, deploys code, sends a client communication—we know who did it. Their identity is attached to the action. If something goes wrong, there’s accountability. If regulators ask, we have answers.

When an AI agent takes the same action, who approved it?

The reference architecture I outlined last week defines where AI tools live in your stack. But it doesn’t address a deeper question: how do we identify the AI agents operating within that architecture?

This is the problem of Non-Human Identity.

What is Non-Human Identity?

Non-Human Identity (NHI) is a security concept that’s been around for a while, primarily in the context of service accounts, API keys, and automated systems. The core idea: not everything that acts in your systems is a human, and non-human actors need identity management too.

Traditional NHI includes:

- Service accounts that run batch processes

- API keys that authorize system integrations

- Bots, such as robotic process automation (RPA) bots, that execute automated workflows

- Scheduled jobs that perform maintenance tasks

AI agents are the newest—and most complex—addition to this category.

Why AI Agents Are Different

Service accounts are predictable. They do what they’re programmed to do, the same way, every time. You can audit the code, review the logic, and understand exactly what actions the service account can take.

AI agents are unpredictable by design. That’s their value—they handle situations that weren’t explicitly programmed. They interpret natural language. They make judgment calls. They take actions that no human specifically defined.

This creates a fundamentally different identity challenge:

| Aspect | Traditional Service Account | AI Agent |
|---|---|---|
| Actions | Predefined, deterministic | Dynamic, contextual |
| Scope | Static permissions | May need dynamic scoping |
| Audit | What it did | What it did AND why |
| Accountability | Clear owner | Unclear attribution |

The service account that runs your nightly backup either succeeds or fails. The AI agent that responds to customer inquiries might give different answers to similar questions, escalate unexpectedly, or take actions you didn’t anticipate.

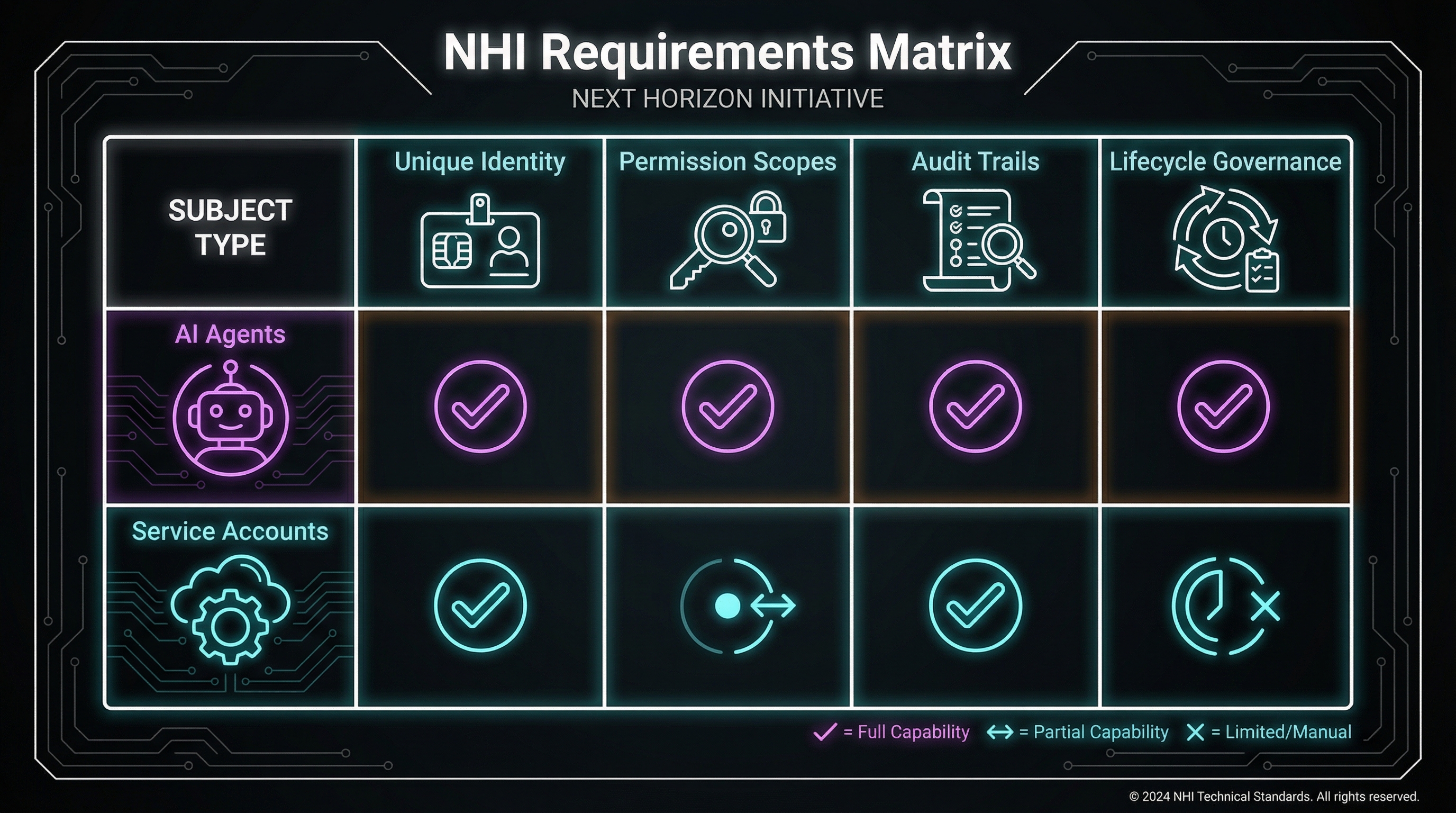

The Four Requirements

Any Non-Human Identity solution for AI agents needs to address four core requirements:

1. Unique Identity

Every AI agent instance needs a unique, verifiable identity. Not “the Claude API” but “Agent-7a3f deployed in production for customer service on 2026-01-15.”

Why it matters: When something goes wrong, you need to know which specific agent did it. Not “an AI” but “this exact agent with this exact configuration.”

What this looks like:

- Agent instances have unique identifiers

- Identifiers are immutable once assigned

- Identifiers include deployment context (environment, purpose, timestamp)

- Identifiers are attached to every action the agent takes

2. Permission Scopes

AI agents need defined boundaries on what they can do. This is harder than it sounds because agents are designed to be flexible.

Why it matters: Regulators ask “could this agent access customer data?” You need to answer with precision, not “maybe, depending on how it interpreted the request.”

What this looks like:

- Explicit permission grants (can read customer data, cannot modify)

- Context-aware scoping (can access data for assigned customer only)

- Action-type restrictions (can query but not update)

- Rate limiting and usage caps
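A minimal sketch of how explicit grants, customer-level scoping, and a usage cap might be checked before an agent acts. The `PermissionScope` class, action names such as `customer.read`, and the one-minute window are illustrative assumptions, not the API of any particular platform.

```python
import time
from dataclasses import dataclass, field
from typing import FrozenSet, List, Optional, Tuple


@dataclass
class PermissionScope:
    """Hypothetical scope: explicit grants, a customer boundary, and a usage cap."""
    allowed_actions: FrozenSet[str]
    assigned_customers: FrozenSet[str]
    max_actions_per_minute: int = 60
    _recent: List[float] = field(default_factory=list)  # times of allowed calls

    def check(self, action: str, customer_id: str,
              now: Optional[float] = None) -> Tuple[bool, str]:
        """Return (allowed, reason) so every denial can be logged precisely."""
        now = time.time() if now is None else now
        if action not in self.allowed_actions:
            return False, "action not granted"
        if customer_id not in self.assigned_customers:
            return False, "customer out of scope"
        # Keep only calls from the last 60 seconds, then apply the cap.
        self._recent = [t for t in self._recent if now - t < 60]
        if len(self._recent) >= self.max_actions_per_minute:
            return False, "rate limit exceeded"
        self._recent.append(now)
        return True, "ok"


scope = PermissionScope(
    allowed_actions=frozenset({"customer.read"}),
    assigned_customers=frozenset({"cust-001"}),
    max_actions_per_minute=2,
)
print(scope.check("customer.read", "cust-001", now=0.0))    # allowed
print(scope.check("customer.update", "cust-001", now=1.0))  # denied: not granted
```

Returning a reason alongside the decision is a design choice: it lets you answer "could this agent access customer data?" from the denial log as well as from the grant list.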

3. Audit Trails

Traditional audit logging captures what happened. AI agent audit trails need to capture what happened AND the reasoning that led to that action.

Why it matters: “The agent approved the loan” isn’t useful without “because the customer met criteria X, Y, and Z based on inputs A, B, and C.”

What this looks like:

- Complete input logging (what the agent received)

- Reasoning capture (how the agent interpreted the input)

- Decision logging (what the agent decided and why)

- Action logging (what the agent actually did)

- Outcome logging (what happened as a result)
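The five kinds of logging above could be captured in a single record per action. This is a sketch under assumptions: the field names, the `make_record` helper, and the JSON-lines output are hypothetical choices, and the example values are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    agent_id: str    # which agent instance acted
    timestamp: str   # ISO 8601, UTC
    input: str       # what the agent received
    reasoning: str   # how the agent interpreted the input
    decision: str    # what the agent decided and why
    action: str      # what the agent actually did
    outcome: str     # what happened as a result


def make_record(agent_id: str, input_text: str, reasoning: str, decision: str,
                action: str, outcome: str,
                now: Optional[datetime] = None) -> AuditRecord:
    """Build one immutable audit record, stamping it with the current UTC time."""
    now = now or datetime.now(timezone.utc)
    return AuditRecord(agent_id, now.isoformat(), input_text,
                       reasoning, decision, action, outcome)


def to_json_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    agent_id="agent-7a3f",
    input_text="Loan application (illustrative)",
    reasoning="Applicant met criteria X, Y, and Z based on inputs A, B, and C",
    decision="approve",
    action="loan.approve",
    outcome="success",
)
print(to_json_line(record))
```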

4. Lifecycle Governance

AI agents have lifecycles: they’re created, deployed, updated, and retired. Unlike service accounts, agent “updates” might fundamentally change their behavior.

Why it matters: The agent approved yesterday might reason differently than the agent approved today, even with the same name. Model updates, prompt changes, and configuration tweaks all affect behavior.

What this looks like:

- Version tracking for all agent components (model, prompts, tools, configuration)

- Change management processes for agent updates

- Rollback capabilities

- Clear procedures for agent retirement and succession
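As a rough illustration of version tracking, rollback, and retirement, here is a small in-memory registry. `AgentVersion` and `AgentLifecycle` are hypothetical names, and a real system would persist this history and tie each change to its change-management process.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class AgentVersion:
    model: str
    prompt_version: str
    tools: Tuple[str, ...]
    config_version: str


class AgentLifecycle:
    """Hypothetical registry: tracks versions, supports rollback and retirement."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.history: List[AgentVersion] = []
        self.change_log: List[Tuple[str, str]] = []  # (event, approver)
        self._active: Optional[int] = None
        self.retired = False

    @property
    def active(self) -> Optional[AgentVersion]:
        return None if self._active is None else self.history[self._active]

    def deploy(self, version: AgentVersion, approved_by: str) -> None:
        if self.retired:
            raise RuntimeError("agent is retired; create a successor instead")
        self.history.append(version)
        self._active = len(self.history) - 1
        self.change_log.append(("deploy", approved_by))

    def rollback(self, approved_by: str) -> AgentVersion:
        if self._active is None or self._active == 0:
            raise RuntimeError("no earlier version to roll back to")
        self._active -= 1
        self.change_log.append(("rollback", approved_by))
        return self.history[self._active]

    def retire(self, approved_by: str) -> None:
        self.retired = True
        self._active = None
        self.change_log.append(("retire", approved_by))


v1 = AgentVersion("model-a", "prompt-v1", ("search",), "cfg-1")
v2 = AgentVersion("model-a", "prompt-v2", ("search",), "cfg-1")
agent = AgentLifecycle("customer-service")
agent.deploy(v1, approved_by="alice")
agent.deploy(v2, approved_by="alice")
print(agent.rollback(approved_by="bob") == v1)
```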

The Regulatory Angle

Regulators haven’t fully caught up with AI agents, but they’ve been clear about one thing: accountability doesn’t disappear because AI was involved.

The accountability question: “Who approved this action?” has specific regulatory meaning. In banking, loan decisions need documented approval chains. In healthcare, treatment decisions need physician sign-off. In legal, client communications need attorney oversight.

AI agents complicate this because they create a gap between the human who deployed the agent and the action the agent took. The human didn’t specifically approve that action—they approved the agent that took the action.

How organizations are handling this:

Approach 1: Human-in-the-loop for everything

Every agent action requires explicit human approval. This preserves clear accountability but sacrifices most of the efficiency gains from AI agents.

Approach 2: Tiered approval based on risk

Low-risk actions proceed automatically. High-risk actions require human approval. The challenge is defining risk tiers accurately.
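A tiered policy can be as simple as a lookup from action type to risk tier. The sketch below is an assumption-laden example: the action names and the `RISK_TIERS` mapping are invented, and treating unknown actions as high risk is one possible default, not a requirement.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical mapping; defining these tiers accurately is the hard part.
RISK_TIERS = {
    "answer_faq": RiskTier.LOW,
    "update_contact_preferences": RiskTier.LOW,
    "issue_refund": RiskTier.HIGH,
    "approve_loan": RiskTier.HIGH,
}


def route_action(action_type: str) -> str:
    """Decide whether an action proceeds automatically or waits for a human."""
    # Actions missing from the mapping fall back to the high-risk tier.
    tier = RISK_TIERS.get(action_type, RiskTier.HIGH)
    if tier is RiskTier.LOW:
        return "auto_approve"
    return "require_human_approval"


for action in ("answer_faq", "approve_loan", "delete_account"):
    print(action, "->", route_action(action))
```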

Approach 3: Agent identity as proxy

The agent itself becomes the accountable party, with the deploying human responsible for the agent’s configuration and oversight. This is emerging but legally untested.

What regulators will likely require:

Based on emerging guidance and historical patterns, expect:

- Clear documentation of what agents can and cannot do

- Audit trails that demonstrate oversight

- Mechanisms to intervene when agents err

- Evidence that humans reviewed agent outputs (at least on a sampling basis)

- Ability to explain agent decisions in human-understandable terms
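For the sampling-based review item, one option is to pick records deterministically from their IDs, so the selection can be reproduced later when someone asks which outputs were reviewed. This is only a sketch: the `selected_for_review` function, the record ID format, and the 5% rate are assumptions.

```python
import hashlib


def selected_for_review(record_id: str, sample_rate: float) -> bool:
    """Deterministically select about `sample_rate` of records for human review."""
    digest = hashlib.sha256(record_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < sample_rate


record_ids = [f"audit-{n:05d}" for n in range(1000)]
queue = [rid for rid in record_ids if selected_for_review(rid, 0.05)]
print(f"{len(queue)} of {len(record_ids)} records queued for human review")
```

Because the decision depends only on the record ID and the rate, rerunning the selection over the same audit log yields the same review queue.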

Practical Implementation

How do you actually implement Non-Human Identity for AI agents? Some patterns:

Identity Assignment

Agent Identity Structure:

├── Unique ID (UUID)

├── Deployment Context

│ ├── Environment (prod/staging/dev)

│ ├── Purpose (customer-service/code-review/etc)

│ └── Deployment timestamp

├── Configuration Hash

│ ├── Model version

│ ├── Prompt version

│ └── Tool configuration

└── Ownership

  ├── Deploying user

  ├── Responsible team

  └── Approval chain

Every agent instance gets an identity object containing all of this. The identity is immutable: any configuration change creates a new agent identity.
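The identity structure above might be represented as a frozen dataclass whose configuration hash is derived from the model, prompt, and tool settings. Treat this as a sketch: `AgentIdentity`, `hash_config`, `issue_identity`, and all example values are hypothetical names chosen for illustration.

```python
import hashlib
import json
import uuid
from dataclasses import FrozenInstanceError, dataclass
from datetime import datetime, timezone
from typing import Sequence, Tuple


def hash_config(model_version: str, prompt_version: str, tool_config: dict) -> str:
    """Stable SHA-256 over the components that shape agent behavior."""
    payload = json.dumps(
        {"model": model_version, "prompt": prompt_version, "tools": tool_config},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class AgentIdentity:
    agent_id: str
    environment: str
    purpose: str
    deployed_at: str
    config_hash: str
    deploying_user: str
    responsible_team: str
    approval_chain: Tuple[str, ...]


def issue_identity(environment: str, purpose: str, config_hash: str,
                   deploying_user: str, responsible_team: str,
                   approval_chain: Sequence[str]) -> AgentIdentity:
    """Create a fresh identity; call again after any configuration change."""
    return AgentIdentity(
        agent_id=str(uuid.uuid4()),
        environment=environment,
        purpose=purpose,
        deployed_at=datetime.now(timezone.utc).isoformat(),
        config_hash=config_hash,
        deploying_user=deploying_user,
        responsible_team=responsible_team,
        approval_chain=tuple(approval_chain),
    )


tools = {"search": {"enabled": True}}
before = issue_identity("prod", "customer-service",
                        hash_config("model-2026-01", "prompt-v1", tools),
                        "alice", "support-platform", ["alice", "bob"])
# A prompt change yields a new hash and therefore a new identity.
after = issue_identity("prod", "customer-service",
                       hash_config("model-2026-01", "prompt-v2", tools),
                       "alice", "support-platform", ["alice", "bob"])
print(before.agent_id != after.agent_id, before.config_hash != after.config_hash)
```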

Permission Framework

Build permissions around capabilities, not just resources:

Permission Structure:

├── Resource Access

│ ├── What data can be read

│ └── What data can be modified

├── Action Capabilities

│ ├── What actions can be initiated

│ └── What actions require escalation

├── Context Boundaries

│ ├── What customers/accounts are in scope

│ └── What time windows apply

└── Rate Limits

  ├── Actions per time period

  └── Data volume limits

Audit Schema

Design audit logs to be reconstructable:

Audit Record:

├── Agent Identity (full identity object)

├── Timestamp

├── Input

│ ├── Raw input

│ └── Interpreted input

├── Reasoning

│ ├── Relevant context retrieved

│ ├── Decision factors

│ └── Confidence assessment

├── Output

│ ├── Action taken

│ └── Output generated

└── Outcome

  ├── Success/failure

  └── Downstream effects

The Identity-Aware AI Stack

Integrating NHI into the reference architecture:

Layer 1 (Foundation Models): Model access is authenticated by agent identity. Audit logs include the requesting agent ID. The model provider does not maintain agent identity for you; that is your responsibility.

Layer 2 (Development Tools): Development agents have developer-equivalent permissions with additional logging. Code generated is attributed to specific agent instances. Changes tracked as agent-authored with human oversight attribution.

Layer 3 (Productivity Tools): Each productivity agent has defined scope. Customer-facing agents have customer-specific boundaries. All actions logged with full reasoning chain.

Layer 4 (Personal): Outside NHI scope—personal tools don’t need enterprise identity. The boundary between Layer 3 and 4 is partly enforced by identity: enterprise agent identities only exist within the enterprise stack.

What’s Missing Today

Being honest about current gaps:

No standard identity framework: Every organization implements NHI differently. There’s no equivalent to SAML or OAuth for AI agent identity.

Limited tooling: Most AI platforms don’t have native identity management. You’re building custom solutions or adapting identity tools designed for humans.

Unclear legal precedent: Courts haven’t ruled on AI agent accountability. We’re building systems for a legal framework that doesn’t fully exist yet.

Model provider opacity: When Claude or GPT-4 is updated, your agent’s behavior might change. You control the prompts and tools, but not the underlying model’s reasoning patterns.

Where This Is Headed

The NHI space is evolving rapidly. Watch for:

Emerging standards: Organizations like NIST and ISO are developing AI governance frameworks. Agent identity will likely be addressed.

Platform support: AI platforms will eventually offer native identity features. First-mover advantage goes to those who’ve built identity-aware systems.

Regulatory clarity: Expect more specific guidance on AI agent accountability, especially in heavily regulated industries.

Identity-as-a-service: Third-party solutions for AI agent identity management will emerge, similar to how identity management evolved for human users.

Next in this series: The Agent Impersonation Problem—what happens when AI acts under human credentials.

Implementing Non-Human Identity in your organization? I’d love to hear your approach. Find me on X or LinkedIn.