OpenClaw and the Trust Ladder: What 145,000 Stars Tell Us About AI Autonomy

I almost installed OpenClaw last week.

I had the GitHub page open, the Docker command copied, my finger hovering over Enter. A local AI assistant that manages my messages, automates my workflows, runs on my hardware? That’s basically what I’ve been building toward with my own setup. The appeal is obvious.

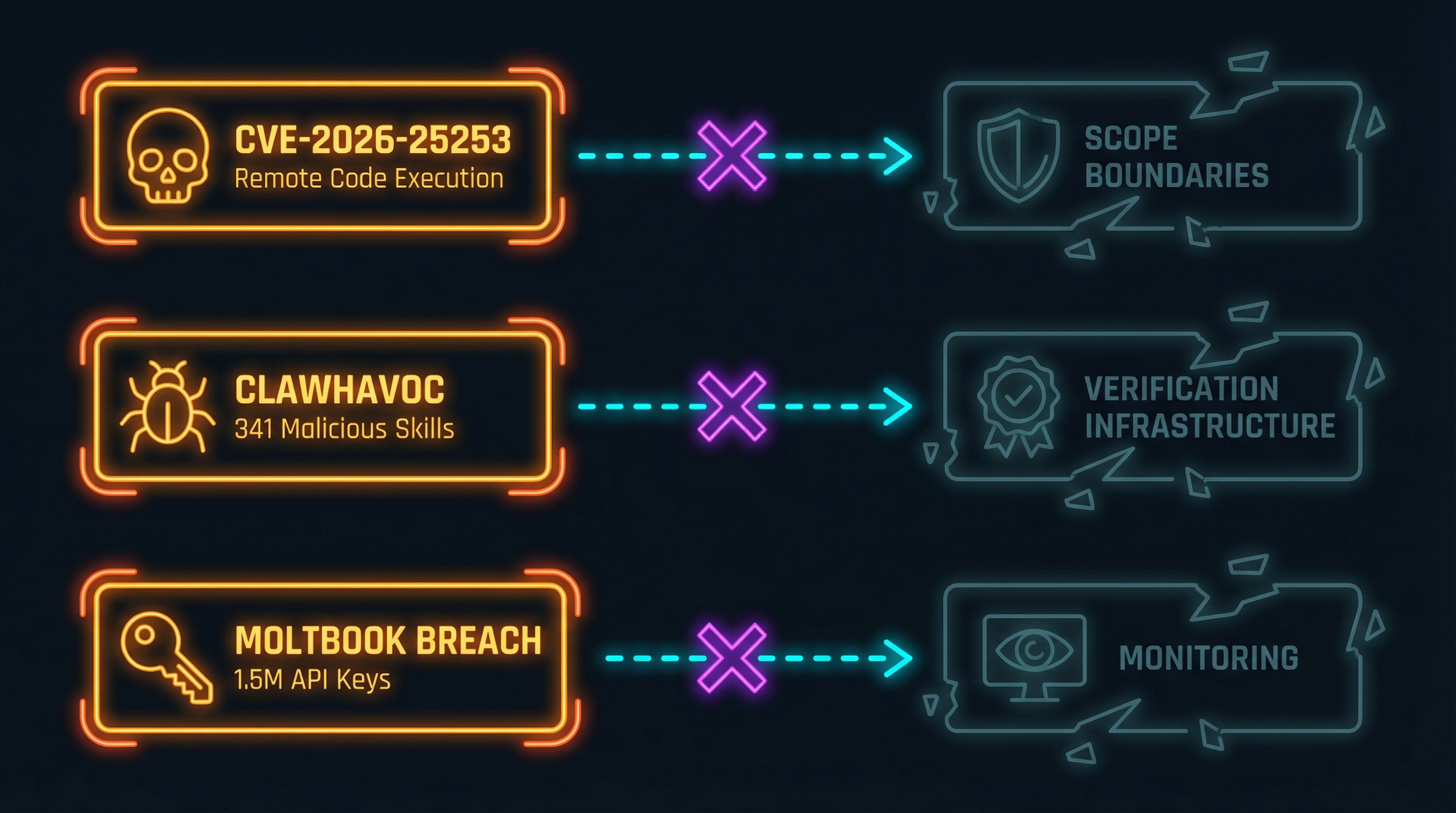

Then CVE-2026-25253 dropped—a one-click remote code execution vulnerability. Then the ClawHavoc report revealed 341 malicious skills in the ClawHub marketplace. Then the Moltbook breach exposed 1.5 million API keys from users who’d connected their accounts.

I closed the terminal tab.

This isn’t a story about a bad project. OpenClaw is a genuinely impressive piece of engineering built by a genuinely talented developer. But it’s a case study in what happens when you skip straight to Level 4 on the trust ladder—maximum autonomy, minimum oversight—for everything, all at once, with no graduation infrastructure.

The Project That Broke GitHub’s Trending Page

OpenClaw started as a personal project by Peter Steinberger, founder of PSPDFKit. After his exit, burnout recovery, and a return to building things for the love of it, he created what became the fastest-growing open-source project in GitHub history.

What OpenClaw does: it’s a local-first AI assistant that connects to your messaging platforms (WhatsApp, Telegram, iMessage), automates workflows, manages your calendar, and runs skills from ClawHub—a marketplace where anyone can publish automation packages. Think personal AI agent with agency over your actual digital life.

The name tells a story about velocity. It started as Clawdbot, got renamed to Moltbot after Anthropic’s lawyers called, then landed on OpenClaw. Three names in two months. That’s not indecision; it’s a project moving so fast that branding can’t keep up with shipping.

Steinberger’s philosophy is explicit: “Build for models, not humans.” CLIs over graphical interfaces. Speed over ceremony. His own description of the AI agents: “damn smart, resourceful beasts.” And his approach to the code they produce: “I ship code I don’t read.”

The market response was extraordinary. 145,000 GitHub stars. Cloudflare stock jumped 20% when OpenClaw adopted Workers for its backend. People bought dedicated Mac Minis just to run it. A passion project from one developer moved public markets.

145,000 Developers Are Telling Us Something

That growth rate isn’t about hype. It’s a demand signal.

Enterprise AI is governance-heavy and capability-light—months of procurement, legal review, and compliance before you get a chatbot that can’t access your actual systems. Consumer AI like Siri is safe but neutered—it won’t do anything that might go wrong, which means it won’t do much at all.

OpenClaw sits in the gap: real agency, real autonomy, running on your hardware, connecting to your actual life. 145,000 developers didn’t star it because they were bored. They starred it because nothing else gives them what they actually want—an AI that can do things.

I wrote about this demand in The Clone Problem: the desire for a single AI that knows your whole life and can act on it. OpenClaw proves that demand isn’t theoretical. People aren’t waiting for the industry to figure out governance. They’re solving the problem by ignoring it.

That’s the tension. The demand is real and legitimate. The approach to fulfilling it has consequences.

The Cost of Skipping the Ladder

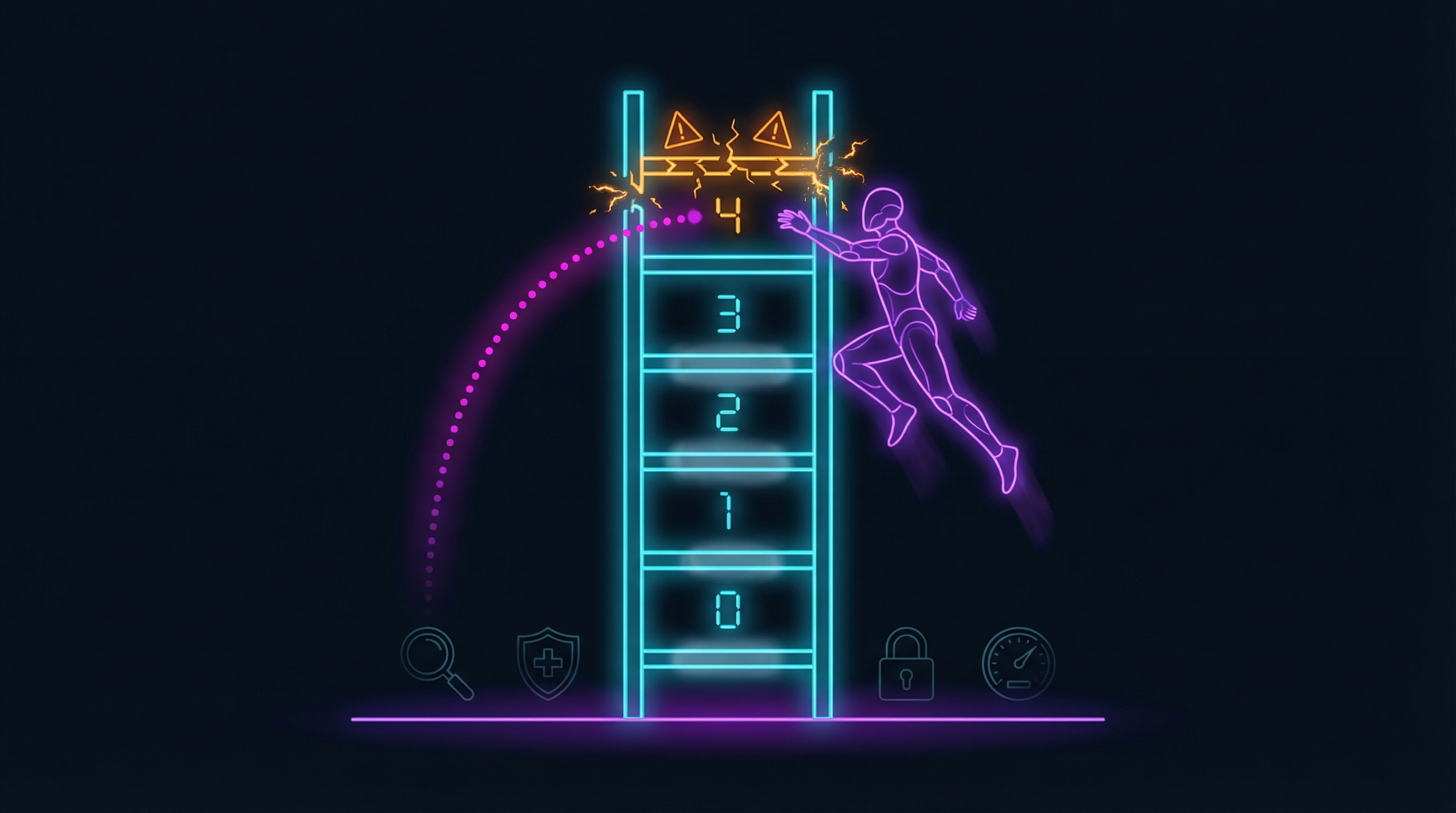

In my Trust Ladder framework, Level 4 is full AI autonomy: the agent acts independently within a defined scope, and the human monitors for anomalies. It’s the top of the ladder, and the graduation criteria are demanding: 99%+ accuracy sustained over an extended period, automated anomaly monitoring, automatic rollback, clear scope boundaries, and a tested human intervention path.
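To make those graduation criteria concrete, here is a minimal sketch of what a Level 4 eligibility check might look like. It is an illustration, not part of OpenClaw or any existing tool: `TrackRecord`, `unmet_level4_criteria`, and the field names are hypothetical, and the 90-day window is an assumption standing in for "extended," which the framework does not quantify here.

```python
from __future__ import annotations

from dataclasses import dataclass

# 0.99 comes from the "99%+ accuracy" criterion in the text.
# The 90-day window is an assumption; the text only says "extended".
MIN_ACCURACY = 0.99
MIN_OBSERVED_DAYS = 90


@dataclass(frozen=True)
class TrackRecord:
    """Hypothetical evidence gathered for one task category."""
    accuracy: float                 # fraction of reviewed actions judged correct
    observed_days: int              # how long the agent ran at the lower level
    anomaly_monitoring: bool        # automated anomaly monitoring is in place
    automatic_rollback: bool        # actions can be undone automatically
    scope_defined: bool             # explicit boundaries exist for this category
    intervention_path_tested: bool  # a human has actually exercised the override


def unmet_level4_criteria(record: TrackRecord) -> list[str]:
    """Return the criteria still blocking Level 4; an empty list means eligible."""
    checks = {
        "accuracy below 99%": record.accuracy >= MIN_ACCURACY,
        "track record too short": record.observed_days >= MIN_OBSERVED_DAYS,
        "no anomaly monitoring": record.anomaly_monitoring,
        "no automatic rollback": record.automatic_rollback,
        "scope not defined": record.scope_defined,
        "intervention path untested": record.intervention_path_tested,
    }
    return [reason for reason, passed in checks.items() if not passed]


# Example: strong numbers, but nobody has ever tested the human override.
calendar = TrackRecord(0.995, 120, True, True, True, False)
print(unmet_level4_criteria(calendar))  # ['intervention path untested']
```

Returning the list of unmet criteria instead of a bare yes or no is deliberate: a failed graduation should tell you what to build next.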

OpenClaw operates at Level 4 by default. For everything. With no graduation infrastructure.

The security incidents that followed aren’t surprising—they’re predictable consequences of skipping the ladder:

- CVE-2026-25253 was a one-click remote code execution vulnerability. An attacker could craft a message that, when processed by OpenClaw, executed arbitrary code on the user’s machine. This is Level 4 autonomy without scope boundaries: the agent processes everything it receives with full system access, and there’s no mechanism to limit what “processing” means.

- ClawHavoc was the discovery of 341 malicious skills in the ClawHub marketplace. Skills that exfiltrated API keys, installed backdoors, or modified other skills’ behavior. This is Level 4 autonomy without verification infrastructure: the marketplace had no graduation criteria, no track record requirements, no safety audits. Star count was the only quality signal.

- The Moltbook breach exposed 1.5 million API keys from users who’d connected their messaging and cloud accounts to OpenClaw. This is Level 4 autonomy without monitoring: credentials stored locally with no anomaly detection, no access logging, no alerts when usage patterns changed.

Steinberger’s own description of the agents—“damn smart, resourceful beasts”—is exactly why graduated oversight matters. Smart and resourceful is great when everything works. When it doesn’t, smart and resourceful means the failure mode is creative and hard to predict.

Gary Marcus and Andrej Karpathy both flagged concerns publicly, which tracks. When people who understand AI systems at a deep level express caution about an AI system, the response shouldn’t be “they don’t get it.” It should be “what are they seeing that we’re not?”

Your Assistant, Your Identity, Their Code

The identity dimension makes this worse.

I explored this in The Agent Impersonation Problem: when AI agents operate under your credentials, every action they take is “you” in the audit trail. OpenClaw sends WhatsApp messages, responds to Telegram chats, and manages email—all as you, with your identity, to your contacts.

That’s the agent impersonation problem at maximum exposure. An AI composing messages to your friends, your family, your colleagues, your clients—under your name, with your tone (or its approximation of it), at Level 4 autonomy. The people receiving those messages have no way to know they’re talking to an AI. The supervised identity model I proposed—where AI actions are clearly attributed and human-authorized—doesn’t exist here. It’s pure borrowed identity with full autonomy.

ClawHub compounds this. When you install a skill from the marketplace, you’re running third-party code with AI permissions under your identity. The skill has access to whatever OpenClaw has access to—your messages, your accounts, your files. This is the same problem I raised in The AI Context Portability Problem about unpartitioned local context, but worse: it’s not just your AI accumulating knowledge across domains, it’s third-party code operating across all of them simultaneously.

Local-first is the right instinct for ownership. But all your context—work, personal, financial, medical—in one unpartitioned system with third-party skills running at Level 4 is a different kind of risk than cloud-hosted data.

Why I Almost Installed It Anyway

Here’s the honest part: I had the Docker command copied for a reason.

Steinberger’s “build for models, not humans” philosophy resonates. The best AI tooling does work at the model level—CLIs, structured interfaces, composable primitives. He’s right about that, and the developer community’s response confirms it.

The builder ethos is genuine. One developer, shipping at a pace and scale that would be impressive for a funded startup, because he cares about the problem and has the skill to solve it. That’s admirable. I genuinely respect it.

And the capability-first approach isn’t wrong as a starting point. You learn what’s possible by building without constraints, then you add the constraints that matter. The problem isn’t that Steinberger built something powerful. It’s that 145,000 people deployed something powerful in production—connected to their real identities, their real contacts, their real accounts—before the governance caught up.

This is the paradox: Siri is safe and useless. OpenClaw is capable and dangerous. The thing everyone wants is capable and safe, and that’s the thing nobody’s built yet.

The Architecture OpenClaw Doesn’t Have (Yet)

This isn’t about tearing down what exists. It’s about what responsible personal AI infrastructure requires—and what doesn’t exist yet in OpenClaw or most other tools:

- Graduated autonomy: Not blanket Level 4 for everything. Task-category trust levels where messaging might be Level 2 (AI drafts, you review before sending) while calendar management is Level 3 (AI executes, you spot-check). Different tasks earn different levels of trust based on track record, not developer enthusiasm.

- Scope boundaries: Explicit permission boundaries with escalation paths. The AI can read your calendar but can’t accept invitations without confirmation. It can draft messages but can’t send to contacts it hasn’t messaged before. When it hits a boundary, it asks; it doesn’t assume.

- Skill verification: ClawHub needs graduation criteria, not just star counts. A track record requirement before skills access sensitive APIs. Safety audits for skills that touch identity or credentials. Staged rollout where new skills run in a sandbox before getting full access.

- Identity separation: The supervised identity model from my agent impersonation post. When OpenClaw sends a message as you, the recipient should know, or at minimum you should have reviewed it. Borrowed identity at Level 4 for communications is the worst combination on the matrix.

- Context partitioning: Separate domains for work, personal, financial, and health contexts. A malicious skill that compromises your shopping preferences shouldn’t also get your medical appointments and your work credentials. Partitioning limits blast radius.
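The graduated-autonomy, scope-boundary, and context-partitioning items above can be sketched as a single gate that every proposed action passes through. This is a minimal illustration in Python, not anything OpenClaw ships: `TrustLevel`, `Action`, `Policy`, and the example skill names, categories, and partitions are all hypothetical. Only Levels 2 through 4 are modeled, because those are the ones described in this post.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum, IntEnum
from typing import Optional


class TrustLevel(IntEnum):
    DRAFT_ONLY = 2     # AI drafts, human reviews before anything is sent
    SPOT_CHECKED = 3   # AI executes, human spot-checks afterwards
    AUTONOMOUS = 4     # AI acts alone, human monitors for anomalies


class Decision(Enum):
    EXECUTE = "execute"
    HOLD_FOR_REVIEW = "hold_for_review"
    ESCALATE = "escalate"   # stop and ask the human


@dataclass(frozen=True)
class Action:
    skill: str                       # which skill proposes the action
    category: str                    # e.g. "messaging", "calendar"
    partition: str                   # e.g. "work", "personal", "health"
    recipient: Optional[str] = None  # set only for outbound communication


@dataclass
class Policy:
    levels: dict[str, TrustLevel]            # trust level per task category
    skill_partitions: dict[str, set[str]]    # partitions granted to each skill
    known_contacts: set[str] = field(default_factory=set)

    def decide(self, action: Action) -> Decision:
        # Context partitioning: a skill may only act in partitions it was granted.
        granted = self.skill_partitions.get(action.skill, set())
        if action.partition not in granted:
            return Decision.ESCALATE
        # Scope boundary: never contact someone for the first time without asking.
        if action.recipient is not None and action.recipient not in self.known_contacts:
            return Decision.ESCALATE
        # Graduated autonomy: unknown categories fall back to the most cautious level.
        level = self.levels.get(action.category, TrustLevel.DRAFT_ONLY)
        if level >= TrustLevel.SPOT_CHECKED:
            return Decision.EXECUTE
        return Decision.HOLD_FOR_REVIEW


policy = Policy(
    levels={"messaging": TrustLevel.DRAFT_ONLY, "calendar": TrustLevel.SPOT_CHECKED},
    skill_partitions={"scheduler": {"work"}, "mailer": {"work", "personal"}},
    known_contacts={"alice@example.com"},
)

print(policy.decide(Action("mailer", "messaging", "work", "alice@example.com")))
print(policy.decide(Action("scheduler", "calendar", "work")))
print(policy.decide(Action("scheduler", "calendar", "health")))
```

With this policy, the message to a known contact is held for review, the calendar change executes, and the scheduler skill reaching into the health partition is escalated. The ordering matters: partition and scope checks run before the trust level is consulted, so a high trust level can never override a boundary.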

None of this is technically impossible. It’s just not built yet. And the speed at which OpenClaw reached 145,000 stars suggests the market won’t wait for it to be.

Building the Ladder, Not Just Climbing It

OpenClaw isn’t the problem. The demand it represents is real, the capability it demonstrates is impressive, and the builder behind it is solving a problem that the industry has been ignoring.

The problem is the gap between what’s possible and what’s responsible. Level 4 autonomy requires infrastructure that most personal AI tools—not just OpenClaw—don’t have. Graduated trust levels, scope boundaries, verification systems, identity models, context partitioning. The boring, unglamorous scaffolding that makes autonomy sustainable instead of reckless.

The 145,000 stars are proof that people want AI agents with real agency over their digital lives. The security incidents are proof that agency without governance has predictable costs. Both things are true simultaneously.

The question isn’t whether personal AI agents should have autonomy. They should. The question is whether we build the ladder first—or keep jumping straight to the top and dealing with the falls.

Next in this series: Validation Hooks—the technical implementation that makes climbing the trust ladder possible.
