The Agent Impersonation Problem: When AI Commits Code as You

When I run git commit through Claude Code, whose name appears in the commit history?

Mine.

The commit message might say “Co-Authored-By: Claude” somewhere in the body, but the actual commit author—the identity that shows up in git log, that gets credited in contribution graphs, that appears in audit systems—is me.

This is the agent impersonation problem. AI agents operate under human credentials, wearing our identity badges while performing actions we didn’t specifically approve.

And it’s not just git. It’s everywhere.

The Current State: Borrowed Identity

In my last post, I explored what Non-Human Identity looks like in theory. Now let’s look at what it looks like in practice: for the most part, it doesn’t exist.

Today’s AI agents overwhelmingly operate by borrowing human identity:

Development Tools

- Claude Code uses my git credentials

- Copilot suggestions are committed under the name of the developer who accepted them

- Code review tools comment as the user who triggered them

Productivity Tools

- AI-drafted emails are sent from your account

- Meeting summaries post under your name

- Document edits appear in version history as you

System Integrations

- API calls authenticate with your keys

- Database queries run under your connection

- Cloud operations execute with your permissions

The pattern is consistent: AI does the work, human takes the credit (and the blame).

Why This Is a Problem

Borrowed identity creates several categories of risk:

Audit Trail Contamination

When regulators ask “who approved this change?”, the audit log says “you.” But you didn’t review every line of AI-generated code. You didn’t evaluate every possible interpretation of the prompt. You accepted output that looked reasonable.

The audit trail is technically accurate—you did trigger the commit—but it’s semantically false. It implies a level of review and approval that may not have occurred.

Accountability Diffusion

If AI-generated code introduces a bug, a security vulnerability, or a compliance violation, whose fault is it?

- The developer who accepted the code? They couldn’t review every implication.

- The AI that generated it? It has no legal personhood.

- The organization that deployed the AI? They didn’t write the specific code.

- The AI vendor? They just provided the capability.

Borrowed identity lets everyone point at someone else. Nobody is clearly accountable because the identity trail conflates human and AI action.

Skill Misrepresentation

Git contribution graphs don’t distinguish between code you wrote and code you accepted from AI. Your professional identity includes work that isn’t entirely yours.

This isn’t inherently bad (people have always used tools), but the magnitude is different. When, say, 60% of a developer’s output is AI-generated code, their “contributions” represent something qualitatively different from purely human work.

Permission Escalation

AI agents operating under human credentials have human permissions. Your Claude Code session can do anything you can do—read any file you can read, commit to any repository you can commit to, access any system you can access.

This is appropriate for tools that execute your explicit commands. It’s problematic for agents that interpret your intent and take autonomous action.

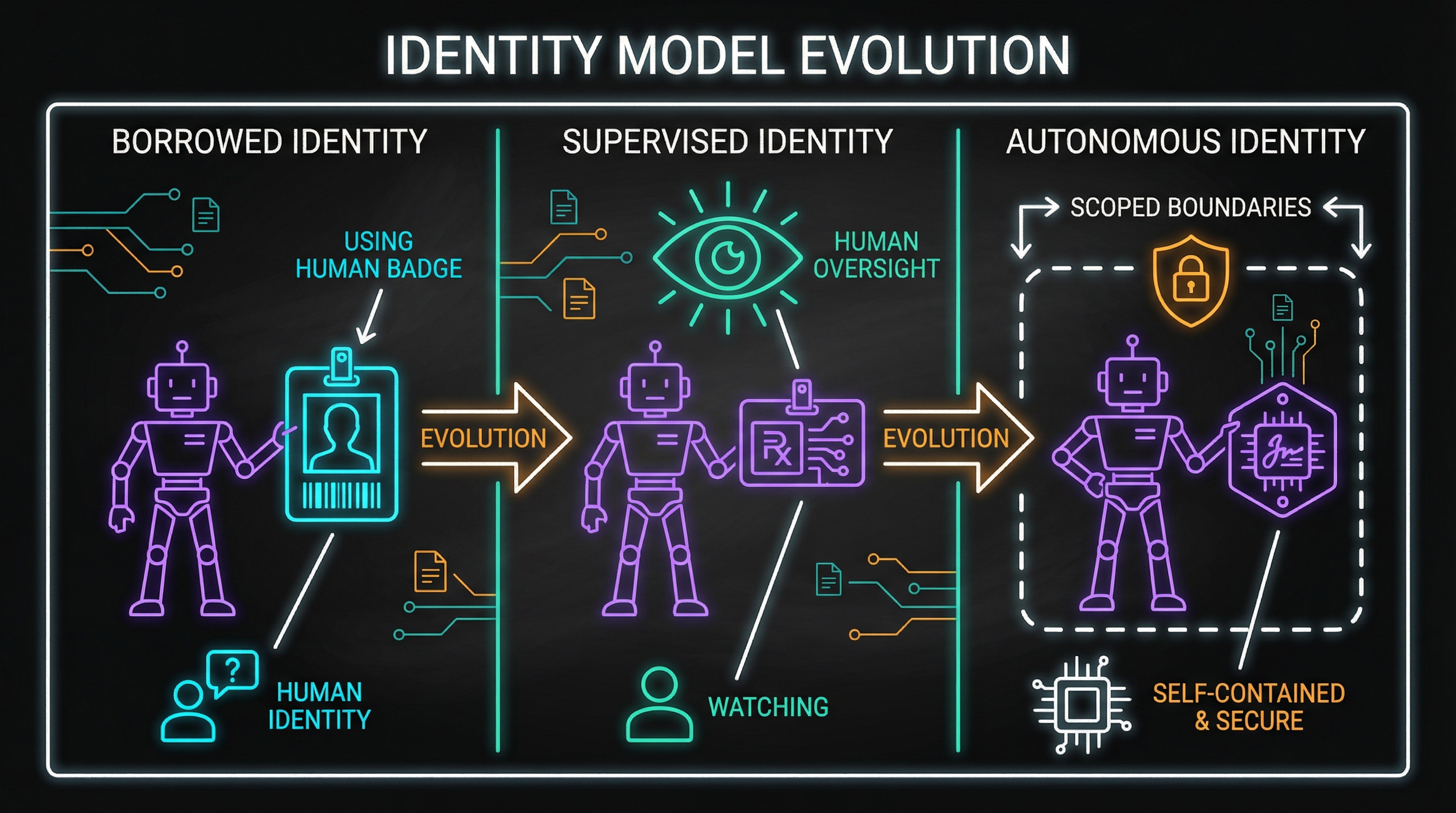

The Three Identity Models

I see the solution space as a progression through three models:

Model 1: Borrowed Identity (Current State)

How it works: AI operates entirely under human credentials. No distinction between human and AI actions.

Characteristics:

- Simple to implement (no changes needed)

- Human fully “responsible” for all outputs

- No visibility into what was human vs. AI

- Audit trails conflate human and AI action

When it’s acceptable: Low-stakes, non-regulated environments where the human genuinely reviews everything. Personal projects where accountability doesn’t matter.

When it fails: Regulated environments, collaborative work, any context where “who did this” matters.

Model 2: Supervised Identity

How it works: AI has distinct identity but operates only under explicit human authorization. Every AI action is linked to both the AI that performed it and the human who authorized it.

Characteristics:

- Dual attribution (AI + human) for every action

- Human authorization required before AI action

- Clear audit trail of what AI did vs. what human did

- Human maintains control but AI is visible

Implementation pattern:

```text
Action Record:
├── Actor: Agent-XY7 (AI)
├── Authorizer: jane.doe@company.com (Human)
├── Authorization Type: Explicit approval / Batch authorization / Policy-based
├── Timestamp: 2026-01-29T14:30:00Z
└── Action: commit to main branch
```

When it’s appropriate: Regulated environments where accountability matters but efficiency requires AI assistance. The sweet spot for most enterprise use cases.
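The action record above maps naturally onto a small data structure. Here is a minimal Python sketch, assuming a hypothetical `ActionRecord` type whose fields mirror the record. It is not an existing Claude Code or Claude Buddy API. The one rule it enforces is the core of supervised identity: every record must name both an AI actor and a separate human authorizer.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class AuthorizationType(Enum):
    """How the human authorized the action (the three options in the record above)."""
    EXPLICIT = "explicit approval"
    BATCH = "batch authorization"
    POLICY = "policy-based"


@dataclass(frozen=True)
class ActionRecord:
    """Hypothetical dual-attribution record: who acted, and who authorized it."""
    actor: str          # AI agent identity, e.g. "Agent-XY7"
    authorizer: str     # human identity, e.g. "jane.doe@company.com"
    authorization: AuthorizationType
    action: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # Supervised identity needs both halves of the attribution, and they must differ.
        if not self.actor or not self.authorizer:
            raise ValueError("both actor and authorizer are required")
        if self.actor == self.authorizer:
            raise ValueError("actor and authorizer must be different identities")


record = ActionRecord(
    actor="Agent-XY7",
    authorizer="jane.doe@company.com",
    authorization=AuthorizationType.EXPLICIT,
    action="commit to main branch",
    timestamp=datetime(2026, 1, 29, 14, 30, tzinfo=timezone.utc),
)
print(record.timestamp.isoformat())  # 2026-01-29T14:30:00+00:00
```

The record is validated when it is constructed. That makes it impossible to log an AI action without also naming the human who authorized it.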

Model 3: Autonomous Identity

How it works: AI operates with its own identity and a defined scope of authority. Within that scope, no human authorization is needed. Outside that scope, escalation is required.

Characteristics:

- AI has genuine identity separate from any human

- Defined permission boundaries

- Can act autonomously within scope

- Escalates when scope exceeded

- Full accountability to AI identity

Implementation pattern:

```text
Agent Identity:
├── ID: Agent-XY7
├── Scope:
│   ├── Can commit to: feature branches
│   ├── Cannot commit to: main, release branches
│   ├── Can modify: test files, documentation
│   └── Cannot modify: production configs, credentials
├── Escalation: Requires jane.doe authorization for out-of-scope actions
└── Audit: All actions logged with full reasoning
```

When it’s appropriate: Mature environments with well-defined boundaries, thorough monitoring, and organizational trust in AI capabilities. We’re not there yet for most use cases.
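Checking an action against that scope amounts to an allow/deny lookup, with escalation as the fallback. Below is a minimal Python sketch. It assumes hypothetical path conventions: tests under `tests/`, docs under `docs/` or `*.md`, production configs under `config/production/`, and credentials as `*.pem` or `.env*`. It uses glob matching from the standard library's `fnmatch` module. `AgentScope` and `Decision` are illustrative names only.

```python
from dataclasses import dataclass
from enum import Enum
from fnmatch import fnmatchcase


class Decision(Enum):
    ALLOW = "allow"          # in scope: the agent may act on its own
    ESCALATE = "escalate"    # out of scope: needs human authorization


def _matches(value: str, patterns: tuple[str, ...]) -> bool:
    # fnmatch-style globs; note that "*" also matches "/" here.
    return any(fnmatchcase(value, p) for p in patterns)


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical encoding of the Agent-XY7 scope above."""
    agent_id: str
    escalate_to: str
    commit_allowed: tuple[str, ...]
    commit_denied: tuple[str, ...]
    modify_allowed: tuple[str, ...]
    modify_denied: tuple[str, ...]

    def check_commit(self, branch: str) -> Decision:
        # Deny rules win; anything not explicitly allowed also escalates (default-deny).
        if _matches(branch, self.commit_denied):
            return Decision.ESCALATE
        return Decision.ALLOW if _matches(branch, self.commit_allowed) else Decision.ESCALATE

    def check_modify(self, path: str) -> Decision:
        if _matches(path, self.modify_denied):
            return Decision.ESCALATE
        return Decision.ALLOW if _matches(path, self.modify_allowed) else Decision.ESCALATE


scope = AgentScope(
    agent_id="Agent-XY7",
    escalate_to="jane.doe",
    commit_allowed=("feature/*",),
    commit_denied=("main", "release/*"),
    modify_allowed=("tests/*", "docs/*", "*.md"),
    modify_denied=("config/production/*", "*.pem", ".env*"),
)

print(scope.check_commit("feature/rate-limit").value)        # allow
print(scope.check_commit("main").value)                      # escalate
print(scope.check_modify("tests/unit/test_auth.py").value)   # allow
print(scope.check_modify("config/production/db.yml").value)  # escalate
```

Default-deny is an assumption of this sketch. The scope above lists what is allowed and what is forbidden, but says nothing about, for example, files under `src/`. Treating anything unlisted as out of scope is the safer reading.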

The Git Problem in Detail

Let me focus on the specific case I deal with daily: git identity in Claude Code.

Current behavior:

```console
$ git log --oneline
a3f7c2d Fix authentication bug in user service
b8e1d4a Add rate limiting to API endpoints
c9d2e5b Refactor database connection pooling
```

All three commits show me as author. But:

- Commit a3f7c2d: I found the bug, described it, reviewed the fix

- Commit b8e1d4a: Claude proposed it proactively, I approved without deep review

- Commit c9d2e5b: I asked for refactoring, Claude did extensive changes I spot-checked

These represent very different levels of human involvement, but the audit trail doesn’t reflect that.

What supervised identity would look like:

```console
$ git log --oneline --format='%h %s [%an, authorized by %cn]'
a3f7c2d Fix authentication bug [Claude-Agent-7a3f, authorized by me]
b8e1d4a Add rate limiting [Claude-Agent-7a3f, authorized by me (batch)]
c9d2e5b Refactor database pooling [Claude-Agent-7a3f, authorized by me (partial review)]
```

The commit author would be the AI agent, and the committer would be the human who authorized it. Git has no field for how a commit was authorized, so qualifiers like “(batch)” and “(partial review)” would need a custom field, such as a commit trailer.
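Git already records author and committer separately, so part of this works today without changing git. The minimal Python sketch below relies on git's documented `GIT_AUTHOR_NAME`, `GIT_AUTHOR_EMAIL`, `GIT_COMMITTER_NAME`, and `GIT_COMMITTER_EMAIL` environment variables. It also uses `git commit --trailer`, which requires git 2.32 or later. Three parts are assumptions for illustration: the agent's name and email, the `Authorized-By-Mode` trailer key (not a git or GitHub convention), and the helper functions.

```python
import os
import subprocess
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    name: str
    email: str


def supervised_commit_command(
    agent: Identity, human: Identity, message: str, review_mode: str
) -> tuple[list[str], dict[str, str]]:
    """Build argv and environment for a commit authored by the agent, committed by the human."""
    env = {
        **os.environ,
        # Author: who produced the change (the AI agent).
        "GIT_AUTHOR_NAME": agent.name,
        "GIT_AUTHOR_EMAIL": agent.email,
        # Committer: who authorized recording it (the human).
        "GIT_COMMITTER_NAME": human.name,
        "GIT_COMMITTER_EMAIL": human.email,
    }
    argv = [
        "git", "commit", "-m", message,
        # Hypothetical trailer key recording *how* the human authorized the commit.
        "--trailer", f"Authorized-By-Mode: {review_mode}",
    ]
    return argv, env


def run_supervised_commit(agent: Identity, human: Identity, message: str, review_mode: str) -> None:
    """Create the commit in the current repository (needs git >= 2.32 for --trailer)."""
    argv, env = supervised_commit_command(agent, human, message, review_mode)
    subprocess.run(argv, env=env, check=True)


agent = Identity("Claude-Agent-7a3f", "claude-agent-7a3f@example.invalid")  # hypothetical
human = Identity("Jane Doe", "jane.doe@company.com")
argv, env = supervised_commit_command(agent, human, "Add rate limiting", "batch")
print(argv)
```

With this split, `%an` in the `git log --format` line prints the agent and `%cn` prints the human, with no changes to git itself. Only the authorization mode needs the custom trailer.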

Implementation challenges:

- Git wasn’t designed for this

- Co-Authored-By is a convention, not an enforced field

- Build systems expect human identities

- GitHub/GitLab profiles don’t have “AI agent” options

- Compliance tools parse existing identity formats
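To see why a trailer convention is weak, consider what a compliance tool would have to do to detect AI involvement: scan free-text commit messages. Here is a minimal Python sketch of that scan. The regex and the `co_authors` function are my own, not taken from any existing tool. A real tool would more likely use `git interpret-trailers --parse`, which reads only the trailer block. The weakness is the same either way: nothing stops a trailer from being omitted or misspelled.

```python
import re

# Matches trailer lines such as "Co-Authored-By: Claude" (key matched case-insensitively).
CO_AUTHOR_RE = re.compile(
    r"^co-authored-by:[ \t]*(?P<who>\S.*?)[ \t]*$", re.IGNORECASE | re.MULTILINE
)


def co_authors(message: str) -> list[str]:
    """Return every co-author named anywhere in a commit message (empty if none)."""
    return [m.group("who") for m in CO_AUTHOR_RE.finditer(message)]


tagged = "Add rate limiting to API endpoints\n\nCo-Authored-By: Claude\n"
untagged = "Add rate limiting to API endpoints\n"
misspelled = "Add rate limiting to API endpoints\n\nCo-Author-By: Claude\n"

print(co_authors(tagged))      # ['Claude']
print(co_authors(untagged))    # []
print(co_authors(misspelled))  # []
```

The change could be identical in all three cases, yet only one is detected.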

What Claude Buddy Could Do

I’ve been thinking about how to address this in Claude Buddy. Some possibilities:

Identity-Aware Commits

Instead of just adding “Co-Authored-By” to commit messages, implement proper identity separation:

```yaml
# claude-buddy.yml
git:
  identity_mode: supervised
  agent_identity: "Claude-Buddy-Agent"
  authorization_tracking: true
  commit_metadata:
    include_session_id: true
    include_review_level: true  # none, spot-check, full-review
```

This would produce commits that clearly distinguish AI from human contribution, with metadata about how much human review occurred.
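Here is one way the `commit_metadata` options might translate into commit-message trailers. This minimal Python sketch assumes the YAML has already been parsed into a dict, since the standard library has no YAML parser. `Session-Id` and `Review-Level` are hypothetical trailer keys. The accepted review levels are the three listed in the config comment.

```python
REVIEW_LEVELS = ("none", "spot-check", "full-review")


def build_commit_message(subject: str, config: dict, session_id: str, review_level: str) -> str:
    """Append metadata trailers as enabled by the (hypothetical) commit_metadata settings."""
    meta = config.get("git", {}).get("commit_metadata", {})
    trailers: list[str] = []

    if meta.get("include_session_id"):
        trailers.append(f"Session-Id: {session_id}")
    if meta.get("include_review_level"):
        # Only the three levels named in the config comment are accepted.
        if review_level not in REVIEW_LEVELS:
            raise ValueError(f"unknown review level: {review_level!r}")
        trailers.append(f"Review-Level: {review_level}")

    # Git reads trailers from the final paragraph, so separate them with a blank line.
    return (subject + "\n\n" + "\n".join(trailers)) if trailers else subject


config = {
    "git": {
        "identity_mode": "supervised",
        "agent_identity": "Claude-Buddy-Agent",
        "authorization_tracking": True,
        "commit_metadata": {"include_session_id": True, "include_review_level": True},
    }
}
message = build_commit_message("Add rate limiting", config, "CB-2026-0129-7a3f", "spot-check")
print(message)
```

Rejecting unknown review levels keeps the metadata machine-readable. A free-text “looked fine” would defeat the purpose of tracking review depth.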

Authorization Levels

Different actions could require different authorization:

```yaml
authorization:
  file_creation: auto          # AI can create files autonomously
  file_modification: auto      # AI can modify existing files
  commit_to_feature: explicit  # Requires human "commit" command
  commit_to_main: blocked      # Not allowed, period
  deployment: multi-approval   # Requires additional human approval
```

Audit Export

Generate compliance-friendly audit reports:

```text
Session Audit Report
====================
Session ID: CB-2026-0129-7a3f
Human: jane.doe@company.com
Agent: Claude-Buddy-Agent-v4.2

Actions Performed:

1. File created: src/auth/handler.ts
   Authorization: Explicit (user prompt: "create auth handler")
   Review level: Spot-check

2. File modified: src/api/routes.ts
   Authorization: Implicit (continuation of task 1)
   Review level: None (part of approved task)

3. Commit created: a3f7c2d
   Authorization: Explicit (user command: /commit)
   Review level: Full review (user reviewed diff)
```

The Larger Conversation

This isn’t just about git or Claude Code. It’s about how we attribute work in an AI-augmented world.

Academic authorship: Papers written with AI assistance face the same attribution questions. Who’s the author when 40% of the content was AI-generated?

Professional portfolios: Designers, writers, and developers showcasing work that’s partially AI-generated. How do we represent that honestly?

Performance evaluation: Measuring employee productivity when AI is involved. Are we evaluating the human or the human-AI system?

Legal liability: Contracts, legal documents, financial statements reviewed or generated by AI. Where does professional responsibility lie?

The agent impersonation problem is a symptom of a larger question we haven’t answered: how do we attribute contribution in human-AI collaborative work?

The AIL Framework

Daniel Miessler’s AI Influence Level (AIL) framework offers a practical approach to this transparency problem. AIL provides a 0-5 scale for labeling AI involvement in creative work:

- AIL 0: Human created, no AI involved

- AIL 1: Human created, minor AI assistance (grammar, sentence structure)

- AIL 2: Human created, major AI augmentation

- AIL 3: AI created, human provided full structure

- AIL 4: AI created, human provided basic idea

- AIL 5: AI created, minimal human involvement

This post? AIL 2: I structured the argument and refined the output, but Claude helped draft sections. The code examples are my patterns; the framework synthesis is collaborative.

AIL solves the disclosure problem for content, but it doesn’t solve the identity problem for actions. Knowing an article is “AIL 3” tells you about the content’s provenance. It doesn’t tell you who authorized the AI to publish it, or who’s accountable if it causes harm.

The agent impersonation problem needs both: content transparency (AIL) AND action attribution (supervised identity).
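To show that the two signals are independent, here is a minimal Python sketch that carries both in one record. The `AIL` enum encodes the six levels listed above; its member names are my own. `AttributedArtifact` and `has_accountable_human` are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class AIL(IntEnum):
    """Miessler's AI Influence Level, 0-5, as summarized above."""
    HUMAN_ONLY = 0          # Human created, no AI involved
    MINOR_ASSIST = 1        # Human created, minor AI assistance
    MAJOR_AUGMENT = 2       # Human created, major AI augmentation
    AI_FULL_STRUCTURE = 3   # AI created, human provided full structure
    AI_BASIC_IDEA = 4       # AI created, human provided basic idea
    AI_MINIMAL_HUMAN = 5    # AI created, minimal human involvement


@dataclass(frozen=True)
class AttributedArtifact:
    """Hypothetical record carrying content provenance and action attribution together."""
    name: str
    ail: AIL                     # how much of the content came from AI
    actor: str                   # who performed the action (publish, commit, send)
    actor_is_ai: bool
    authorizer: Optional[str]    # human who authorized an AI actor, if any

    def has_accountable_human(self) -> bool:
        # The AIL level plays no part here: a human actor is accountable directly,
        # while an AI actor is accountable only through a named human authorizer.
        return not self.actor_is_ai or self.authorizer is not None


post = AttributedArtifact("agent-impersonation-post", AIL.MAJOR_AUGMENT,
                          actor="author", actor_is_ai=False, authorizer=None)
auto_summary = AttributedArtifact("weekly-summary", AIL.AI_MINIMAL_HUMAN,
                                  actor="Claude-Buddy-Agent", actor_is_ai=True, authorizer=None)

print(post.has_accountable_human())          # True
print(auto_summary.has_accountable_human())  # False
```

Two artifacts can share an AIL level and still differ completely in accountability. That is why content labels alone don’t close the gap.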

Moving Forward

I don’t have complete answers, but I have directional beliefs:

Transparency is non-negotiable: We need to be able to tell what AI did vs. what humans did. The current conflation is unsustainable in any context where accountability matters.

Supervised identity is the near-term answer: Full autonomous identity requires trust we haven’t built yet. Supervised identity—AI acts, human authorizes—is achievable and appropriate.

Tooling needs to evolve: Git, audit systems, contribution metrics—all assume human actors. We need standards and tooling that accommodate AI identity.

This is urgent: The longer we operate with borrowed identity, the more contaminated our audit trails become. Every AI-assisted commit today creates attribution debt we’ll have to reconcile later.

Next in this series: Your Digital Twin’s Employment Contract—what happens when your AI assistant absorbs institutional knowledge.

Thinking about AI identity in your work? I’d love to discuss. Find me on X or LinkedIn.