Your Agent Is Not More Gas. It's the Thermostat.

Post 1 reframed the Four Burners as a monolith. Post 2 showed the missing infrastructure tier — goals as control plane, temptation regulation as governance. This post is about the executor.

Every reconciliation loop needs three things: desired state, actual state observation, and an executor that closes the gap. Posts 1 and 2 addressed architecture and desired state. But architecture without an executor is a blueprint collecting dust.

For your entire life, the executor has been you. Alone. Willpower, a journal, maybe a therapist once a month.

For the first time, that’s not the only option.

The “More Gas” Fallacy

The emerging discourse around AI and the Four Burners says AI “increases the total supply of gas.” Duncan Grazier argues that AI doesn’t just reallocate existing cognitive capacity — it expands it. By eliminating entire task categories (alert triage, meeting summaries, code reviews), AI frees attention that can be redirected to any burner.

He’s partially right. AI does reclaim attention. I’ve experienced it firsthand — running personal AI infrastructure that handles context engineering, specification writing, and routine execution has genuinely freed cognitive bandwidth I didn’t know I was spending.

But here’s what the “more gas” argument misses: reclaimed attention defaults to the loudest burner.

For most ambitious people, the loudest burner is Work. Always. When you save two hours by having an AI draft your meeting notes, those hours don’t naturally flow to Health or Family. They flow to the next work task in the queue. The inbox expands. The ambitions grow. The recovered gas goes right back to the burner that was already running hottest.

Without a feedback mechanism, more gas just means the dominant burner burns hotter. I see this in enterprise systems constantly — 10x compute doesn’t produce 10x features when nothing in the architecture directs capacity toward the right outcomes.

AI as “more gas” is a resource argument. The Four Burners constraint was never about total gas supply. It was about the absence of a feedback loop.

The Thermostat Reframe

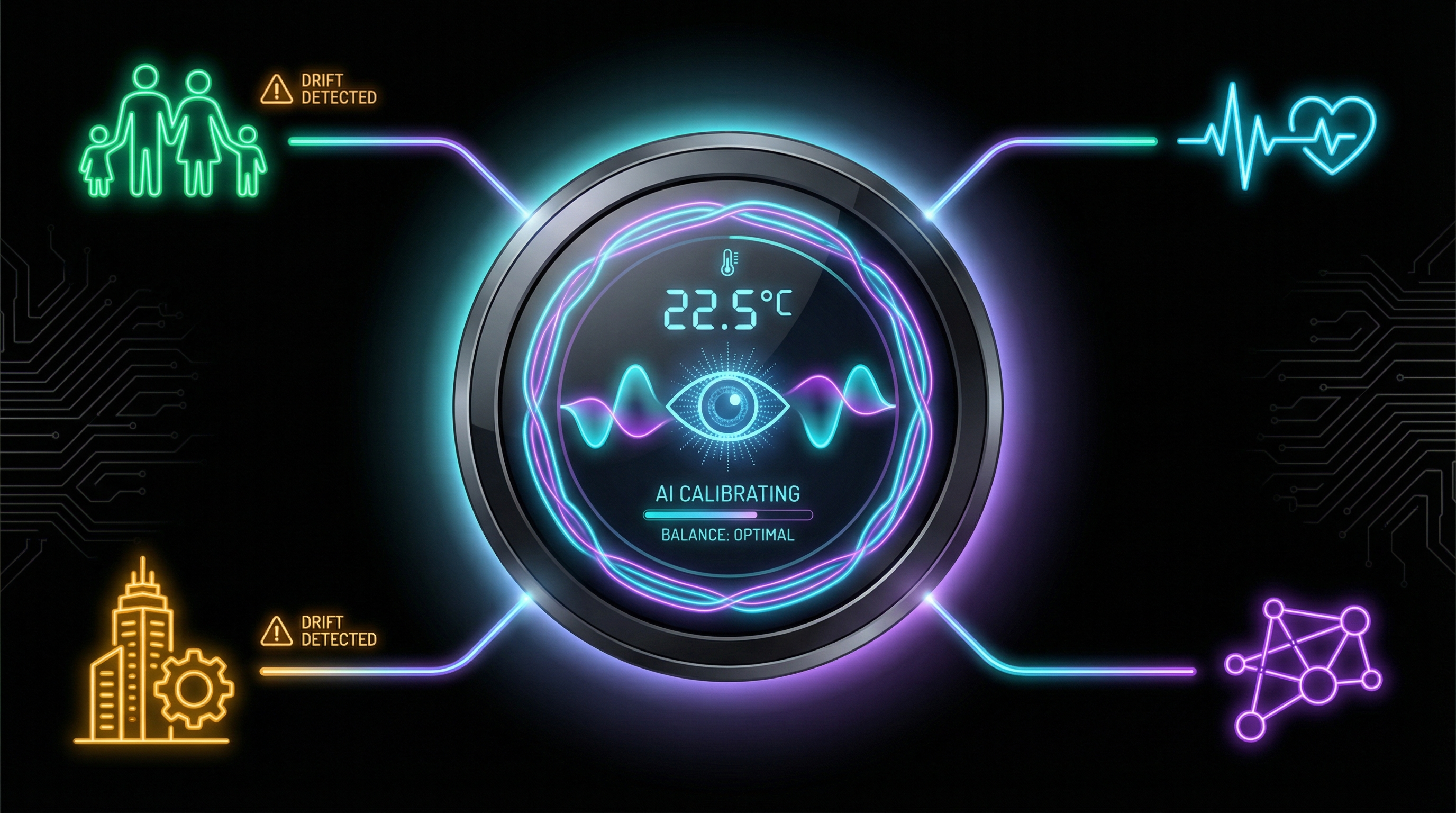

Your home thermostat doesn’t generate heat. It doesn’t add more gas to the furnace. It does three things: it monitors temperature, it compares actual temperature to desired temperature, and it signals adjustments when they diverge.

That’s the reconciliation loop. Desired state. Actual state. Drift detection. Corrective action.

Your personal AI agent — if you’ve built it that way — can work the same way.

A caveat: “if you’ve built it that way” is doing heavy lifting. Today this requires real technical skill — persistent context, prompt architecture, memory systems. It’s self-hosted infrastructure, not an app. What follows describes a capability in prototype form for builders, not a finished product.

It can know your desired state. Because you told it. Goals, values, priorities, commitments — Peterson’s “constructive, meaningful goals” pillar, encoded into persistent context. When I set up my personal AI infrastructure, one of the first things I did was declare what I’m optimizing for across domains. Not a vision board. A configuration file.

It can observe actual state. Through conversation patterns — what you ask about, what you stop asking about. If every interaction for two weeks has been about work and zero about exercise or family, the signal is there. The agent needs what a good therapist uses: pattern recognition across conversations over time.

It can detect drift. Humans are terrible at this. We normalize gradually degrading situations. You don’t feel yourself drifting away from Health because each individual day looks fine. It’s the accumulated delta over weeks that constitutes the problem. An agent watching the pattern doesn’t have that blind spot.

It can nudge. Not decide. Not enforce. Nudge. “You mentioned wanting to run three times a week. Zero runs in the last fourteen days.” “Your last eight sessions have been exclusively about work. You identified family connection as a top-three priority. Just flagging.”

Observation. Comparison. Signal. That’s a thermostat.
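The four steps above can be sketched as a small loop. This is a minimal illustration and not how any particular agent is built: the `Goal` and `Session` types, the domain names, the "touchpoint" counts, and the 14-day window are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Goal:
    """Desired state for one life domain (hypothetical schema)."""
    domain: str
    min_touchpoints: int  # how often the domain should come up per window


@dataclass(frozen=True)
class Session:
    """One conversation with the agent, tagged with the domains it touched."""
    day: date
    domains: frozenset


def observe(sessions, today, window_days=14):
    """Actual state: count recent sessions that touched each domain."""
    cutoff = today - timedelta(days=window_days)
    counts = {}
    for s in sessions:
        if cutoff < s.day <= today:
            for d in s.domains:
                counts[d] = counts.get(d, 0) + 1
    return counts


def detect_drift(goals, counts):
    """Compare desired and actual state; keep only the goals that fell short."""
    return [(g, counts.get(g.domain, 0)) for g in goals
            if counts.get(g.domain, 0) < g.min_touchpoints]


def nudge(drift, window_days=14):
    """Signal only: report the gap and leave the decision to the human."""
    return [
        f"{g.domain}: you wanted at least {g.min_touchpoints} touchpoints, "
        f"I see {seen} in the last {window_days} days. Just flagging."
        for g, seen in drift
    ]


# Example data (invented): eight work-only sessions and one about family.
today = date(2025, 3, 15)
goals = [Goal("work", 1), Goal("health", 3), Goal("family", 2)]
sessions = [Session(today - timedelta(days=i), frozenset({"work"}))
            for i in range(8)]
sessions.append(Session(today - timedelta(days=2), frozenset({"family"})))

for line in nudge(detect_drift(goals, observe(sessions, today))):
    print(line)
```

Note what the sketch leaves out: there is no function that acts on the drift. The loop ends at the signal, which is the point of the thermostat framing.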

Regulation Was Never Solo

Before you object that outsourcing self-regulation to software sounds dystopian, consider what humans have always done: outsource self-regulation to other humans.

Peterson is explicit about this. In Beyond Order, he describes marriage as voluntary constraint that enables honest feedback:

“I am going to handcuff myself to you, and you’re going to handcuff yourself to me. And then we’re going to get to tell each other the truth, and neither of us get to run away.”

The vow is the constraint that enables the signal. Without permanence, the feedback loop breaks — you can’t give someone honest feedback about their drift if they can walk away when it gets uncomfortable. Marriage, in this framing, is not a restriction on freedom. It’s a regulation architecture. The commitment creates the conditions under which the thermostat can actually function.

Peterson’s Rule 3 extends this beyond marriage: “Make friends with people who want the best for you.” Because good friends “won’t tolerate your cynicism and destructiveness.” Friends as behavioral regulators. They see your drift when you can’t. The bad version is a friend group that forms “implicit contracts aimed at nihilism and failure” — regulating toward dysfunction instead of growth. Same architecture, different desired state.

Baumeister and Vohs found evidence for this at the resource level: the combined self-control capacity of both partners predicted relationship quality. On that reading, partners outsource willpower to each other. When one depletes, the other compensates.

The pattern is consistent across psychology, neuroscience, and resource economics: humans run distributed, not standalone. We have always used external systems — spouses, friends, communities, institutions — as regulation infrastructure. The thermostat was never purely internal.

Which reframes the AI question entirely. The objection to AI-as-regulator assumes self-regulation is supposed to be solo. But you already outsource regulation to your spouse, friends, therapist, accountability partner. AI doesn’t introduce external regulation into a self-contained system. It adds a new node to a network that has always been distributed.

The real question isn’t whether external regulation is valid; decades of psychology say it is. The question is whether AI can provide feedback of high enough fidelity to serve the function humans have always delegated to other humans.

The Clone Problem as a Feature

I wrote about the clone problem last January — the collision between personal AI agents that want to know your whole life and employers that legally own your work context. In banking, every business communication is a regulated record. A personal AI that spans work and life creates recordkeeping contamination, discovery exposure, and security surface multiplication.

The clone problem means your work agent (Claude Code, Copilot, whatever your company sanctioned) sees ONLY the Work burner. It can’t cross into personal domains. Regulatory boundaries prevent it.

In the Four Burners context, that limitation is actually a feature.

Your work agent handles Work — zero visibility into Family, Health, Friends, or the infrastructure tier. It can’t detect life drift. It’s architecturally constrained to one service.

Your personal AI agent is the only entity positioned to see across all burners. It lives in your personal context — goals, relationships, health, time allocation. Not constrained by employer data classification or e-discovery.

The personal AI agent is the cross-domain observability layer the Four Burners stove never had. And the clone problem — separating work agents from personal agents — is what makes it architecturally clean.

Trust Calibration for Life

The Trust Ladder I proposed for AI autonomy in regulated work applies directly here. How much latitude does your personal agent get in your life reconciliation loop?

Level 1 — Agent Suggests. Passive observation. “You haven’t mentioned exercise this week.” The agent surfaces data. You decide what to do with it. Lowest friction, lowest value. Most people should start here.

Level 2 — Agent Drafts. “Based on your calendar and your stated priorities, here’s a proposed weekly plan that balances your Work commitments with the Health and Family goals you set. Want me to adjust?” The agent proposes. You review. This is where the real value starts — the agent isn’t just reflecting data back at you, it’s synthesizing across domains to produce actionable recommendations.

Levels 3 and 4 — Agent Executes / Agent Autonomous. The agent proactively blocks gym time, schedules dinner reservations, declines conflicting meetings — or runs fully continuous reconciliation, only flagging you when drift exceeds a threshold. Conceptually powerful. Practically, neither exists yet. The tooling integration is mostly manual, and the executor in a life reconciliation loop probably should be supervised. Unlike Terraform providers, the stakes involve human relationships. This is where the infrastructure analogy breaks down on purpose.

My recommendation: Level 2 is the sweet spot for most people. The agent as mirror and planner, not as manager. Enough autonomy to surface patterns you’re blind to. Enough human oversight to maintain agency over your own life. The personal AI wars are actively deciding which agents earn this kind of trust — and whether the infrastructure supports it.
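One way to make the ladder concrete is to treat the granted level as a ceiling on what the agent may do without asking. The sketch below is an assumption-laden illustration: the action names and the `REQUIRED_LEVEL` mapping are hypothetical, and only the four level names come from the ladder above.

```python
from enum import IntEnum


class TrustLevel(IntEnum):
    SUGGESTS = 1    # surface observations only
    DRAFTS = 2      # propose a plan for human review
    EXECUTES = 3    # act on the human's behalf
    AUTONOMOUS = 4  # reconcile continuously, flag only large drift


# Minimum trust level each kind of action requires (hypothetical mapping).
REQUIRED_LEVEL = {
    "flag_pattern": TrustLevel.SUGGESTS,
    "propose_weekly_plan": TrustLevel.DRAFTS,
    "block_calendar_time": TrustLevel.EXECUTES,
    "decline_meeting": TrustLevel.EXECUTES,
}


def authorize(action: str, granted: TrustLevel) -> str:
    """Decide how the agent may handle an action at the granted level."""
    required = REQUIRED_LEVEL.get(action)
    if required is None:
        return "refuse"          # unknown actions are never performed
    if granted >= required:
        return "proceed"
    return "needs_approval"      # hand the decision back to the human


# At Level 2 the agent may draft a plan but must ask before touching a calendar.
print(authorize("propose_weekly_plan", TrustLevel.DRAFTS))
print(authorize("block_calendar_time", TrustLevel.DRAFTS))
```

Defaulting unknown actions to `refuse` and under-privileged ones to `needs_approval` keeps the human as the executor of last resort, which matches the Level 2 recommendation.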

What This Actually Looks Like

Let me be concrete. Running personal AI infrastructure — the PAI framework I’ve written about, customized into an instance called Arc — means every conversation carries persistent context about what I’m optimizing for.

When I’ve been in deep work mode for days and haven’t mentioned anything outside of code, the system’s context reflects that pattern. When I set quarterly goals across domains and then stop referencing some of them, the absence is visible in the conversation history. When I declared that physical health was a non-negotiable priority and then went two weeks without discussing it, the drift is detectable.

The agent doesn’t need to be a life coach. It needs to be a terraform plan that runs periodically across your life domains and surfaces the delta.

“Here’s your desired state. Here’s what I observe about your actual state. Here are the gaps. What do you want to do about them?”

That’s it. Desired state. Actual state. Diff. Action items. The same loop that keeps Kubernetes pods running and GitOps clusters in sync — applied to the domains that actually matter.

To be clear about where this stands: the persistent context, pattern visibility, and drift detection are real. But it’s not automatic. The “reconciliation loop” today is a conversation where the system surfaces patterns from accumulated context — not a background daemon pushing proactive alerts. We’re at “smart journal that talks back,” not “Kubernetes controller for your life.” The architecture is sound. The hands-free automation layer isn’t there yet.
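For a sense of what the “plan” output could look like, here is a minimal sketch that is run on demand, in keeping with the conversational loop described above. The metric names and numbers are invented, the `+`/`~` line format is only loosely modeled on plan-style diffs, and the code assumes every metric is one where higher is better.

```python
def plan(desired: dict, actual: dict) -> list:
    """Diff desired against self-reported actual state, one line per gap."""
    lines = []
    for domain in sorted(desired):
        for metric, target in sorted(desired[domain].items()):
            observed = actual.get(domain, {}).get(metric)
            if observed is None:
                # Nothing reported for this metric at all.
                lines.append(f"+ {domain}.{metric}: (no data) -> {target}")
            elif observed < target:
                # Reported, but below the declared target.
                lines.append(f"~ {domain}.{metric}: {observed} -> {target}")
    return lines


# Hypothetical declared priorities and self-reported actuals.
desired = {
    "health": {"runs_per_week": 3},
    "family": {"dinners_per_week": 4},
    "work": {"deep_work_blocks": 5},
}
actual = {
    "health": {"runs_per_week": 0},
    "work": {"deep_work_blocks": 7},
}

for line in plan(desired, actual):
    print(line)
# + family.dinners_per_week: (no data) -> 4
# ~ health.runs_per_week: 0 -> 3
```

The function returns gaps and nothing else. Deciding what to do about them stays with the person reading the output.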

The Honest Limitation

This only works if you’re honest with the agent.

Garbage in, garbage out. If you perform for your AI the way some people perform for their therapist, saying what sounds good instead of what’s true, the drift detection reconciles against a fictional actual state. Lie about your drinking, and governance has nothing to govern.

Peterson’s Rule 8: “Tell the truth — or at least, don’t lie.” Your personal agent is only as good as the signal you give it. The temptation to curate that signal — to present the version of yourself you wish you were — is the same temptation Peterson warned about in every other context.

In infrastructure, monitoring agents measure actual state independently of claims. In personal life, the agent relies on your transparency. That’s the real barrier. Not the technology. The willingness to let something see you clearly — including the parts that are drifting.

The Three-Post Synthesis

The Four Burners theory told you to choose which parts of your life to sacrifice — an honest diagnosis delivered through a broken architecture.

Peterson mapped what’s missing — the control plane and governance layer that make multi-domain operation possible without the “cut a burner” tradeoff.

Your personal AI agent — if you let it see you clearly — is the first non-human executor positioned to help run that infrastructure. Not more gas for the stove. A thermostat. Monitoring desired state against actual state, detecting drift before it becomes a crisis, nudging you back when you wander. Not replacing the human relationships that have always served this function — augmenting them with a layer that never forgets your declared priorities.

The technology is early, imperfect, and accessible today only to people willing to build their own infrastructure. But the architecture is sound. And the core question remains the one Vivekananda, Peterson, and Hormozi all converge on: are you willing to run the loop honestly?

This is Post 3 of 3 in Life as Infrastructure. Start with Post 1: The Four Burners Are a Monolith.

If you’re running a personal AI system that does something like this — or if you think the idea is insane — I’d like to hear about it. Find me on X or LinkedIn.