The Personal AI Wars: A Developer's Guide

The personal AI assistant space exploded in early 2026, driven by OpenClaw (formerly Clawdbot) — 150,000+ GitHub stars in 72 hours, the fastest-growing open source project in history. This triggered alternatives (NanoClaw, Nanobot, ZeroClaw) and a wave of startups building infrastructure around it.

Simultaneously, Anthropic started aggressively locking down its platform to prevent third-party agents from using consumer subscriptions. On January 9, 2026, it silently blocked all third-party tools from using Claude Pro/Max OAuth tokens. On February 19, it formalized the block legally: no consumer subscription tokens in any third-party tool, including tools built on Anthropic’s own Agent SDK.

The most exciting consumer AI movement of the decade runs directly into the economic interests of the labs powering it. Here’s what it means for developers choosing where to build.

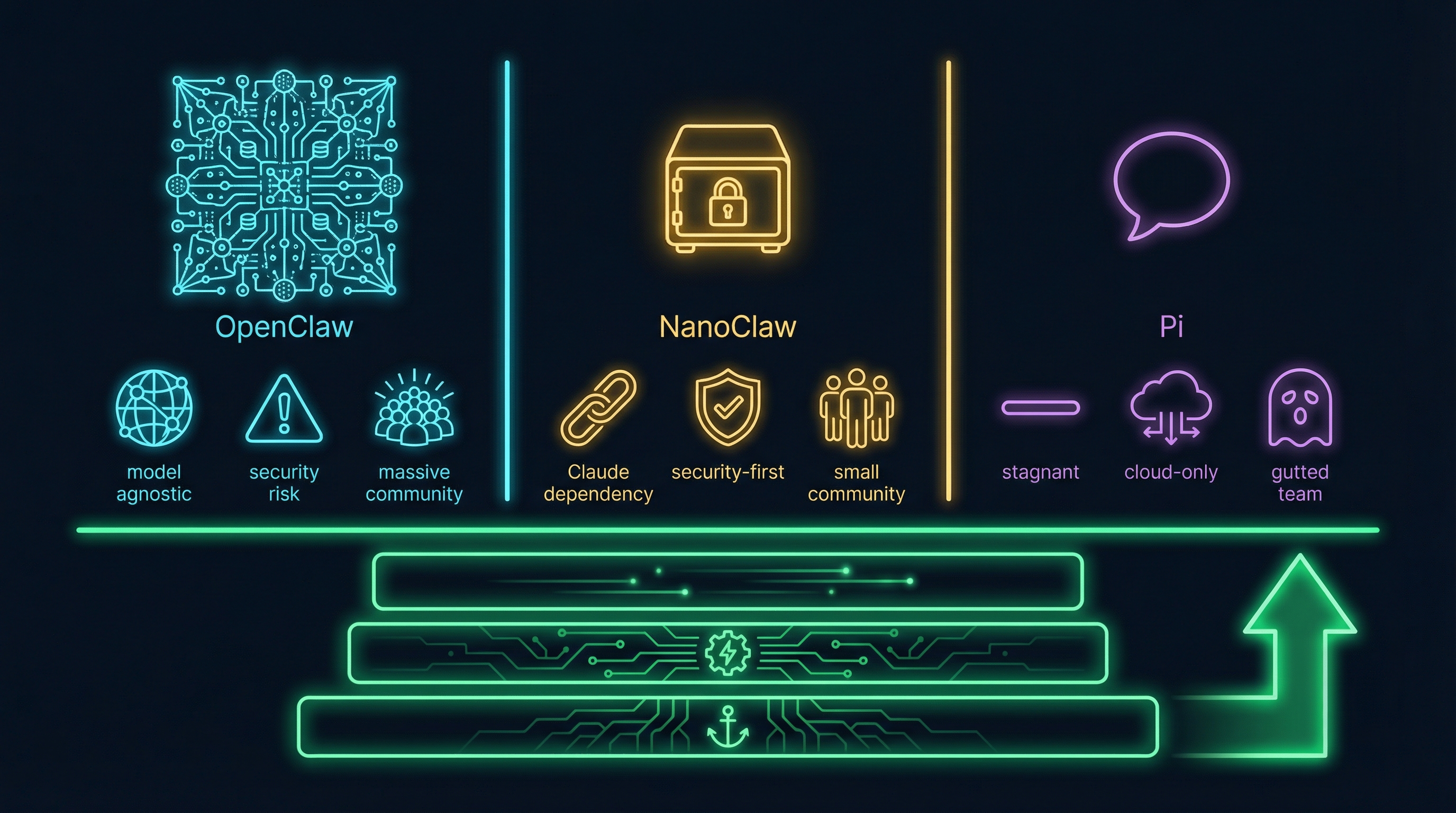

The Players

1. OpenClaw

An open-source personal AI agent that connects to WhatsApp, Telegram, Discord, Slack, Signal, and iMessage. It doesn’t just chat — it acts: managing email, browsing the web, running shell commands, and executing multi-step workflows autonomously. Creator Peter Steinberger (@steipete) announced on February 14 that he’s joining OpenAI. OpenClaw is moving to a foundation, sponsored by OpenAI.

The numbers: 150K+ stars, 20K+ forks, 50+ integrations, 700+ skills on ClawHub. The architecture: 52+ modules, ~400,000 lines of code, single Node.js process with shared memory. Model-agnostic — works with Claude, GPT, DeepSeek, and local models.

The catch: The ClawHavoc security incident exposed 341 malicious skills (11.9% of ClawHub) carrying Atomic Stealer malware, downloaded 7,000+ times before detection. Heavy users report $300-$750/month in API tokens. The codebase is too large for most people to audit, and with Steinberger at OpenAI, governance falls to the community.

2. NanoClaw

A lightweight, security-focused alternative built as a direct response to OpenClaw’s complexity. Created by Gavriel and Lazer Cohen (Qwibit), it runs agents in isolated Linux containers — OS-level sandboxing rather than application-level permission checks. The core is ~500 lines of TypeScript, auditable in 8 minutes.

7,200+ stars in four weeks. Built directly on Anthropic’s Claude Agent SDK. Each chat group gets its own isolated container with separate memory and filesystem. Philosophy: “skills over features” — you extend it with Claude Code skill files, not pull requests.
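To make the "one container per chat group" idea concrete, here is a minimal sketch of how a launcher might derive a separate container and data directory for each group. It is not NanoClaw's actual code: the class name, image name, host directory, and resource limit are all hypothetical, and only standard `docker run` flags are used.

```python
import re
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class GroupSandbox:
    """Hypothetical description of one chat group's sandbox."""

    group_id: str
    image: str = "agent-runtime:latest"        # hypothetical image name
    data_root: str = "/var/lib/agent-groups"   # hypothetical host directory

    def container_name(self) -> str:
        # Replace anything Docker would reject in a container name.
        safe = re.sub(r"[^a-zA-Z0-9_.-]", "-", self.group_id)
        return f"agent-{safe}"

    def host_dir(self) -> str:
        # Each group gets its own directory, so memory and files never mix.
        return str(PurePosixPath(self.data_root) / self.container_name())

    def docker_argv(self) -> list[str]:
        # Build the command only; the caller decides whether to run it.
        return [
            "docker", "run", "--rm",
            "--name", self.container_name(),
            "--read-only",                      # root filesystem is immutable
            "--cap-drop", "ALL",                # no extra kernel capabilities
            "--memory", "512m",                 # illustrative limit
            "-v", f"{self.host_dir()}:/workspace",  # the only writable path
            self.image,
        ]


family = GroupSandbox("family chat")
print(" ".join(family.docker_argv()))
```

The point of the design is that the boundary is enforced by the OS rather than by permission checks inside the agent. A real launcher would also need a collision-free mapping from group IDs to names, since the simple substitution here maps `family chat` and `family-chat` to the same container.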

The catch: Hard Claude dependency is the single biggest risk factor. 10 contributors vs. OpenClaw’s hundreds. WhatsApp-only by default. Requires a Claude Code subscription to set up.

3. Pi (the Coding Agent)

Not a personal AI agent — a coding agent toolkit. Think Claude Code but community-built and model-agnostic. Pi provides a unified multi-provider LLM API, an agent runtime, and a terminal UI. Created by Mario Zechner (@badlogic), 17,100+ stars, 127 contributors. Critically, Pi is the engine that powers OpenClaw under the hood.

The architecture is a modular monorepo: pi-ai (unified LLM API across every major provider), pi-agent-core (agent loop), pi-coding-agent (CLI), and more. ~500 lines in the core agent. No MCP integration — Pi’s philosophy is that agents should generate their own tools rather than download them from a marketplace.

The catch: Developer-only audience. No messaging integrations. Creator on OSS vacation until March 2. But the model-agnostic design means the Anthropic crackdown is exactly the scenario Pi was built to survive.

The Elephant in the Room: Anthropic’s Crackdown

This is the single most important dynamic in this space.

Anthropic sells subscriptions priced for “human-speed” usage with built-in rate limits. Third-party agents sidestep those limits and run autonomous overnight loops that consume far more tokens than the subscription price covers. Heavy Claude Code usage could cost $1,000+/month via API, but users were paying $200/month flat. The “buffet analogy”: Anthropic offers all-you-can-eat, but you’re supposed to eat slowly. Agents eat at 10x speed.
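The gap is easy to put in numbers. The sketch below uses only the figures quoted above ($1,000/month as a lower bound for API-priced heavy usage, $200/month flat, and the 10x agent multiplier). The last step assumes, purely for illustration, that a human-paced user consumes exactly the flat fee's worth of tokens.

```python
def usage_gap(api_equivalent_usd: float, subscription_usd: float) -> tuple[float, float]:
    """Return (ratio, shortfall) between API-priced usage and the flat fee."""
    ratio = api_equivalent_usd / subscription_usd
    shortfall = api_equivalent_usd - subscription_usd
    return ratio, shortfall


SUBSCRIPTION_USD = 200   # flat monthly price quoted in the text
HEAVY_API_USD = 1_000    # API-priced heavy usage quoted in the text (lower bound)
AGENT_SPEEDUP = 10       # "agents eat at 10x speed"

ratio, shortfall = usage_gap(HEAVY_API_USD, SUBSCRIPTION_USD)
print(f"{ratio:.1f}x the subscription price, ${shortfall:,.0f}/month uncovered")
# prints: 5.0x the subscription price, $800/month uncovered

# Illustrative assumption: human-paced usage is worth exactly the flat fee.
agent_usage_usd = SUBSCRIPTION_USD * AGENT_SPEEDUP
print(f"A 10x agent would then consume ${agent_usage_usd:,}/month at API prices")
```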

The Industry Split

Google copied Anthropic’s playbook within a week. But OpenAI went the opposite direction entirely.

While Anthropic blocked OAuth tokens and banned third-party harnesses, OpenAI hired Steinberger, sponsored OpenClaw as a foundation, open-sourced their own Agents SDK (which supports non-OpenAI models), and allowed Codex to accept ChatGPT Plus/Pro subscriptions in third-party tools.

This isn’t an industry trend — it’s an industry split. Anthropic and Google are consolidating the harness layer. OpenAI is betting that openness pulls developers away from closed competitors.

Impact: OpenClaw is low risk — model-agnostic and backed by OpenAI. NanoClaw is critical risk — hard dependency on the wrong side of the split. Pi is low risk — model-agnostic by design, and the split makes its unified LLM API more valuable.

What Makes a Platform Worth Building On?

Five factors matter when deciding where to build your workflows:

1. Model Independence. Can you swap providers without rewriting everything? OpenClaw and Pi win — both model-agnostic. NanoClaw is dangerously coupled to Anthropic.

2. Data Portability. All three store data locally (SQLite, filesystem, session trees). No cloud dependency. Your context stays yours.

3. Security Architecture. NanoClaw wins definitively. Container-level isolation vs. OpenClaw’s application-level checks that failed in ClawHavoc.

4. Community Momentum. OpenClaw dominates (150K+ stars, 9 YC startups building on it). Pi has 17K+ stars with 127 contributors. NanoClaw has 7K stars and 10 contributors.

5. Extensibility. OpenClaw gives breadth (50+ integrations). NanoClaw gives auditability (skill files, lean core). Pi gives self-sufficiency (agents write their own extensions).

Where the Value Actually Lives

Don’t build on the agent. Build on the infrastructure around it. Nobody builds a business on the Linux kernel itself; you build on what makes it usable, secure, and extensible.

Layer 1: Managed Agent Infrastructure. Hosted instances, one-click deployment, managed updates. Already 9 YC W26 startups here.

Layer 2: Security and Trust Tooling. After ClawHavoc (11.9% malicious skills), this is the urgent gap. No serious team adopts personal AI agents without it.

Layer 3: Model-Agnostic Orchestration. The Anthropic crackdown proves single-provider dependency is existential risk. The most defensible layer to build on.
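As a rough illustration of what Layer 3 means in code, here is a minimal sketch of a provider-agnostic interface with ordered fallback. It is not Pi's pi-ai API or any lab's SDK: `ChatProvider`, `StubProvider`, and `Orchestrator` are hypothetical names, and the stub stands in for a real client so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Protocol


class ProviderUnavailable(Exception):
    """Raised when a provider cannot serve the request (blocked, down, rate-limited)."""


class ChatProvider(Protocol):
    """The only surface the workflow code is allowed to depend on."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubProvider:
    """Stand-in for a real client; a real adapter would call the vendor's API."""

    name: str
    available: bool = True

    def complete(self, prompt: str) -> str:
        if not self.available:
            raise ProviderUnavailable(self.name)
        return f"[{self.name}] {prompt}"


class Orchestrator:
    """Tries providers in order, so losing one vendor is a config change, not a rewrite."""

    def __init__(self, providers: list[ChatProvider]) -> None:
        self.providers = providers

    def complete(self, prompt: str) -> str:
        failed: list[str] = []
        for provider in self.providers:
            try:
                return provider.complete(prompt)
            except ProviderUnavailable:
                failed.append(provider.name)
        raise ProviderUnavailable("all providers failed: " + ", ".join(failed))


orchestrator = Orchestrator([
    StubProvider("primary", available=False),  # simulates a provider cutting off access
    StubProvider("fallback"),
])
print(orchestrator.complete("summarize my inbox"))
# prints: [fallback] summarize my inbox
```

The workflow only ever talks to `Orchestrator`, so a policy change at one lab affects the provider list rather than the code built on top of it.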

Verdict: If You Had to Pick One

For building a personal AI workflow: NanoClaw. Sound security, auditable codebase, lean design. But solve the Anthropic dependency first.

For building on the largest ecosystem: OpenClaw. Most integrations, most skills, OpenAI backing. Trade-off: 400K lines you can’t audit and a security model that already failed once.

For the model-agnostic bet: Pi. The infrastructure layer hiding in plain sight — the engine beneath OpenClaw without the surface-level risks.

For regulated industries: NanoClaw. Container isolation maps to what compliance teams understand.

The nuance: These aren’t three competitors in the same ring. OpenClaw and NanoClaw are personal AI agents. Pi is a coding agent toolkit — the engine layer beneath them. The real question isn’t “which one wins” but “which layer do you want to build on.”

The Platform Pattern

This is exactly the pattern I wrote about in “The Browser Is Not an API” and explored with the clone problem. But unlike the classic pattern, the labs aren’t unified. OpenAI is building a wider bridge while Anthropic and Google pull up the drawbridge, the same bet Android made against iOS.

For developers, this split is leverage. OpenAI is currently the only major lab willing to meet developers where they are. That won’t last forever — but the window is open.

The lesson: bet on model-agnostic infrastructure regardless of which lab is friendliest today. The model is the commodity. The harness is the product. The data layer is the moat. And the lab that’s open today can close tomorrow.

If you’re thinking about where the personal AI space lands, you might also find the OpenClaw trust ladder analysis relevant — it digs into the security model specifically.

Follow the conversation on X @orestesgarcia or connect on LinkedIn.