The Clone Problem: Why Your AI Personal Agent Can't Follow You to Work

Your AI assistant knows your grocery list, your sleep patterns, and your kids’ soccer schedule.

It also knows you’re working on the Q3 integration for a major client, that you have concerns about the vendor’s security posture, and that you’ve been texting your colleague about the deadline pressure.

Now imagine a regulator asking for all communications related to that client engagement. Your “personal” AI just became discoverable evidence.

This isn’t hypothetical. It’s the collision I see coming—and nobody’s building for it.

The Whole-Self Promise

The pitch for AI personal agents is seductive: one system that knows everything about you. Your calendar, your health data, your work projects, your family commitments. A digital twin that can act on your behalf, make decisions aligned with your values, and free you from the cognitive overhead of managing a fragmented digital life.

Daniel Miessler calls his system PAI—a unified cognitive operating system with 65+ skills that learns continuously from every interaction. Companies like Viven.ai are building “digital twins” that ingest every email, meeting, and document to create AI replicas of knowledge workers. The vision is compelling: your AI gets smarter about you over time, across every domain of your life.

But here’s the tension nobody’s addressing: what if your employer legally owns part of that context?

The Regulatory Reality

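In banking, every business communication is a regulated record. SEC Rule 17a-4 requires broker-dealers to retain business-related communications for at least three years, and certain other categories of records for six. FINRA requires those records to be preserved in a format that complies with the same rule, which in practice means tamper-resistant storage. These aren’t suggestions; they’re requirements with teeth.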

Since 2021, regulators have imposed over $3.5 billion in fines for “off-channel communication” violations. The offense? Using WhatsApp and personal devices for work messages that compliance systems never captured. Individual brokers now face suspensions, not just firm-level fines, for responding to client questions on personal phones.

The convenience that made personal messaging attractive is exactly what made it a regulatory liability. Now imagine that dynamic applied to an AI that has context on everything.

The Architecture of the Problem

I’ve spent fifteen years as an enterprise architect in financial services. I built Claude Buddy specifically because AI coding tools weren’t designed for regulated environments. The same pattern applies here, but worse.

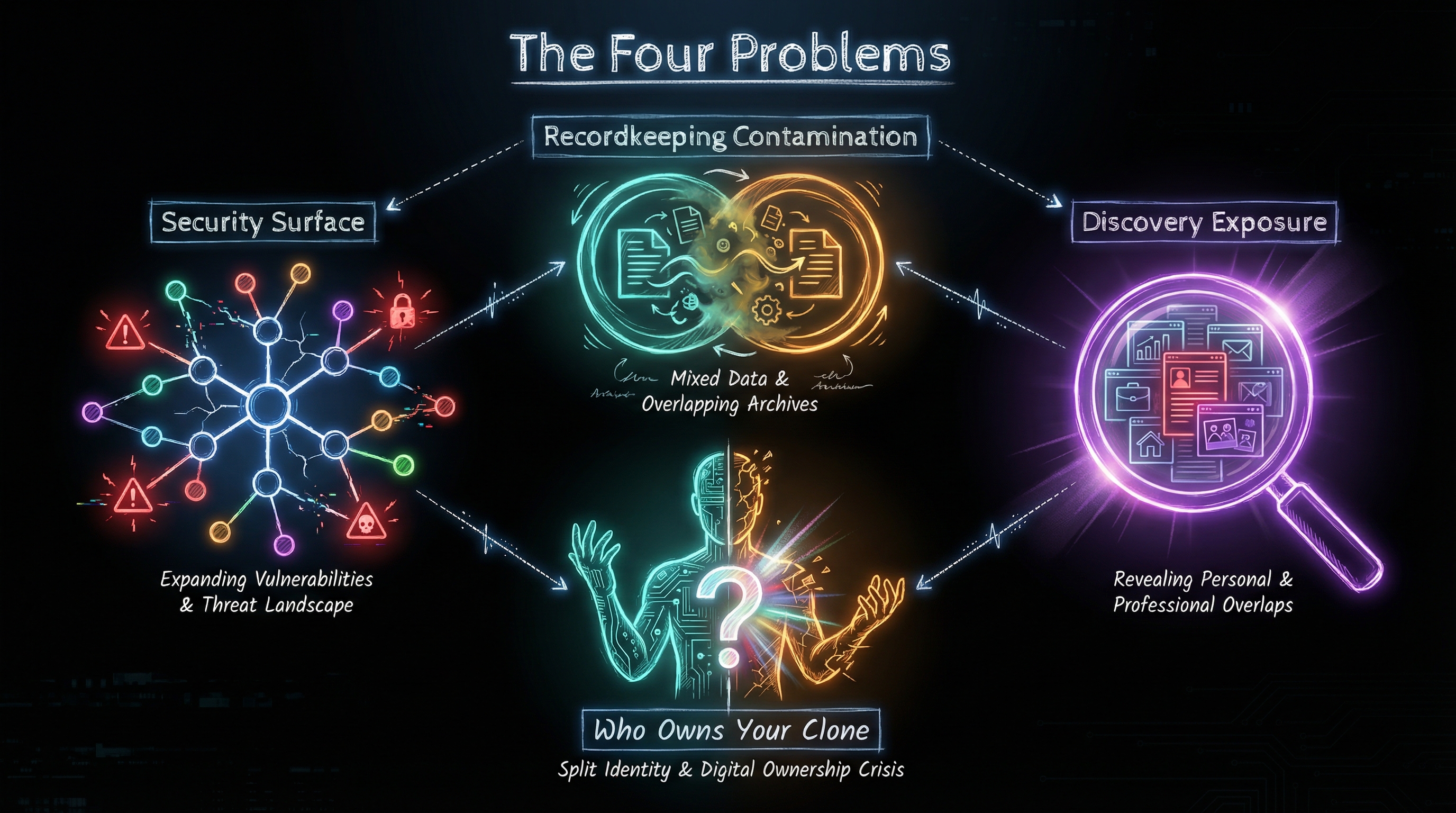

An AI personal agent that spans work and life creates at least four unsolved problems:

1. Recordkeeping Contamination

If your AI has context from both personal and work interactions, where does one record end and another begin? When you ask your agent to “summarize my priorities for next week,” it might synthesize your daughter’s recital, your doctor’s appointment, and your client presentation. That synthesis—if it touches work context—may be a business record subject to retention requirements.

The regulatory frameworks weren’t designed for AI that thinks across boundaries. They assume discrete communications: this email is personal, that one is business. An AI that contextualizes everything breaks that assumption.
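
One way to make the contamination concrete is to treat it like taint tracking: a synthesized answer inherits the most restrictive label of any source it drew on. The sketch below is a minimal illustration under that assumption. The `Domain` labels, `ContextItem`, and `classify_output` are hypothetical names for this example, not part of any real agent framework or regulatory definition.

```python
from dataclasses import dataclass
from enum import IntEnum


class Domain(IntEnum):
    """Hypothetical labels, ordered from least to most restrictive."""
    PERSONAL = 0
    WORK = 1


@dataclass(frozen=True)
class ContextItem:
    text: str
    domain: Domain


def classify_output(sources: list[ContextItem]) -> Domain:
    """A synthesized answer inherits the most restrictive source label.

    Assumption: a single work-derived source is enough to make the
    whole synthesis a candidate business record.
    """
    return max((item.domain for item in sources), default=Domain.PERSONAL)


sources = [
    ContextItem("Daughter's recital on Tuesday", Domain.PERSONAL),
    ContextItem("Doctor's appointment on Wednesday", Domain.PERSONAL),
    ContextItem("Client presentation on Thursday", Domain.WORK),
]

print(classify_output(sources).name)  # WORK
```

Under this rule, the "priorities for next week" summary is labeled as work the moment the client presentation enters it, which is exactly why a unified context is hard to keep out of retention scope.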

2. Discovery Exposure

In litigation, parties can request all relevant documents—including communications. If your personal AI maintains a unified context that includes work discussions, that entire context could become discoverable. Your personal journal entries, your health concerns, your family conversations—all potentially exposed because they’re stored alongside business context.

This isn’t paranoia. It’s how e-discovery actually works. If you can’t cleanly separate personal from business data, you may have to produce both.

3. The “Who Owns Your Clone” Question

When you leave a job, what happens to the work knowledge your AI accumulated? If your digital twin learned patterns from your employer’s codebase, absorbed institutional knowledge from internal documents, and developed expertise specific to your role—does that knowledge leave with you?

One researcher framed it bluntly: “What if one of our senior people leaves and his or her Digital Twin is left behind? Who owns this information?” In regulated industries, that question has compliance implications. Trade secrets, client information, proprietary methodologies—all potentially embedded in an AI that you thought was “yours.”

4. Security Surface Multiplication

AI personal agents need broad access to function. Your calendar, your email, your documents, your browser history. In a personal context, you’re accepting risk for yourself. But if that same agent has work context, you’re accepting risk for your employer—and potentially for clients whose data flows through your systems.

A breach of your personal AI isn’t just your problem anymore. It’s a potential regulatory notification event.

The False Solutions

The obvious answer is separation: keep work AI and personal AI completely distinct. Many regulated firms already mandate this—use approved tools for work, keep personal tools personal, never cross the streams.

But this defeats the entire value proposition of unified AI. The productivity gains come from context that spans domains. The AI that knows your work deadlines AND your family commitments can actually help you make tradeoffs. Two separate AIs with no shared context are just… two separate tools.

The other obvious answer is employer-controlled personal AI. Let the bank provide your digital twin, with full compliance controls baked in. But that raises problems of its own, and so does every other option on the table:

- Complete Separation — Loses cross-domain productivity gains

- Employer-Controlled — Privacy concerns, data portability issues

- Unified Personal AI — Regulatory exposure, discovery risk

- Status Quo — Missing the AI productivity revolution entirely

Neither extreme works. We need something in the middle.

Toward an Architecture

I don’t have a complete solution—but I have architectural intuitions from building governance-first AI tooling.

Hard Partitioning with Defined Interfaces

The AI might need separate data stores, separate model contexts, separate identities for work and personal domains. Not just logical separation—actual isolation where the work context literally cannot access personal data and vice versa.

But here’s the catch: you’d need defined interfaces where context can cross boundaries under controlled conditions. “Block next Thursday afternoon for personal—don’t schedule work meetings” requires the work AI to know something exists in personal time, without knowing what.
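
A minimal sketch of such an interface, assuming the only thing allowed to cross the boundary is an opaque time range. `PersonalCalendar`, `BusyBlock`, and `WorkScheduler` are hypothetical names, and running both sides in one process as shown here is not real isolation; the point is only the shape of the data that crosses.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class PersonalEvent:
    title: str
    start: datetime
    end: datetime


@dataclass(frozen=True)
class BusyBlock:
    """The only shape allowed to cross the boundary: times, no content."""
    start: datetime
    end: datetime


class PersonalCalendar:
    def __init__(self, events: list[PersonalEvent]) -> None:
        self._events = events  # never handed to the work side directly

    def busy_blocks(self) -> list[BusyBlock]:
        # Strip titles and every other detail before crossing the boundary.
        return [BusyBlock(e.start, e.end) for e in self._events]


class WorkScheduler:
    def __init__(self, blocked: list[BusyBlock]) -> None:
        self._blocked = blocked

    def can_schedule(self, start: datetime, end: datetime) -> bool:
        # Two half-open intervals overlap if each starts before the other ends.
        return not any(start < b.end and b.start < end for b in self._blocked)


personal = PersonalCalendar([
    PersonalEvent("School recital",
                  datetime(2025, 6, 12, 13, 0), datetime(2025, 6, 12, 17, 0)),
])
work = WorkScheduler(personal.busy_blocks())

print(work.can_schedule(datetime(2025, 6, 12, 14, 0), datetime(2025, 6, 12, 15, 0)))  # False
print(work.can_schedule(datetime(2025, 6, 12, 9, 0), datetime(2025, 6, 12, 10, 0)))   # True
```

The work side can refuse the meeting without ever seeing the word "recital". In a real deployment the two sides would presumably live in separate stores and processes, with something like `busy_blocks()` as the only exported call.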

Compliance-Aware Context Filtering

What if the AI could classify context in real-time? Work-related content gets routed to compliant storage. Personal content stays personal. The AI reasons across both, but the underlying data maintains separation.

This is technically hard. Classification errors have regulatory consequences. But it’s the direction that preserves the unified experience while respecting compliance boundaries.
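
To show where the difficulty sits, here is a rough sketch of a router that fails closed: anything the classifier is unsure about is retained as if it were work content and flagged for human review. Everything in it is an assumption made for illustration, including the `ContextRouter` name, the cutoff values, and the keyword-based `toy_work_probability`, which stands in for a real classifier.

```python
from dataclasses import dataclass, field
from typing import Callable

WORK_HINTS = {"client", "vendor", "deadline"}  # placeholder vocabulary


def toy_work_probability(text: str) -> float:
    """Stand-in for a real classifier: fraction of hint words present."""
    words = {w.strip(".,!?").lower() for w in text.split()}
    return len(words & WORK_HINTS) / len(WORK_HINTS)


@dataclass
class ContextRouter:
    classify: Callable[[str], float]
    work_cutoff: float = 0.6       # hypothetical thresholds
    personal_cutoff: float = 0.1
    compliant_store: list[str] = field(default_factory=list)
    personal_store: list[str] = field(default_factory=list)
    review_queue: list[str] = field(default_factory=list)

    def route(self, text: str) -> str:
        p = self.classify(text)
        if p >= self.work_cutoff:
            self.compliant_store.append(text)
            return "work"
        if p <= self.personal_cutoff:
            self.personal_store.append(text)
            return "personal"
        # Uncertain: fail closed by retaining it, and flag it for a human.
        self.compliant_store.append(text)
        self.review_queue.append(text)
        return "review"


router = ContextRouter(classify=toy_work_probability)
print(router.route("Vendor missed the client deadline"))     # work
print(router.route("Pick up groceries after soccer"))        # personal
print(router.route("Dinner moved because of the deadline"))  # review
```

Failing closed protects the retention obligation at the cost of privacy: the ambiguous dinner message ends up in compliant storage. Failing open would reverse that tradeoff, and neither choice makes the classification problem go away.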

The “Work Sim” Model

Some researchers propose split personas—a “Work Sim” that prioritizes productivity within compliance constraints, and a “Personal Sim” that prioritizes privacy and autonomy. The concept builds on agent-based modeling research like WorkSim, which demonstrates how heterogeneous agents can operate within institutional constraints while maintaining individual decision-making autonomy. The sims can coordinate at defined touchpoints, but they’re fundamentally separate agents with separate governance.

This feels closest to viable. It’s essentially what I do today with Claude Buddy for work and other tools for personal use—just formalized into a coherent architecture.
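
A toy version of that formalization, assuming each sim carries its own governance policy and the touchpoint is a short allowlist of message kinds. This is not the method from the WorkSim research; `Sim`, `Message`, and the policy fields are hypothetical names for this sketch.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Message:
    kind: str      # e.g. "availability" or "note"
    payload: str


@dataclass
class Sim:
    name: str
    allowed_outbound: frozenset[str]  # governance: what may leave this sim
    retain_inbound: bool              # governance: log received messages as records
    memory: list[str] = field(default_factory=list)
    audit_log: list[Message] = field(default_factory=list)

    def send(self, other: "Sim", message: Message) -> bool:
        if message.kind not in self.allowed_outbound:
            return False  # blocked at the boundary, nothing is delivered
        other.receive(message)
        return True

    def receive(self, message: Message) -> None:
        if self.retain_inbound:
            self.audit_log.append(message)
        self.memory.append(message.payload)


work = Sim("work", frozenset({"availability"}), retain_inbound=True)
personal = Sim("personal", frozenset({"availability"}), retain_inbound=False)

print(personal.send(work, Message("availability", "busy Thu 13:00-17:00")))  # True
print(personal.send(work, Message("note", "recital at school")))             # False
print(work.send(personal, Message("note", "client deck draft")))             # False
print(len(work.audit_log))                                                   # 1
```

The work sim retains what it receives because its governance says so, while the personal sim keeps no audit trail. Neither can push free-form content across the touchpoint.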

What This Means for Banking

If you work in regulated industries, here’s my honest take: you probably can’t have a unified personal AI that spans work and life. Not with current tooling. Not with current regulations. The liability exposure is too high, and the architectural solutions don’t exist yet.

What you can do:

Accept the partition. Use approved tools for work, personal tools for personal. Don’t let them cross. It’s not the unified future we were promised, but it’s compliant.

Push for organizational clarity. If your firm is adopting AI tools, ask the hard questions now. What’s the retention policy? What’s discoverable? What happens when employees leave? Better to answer these before the first regulatory inquiry.

Watch the tooling space. Someone will eventually build governance-first personal AI that actually addresses these problems. When they do, regulated industries will be the first buyers—because we’re the ones who need it most.

The Uncomfortable Truth

The dream of a single AI that knows your whole life assumes you have a single life with unified governance. But most knowledge workers don’t. We have employers with legal claims on our work output, regulators with requirements for our communications, and personal boundaries we want to protect.

The AI personal agent revolution will arrive differently for us. Not a unified clone that follows you everywhere, but a federation of specialized agents with carefully designed interfaces. Work AI that’s compliant. Personal AI that’s private. And a lot of architectural work to make them coordinate without contaminating each other.

It’s less elegant than the vision. But it’s the version that actually works in industries where every line of code—and every line of communication—is a liability.

That’s not pessimism. It’s the reality I’ve navigated for fifteen years. And as with Claude Buddy, I suspect the solution won’t come from consumer AI companies building for startups. It’ll come from enterprise architects who understand the constraints and build anyway.

If you’re thinking about this problem—or building toward it—I’d love to hear your approach. Find me on X or LinkedIn.