When the Regulation Hits Home: What a Government Developer Needs to Know About Staying Current in the AI Era

I started the first one because my son texted me. I wrote the second one because my daughter asked at dinner. Nobody asked me to write this one.

That’s the whole point.

Orestes saw headlines about a legal AI plugin and wanted to know what “restructure your workflow” actually means for a litigation practice. Direct question, direct answer. Annette read the post I wrote for her brother and asked the harder question: what about the people building the tools? Both of them came to me. Both conversations started with a question that had urgency behind it.

Sebastian didn’t ask. And that’s exactly why I’m writing this.

Sebastian is a computer engineer working as a government contractor in California. He works for the Department of Defense and has a stable role and a paycheck that arrives on time. From the outside, he’s the sibling with the least to worry about. He’s fine.

That’s the problem with invisible risks. They feel like stability right up until they don’t.

The risk that doesn’t feel urgent is the most dangerous one.

The Wall He Works Behind

His situation isn’t a personal failing. It’s a structural one.

The gap between how the public sector and private sector use generative AI is staggering. The Boston Consulting Group reports that roughly 12% of public-sector organizations have meaningfully adopted generative AI, compared to 78% in the private sector. That’s a 6.5x adoption gap — not because government developers are behind, but because the systems they work inside move at the speed of procurement, not the speed of technology.

The irony is sharp. GitHub Copilot — which 68% of developers use — doesn’t have FedRAMP authorization. Meanwhile, Claude (through AWS GovCloud) and Azure OpenAI Service have FedRAMP High authorization, but most agencies haven’t deployed them as developer tools. The infrastructure exists. The policy pipeline hasn’t caught up.

This is the environment he works in. Not by choice. By regulation.

About 80% of federal IT budgets go to maintaining legacy systems, the same systems he touches daily. This isn’t glamorous work, but it’s real and it matters. Someone has to keep running the systems the military depends on.

The policy environment makes it worse. The Biden administration’s Executive Order 14110 established over 100 AI requirements for federal agencies. The incoming Trump administration revoked it on Day 1 (January 20, 2025, via EO 14148) and three days later issued EO 14179, which set a deregulatory direction. The strategic whiplash creates paralysis, and developers like him sit in the middle of this uncertainty.

This maps to the same access-restriction dynamics I described in banking — where restriction becomes a cage that protects the organization while constraining the people inside it.

The Risk Nobody Warns You About

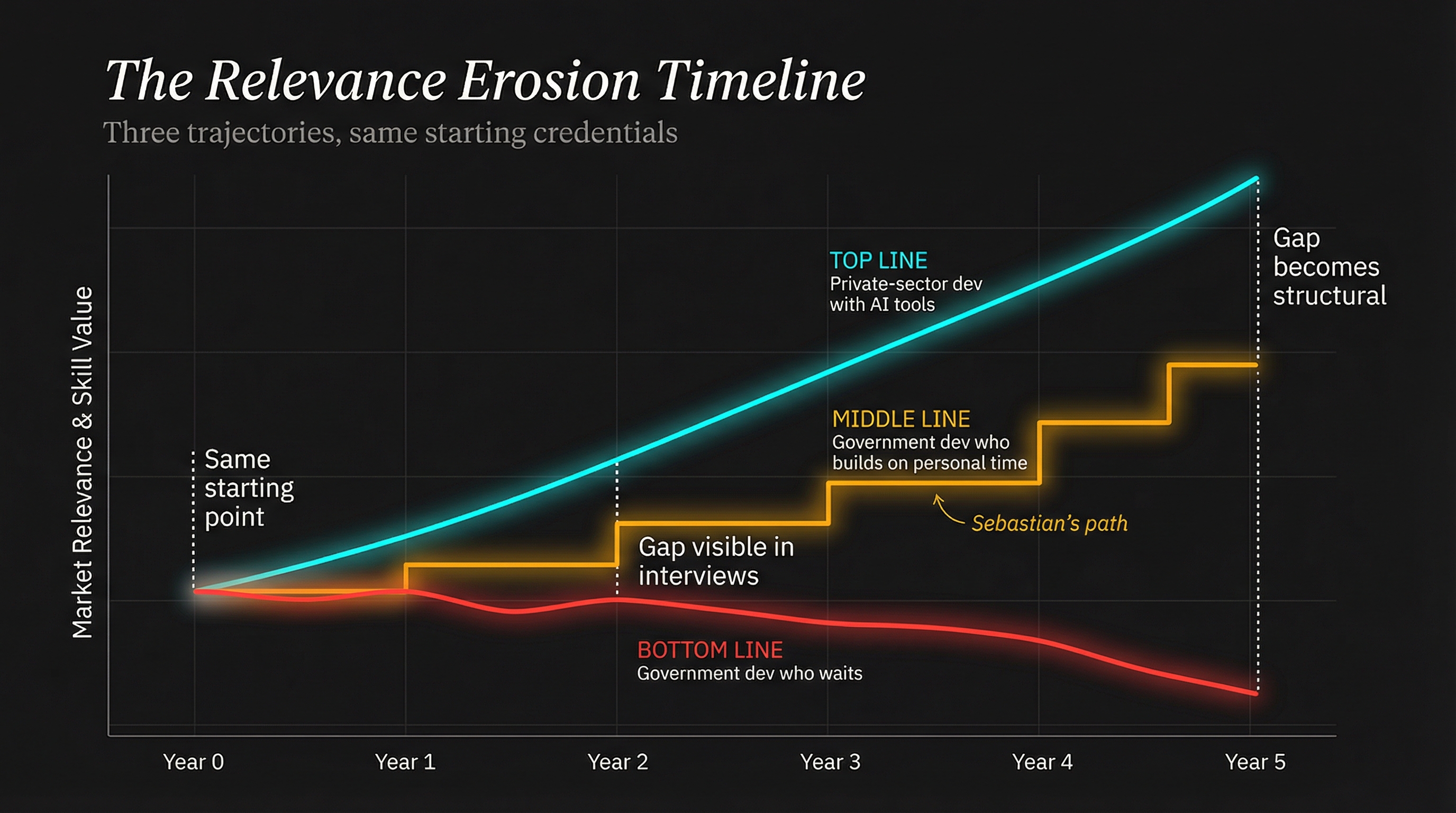

Each of my kids faces a different version of the same technological shift. Orestes faces visible revenue compression — his billable hours squeezed by AI tools. Annette faces visible market compression — entry-level jobs disappearing while she’s still in school.

Sebastian faces skills compression. The gap between what he practices daily and what the market increasingly demands is widening quietly, behind a firewall, on someone else’s timeline. The threat is invisible, chronic, and long-term. He can’t see it on any dashboard because it doesn’t show up in his performance reviews, his salary, or his position. It shows up five years from now, when the skills he hasn’t been developing become the skills employers require.

This is the hardest risk to take seriously because nothing feels wrong. The paycheck arrives. The work is meaningful. The role provides stability. But Deloitte’s Human Capital research estimates that technical skills now have a half-life of 2.5 to 5 years — down from roughly 30 years in 1987. The clock is running whether he watches it or not.
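To make the half-life figure concrete, here is a simple worked example. It assumes skill relevance decays exponentially, which is what the term “half-life” implies; h is the half-life in years (Deloitte’s 2.5 to 5), t = 5 matches the five-year horizon above, and R(t) is an illustrative label for the share of today’s skill value still current after t years.

```latex
R(t) = \left(\tfrac{1}{2}\right)^{t/h}
\qquad
R(5)\big|_{h=2.5} = \left(\tfrac{1}{2}\right)^{2} = 0.25,
\qquad
R(5)\big|_{h=5} = \left(\tfrac{1}{2}\right)^{1} = 0.50
```

Under that model, somewhere between half and three quarters of what he practices today would need replacing within five years.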

A METR study of experienced open-source developers found they took 19% longer to complete tasks when using AI tools, even though they expected AI to speed them up. That points to a real learning curve. The developers who start now, on their own time, bank that learning before it becomes urgent.

Andrew Ng recently said, “I just don’t hire people like that anymore” — referring to experienced engineers who treat AI as optional.

The hardest career challenge isn’t the one that scares you. It’s the one that doesn’t.

What the Position Actually Buys You

Let me give Sebastian full credit for what he has that his siblings don’t.

A Department of Defense engineering role is a genuine market asset. The regulatory requirements create a barrier to entry that keeps supply constrained. The average salary for DoD professionals sits around $119,000, real money by any measure. The government premium, typically $15,000–$25,000 above equivalent commercial roles, is a direct function of restricted supply.

Large-scale systems experience matters. Navy systems operate at massive scale, with requirements most commercial developers never encounter. He does software engineering for systems where failure isn’t an option, as daily work rather than as a theoretical concept.

Legacy systems knowledge has genuine value. The same AI tools that are disrupting private-sector development are about to become the primary instruments for modernizing the federal legacy estate. The Air Force’s DAFBOT platform is already using large language models to assist with COBOL-to-Java conversion on legacy Department of Defense systems. That kind of modernization is exactly the work someone with Sebastian’s background, experienced with DoD systems and grounded in government compliance, could lead.

And the government can move fast when it wants to. GenAI.mil reached 1.1 million military users within its first six months. That adoption curve suggests that when AI arrives as a developer tool inside his environment — and it will — the government engineers who already understand the technology will be first in line.

But here’s the thing he needs to hear clearly: a government role is a multiplier, not a substitute. Multiply zero AI capability by a government premium and you still get zero. The position amplifies whatever skills you bring to the table.

The clone problem makes this harder. Personal AI agents — the kind you build and customize at home — can’t follow you through the regulation boundary. The skills are portable. The tools are not. Which means he has to become fluent in the thinking patterns of AI-assisted development even if he can’t use the specific tools at work.

The Numbers Behind the Quiet Exit

The salary math tells its own story.

The DoD average of roughly $119,000 is solid. But the average AI engineer salary has climbed to roughly $206,000, a gap of $87,000 per year. The government premium of $15,000–$25,000 that once made government contracting financially competitive becomes proportionally less meaningful as the AI premium grows.
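The “proportionally less meaningful” point is easy to check. This worked ratio uses only the figures already quoted: the $15,000–$25,000 premium set against the $87,000 gap.

```latex
\text{gap} = \$206{,}000 - \$119{,}000 = \$87{,}000
\qquad
\frac{\$15{,}000}{\$87{,}000} \approx 0.17,
\qquad
\frac{\$25{,}000}{\$87{,}000} \approx 0.29
```

In other words, the premium that once set government work apart covers only about a sixth to just under a third of the distance to the AI-engineer average.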

He doesn’t need to chase that number. But the market is telling a story about where value is migrating, and government workforce surveys point the same way: 83% of government professionals report considering a job change.

He doesn’t need to leave. But the cost of staying without growing increases every year.

The Playbook — What to Do From Behind the Wall

Here’s the concrete answer — the playbook for a government developer watching AI reshape the industry from behind a regulation wall.

- Use AI tools on personal time. Seriously. This isn’t a nice-to-have. Build real projects with Claude Code, Cursor, or whatever tools match the problems you want to solve. The METR slowdown suggests that experienced developers need real ramp-up time with AI tooling; you can’t just flip a switch and get faster. Start that clock now, on your schedule, with no workplace restrictions. An evening project that ships is worth more than a year of reading about what AI can do.

- Learn context engineering, not just coding. This is the same skill I recommended to Annette and Alejandro, but Sebastian has something they don’t: engineering maturity. Context engineering is the discipline of structuring information so AI agents produce useful output on the first pass. He already thinks in systems, so the leap is smaller than he might expect.

- Study agentic workflows. Multi-agent architectures, function-calling patterns, CI/CD integration with AI agents. These sit where his current development and systems skills meet the direction the industry is heading. The bridge between “I build software for government systems” and “I build AI-augmented software for government systems” is shorter than most people realize.

- Double down on what AI can’t replace. System design, architecture, security thinking, threat modeling. His government role already develops these skills daily, but in ways that might not be explicitly marketable. Make them explicit. Document architectural decisions. Write about tradeoffs. Build a portfolio of judgment, not just code.

- Keep fundamentals razor-sharp. When AI generates code, he’s the one who spots what the model confidently missed. The Trust Ladder framework places code review and security analysis at the high-judgment rungs where human expertise is non-negotiable. Those skills compound. Let them.

- Build in public. Open-source contributions, a GitHub portfolio, technical writing: evidence of growth that exists outside the government title and beyond the regulation wall. The government community often defaults to invisible work behind restricted boundaries. That’s fine for security. It’s terrible for career portability.

- Get comfortable with adjacent technology. Kubernetes, Terraform, cloud-native patterns. This is the infrastructure AI agents run on. His engineering background translates directly; he already thinks about systems that have to work.
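For the function-calling patterns mentioned in the playbook above, the core idea fits in a weekend-sized sketch. This is a minimal, hypothetical harness, not any vendor’s API: a stub `fake_model` stands in for a real model and returns a JSON tool call, and `dispatch` checks that call against an allow-list of registered functions before running it. The tool names (`count_lines`, `find_todos`) and the JSON shape are assumptions made for illustration.

```python
import json
from typing import Callable

# Hypothetical allow-list: only functions registered here can be invoked by the "model".
TOOLS: dict[str, Callable[..., object]] = {}


def tool(fn: Callable[..., object]) -> Callable[..., object]:
    """Register a function under its own name so the harness can dispatch to it."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def count_lines(source: str) -> int:
    """Toy tool: count the non-blank lines in a source snippet."""
    return sum(1 for line in source.splitlines() if line.strip())


@tool
def find_todos(source: str) -> list[str]:
    """Toy tool: return the stripped lines that contain a TODO marker."""
    return [line.strip() for line in source.splitlines() if "TODO" in line]


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call: always proposes find_todos as a JSON tool call."""
    return json.dumps({"tool": "find_todos", "arguments": {"source": prompt}})


def dispatch(raw_call: str) -> object:
    """Parse a proposed tool call, reject anything not on the allow-list, then run it."""
    call = json.loads(raw_call)
    name = call.get("tool")
    if name not in TOOLS:
        raise ValueError(f"model requested unregistered tool: {name!r}")
    return TOOLS[name](**call.get("arguments", {}))


# The model proposes; the harness decides what actually executes.
snippet = "x = 1\n# TODO: validate input\n\ny = x + 1  # TODO: remove\n"
result = dispatch(fake_model(snippet))
print(result)
```

The allow-list check in `dispatch` is where the security thinking from the playbook shows up: the model can propose anything, but only code the engineer registered can actually run.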

The key insight across all of these: build portable AI knowledge — skills and thinking patterns that transfer regardless of what tools your employer allows. The regulation wall restricts tools. It doesn’t restrict learning.

What I Don’t Know

I owe Sebastian — and anyone reading this — an honest accounting of what I can’t predict.

Government AI adoption timelines are genuinely unpredictable. GenAI.mil reached 1.1 million users in six months. Other agencies are still debating whether to allow ChatGPT on open networks. His agency might deploy AI developer tools next quarter or next decade. Neither outcome would surprise me.

The government premium may recover or evaporate. If adoption accelerates, AI-capable government engineers become scarce and command a premium. If it stalls, the premium becomes an anchor to a shrinking segment. Both scenarios are plausible.

I’m a technologist in banking, not government. I can analyze the technology, the economics, and the strategic implications. He lives inside constraints I can’t fully see: classification levels, program-specific restrictions, organizational cultures that vary wildly between agencies and contractors. The best advice I can give is filtered through a technology lens. The adaptation has to come from practitioners who understand constraints I can only describe from the outside.

What Happens Next Weekend

I could have said nothing. He didn’t ask, and from the outside everything looks fine. But the risk that nobody warns you about deserves better than silence. It deserves the same thing his brother and his sister got — a thorough, precise answer that respects the complexity of his situation.

Orestes got “What Happens Next Monday” — his growth happens at work, restructuring his practice between client calls. Annette got “What Happens Next Semester” — her growth happens at school, building an AI-native portfolio before graduation. Sebastian gets “What Happens Next Weekend” — because his growth happens outside the regulation wall, on his own time, building skills the government hasn’t asked for yet but will need soon.

Three kids. Three different pressures. Same truth: adapt deliberately, or adaptation happens to you.

Think of your current role as a foundation — not a ceiling. The DoD experience, the systems background, the engineering skills — these are real assets. They compound. But they compound only if you keep adding to them.

The tools are changing. The job isn’t. At least not the parts that matter — the system design, the security judgment, the ability to keep mission-critical systems working when everything else breaks.

The regulation wall is real. But the engineer behind it? More valuable than ever. If he’s building on both sides of it.

If you’re navigating the AI shift from inside a regulation wall — or if you’re a parent watching your kids figure this out in real time — I’d like to hear your perspective. Find me on X or LinkedIn.