When the Plugin Hits Home: What Anthropic's Legal AI Means for Your Local Litigation Practice

My son texted me on a Wednesday night. He’d seen something about a legal AI plugin and a market crash, and he had questions. Not panic—curiosity. The kind of direct, cut-through-the-noise question that litigators ask:

“Interesting. What are your thoughts? Not sure I fully understand what he means by restructuring your entire workflow. Like restructure it how?”

That’s the right question. Not “should I be worried?” but “what does this actually mean in practice?”

My son Orestes is a business litigation attorney at a local Miami firm. Not BigLaw. Not a legal tech startup. A real firm with real clients who walk through the door with vendor disputes, partnership breakups, and contract disagreements. He’d seen the headlines—Thomson Reuters down 18%, LegalZoom cratered nearly 20%, $285 billion in software market cap gone in 48 hours—and wanted to know what “restructure your workflow” actually looks like when you’re running a litigation practice.

I wrote about this from the banking technology side—what the repricing means for software vendors and per-seat pricing models. His question deserved a different answer. Not what it means for the technology industry. What it means for his practice. For his Monday morning.

What the Plugin Actually Does

Before we get to the “how,” it helps to know what we’re actually talking about.

Anthropic’s legal plugin is a set of capabilities bolted onto Claude that handle specific legal workflows. Here’s what it does, based on publicly available information:

- Contract review — reads agreements clause-by-clause and flags risks using GREEN/YELLOW/RED severity markers, highlighting non-standard terms and potential exposure

- NDA triage — categorizes non-disclosure agreements into buckets: standard (auto-approve), needs-counsel (flag specific clauses), or full-review (route to attorney)

- Vendor agreement checks — identifies non-standard terms in vendor contracts and compares them against common market terms

- Legal briefings — generates case law summaries and precedent research for specific legal questions

- Templated responses — produces first drafts for litigation holds, discovery responses, and data subject access requests

- Compliance workflows — walks through frameworks like GDPR, CCPA, and data processing agreements with structured checklists
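To make the first two bullets concrete, here is a minimal Python sketch of how severity flags could feed a triage decision. It is not the plugin's actual logic (the plugin is Markdown prompts and JSON configuration, not Python). The names `Severity`, `TriageBucket`, `ClauseFlag`, and `triage`, and the "worst flag decides the bucket" rule, are my own assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum
from typing import List


class Severity(IntEnum):
    """GREEN/YELLOW/RED markers, ordered so the worst flag compares highest."""
    GREEN = 1
    YELLOW = 2
    RED = 3


class TriageBucket(Enum):
    STANDARD = "standard"            # auto-approve
    NEEDS_COUNSEL = "needs-counsel"  # flag specific clauses
    FULL_REVIEW = "full-review"      # route to attorney


@dataclass
class ClauseFlag:
    clause: str
    severity: Severity


def triage(flags: List[ClauseFlag]) -> TriageBucket:
    """Hypothetical rule: the single worst flag decides the bucket."""
    worst = max((f.severity for f in flags), default=Severity.GREEN)
    if worst is Severity.RED:
        return TriageBucket.FULL_REVIEW
    if worst is Severity.YELLOW:
        return TriageBucket.NEEDS_COUNSEL
    return TriageBucket.STANDARD


def clauses_needing_attention(flags: List[ClauseFlag]) -> List[str]:
    """Return the clauses a human should read first (anything not GREEN)."""
    return [f.clause for f in flags if f.severity is not Severity.GREEN]


# Made-up example NDA with one routine clause and one risky one.
nda_flags = [
    ClauseFlag("Mutual confidentiality", Severity.GREEN),
    ClauseFlag("Five-year non-compete", Severity.RED),
]
print(triage(nda_flags).value, clauses_needing_attention(nda_flags))
```

Whatever rule a firm actually adopts, the point of writing it down this way is that the routing decision becomes explicit and reviewable instead of living in someone's head.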

The implementation itself is Markdown prompts and JSON configuration files. No compiled code. No proprietary model. Anthropic labels the whole thing a “research preview” and explicitly warns against using it for regulated legal work without attorney oversight.

Here’s the thing that matters more than any individual feature: this plugin exists. It’s open source under Apache 2.0. Anyone can fork it, improve it, and deploy it. The capability gap between “research preview” and “production tool” has been closing faster than most people in regulated industries expect.

What matters isn’t what it does today. It’s what its existence signals about tomorrow.

The Billable Hour Faces Structural Pressure

Let’s talk about the economics of a local litigation practice.

The revenue model is straightforward: attorneys multiplied by billable hours multiplied by hourly rate. A business litigation associate billing 1,800 hours a year at $350/hour generates $630,000 in revenue. The firm takes its cut, covers overhead, and the math works.

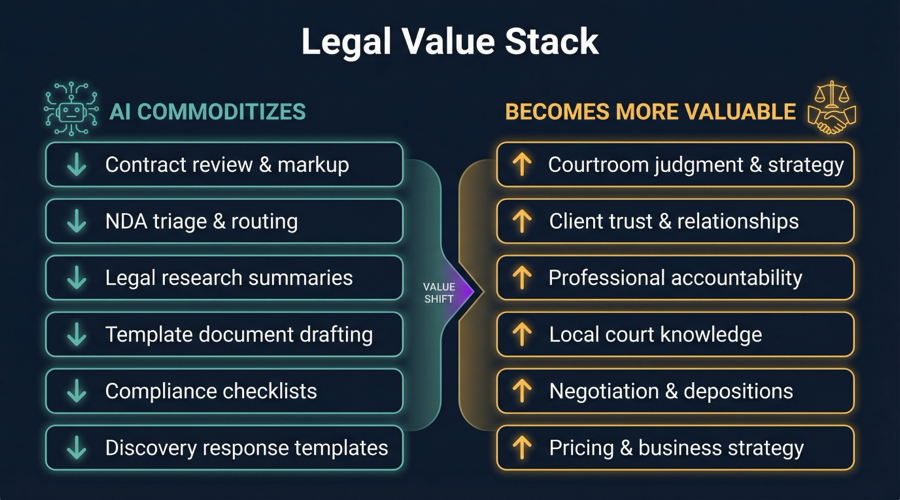

Now consider what happens when AI compresses the mechanical parts of that work. Contract review that took six hours now takes 45 minutes of review-plus-validation. A litigation hold letter that took two hours of drafting and research takes 20 minutes of editing an AI-generated draft. Discovery response templates that consumed an afternoon now arrive in minutes.

The pricing dilemma is immediate and uncomfortable: do you bill for the time it actually took (and watch revenue collapse), or do you bill for the value delivered (and fundamentally change your business model)?
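The arithmetic behind that dilemma is simple enough to sketch. The snippet below uses the figures from this section (1,800 hours at $350/hour, six hours compressed to 45 minutes, two hours compressed to 20 minutes). Applying the same $350 rate to each individual task is my assumption, and the function names are purely illustrative.

```python
def annual_revenue(attorneys: int, billable_hours: float, hourly_rate: float) -> float:
    """Revenue model from the text: attorneys x billable hours x hourly rate."""
    return attorneys * billable_hours * hourly_rate


def hourly_fee(hours: float, hourly_rate: float) -> float:
    """Fee for one task if the firm bills the time it actually took."""
    return hours * hourly_rate


def revenue_drop(hours_before: float, hours_after: float) -> float:
    """Fraction of the old fee that disappears when billing stays hourly."""
    return 1 - hours_after / hours_before


RATE = 350  # assumed: the associate's rate applied to each task

print(f"Associate revenue: ${annual_revenue(1, 1800, RATE):,.0f}")

# Contract review: 6 hours before, 45 minutes of review-plus-validation after.
print(f"Contract review: ${hourly_fee(6, RATE):,.2f} -> ${hourly_fee(0.75, RATE):,.2f}")
print(f"Drop if still billed hourly: {revenue_drop(6, 0.75):.1%}")

# Litigation hold letter: 2 hours before, 20 minutes of editing after.
print(f"Hold letter drop if still billed hourly: {revenue_drop(2, 20 / 60):.1%}")
```

Under these assumptions, staying on pure hourly billing gives up most of the old fee on each compressed task. That gap is the room a flat fee or value-based price has to be negotiated in.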

This is the same structural pressure I described in the repricing post when talking about per-seat SaaS collapse. The unit of value shifts from “time spent” to “outcome delivered,” and every business model built on the old unit faces a reckoning.

Here’s the counterintuitive part: BigLaw is actually more exposed than local firms. The BigLaw model is leveraged on billing junior associate hours at high rates for exactly the kind of work AI handles best—document review, contract markup, research memos. A firm with 200 associates billing $500/hour for work that AI can draft in minutes has a massive revenue hole to fill. A local firm with 8 attorneys who already compete on relationships and judgment has less to lose.

Judgment, Accountability, and the Courtroom

Now for what AI can’t touch. And this is where answering “restructure it how?” gets interesting.

Courtroom judgment is irreplaceable. Reading a judge’s temperament during a hearing. Knowing when to push for trial and when to settle. Adjusting deposition strategy in real-time based on a witness’s body language. These are skills built through years of practice in specific courtrooms with specific judges. No model trained on legal text captures this. It’s embodied knowledge—the kind that comes from standing in the room.

Client trust can’t be automated. When a business owner is facing litigation that could shut down their company, they want to look their lawyer in the eye. They want someone who knows their business, their industry, their risk tolerance. An AI can draft a brief. It can’t sit across from a client at 9 PM and say “here’s what I think we should do, and here’s why.”

Accountability has legal weight. Malpractice insurance exists because someone has to be responsible when legal advice goes wrong. That plugin? Apache 2.0 license. Zero liability. Zero accountability. When the Florida Bar asks who gave the advice, “Claude suggested it” isn’t an answer. The attorney’s name on the filing is. In regulated practice, accountability isn’t overhead—it’s the product.

Local knowledge compounds. Southern District of Florida judges have patterns. Local rules have quirks. The opposing counsel community in Miami-Dade has a reputation economy. Knowing that Judge X is strict on discovery deadlines, that opposing counsel Y always bluffs on motions to compel, that the mediator Z gets deals done—this is competitive intelligence that no model has access to.

This maps directly to the Trust Ladder framework—not all tasks deserve the same level of AI autonomy. Contract review sits at the lower rungs where AI can operate with light oversight. Courtroom strategy sits at the top where human judgment is non-negotiable. The attorneys who thrive will be the ones who know which rung each task belongs on.
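One way to make that sorting explicit is a small lookup table. This is a sketch under my own assumptions: the three rung names, the task list beyond contract review and courtroom strategy, and the rule that unknown tasks default to the top rung are all hypothetical, not a definition of the framework.

```python
from enum import IntEnum


class Rung(IntEnum):
    """Hypothetical three-rung ladder; a higher rung means more human control."""
    LIGHT_REVIEW = 1    # AI drafts, attorney spot-checks
    SHARED = 2          # AI drafts, attorney reworks line by line
    ATTORNEY_ONLY = 3   # human judgment is non-negotiable


# Illustrative placements. Only the first and last come from the text above;
# the two SHARED entries are assumptions.
TASK_RUNGS = {
    "contract review": Rung.LIGHT_REVIEW,
    "litigation hold draft": Rung.SHARED,
    "research summary": Rung.SHARED,
    "courtroom strategy": Rung.ATTORNEY_ONLY,
}


def required_oversight(task: str) -> Rung:
    """Unknown tasks default to the top rung, the conservative choice."""
    return TASK_RUNGS.get(task.lower(), Rung.ATTORNEY_ONLY)


def ai_may_draft(task: str) -> bool:
    """AI produces a first draft only below the attorney-only rung."""
    return required_oversight(task) < Rung.ATTORNEY_ONLY


for task in ("contract review", "courtroom strategy", "settlement negotiation"):
    print(f"{task}: {required_oversight(task).name}, AI drafts: {ai_may_draft(task)}")
```

The conservative default matters more than the individual placements: a task nobody has classified yet should get full attorney attention until someone decides otherwise.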

Why Smaller Firms Win This Transition

Here’s what I told my son that surprised him: his firm’s size is an advantage, not a liability.

No leverage model to protect. BigLaw’s entire economic engine runs on billing junior associate hours at senior rates for mechanical work. When AI handles that work, BigLaw has to reinvent its revenue model while carrying the overhead of hundreds of associates. A 12-person firm doesn’t have that structural burden.

Lower switching costs. Convincing 12 attorneys to adopt a new workflow is hard. Convincing 500 is a multi-year organizational change initiative. Local firms can experiment, iterate, and adapt in weeks rather than years.

Client relationships are already the product. BigLaw sells institutional prestige and bench depth. Local litigation firms sell “I know your business, I know your judge, I pick up the phone when you call.” That value proposition gets stronger when the mechanical work gets commoditized, not weaker.

Pricing flexibility. A local firm can experiment with flat-fee litigation packages, value-based pricing for contract review, and hybrid models without disrupting a $2 billion revenue machine. The compliance tax that burdens large institutions with regulatory overhead doesn’t hit local firms the same way—they can move faster on pricing innovation.

Lower compliance burden for AI adoption. A BigLaw firm adopting AI tools faces data governance reviews, client confidentiality audits, ethics committee approvals, and partnership votes. A local firm can start using open-source tools in a sandbox tomorrow and build calibration over weeks.

“Like Restructure It How?”

Here’s the concrete answer to his question—the playbook for a local litigation practice navigating this transition:

- Start using it now. The plugin is free, open source, and available today. Don’t wait for your bar association to issue guidance. Build your own calibration in a sandbox environment. Learn where the AI is strong (contract markup, research summaries) and where it hallucinates (novel legal arguments, jurisdiction-specific rules). The attorneys who start building this judgment now will have a two-year head start.

- Invest in what AI makes more valuable. When mechanical legal work gets cheaper, the premium on judgment-heavy work increases. Depositions, negotiations, courtroom advocacy, client counseling: these skills become more valuable, not less. Allocate professional development time toward the work that AI can’t replicate.

- Rethink pricing before your clients do. If a corporate client learns that contract review now takes 45 minutes instead of 6 hours, they’re going to ask why they’re paying for 6 hours. Get ahead of that conversation. Propose flat-fee packages for routine work. Position value-based pricing for high-judgment work. The firm that redesigns its pricing proactively earns trust. The firm that gets caught billing the old way loses it.

- Build your AI-assisted workflow. Map every recurring task in your practice to the Trust Ladder. Which tasks are mechanical enough for AI to handle with light review? Which ones require your full attention? Which ones sit in between? Build standard operating procedures that match AI involvement to task risk.

- Document your judgment calls. Every time you override an AI recommendation (change a risk flag from green to red, reject a suggested clause, modify a research summary), write down why. This documentation becomes three things: your training data for calibrating future AI use, your malpractice defense if a recommendation goes wrong, and your evidence of professional judgment that justifies premium billing.
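The last step above is the easiest to put off, so here is a minimal sketch of what an override log could look like in Python. The record fields, the JSON-lines file format, and the names `OverrideRecord`, `to_log_line`, and `append_to_log` are assumptions of mine, not a bar-approved template; the one rule it enforces is that every override carries a written reason.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class OverrideRecord:
    """One attorney override of an AI recommendation (hypothetical schema)."""
    logged_on: str          # ISO date, e.g. "2026-01-05"
    matter: str             # internal matter reference
    task: str               # e.g. "contract review"
    ai_recommendation: str  # what the tool suggested
    attorney_decision: str  # what the attorney did instead
    reason: str             # the "why", in the attorney's own words

    def __post_init__(self) -> None:
        # The whole point of the log is the reasoning, so reject blank reasons.
        if not self.reason.strip():
            raise ValueError("an override needs a written reason")


def to_log_line(record: OverrideRecord) -> str:
    """Serialize one record as a single JSON line."""
    return json.dumps(asdict(record), sort_keys=True)


def append_to_log(path: str, record: OverrideRecord) -> None:
    """Append the record to a JSON-lines file; earlier entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(to_log_line(record) + "\n")


# Made-up example entry.
example = OverrideRecord(
    logged_on="2026-01-05",
    matter="hypothetical-vendor-dispute",
    task="contract review",
    ai_recommendation="GREEN on indemnification clause",
    attorney_decision="RED",
    reason="Uncapped indemnity is outside this client's stated risk tolerance.",
)
print(to_log_line(example))
```

An append-only file is a deliberate choice here: a log meant to support a malpractice defense is more credible if old entries are never edited in place.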

What I Don’t Know

I owe my son—and anyone reading this—an honest accounting of what I can’t predict.

The timeline is genuinely uncertain. “Research preview” today, but the gap between preview and production-ready has been compressing across every AI application I’ve watched. It could be six months. It could be two years. Betting on “it’ll take longer than people think” has been a losing bet in AI for the past 18 months.

Regulatory guidance hasn’t caught up. The Florida Bar hasn’t issued guidance on AI at this capability level. The ABA’s existing guidelines address AI in general terms but not tools that can autonomously triage NDAs or generate compliance frameworks. The regulatory vacuum creates both risk and opportunity—risk for firms that adopt carelessly, opportunity for firms that develop principled approaches early.

The market crash may overstate magnitude but not direction. $285 billion in market cap evaporation in 48 hours is probably an overreaction in degree. Markets overshoot. But the direction—that AI compresses the value of mechanical legal work while increasing the premium on judgment—is structural. The correction may give back some of the losses. The underlying shift won’t reverse.

I’m a technologist, not a lawyer. I can analyze the technology, the economics, and the strategic implications. My son lives this daily. He knows things about running a litigation practice that I never will. The best advice I can give is filtered through a technology lens. The implementation has to come from practitioners who understand the constraints I can’t see from the outside.

What Happens Next Monday

He could have gotten a two-line text back. Something like “you’ll be fine, the plugin is just a research preview.” But “restructure it how?” deserved better than that. It deserved a thorough, precise answer—the kind you can actually act on Monday morning.

So I wrote this instead.

Download the plugin. Run it against a recent contract. See where it’s right, where it’s wrong, and where it’s dangerously close to right. Start building the judgment that separates “attorney who uses AI” from “attorney replaced by AI.”

The tools are changing. The job isn’t. At least not the parts that matter—the judgment, the accountability, the 9 PM text when a client’s business is on the line.

The billable hour is under pressure. But the attorney behind it? More valuable than ever. If they’re paying attention.

If you’re a practitioner navigating this shift, I’d like to hear your perspective. Find me on LinkedIn or X.