Agent as a Service Is Real. The Vendor Pitch Isn't.

Jensen Huang stood on the GTC 2026 stage and declared that “every SaaS company will become an Agent-as-a-Service company.” When I wrote about the convergent personal AI stack two weeks ago, I noted that quote as a signal worth testing. Fifty-seven percent of companies already have AI agents in production, according to G2’s enterprise survey. Gartner predicts over 40% of agentic AI projects will be canceled by end of 2027.

Both numbers are real. The architect’s job is understanding why both can be true simultaneously.

The Third Infrastructure Shift

We’ve seen this movie twice. On-prem to cloud, roughly 2006 to 2010. Monoliths to microservices, roughly 2012 to 2016. Now: human-first tools to agent-first primitives. SaaS scaled tool access: you got a dashboard and a login. Agent as a Service (AGaaS) scales execution capacity: you get an agent that does the work. The customer for the new infrastructure is the agent, not the human.

The parallels to what I described in the four-layer regulated AI stack are direct. There I defined four layers: foundation models, development tools, productivity tools, and orchestration. AGaaS collapses the middle layers: the agent consumes the foundation model and performs the productivity work. The orchestration layer becomes everything.

But “everything is an agent now” is today’s version of “everything needs to be microservices” from 2018. We know how that ended: sprawl, debugging nightmares, and a lot of Kubernetes consultants. Gartner itself estimates that only about 130 of the thousands of “agentic AI vendors” are real. The rest are agent-washing, rebranding chatbots and RPA as agents. Salesforce served 11.14 trillion tokens in a single quarter and closed over 22,000 Agentforce deals. The volume is real at the top. The fog is thick everywhere else.

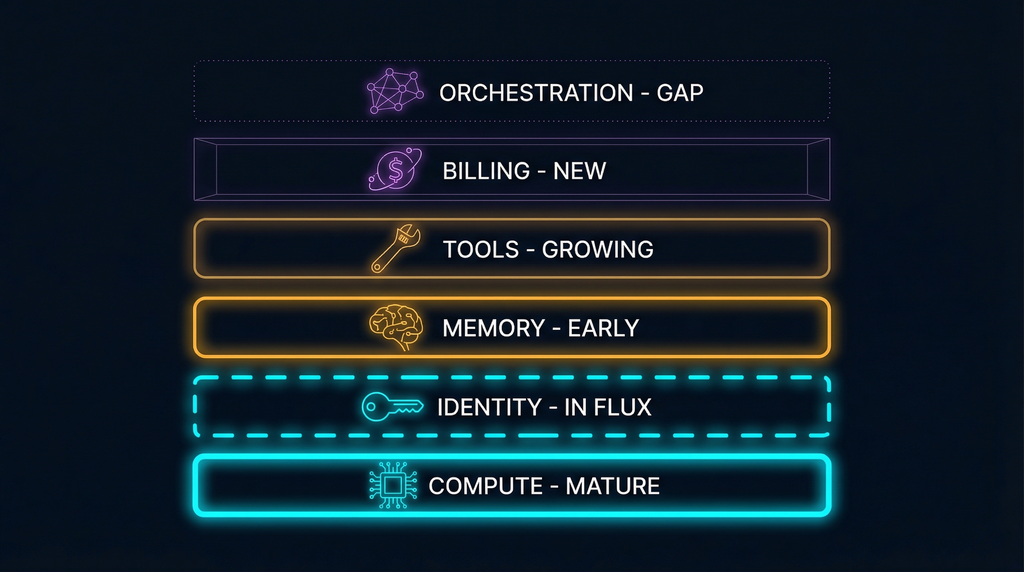

The Infrastructure Map: What’s Load-Bearing and What’s Scaffolding

An infrastructure stack is assembling in public with billions of capital behind it. Six layers, each at a different stage of maturity. The question isn’t whether the stack exists — it’s which layers are load-bearing infrastructure and which are temporary scaffolding that’ll be replaced in 18 months.

Compute and sandboxing is load-bearing. Agents need isolated execution environments — not your laptop, not production, not unsupervised. E2B raised $32M and uses Firecracker microVMs, the same technology behind AWS Lambda. Daytona raised $24M and bets on Docker containers with 90ms cold starts. Browserbase is valued at $300M and gives agents headless browser automation. The architectural split between ephemeral sandboxes (treat each session as disposable) and persistent state (agents come back later with context intact) is a design decision that cascades through every layer above it. It’s not a preference. It’s a bet on how long agent sessions will run and whether state matters.

Identity is still scaffolding. This is where the agent impersonation problem meets AGaaS head-on. I wrote about AI agents borrowing human credentials — committing code as you, sending emails as you, querying databases as you. That problem doesn’t get better when the agent becomes a service. It gets worse. Ninety percent of deployed agents hold more privileges than they need, and the identity layer that should scope those permissions barely exists.

AgentMail raised $6M from General Catalyst on the thesis that email is the identity primitive — every SaaS service needs one at signup, every verification flow routes through one. It’s a pragmatic bet. Email works because it’s everywhere, not because it’s the right protocol for agent identity. The serious attempt at agent-native identity is the A2A protocol, launched by Google and now a Linux Foundation project with over 100 partners including Salesforce, SAP, and ServiceNow. A2A treats agents as opaque — they collaborate without revealing internal logic, preserving IP and data privacy. It’s built on HTTP, JSON-RPC, and SSE — deliberately boring infrastructure standards, which is exactly what you want at this layer.
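To make “deliberately boring” concrete, here is a minimal sketch of the wire formats named above: a JSON-RPC 2.0 request envelope that would travel in an HTTP POST body, and a reader for `data:` lines from a Server-Sent Events stream. The method name `example/delegateTask` and the payload fields are hypothetical placeholders, not taken from the A2A specification, and the SSE reader assumes one `data:` line per event.

```python
import json
import uuid


def jsonrpc_request(method, params, request_id=None):
    """Build a JSON-RPC 2.0 request object as a plain dict."""
    return {
        "jsonrpc": "2.0",
        "id": request_id if request_id is not None else str(uuid.uuid4()),
        "method": method,
        "params": params,
    }


def sse_data_payloads(stream_text):
    """Decode the JSON payload of each `data:` line in an SSE stream.

    Simplification: assumes every event carries exactly one data line.
    """
    payloads = []
    for line in stream_text.splitlines():
        if line.startswith("data:"):
            payloads.append(json.loads(line[len("data:"):].strip()))
    return payloads


# Hypothetical method name and params, for illustration only.
request = jsonrpc_request(
    "example/delegateTask",
    {"text": "Summarize ticket 42"},
    request_id="req-1",
)
body = json.dumps(request)  # what the HTTP POST body would carry

# A made-up stream of two status updates.
updates = sse_data_payloads('data: {"state": "working"}\n\ndata: {"state": "done"}\n\n')
```

Nothing here needs a custom transport or a new serialization format, which is the point of choosing boring standards.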

Memory is early but real. In the context engineering blueprint, I defined memory architecture as Building Block 3: layered storage with different lifecycles, not a single vector store. The AGaaS memory layer follows the same pattern. Mem0 raised $24M and became AWS’s exclusive memory provider for its agent SDK. Their architecture is a hybrid — network graph plus vector database plus key-value store. Their core insight matches mine: memory isn’t saving the conversation. It’s active curation — storing what matters, deliberately forgetting what’s outdated, recalling only what’s relevant to the current task.
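A toy sketch of what “active curation” means in code, under heavy assumptions: tags stand in for real relevance scoring, a time-to-live stands in for a real forgetting policy, and none of the names below come from Mem0 or any other product.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class MemoryItem:
    text: str
    tags: FrozenSet[str]
    expires_at: Optional[float]  # None means keep until explicitly removed


class CuratedMemory:
    """Hypothetical curated store: keep what matters, drop what is outdated."""

    def __init__(self):
        self._items: List[MemoryItem] = []

    def store(self, text, tags, now, ttl_seconds=None):
        expires_at = None if ttl_seconds is None else now + ttl_seconds
        self._items.append(MemoryItem(text, frozenset(tags), expires_at))

    def forget_outdated(self, now):
        self._items = [
            m for m in self._items if m.expires_at is None or m.expires_at > now
        ]

    def recall(self, task_tags, now, limit=3):
        """Return only items that overlap the current task, best match first."""
        self.forget_outdated(now)
        wanted = set(task_tags)
        scored = [(len(m.tags & wanted), m) for m in self._items]
        relevant = [(score, m) for score, m in scored if score > 0]
        relevant.sort(key=lambda pair: pair[0], reverse=True)
        return [m.text for _, m in relevant[:limit]]


memory = CuratedMemory()
memory.store("Customer prefers email over phone", {"customer", "contact"}, now=0)
memory.store("Promo code SPRING valid this week", {"customer", "billing"}, now=0, ttl_seconds=60)
memory.store("Build server moved to new host", {"infra"}, now=0)

recalled_early = memory.recall({"billing", "customer"}, now=10)
recalled_late = memory.recall({"billing", "customer"}, now=120)
```

The infrastructure note never surfaces for a billing task, and the promo code disappears once its lifetime passes. That is the difference between curation and a transcript dump.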

The existential risk at this layer is real. Every frontier lab is building memory into the model itself. If memory becomes a model-level feature the way search became native to ChatGPT, the standalone memory layer dissolves. The counter-thesis is portability. No one should own your context. I believe the portability argument, but I also know that model providers have a track record of absorbing adjacent capabilities once they mature enough.

Tools and integration is growing but fragile. Without middleware, every builder independently manages OAuth flows, rate limits, API schema changes, and error handling for every tool — an N×M nightmare that scales badly. Composio raised $29M to solve this with managed integration, pre-built connectors, and observability on every tool call. But MCP standardization is the long-term threat to this layer. If MCP becomes truly universal, the value of managed integration middleware diminishes. The bet these companies are making: enterprises move slowly. Slow adoption creates the gap where the entire business lives.

Billing and provisioning is brand new. Stripe Projects just shipped the first credible trust layer for agent-to-service transactions. Database provisioning in 350ms. Scale-to-zero when inactive. Every design choice optimized for agent speed, not human dashboard clicking. What’s missing is equally telling: agent-to-agent payments, metered billing mapped to compute patterns, dynamic budget allocation where Agent A can spend $X without human approval but Agent B needs sign-off. This is FinOps for agents, and it barely exists as a category.
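The “Agent A can spend $X, Agent B needs sign-off” rule is easy to state and rarely written down. A minimal sketch of such a policy follows; the agent names, limits, and decision labels are all hypothetical, and this is not how Stripe or any other provider implements it.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class SpendPolicy:
    # Decimal("0") means every purchase needs a human sign-off.
    auto_approve_limit: Decimal


def review_purchase(policy, amount):
    """Decide whether an agent's purchase can proceed without a human."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount <= policy.auto_approve_limit:
        return "auto_approved"
    return "needs_human_signoff"


# Hypothetical per-agent policies.
POLICIES = {
    "agent-a": SpendPolicy(auto_approve_limit=Decimal("25.00")),
    "agent-b": SpendPolicy(auto_approve_limit=Decimal("0")),
}

decision_a = review_purchase(POLICIES["agent-a"], Decimal("19.99"))
decision_b = review_purchase(POLICIES["agent-b"], Decimal("19.99"))
```

A real FinOps layer would also track cumulative spend per period, which this sketch leaves out.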

Orchestration is the defining gap. Gartner reported a 1,445% surge in multi-agent system inquiries between Q1 2024 and Q2 2025. In The Stack Nobody Designed, I called multi-agent coordination “the emerging fourth pillar” — the pattern forming at the edges of the convergent architecture. It’s also the most immature layer in the AGaaS stack. Current tooling from LangGraph and CrewAI is framework-level, not infrastructure-level. Three agents in a notebook? Fine. Fifty agents across enterprise systems with failure recovery, cost controls, and audit trails? You’re hand-rolling everything.

This is structurally the same problem Kubernetes solved for containers. Not the compute itself — the scheduling, scaling, health checking, and lifecycle management. Whoever solves orchestration at infrastructure grade owns the most valuable position in the agent stack.
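For a sense of what “hand-rolling” means, here is a sketch of the smallest possible piece: retrying one agent task and writing every attempt to an audit log. The function and field names are my own, the flaky task is simulated, and a real orchestrator would add backoff, cost limits, and scheduling across many agents.

```python
from dataclasses import dataclass


@dataclass
class AuditEntry:
    agent: str
    attempt: int
    outcome: str  # "ok" or a description of the error


def run_with_recovery(agent, task, max_attempts, audit_log):
    """Run a task, retrying on failure and recording every attempt."""
    for attempt in range(1, max_attempts + 1):
        try:
            result = task()
        except Exception as exc:
            audit_log.append(AuditEntry(agent, attempt, f"error: {exc}"))
        else:
            audit_log.append(AuditEntry(agent, attempt, "ok"))
            return result
    raise RuntimeError(f"{agent} failed after {max_attempts} attempts")


def make_flaky_task(failures_before_success):
    """Simulated agent task that fails a fixed number of times, then succeeds."""
    remaining = {"count": failures_before_success}

    def task():
        if remaining["count"] > 0:
            remaining["count"] -= 1
            raise TimeoutError("tool call timed out")
        return "done"

    return task


audit_log = []
result = run_with_recovery(
    "billing-agent", make_flaky_task(2), max_attempts=3, audit_log=audit_log
)
```

Multiply this by fifty agents, add budgets and health checks, and you have the gap that no infrastructure-grade product fills yet.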

The Math That Changes Your Architecture

Three numbers every architect needs before making AGaaS decisions.

The reliability product. When your agent depends on five primitives in sequence, end-to-end reliability is the product of the five individual reliabilities, assuming they fail independently. 99% times 99% times 99% times 99% times 99% comes to about 95%. At 97% each, you’re at about 86%. You are compounding liabilities every time you add a layer. This is why monolithic agents keep beating multi-agent architectures in production: not because they’re better designed, but because they have fewer failure surfaces. The microservices lesson applies directly: distribute only when the coordination cost is lower than the complexity cost of keeping things together.
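The arithmetic is worth running for your own stack. A minimal sketch, assuming the steps run in series and fail independently (real dependencies often fail together, which changes the numbers); the function names are mine.

```python
from math import prod


def end_to_end_reliability(step_reliabilities):
    """Probability that every step in a serial chain succeeds.

    Assumes independent failures.
    """
    return prod(step_reliabilities)


def max_steps_for_target(per_step, target):
    """Largest number of equally reliable serial steps that still meets the target."""
    if not 0.0 < per_step < 1.0:
        raise ValueError("per_step must be strictly between 0 and 1")
    steps = 0
    while per_step ** (steps + 1) >= target:
        steps += 1
    return steps


five_at_99 = end_to_end_reliability([0.99] * 5)
five_at_97 = end_to_end_reliability([0.97] * 5)

print(f"{five_at_99:.1%}")  # 95.1%
print(f"{five_at_97:.1%}")  # 85.9%
print(max_steps_for_target(0.99, 0.95))  # 5
```

Turned around, the same math gives you a budget: at 99% per step and a 95% end-to-end target, the sixth dependency is the one you cannot afford.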

The cost ceiling. Production agents cost $3,200 to $13,000 per month in operating expenses — LLM API fees, vector database hosting, monitoring, prompt tuning, security. Inference alone accounts for 85% of the enterprise AI budget. Two startups shut down agentic products when edge case costs spiked from $0.15 to $7.50 per execution — a 50x increase that made the unit economics structurally impossible. Model routing — sending 80% of routine traffic to cheaper models — saves 60 to 80%, but you need that discipline from day one, not after the first bill shock.
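A back-of-the-envelope sketch of the routing math, under assumptions of my own: prices are normalized so a premium request costs 1.0, every request consumes the same number of tokens, and the cheaper model’s relative price is hypothetical.

```python
def blended_cost(premium_cost, cheap_cost, routed_fraction):
    """Average cost per request when a fraction of traffic goes to the cheaper model."""
    if not 0.0 <= routed_fraction <= 1.0:
        raise ValueError("routed_fraction must be between 0 and 1")
    return routed_fraction * cheap_cost + (1.0 - routed_fraction) * premium_cost


def routing_savings(premium_cost, cheap_cost, routed_fraction):
    """Fraction of spend saved versus sending every request to the premium model."""
    return 1.0 - blended_cost(premium_cost, cheap_cost, routed_fraction) / premium_cost


# Hypothetical normalized prices: one premium request costs 1.0 unit.
savings_cheap_at_10_percent = routing_savings(1.0, 0.10, 0.80)
savings_cheap_at_25_percent = routing_savings(1.0, 0.25, 0.80)

# The edge-case spike quoted above: $0.15 to $7.50 per execution.
edge_case_multiplier = 7.50 / 0.15

print(f"{savings_cheap_at_10_percent:.0%}")  # 72%
print(f"{savings_cheap_at_25_percent:.0%}")  # 60%
print(round(edge_case_multiplier))  # 50
```

Under these assumptions, routing 80% of traffic can never save more than 80%, and it saves 60% only if the cheaper model costs a quarter of the premium one or less. The quoted savings range may also reflect effects this sketch ignores.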

The autonomy gap. The Upwork study is the clearest data point: GPT-5 completed 30% of engineering tasks solo, rising to 50% with human input. Gemini 2.5 Pro: 17% solo, 31% with humans. The vendor pitch says “autonomous digital workers.” What actually works in production: tightly scoped, human-supervised agents with narrow tool access and robust monitoring. The gap between those two descriptions is where Gartner’s 40% cancellation rate comes from. Teams that deploy expecting autonomy and get supervised task execution aren’t failing on technology. They’re failing on expectations.

What to Actually Build Now

Not everything. Not nothing. Four investments that survive regardless of which AGaaS scenario plays out over the next two years.

Agent versioning as infrastructure. Agents aren’t code deployments. They’re four-dimensional versioning problems. You need independent version tracking across reasoning logic, prompt and policy rules, model runtime, and tool/API interfaces. Model drift causes 40% of production agent failures. Tool versioning causes 60%. Pin everything. This is the same discipline we learned from database migration versioning — except agents have four migration dimensions instead of one. A complete agent version identifier looks like customer-support-agent:ALV-2.3.1_PPV-4.1.0_MRV-claude-4-20260401_TAV-1.4.2. If that looks excessive, you haven’t debugged a production agent failure yet.
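A sketch of treating that identifier as structured data rather than a string. The mapping of ALV, PPV, MRV, and TAV to the four dimensions is my reading of the order in which they are listed, and the parser assumes no component value contains an underscore.

```python
from dataclasses import dataclass

# Assumed mapping from identifier prefixes to the four dimensions in the text.
_PREFIX_TO_FIELD = {
    "ALV": "logic",
    "PPV": "prompt_policy",
    "MRV": "model_runtime",
    "TAV": "tool_api",
}


@dataclass(frozen=True)
class AgentVersion:
    agent: str
    logic: str
    prompt_policy: str
    model_runtime: str
    tool_api: str

    def __str__(self):
        parts = [
            f"{prefix}-{getattr(self, name)}"
            for prefix, name in _PREFIX_TO_FIELD.items()
        ]
        return f"{self.agent}:" + "_".join(parts)

    def changed_dimensions(self, other):
        """Names of the dimensions that differ between two versions."""
        return [
            name
            for name in _PREFIX_TO_FIELD.values()
            if getattr(self, name) != getattr(other, name)
        ]


def parse_agent_version(identifier):
    agent, separator, rest = identifier.partition(":")
    if not agent or not separator:
        raise ValueError(f"missing agent name or ':' in {identifier!r}")
    values = {}
    for part in rest.split("_"):
        prefix, dash, value = part.partition("-")
        if prefix not in _PREFIX_TO_FIELD or not dash or not value:
            raise ValueError(f"unrecognized version component: {part!r}")
        values[_PREFIX_TO_FIELD[prefix]] = value
    missing = set(_PREFIX_TO_FIELD.values()) - set(values)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return AgentVersion(agent=agent, **values)


pinned = parse_agent_version(
    "customer-support-agent:ALV-2.3.1_PPV-4.1.0_MRV-claude-4-20260401_TAV-1.4.2"
)
candidate = parse_agent_version(
    "customer-support-agent:ALV-2.3.1_PPV-4.1.0_MRV-claude-4-20260401_TAV-1.5.0"
)
print(candidate.changed_dimensions(pinned))  # ['tool_api']
```

The payoff is the diff: when a production agent misbehaves, the first question is which of the four dimensions moved, and a structured version answers it in one call.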

Token economics from day one. Model routing. Prompt compression. Per-agent budgets with hard caps. Don’t learn cost discipline from a production incident. This is the cloud cost optimization playbook applied to a resource — tokens — that has even less predictable consumption patterns than compute. The CIO-level conversation is already shifting: enterprises are starting to evaluate agents like consultant contractors rather than software licenses. Budget accordingly.
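A hard cap is the simplest of these to build, so there is little excuse to skip it. A minimal sketch, with hypothetical agent names and limits; a production version would reset per billing period and persist usage somewhere durable.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would push an agent past its hard cap."""


class TokenBudget:
    """Hypothetical per-agent token budget with a hard cap."""

    def __init__(self, cap_tokens):
        if cap_tokens <= 0:
            raise ValueError("cap_tokens must be positive")
        self.cap_tokens = cap_tokens
        self.used_tokens = 0

    @property
    def remaining(self):
        return self.cap_tokens - self.used_tokens

    def charge(self, tokens):
        """Record usage, refusing any charge that would exceed the cap."""
        if tokens < 0:
            raise ValueError("tokens must not be negative")
        if self.used_tokens + tokens > self.cap_tokens:
            raise BudgetExceeded(
                f"charge of {tokens} exceeds remaining budget of {self.remaining}"
            )
        self.used_tokens += tokens


# Hypothetical agents and caps.
budgets = {
    "support-agent": TokenBudget(10_000),
    "research-agent": TokenBudget(50_000),
}

budgets["support-agent"].charge(9_500)
try:
    budgets["support-agent"].charge(1_000)
    blocked = False
except BudgetExceeded:
    blocked = True
```

The cap rejects the call before the tokens are spent, which is the whole difference between a budget and a bill.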

Governance-first deployment. Shadow agents before canary rollouts. Behavioral evaluations, not just unit tests. You need six test types: golden tests for deterministic validation, behavioral tests with human-scored rubrics, adversarial tests for jailbreak resistance, stress tests for 50-plus exchange conversations, regression tests for version-to-version comparison, and multi-agent compatibility tests for coordination and handoff validation. OneDigital treats agents like hires — intern to apprentice to full-time, with the same vetting rigor as human hiring. That mental model is right. You wouldn’t give a new contractor admin access on day one.
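One way to make the six test types binding is to gate promotion on them. The sketch below assumes three rollout stages; shadow and canary come from the text, while the final “full” stage and all names are my own placeholders.

```python
from enum import Enum


class EvalType(Enum):
    GOLDEN = "golden"
    BEHAVIORAL = "behavioral"
    ADVERSARIAL = "adversarial"
    STRESS = "stress"
    REGRESSION = "regression"
    MULTI_AGENT = "multi_agent_compatibility"


# Assumed rollout ladder; "full" is a placeholder for general availability.
STAGES = ("shadow", "canary", "full")


def failing_evals(results):
    """Eval types that are missing from the results or did not pass."""
    return [t for t in EvalType if not results.get(t, False)]


def next_stage(current, results):
    """Promote one stage only when all six eval types pass."""
    if failing_evals(results):
        return current
    index = STAGES.index(current)
    return STAGES[min(index + 1, len(STAGES) - 1)]


all_green = {t: True for t in EvalType}
missing_stress = {t: True for t in EvalType if t is not EvalType.STRESS}

promoted = next_stage("shadow", all_green)
held_back = next_stage("shadow", missing_stress)
```

A missing result counts as a failure here on purpose: an agent that was never stress-tested stays in shadow mode, the same way an unvetted hire stays off the admin list.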

Protocols over platforms. MCP for tool connectivity. A2A for agent-to-agent communication. These are becoming the HTTP and REST of the agent era. Every proprietary connector you build is a bet that the vendor survives and the protocol doesn’t standardize underneath them. In 18 months, some of today’s infrastructure layers won’t exist as separate products. Build on the protocols, not the products.

What I Don’t Know Yet

The determinism gap is real. You can’t write a unit test for an agent that reasons differently every time it runs. Six specialized test types help. They don’t eliminate the problem. The fundamental challenge of verifying non-deterministic systems is unsolved, and anyone who tells you otherwise is selling something.

Orchestration is where the defining company of this era will be built. It doesn’t exist yet at infrastructure grade. The 1,445% inquiry surge tells you the demand is there. The absence of a clear winner tells you the solution isn’t.

I keep coming back to the MIT Technology Review’s framing — the “Great AI Hype Correction.” Executives are talking less about autonomy and more about supervision and co-piloting. McKinsey reports roughly 90% of vertical use cases stuck in pilot. We’re early. But “early” doesn’t mean “fake.” The infrastructure is assembling. The question is speed, not direction.

The architects who navigate this well are the ones who understand which layers are load-bearing and which are scaffolding that’ll be replaced in 18 months. Version your agents. Budget your tokens. Govern before you scale. Build on protocols, not products.

Gartner’s 40% cancellation isn’t a prediction about the technology. It’s a prediction about the discipline. The technology is real. The discipline is optional. The survivors will be the ones who chose it.

Sources

Gartner: 40% Cancellation Prediction · G2 Enterprise AI Agents Report · Upwork/VentureBeat: AI Agent Solo Completion Rates · Salesforce Q4 FY2026 Earnings · AWS Bedrock AgentCore · Google A2A Protocol · Linux Foundation A2A Project · CIO: Agents-as-a-Service Enterprise Interviews · MIT Technology Review: The Great AI Hype Correction · Deloitte: AI Token Spend Dynamics · NJ Raman: Agent Versioning and Lifecycle Management · DEV Community: Agentic AI Cost Failures · Nate B Jones: Building AI Agents on Layers That Won’t Exist in 18 Months

The convergent architecture thesis is in The Stack Nobody Designed. The context engineering blueprint is here. The agent identity problem is here. The four-layer regulated stack is here.
