The Documentation Dividend: What AI Replacing Knowledge Workers Actually Looks Like Inside a Bank

If you’ve worked in banking IT long enough, you’ve sat through this incident review. A wire transfer batch fails. The root cause is a timeout threshold that got changed during a platform migration. The engineer who understood why the original value was set that way left months ago. Nobody documented it. The new team inherited a config file with a magic number and no context. They changed it because it “looked wrong.” It wasn’t wrong — it was a workaround for a downstream system’s retry behavior that nobody had written down either.

I’ve sat in that room more than once across my career. So when Daniel Miessler published a long piece arguing AI will replace knowledge workers — not because AI is brilliant, but because the bar is “on the floor” — I recognized the world he was describing. Most organizations run on undocumented processes, inconsistent execution, and institutional knowledge that walks out the door every two to three years. The $50 trillion global knowledge work economy is built on dysfunction that even mediocre AI can outperform.

He’s not wrong. But he’s describing the problem from 30,000 feet. Anyone who’s worked IT operations in banking knows this problem at ground level — the ServiceNow tickets that exist because one engineer’s departure erased knowledge nobody captured. Daniel’s frameworks are compelling. Here’s what they actually look like when you’re the one filing change tickets and sitting through CAB reviews.

The Bar Is on the Floor (I Can See It from My Desk)

Daniel’s first move is reframing the comparison. Don’t compare AI to your best engineer. Compare it to the average across your org — including the people doing bare minimum work, ignoring SOPs, and producing wildly different output for the same task. By that measure, AI clears the bar today.

Banks are interesting here because the compliance infrastructure creates an illusion of operational maturity. ITSM processes. Change management. Runbooks and control frameworks and audit programs. From the outside, it looks buttoned up. From the inside — from the perspective of anyone who’s worked enterprise architecture in banking — it looks like what Daniel describes.

The pattern repeats across every bank I’ve touched in my career: change failure rates that would embarrass a startup. Mean time to restore on P1 incidents measured in hours because the on-call engineer didn’t have context on the system that paged them. Runbooks that reference architecture diagrams from three years ago that no longer match production. SOPs that describe deployment processes nobody uses anymore. Half the incident retrospectives end with “improve documentation” as an action item, and the follow-through rate on those items is close to zero.

Sol Rashidi’s 200+ deployment postmortems confirm what Daniel describes from a different angle: 70% of failure is organizational, not technical. In banking IT ops, I’d push that number higher. The technology works. The Kubernetes clusters run. The CI/CD pipelines deploy. What breaks is the human knowledge layer — the context that tells you why that timeout is set to 30 seconds instead of 10, or why that batch job runs at 2 AM instead of midnight.

The Expertise Gap Is a ServiceNow Ticket Waiting to Happen

Daniel’s core insight: the gap between human expertise and AI capability isn’t an AI limitation. It’s a documentation failure. Knowledge is trapped in individual brains instead of captured in formats AI can use. The gap closes the moment you write it down.

In banking IT, this plays out in a specific, painful pattern that anyone in the industry will recognize. A senior engineer owns a critical system — say, a real-time payments gateway or an OFAC screening integration. They’ve been running it for eight years. They know every edge case, every vendor quirk, every undocumented dependency. They carry the on-call pager and resolve P1s in twenty minutes because they just know where the problem is.

Then they leave. Or they get promoted into management. Or they transfer to the digital banking team because it’s more interesting. And suddenly the team that inherits their system is flying blind. The runbook says “restart the service.” It doesn’t say “but check the downstream queue depth first, because if it’s above 10,000 the restart will trigger a cascade that takes out the batch settlement process.” That knowledge lived in one person’s head. Now it lives nowhere.

This isn’t just an engineering problem. The OCC’s heightened standards require banks to identify and mitigate key-person dependencies. FFIEC operational resilience guidance requires documented procedures for critical processes. When your payments engineer quits and the exception handling logic lives only in their head, that’s not just Daniel’s “expertise documentation gap.” That’s an audit finding waiting to happen.

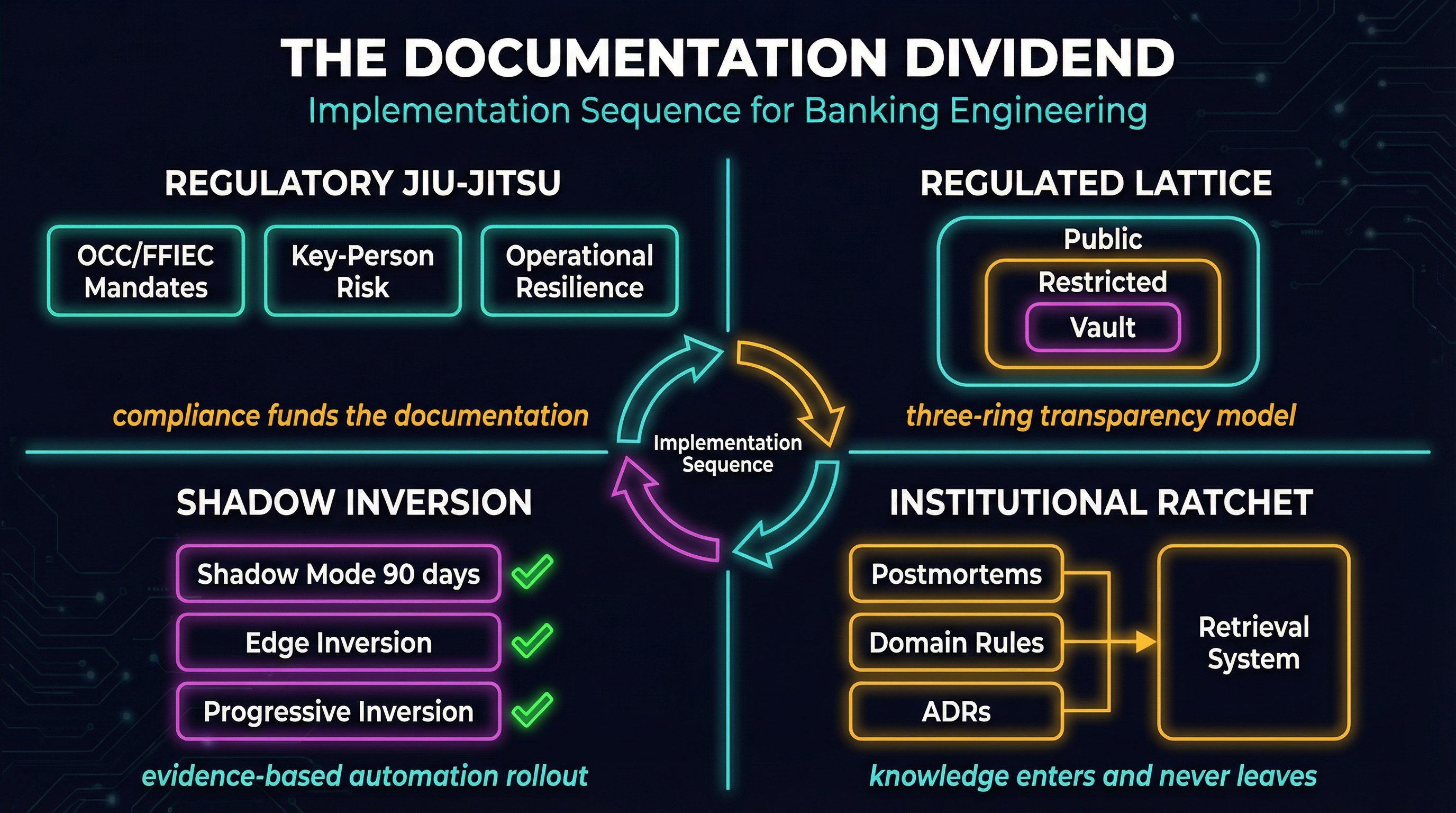

Which is exactly why I’d use what I’m calling Regulatory Jiu-Jitsu — using the compliance infrastructure that usually slows us down as the forcing function for AI readiness.

Here’s the tactical move: the budget for key-person risk mitigation already exists in every bank’s operational risk framework. The mandate for documented procedures already exists under FFIEC. Instead of pitching “AI readiness documentation” — which gets deprioritized the moment budgets tighten — you frame the work as fulfilling existing regulatory requirements. The key-person risk assessment says the wire transfer team has single points of failure? Good. Pull those engineers into structured knowledge capture sessions. Record their incident response decision trees. Document the vendor quirks and undocumented dependencies. Format it as machine-readable context, not just another Word doc in SharePoint.

You’re satisfying the OCC and building the knowledge base your AI agents will query. Same work, two outcomes. The compliance tax that usually slows everything down becomes the funding mechanism for the thing that speeds everything up.

I haven’t done this at scale yet. But the pitch writes itself — because you’re not asking for new budget. You’re asking to redirect work the risk team already knows it needs to do.

The Lattice, But Through ServiceNow and ADRs

Daniel proposes a “Lattice Architecture” — every organizational tier broadcasts its goals, SOPs, metrics, and work items through APIs. Full transparency from top to bottom. No more executives hiring McKinsey to tell them what their own company does.

I love the idea. But anyone who’s sat through data classification reviews in banking knows you can’t broadcast everything to everyone. Customer data has regulatory boundaries. Exam findings are confidential. Even incident details have sensitivity levels — a P1 involving a specific customer’s transaction can’t land in a general-access dashboard.

But you don’t need full transparency to get most of the value. What I’d build is a Regulated Lattice with three tiers that map to access levels banks already manage:

Tier 1: Open context. Department OKRs, team charters, architecture decision records, release calendars, non-sensitive runbooks, tech radar. Any engineer or AI agent queries this without a classification review. This is where 80% of the organizational context lives — and right now, most of it is scattered across Confluence pages that nobody maintains, Slack threads that disappear after 90 days, and one person’s head.

The concrete starting point is ADRs. Most engineering departments already have them, or should. But the typical bank ADR says something like “we chose Kafka over RabbitMQ for event streaming.” That’s not useful context for an AI agent triaging an incident at 3 AM. Expand the ADR to say: “We chose Kafka because the payments team needs exactly-once delivery guarantees for BSA transaction monitoring. RabbitMQ didn’t meet throughput requirements during the 2024 holiday load test. If Kafka consumer lag exceeds 5 minutes, BSA reporting may be delayed — escalate to compliance before restarting.” Now that ADR is a lattice node. Version-controlled, auditable, and queryable by an agent that needs to understand why this system exists, not just what it does.

Tier 2: Role-gated context. Incident details, system configurations, performance metrics, vulnerability scan results. Accessible to authenticated agents with RBAC matching what banks already enforce for contractors and offshore teams. An agent triaging a P2 gets access to relevant system configs. An agent drafting the weekly ops report gets dashboards. Neither gets customer data or regulatory correspondence.

Tier 3: Human-only. Customer PII, regulatory correspondence, exam findings, board materials. AI access only at Trust Ladder Level 1 — agent summarizes what it can see, human decides what to do with information from this tier.

You don’t need a platform build for this. You need to stop letting organizational context die in Slack threads and start putting it where agents — and new engineers — can find it.

Shadow Mode Before You Flip the Switch

Daniel’s most provocative move: flip the default from “humans + automation where needed” to “automation + humans where necessary.” The factory analogy — you wouldn’t add humans to a perfectly automated production line.

Try telling that to a Change Advisory Board. In banking IT, you need to prove the automation works before anyone lets you reduce human oversight. “Trust me, the agent handles it” gets you a polite smile and a twelve-week pilot proposal that ends in a PowerPoint.

So instead of fighting the CAB, I’d work with it. Build what I’m calling a Shadow Inversion — run the AI in parallel with existing processes and let the evidence do the convincing:

Phase 1: Shadow Mode (90 days). Pick three operational workflows — say, incident triage, change risk assessment, and standard code review. AI agents process the same inputs humans process, independently. Neither sees the other’s output. At the end of each week, compare. The agent classified this P3 as a P3. The human classified it as P3. The agent flagged this change as high-risk because it touches the payments database. The human flagged it as high-risk for the same reason.

You’re building a concordance dataset — the kind of evidence that actually moves a risk committee. And you’re closing the perception gap I wrote about in Everything Is Skill Issue. METR found developers believe AI makes them 20% faster when it actually makes them 19% slower. Shadow mode gives you the actual numbers for your team, your systems, your regulatory context. No vibes. Data.

Phase 2: Invert the edges. Where shadow mode showed greater than 95% concordance, flip to agent-first for the low-criticality tasks. Runbook formatting. Boilerplate change ticket descriptions. Test scaffolding. Standard code review comments that flag style violations. The agent handles them. A human spot-checks a sample. The ratio inverts — from “human does, agent assists” to “agent does, human verifies.”

Phase 3: Progressive inversion. Move up the criticality stack as evidence accumulates. Each tier gets its own evidence package for the CAB. Incident triage. Change risk scoring. Architecture review recommendations. Every step maps to a higher level on the agentic engineering maturity model. You’re not moving fast. You’re moving with evidence. The CAB can’t argue with a 90-day dataset that shows 97% concordance on incident classification.

The Ratchet That Never Forgets

Daniel describes what he calls the ratchet effect: every documented process, every captured piece of expertise makes all AI instances permanently smarter. Knowledge enters the pool and never leaves. Humans can’t compete — individual expertise takes years to build and vanishes when someone changes jobs.

Inside banking IT, the ratchet has a specific shape. Every P1 incident retrospective, every change failure analysis, every “lessons learned” that gets written up and filed in SharePoint where nobody reads it again. That knowledge exists. It’s just locked in a format that doesn’t compound.

What I want to build is an Institutional Memory API. Structured capture that turns dead retrospectives into queryable context:

Incident retrospectives get tagged. Not just a narrative in a Word doc — system affected, root cause category, resolution steps, controls impacted, escalation path that actually worked. When a new P1 fires on the payments gateway at 2 AM, the on-call engineer’s agent queries the Memory API: “Last time this system threw this error, the root cause was a vendor API change that bypassed our screening logic. Resolution required restarting the consumer with a specific flag and coordinating with compliance before resuming the batch. Time to resolve was 45 minutes. Here’s the runbook that worked.”

That’s the knowledge that walks out the door every time a senior engineer leaves. Captured, tagged, retrievable. The next on-call engineer doesn’t spend an hour guessing.

Code review domain rules get extracted. When a senior engineer catches something in a PR that only domain context explains — “this threshold needs to match the OFAC screening SLA, not the default timeout” — that comment becomes a domain rule in the Memory API. The agent queries it before generating code in that area. The senior engineer’s knowledge survives their eventual departure.

ADRs link to incidents. Not just “why we chose Kafka” but “the last time someone changed this Kafka configuration without reading the ADR, here’s the P1 that resulted.” Context that tells an agent — or a new engineer — not just what the system does, but what happens when you break it.

This is the reconciliation loop’s context store. The institutional memory that lets agents reconcile toward an ideal state based on real operational history, not just code syntax.

The compliance benefit — key-person risk mitigation — funds the engineering benefit — AI-ready institutional memory. Same work. Two dividends. That’s the documentation dividend.

The Senior Engineer Becomes the Domain Architect

Daniel’s vision of “Human 3.0” is about shifting from execution to vision. AI handles the doing. Humans handle the deciding.

In banking IT, Human 3.0 isn’t a philosopher. It’s the domain architect — the engineer who knows why the system works the way it does and can define the ideal state agents reconcile toward.

Think about how most banks structure engineering promotion criteria. Today, senior engineers advance by solving harder incidents faster, holding more system context in their heads, and writing more complex code. In the world I’m describing, they advance differently:

- Defining ideal states. Not “write the Terraform” but “define what a well-governed payments architecture looks like, including the operational constraints your examiner will ask about, and encode it in a format agents can verify against.”

- Building the ratchet. Running the knowledge capture sessions. Tagging the retrospectives. Extracting domain rules from code reviews. This is the work that makes every future engineer — and agent — more effective.

- Calibrating trust levels. Deciding which agent actions get Level 1 treatment (suggest and wait) versus Level 3 (execute within guardrails). That’s judgment work — and it’s the scarcest capability on any ops team in banking.

- Making “should we?” calls. The agent can tell you a change is technically safe and within policy. It can’t tell you whether the timing is right — whether deploying on Thursday before a holiday weekend is worth the risk, or whether the second-order effects on a downstream batch process justify waiting until Monday.

This isn’t demotion. It’s what senior engineers already do informally. The difference is making it the explicit career path instead of treating it as overhead that takes away from “real work.”

The Honest Part

I haven’t built most of this yet. These are patterns I’ve been thinking through based on years of watching the same problems repeat across banking IT. The Institutional Memory API is a schema concept. The shadow inversion is a proposal I’d bring to a risk committee. The Regulated Lattice starts with ADR expansion that any engineering team can begin tomorrow.

The shadow approach is slow. Ninety days of parallel runs while fintechs ship agent-driven ops in weeks. That’s a real competitive gap — unless you believe (as I do) that the evidence base is what prevents the “automated chaos” pattern that kills most AI deployments.

The Regulatory Jiu-Jitsu framing depends on having examiners who view AI-ready documentation as fulfilling key-person risk mandates. That interpretation is plausible under current OCC guidance but not guaranteed. Your mileage will vary with your examination team.

And the biggest risk is what banks always do with frameworks: adopt the language without doing the work. “We have a lattice” becomes a slide in a steering committee deck while the engineering department still can’t tell you where their runbooks live. “We’re automation-first” becomes a talking point while the CAB adds three more approval gates for agent-driven changes. The documentation dividend only pays out if you actually document.

Daniel’s right that the $50 trillion knowledge work economy is built on a foundation AI can outperform. Anyone who’s worked banking IT has seen it — incident reviews where the root cause is “knowledge that left with a person.” Runbooks that describe systems that no longer exist. The gap between what ITSM processes say happens and what actually happens at 2 AM when the pager goes off.

The banks that build the documentation infrastructure — the institutional memory, the expanded ADRs, the evidence-based trust inversion — will absorb more operational complexity with the same team. The banks that skip the organizational work and jump straight to headcount reduction will automate their chaos faster. And we already know how that ends.

The starting point doesn’t require permission or budget. Expand your ADRs. Tag your retrospectives with structured metadata. Build the pitch for shadow mode on incident triage. It’s not a transformation. It’s documenting what the industry should have documented years ago — and making it queryable by the agents that are coming whether we’re ready or not.