Automating Chaos Produces Automated Chaos

My manager handed me Sol Rashidi’s Your AI Survival Guide as a work assignment. I expected another AI hype manual — the kind where a consultant tells you to “embrace the future” and “move fast” without mentioning the organizational wreckage that usually follows. Instead, I got 200+ deployment postmortems from someone who’d been Chief Data Officer and Chief AI Officer across Fortune 500 companies for thirteen years. It was uncomfortably accurate.

Then I watched Nate B Jones argue the opposite — that AI should make companies expand ambition, not contract headcount — and realized they’re both right. They’re describing the same broken machine from different angles. Sol is underneath it, cataloging exactly which gears are stripped. Nate is standing back, asking why we keep feeding it more fuel instead of fixing the transmission. What neither of them says explicitly — but what their arguments demand when placed side by side — is that the surplus from AI-compressed execution should fund the organizational readiness that determines whether AI actually works.

The 70% Nobody Wants to Talk About

Sol Rashidi isn’t theorizing. Thirteen years. Over 200 AI deployments. CDO and CAIO roles at companies including Merck, Estée Lauder, and Bristol Myers Squibb. She’s been in the room where these things succeed and fail, and her numbers are bleak: 74% of AI initiatives stall at the MVP stage. 88% of proofs of concept never reach production. The overall failure rate sits around 80%.

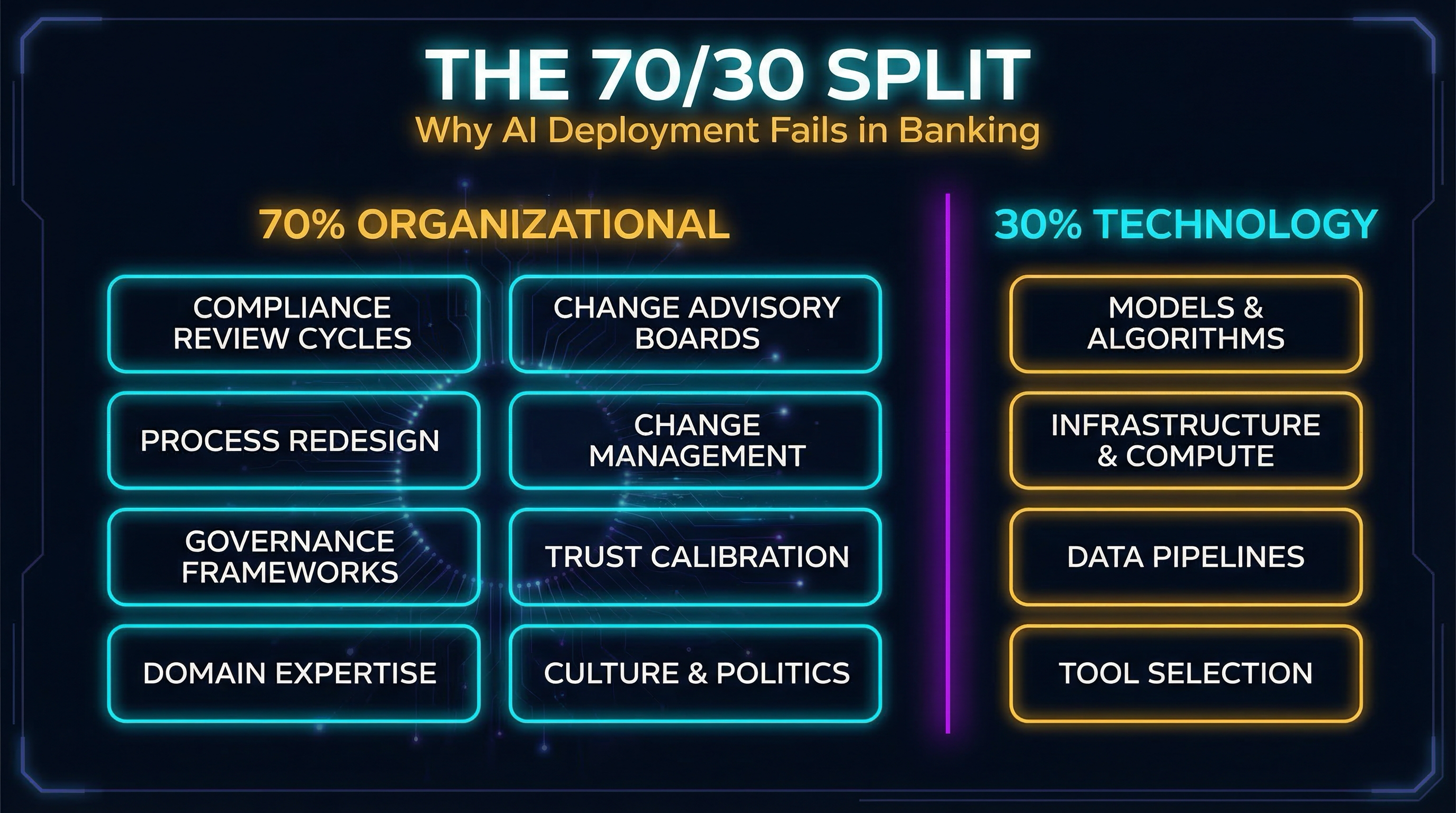

The natural assumption is that AI isn’t ready — the models aren’t good enough, the infrastructure isn’t mature, the technology needs another generation. Sol’s data says otherwise. “70% of the issues I’ve encountered when doing the deployments are all human-based.” Not model accuracy. Not compute costs. Not data quality — though that matters. People. Process. Politics. Culture.

In regulated environments like banking, the ratio may be even worse. Every AI initiative I’ve seen collide with reality hit the same walls: compliance review cycles measured in quarters, not sprints. Change advisory boards that treat model updates like mainframe releases. Governance frameworks that nobody built because the governance gap wasn’t visible until the AI vendor was already in procurement. The 70% problem isn’t a statistic — it’s the lived experience of anyone who’s tried to move AI from a demo into a production workflow inside a bank.

The Jevons Paradox for Intelligence

While Sol catalogs why AI deployments fail, Nate B Jones makes the economic case for why the response to cheaper AI execution should be more ambition, not less headcount.

His argument is the Jevons paradox applied to intelligence. When the cost of a resource drops dramatically, rational actors don’t use the same amount and pocket the savings; they use dramatically more. Coal-powered engines got more efficient, and the world used more coal, not less. The same logic applies to AI-compressed execution costs. When it costs a tenth as much to build, analyze, and iterate, the rational response is to build ten times as much, not to fire the people who used to do the building.

Nate points to Whoop as a case study: a company that doubled its headcount while investing heavily in AI, because cheaper execution meant they could pursue opportunities they’d previously shelved. And he names the bottleneck that remains after execution costs collapse: “The bottleneck shifts from can we build it to should we build it? And that’s a human question.” That’s the judgment-execution inversion articulated through economics. When building is cheap, judgment about what to build becomes the scarce resource.

Same Insight, Different Altitude

Sol and Nate are looking at the same phenomenon from different altitudes.

Sol at the operational level: fix the organization before you automate it. Her 200+ deployments prove that technology substitution without organizational readiness produces failure at scale. The models work. The infrastructure works. The humans and processes around them don’t.

Nate at the strategic level: expand ambition with the surplus from cheaper execution. When AI compresses the cost of building, companies should reinvest that surplus into doing more — more products, more markets, more capability. The Jevons paradox for intelligence means the demand for human judgment and expertise grows, not shrinks.

The bridge between them is what I’ll call the Organizational Readiness Dividend. When AI compresses execution costs — when what used to take a team of five now takes one person directing agents — the surplus isn’t free money to pocket through layoffs. It’s a budget that should fund the 70% problem. Process redesign. Change management. Governance frameworks. Trust calibration. Domain expertise development. The organizational work that Sol’s data proves is the actual bottleneck.

Companies that reinvest the dividend expand. Companies that pocket it by cutting headcount are doing exactly what Sol warns against — substituting technology for organizational capability. They’re automating the same broken processes with fewer people to notice when things go wrong. The irony is sharp: the technology works better than ever, and organizations are failing faster because they’re using it to amplify dysfunction instead of resolve it. “Automating chaos produces automated chaos.” Sol meant it as a warning. Most enterprises are treating it as a business plan.

What This Looks Like in Banking

Here’s a concrete example. A regional bank’s loan origination team processes applications through a workflow that involves manual document review, credit analysis, exception handling, and compliance checks. AI can compress the document review and initial credit scoring dramatically — what took two analysts a day now takes forty minutes with an AI pipeline.

The cost-cutting response: reduce the team from eight to four, keep the same workflow, celebrate the savings. The CFO reports a win. Six months later, exception rates climb because the remaining analysts are overwhelmed. Compliance gaps emerge because nobody redesigned the review process for AI-augmented output. The AI-generated credit summaries have subtle formatting issues that downstream systems don’t handle — but nobody catches them because the people who understood the downstream systems were in the first round of cuts. You’ve automated the chaos. The workflow moves faster now, which means it produces bad outcomes faster too.

The readiness-dividend response: keep the team, but redirect the freed capacity. Two analysts move into redesigning the exception handling workflow for AI-augmented decisions. One builds validation hooks for AI-generated credit assessments — the kind of human-in-the-loop verification that actually works because the human understands credit. One develops the training program so the rest of the team can evaluate AI output rather than reproduce it. The governance framework that the trust ladder demands gets built by people who know the domain. The team produces more with the same headcount. The workflow actually works at the new speed. You’ve invested the dividend.
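One way to picture a validation hook is as a gate between the AI pipeline and the downstream systems. The sketch below is illustrative only: the `CreditSummary` fields, the 300-850 score range, the debt-to-income bounds, and the routing labels are all assumptions made for this example, not details from Sol’s book or from any real bank’s pipeline. The automated checks only decide where a summary goes; the credit judgment stays with the analyst.

```python
from dataclasses import dataclass


@dataclass
class CreditSummary:
    """Hypothetical shape of an AI-generated credit summary."""
    applicant_id: str
    score: int             # assumed 300-850 scale
    debt_to_income: float  # ratio, e.g. 0.35 for 35%
    narrative: str         # free-text rationale written by the model


def validate(summary: CreditSummary) -> list[str]:
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    if not summary.applicant_id.strip():
        problems.append("missing applicant_id")
    if not (300 <= summary.score <= 850):
        problems.append(f"score {summary.score} outside 300-850")
    if not (0.0 <= summary.debt_to_income <= 1.5):
        problems.append(f"debt_to_income {summary.debt_to_income} out of range")
    if len(summary.narrative.strip()) < 20:
        problems.append("narrative too short to review")
    return problems


def route(summary: CreditSummary) -> tuple[str, list[str]]:
    """Send anything that fails a check to an analyst, not downstream."""
    problems = validate(summary)
    if problems:
        return ("analyst_review", problems)
    return ("downstream", [])


ok = CreditSummary("A-1", 710, 0.32, "Stable income, low utilization, no delinquencies.")
bad = CreditSummary("A-2", 910, 0.32, "ok")
print(route(ok))
print(route(bad))
```

The point of the sketch is the routing, not the specific rules. Someone who understands both credit and the downstream systems has to decide what belongs in `validate`, which is exactly the capacity the cost-cutting response removes.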

Sol’s framework for sequencing AI adoption maps directly onto this example. Her Criticality-versus-Complexity matrix says start with low-criticality, low-complexity use cases (document summarization, data extraction, report generation) before touching high-criticality decisions like credit underwriting. “If you only choose based on business value you end up in Perpetual POC Purgatory.” Everyone wants to automate the high-value decision. Nobody wants to do the organizational work required to automate it responsibly. Doing that work first, on lower-stakes use cases, is what earned maturity looks like: crawl-walk-run applied to AI adoption, not just technology selection.
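As a rough illustration of that sequencing, here is a minimal sketch that orders candidate use cases by criticality first and complexity second. The 1-to-5 scales and the individual scores are assumptions invented for this example; Sol’s matrix is a judgment exercise, and nothing here reproduces her actual scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    criticality: int  # 1 (low) to 5 (high); illustrative score
    complexity: int   # 1 (low) to 5 (high); illustrative score


def adoption_order(use_cases: list[UseCase]) -> list[UseCase]:
    """Put low-criticality, low-complexity work at the front of the queue."""
    return sorted(use_cases, key=lambda u: (u.criticality, u.complexity, u.name))


candidates = [
    UseCase("credit underwriting", criticality=5, complexity=5),
    UseCase("document summarization", criticality=1, complexity=1),
    UseCase("report generation", criticality=2, complexity=2),
    UseCase("data extraction", criticality=1, complexity=2),
]

for uc in adoption_order(candidates):
    print(f"{uc.name}: criticality={uc.criticality}, complexity={uc.complexity}")
```

Note what the sort key leaves out: business value. That omission is deliberate in this sketch, since ranking on value alone is the path Sol says leads to Perpetual POC Purgatory.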

Sol also insists on what she calls the “Day-in-the-Life” measurement: shadow the actual workflow, not the documented one. Every bank I’ve worked at has a gap between the process flowchart and what people actually do at their desks. AI that automates the documented workflow automates a fiction. AI that automates the actual workflow requires someone to go sit with the analysts for two weeks and understand what they really do. That’s organizational investment. That’s the readiness dividend at work.

Her observation that meaningful AI ROI takes six to seven years of operationalization aligns with banking’s platform lifecycle expectations. Banks don’t replace core systems in eighteen months. They don’t transform workflows in a quarter. The organizations that succeed at AI will be the ones who plan for the long game — and Sol’s timeline gives cover to architects fighting against quarterly pressure to show ROI.

Brain Rust and the Expertise Paradox

Sol’s most focused treatment of what she calls “brain rust” comes from her TEDxCharleston talk. The metaphor is visceral: just as metal corrodes when left exposed and muscles atrophy when unused, cognitive abilities erode through chronic over-dependence on technology. Not AI specifically — technology generally. But AI accelerates the problem by orders of magnitude.

The distinction she draws between masters and learners is the key. A software developer who has spent years mastering code can use AI to accelerate — they know what good code looks like, they can spot errors, they understand the reasoning behind the patterns. They’ve internalized the “why” behind the syntax, so when the AI generates something structurally wrong, they feel the wrongness before they can articulate it. A student who never learned to code can’t evaluate what the AI produces. They see syntactically valid output and assume it’s correct. “If you’re not good at code, how can you spot bad code? You can’t.” The master uses the tool. The learner is used by it.

This creates a bifurcation that Sol sees as the defining fault line of the future workforce: critical thinkers versus passive consumers. The people who maintain the discipline of deep learning — who build mental models from first principles and use AI to accelerate rather than replace their thinking — will pull further ahead. The people who outsource their cognition wholesale will become increasingly dependent on systems they can’t evaluate, troubleshoot, or override.

“We are short circuiting the learning process, and if we short circuit learning, we stop learning.” Sol is describing something specific here: not a vague concern about attention spans, but a structural threat to the expertise pipeline that organizations depend on. The WEF projects 80 million jobs displaced by the end of 2025. Goldman Sachs estimates 300 million impacted by 2030. AI capability improves by roughly 1% per day while most humans stagnate. The gap between those who actively maintain their expertise and those who outsource their thinking to AI is becoming a chasm.

This connects directly to the rest of the argument. If judgment is the moat — and the case for that is strong — and if AI deployment is 70% human, then brain rust destroys the exact capability that makes AI deployment succeed. The validation hooks that enable higher trust require humans who can actually validate. A human-in-the-loop who has outsourced their domain expertise to the AI they’re supposed to be overseeing isn’t a safety net — they’re a rubber stamp.

Nate’s framework reinforces this from the demand side. If the rational response to cheaper execution is expanding ambition, then demand for insight, judgment, and expertise is growing, not shrinking. But only for those who haven’t rusted. The expansion of opportunity and the erosion of capability are happening simultaneously — and the people who maintain sharp thinking while the world around them outsources it will capture disproportionate value.

Sol’s prescriptions are deliberately analog: productive boredom. Cook without recipes. Navigate without GPS. Write without AI. Consume less, create more, think deeper. “Artificial intelligence was created for us to outsource our tasks, not to outsource our thinking.” The discipline isn’t about rejecting AI — it’s about maintaining the cognitive infrastructure that makes AI useful. You can’t direct what you don’t understand. You can’t evaluate what you haven’t learned. The readiness dividend requires people who are ready, and readiness requires the active maintenance of expertise.

What I Don’t Have Figured Out Yet

Sol has thirteen years and 200+ deployments across Fortune 500 companies. I have one regulated environment and a personal AI infrastructure I’ve built to test these ideas. The gap matters.

The Organizational Readiness Dividend is prescriptive, not proven. It’s a framework I’m proposing — derived from Sol’s data and Nate’s economics, filtered through my own observations in banking — not something I’ve measured in production. But the banks I’ve observed are mostly stuck in what Sol calls POC Purgatory — cycling through proofs of concept without the organizational investment to operationalize any of them. I haven’t seen the readiness-dividend approach succeed at scale because almost nobody is trying it. The measurement problem is real: how do you quantify organizational readiness in a way that survives a CFO’s scrutiny? Sol’s data gives you failure rates. It doesn’t give you a readiness score.

There’s also the political reality Sol names directly: “I had to exchange popularity for progress.” Her willingness to tell executives that their organization isn’t ready for the AI initiative they just announced to the board is a career risk most enterprise architects won’t take. Her two-year operationalization timelines and six-to-seven-year ROI horizons conflict with quarterly earnings pressure. Every quarter, someone in leadership asks why the AI initiative hasn’t shown returns yet, and the honest answer, “because you haven’t done the organizational work,” is rarely the career-advancing one. Trading popularity for progress is easier when you’re a CDO with a track record of transformations than when you’re a mid-level architect trying to keep your seat at the table. I don’t have a clean answer for that tension. But I know that pretending it doesn’t exist is how organizations end up automating chaos and calling it innovation.

The Market for Ambition

“Use artificial intelligence perhaps to outsource your tasks but do not outsource your critical thinking.” Sol compressed the trust ladder into a single imperative. The judgment moat stated as a personal discipline. The readiness dividend stated as an organizational commitment.

Nate is right — the market for ambition is through the roof. AI is making execution cheaper every quarter. The question is whether your organization has the readiness to match it — whether you’ve invested the dividend in the people, processes, and governance that Sol’s thirteen years prove are the actual bottleneck. The companies that treat AI savings as a headcount reduction will automate their chaos. The companies that reinvest will find themselves with more capability than they’ve ever had and the organizational foundation to actually use it.

Sol’s book is Your AI Survival Guide. Nate’s argument is in the video linked above. If the 70/30 split resonated, the Trust Ladder provides a framework for calibrating which AI decisions need human oversight — and Judgment Is the New Moat explores why the human side of that equation is becoming more valuable, not less.