The Reconciliation Loop: Desired State Is Not New. The Executor Is.

I watched Daniel Miessler’s “The Great Transition” and had a familiar feeling. Not the “this is revolutionary” feeling. The “I’ve been doing this since 2017” feeling.

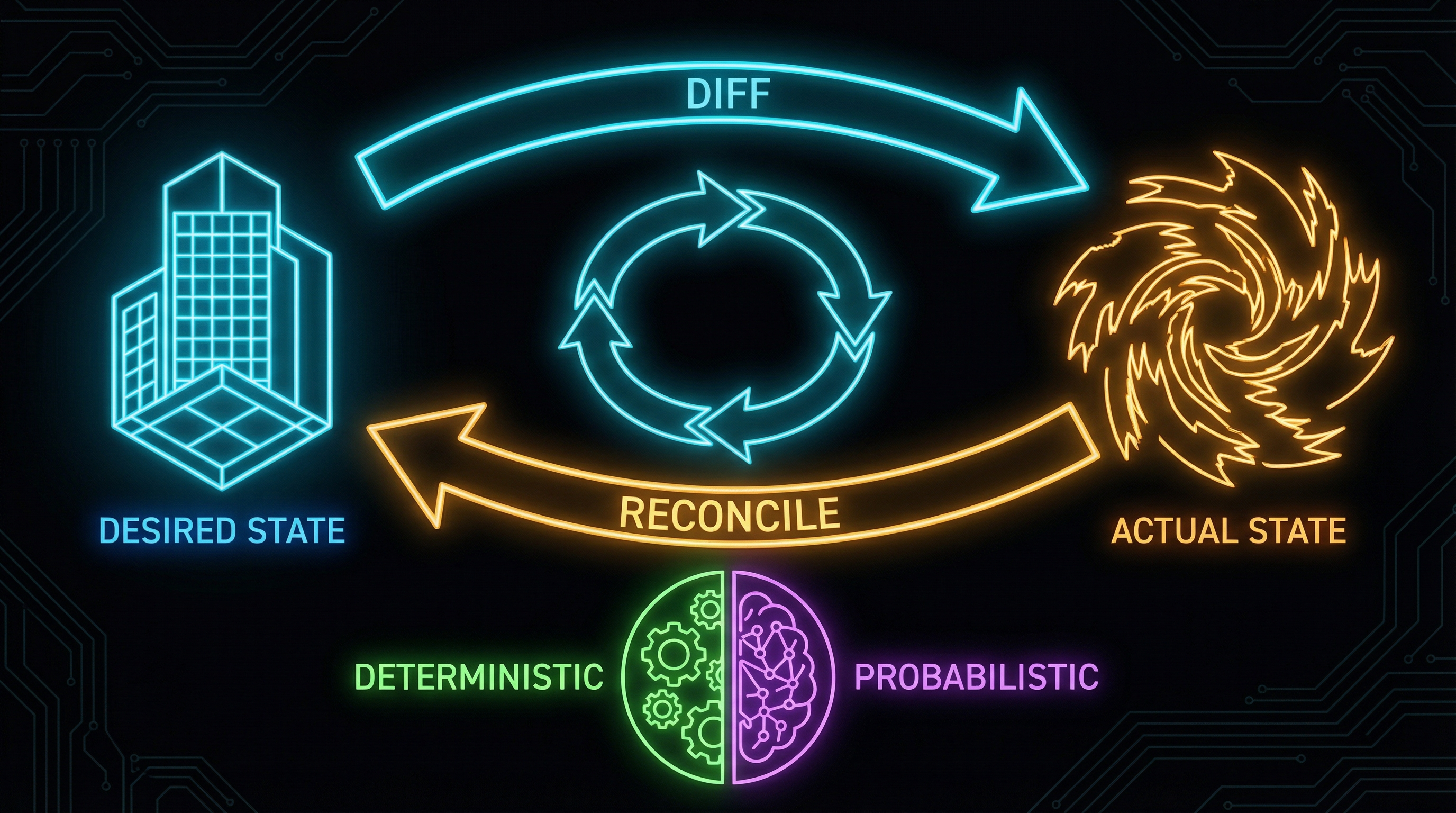

Define what ideal looks like. Measure where things actually are. Close the gap. Repeat.

Daniel calls it “ideal state management” — the core function of AI in enterprise. He positions companies as “graphs of algorithms” where agents continuously migrate actual state toward ideal state. It’s a compelling framework. It’s also something infrastructure engineers have been running for a decade under a different name.

We call it the reconciliation loop.

You Already Know This Pattern

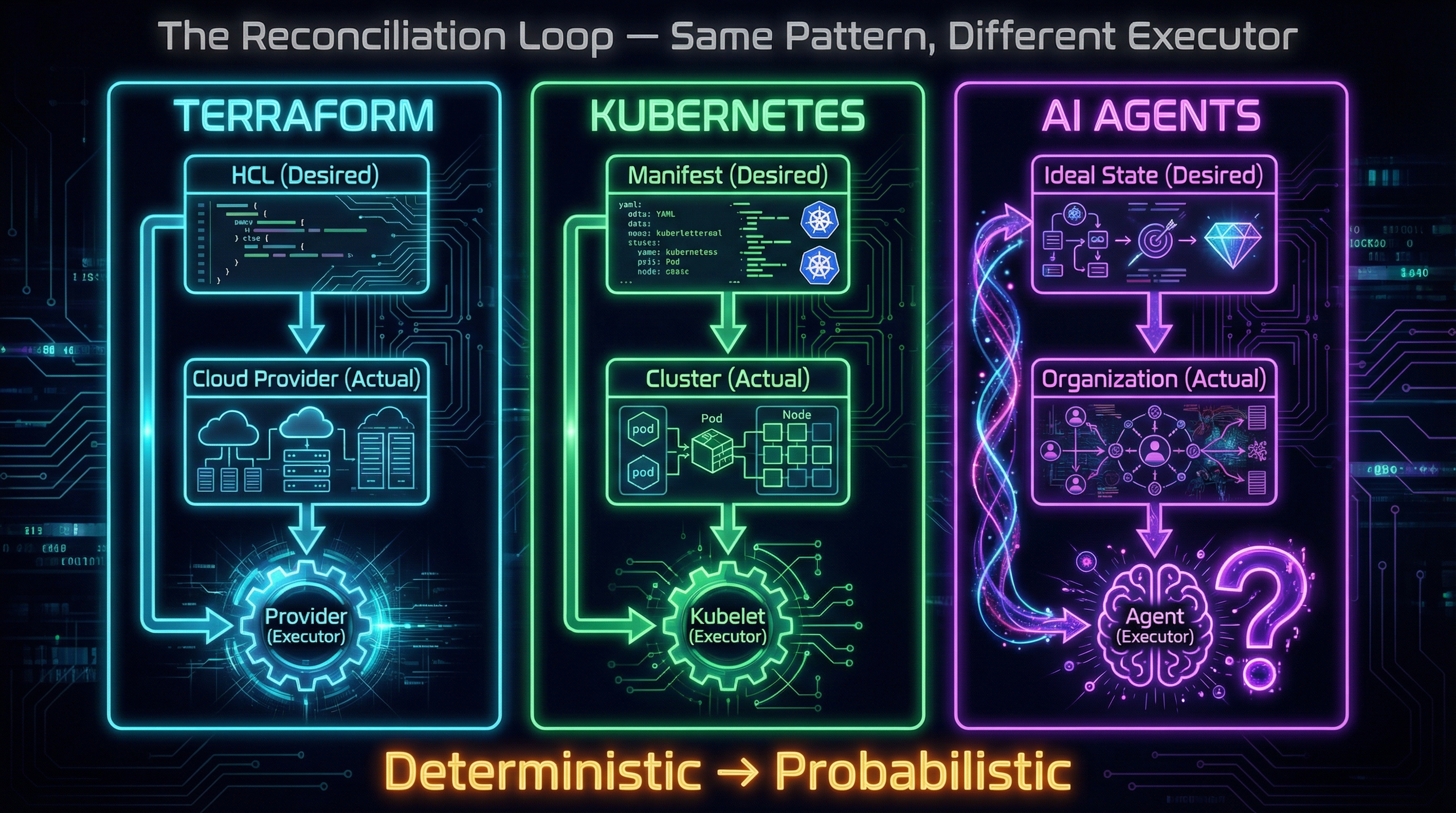

If you’ve touched infrastructure-as-code, you’ve lived inside this pattern. Three examples — same structure, different systems.

Terraform. You write HCL declaring desired state: these resources, this configuration, these relationships. You run terraform plan. The engine reads actual state from your cloud provider, diffs it against desired state, and shows you every gap. You run terraform apply. The provider closes each gap — creates, modifies, deletes — until actual matches desired. If someone manually changes something in the console, the next plan catches the drift. The executor is the Terraform provider. Deterministic. Scoped. Auditable.

Kubernetes. You submit a Deployment manifest: three replicas, this container image, these resource limits. The controller watches actual state. A pod crashes. The controller sees the delta (two replicas running, three desired) and creates a replacement pod, which the scheduler places on a node and the kubelet starts. The reconciliation loop runs continuously, not on demand. The executor is the controller working through the kubelet. Deterministic. Scoped. Auditable.

GitOps. FluxCD or ArgoCD treats a Git repository as the source of truth for desired state. The operator polls the repo, diffs it against the live cluster, and reconciles. Someone kubectl edits a deployment directly? With automated sync and self-healing enabled, the next sync cycle reverts it. Git wins. The executor is the GitOps operator. Deterministic. Scoped. Auditable.

Three systems, one pattern. Declared desired state. Observed actual state. A diff engine that detects gaps. An executor that closes them.
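Here is a minimal sketch of those four pieces in Python. It is not how Terraform, Kubernetes, or any GitOps operator is implemented; the names `Action`, `diff`, `apply`, and `reconcile` are my own, and state is modeled as a plain dictionary purely for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    kind: str                      # "create", "update", or "delete"
    name: str
    config: Optional[dict] = None  # target config; None for deletes


def diff(desired: dict, actual: dict) -> list:
    """Diff engine: list the actions that would close every gap."""
    actions = []
    for name, config in desired.items():
        if name not in actual:
            actions.append(Action("create", name, config))
        elif actual[name] != config:
            actions.append(Action("update", name, config))
    for name in actual:
        if name not in desired:
            actions.append(Action("delete", name))
    return actions


def apply(actions: list, actual: dict) -> dict:
    """Deterministic executor: does exactly what the diff says, nothing more."""
    result = dict(actual)
    for action in actions:
        if action.kind == "delete":
            result.pop(action.name)
        else:
            result[action.name] = action.config
    return result


def reconcile(desired: dict, actual: dict) -> dict:
    """One pass of the loop: diff, then execute."""
    return apply(diff(desired, actual), actual)


# Hypothetical state: one resource drifted, one missing, one unmanaged.
desired = {"web": {"replicas": 3}, "db": {"replicas": 1}}
actual = {"web": {"replicas": 2}, "cache": {"replicas": 1}}

plan = diff(desired, actual)
converged = reconcile(desired, actual)
```

Running `diff` again on the converged state returns an empty plan. That idempotence is what makes the deterministic version of the loop easy to trust.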

And critically: every executor in these systems is deterministic. It does exactly what the diff tells it to do. No judgment. No ambiguity. No improvisation. That’s not a limitation — it’s a design choice. When you’re reconciling infrastructure, you want the executor to be boring. Predictable. The kind of thing you can put in a runbook and hand to an auditor.

Daniel’s Insight: Same Loop, Different Executor

This is what Daniel gets right in “The Great Transition” — and what most people reading it will miss.

The pattern isn’t new. What’s new is two things happening at once.

The executor changed. Terraform providers are deterministic. They follow instructions. AI agents are probabilistic. They interpret. They infer. They improvise. Sometimes they hallucinate. Same reconciliation loop, fundamentally different trust characteristics. A Terraform provider will never decide that your desired state was probably wrong and do something creative instead. An AI agent might.

The scope expanded. Terraform reconciles server counts and network configurations. Kubernetes reconciles container states. Daniel is talking about reconciling everything — customer onboarding processes, compliance postures, vendor relationships, employee performance, organizational strategy. From “how many pods are running” to “is this company operating at its ideal state.” From infrastructure to the $50 trillion global knowledge worker economy.

Andrej Karpathy put it well: “Previous software was you can make anything. The next generation of software will be you can verify anything.” That’s the reconciliation loop restated. Define ideal. Verify actual. Close the gap. The difference is what you’re verifying and who — or what — is doing the closing.

This is exactly why frameworks like the Trust Ladder matter. When the executor was a Terraform provider, trust was binary — either the provider supported the operation or it didn’t. When the executor is an AI agent, trust needs calibration. How much latitude does a probabilistic executor get? That’s not a theoretical question. It’s an operational one that every enterprise adopting agentic workflows will need to answer.

What the Scope Expansion Actually Looks Like

Let me make this concrete with three scenarios from banking — the industry I work in.

Compliance monitoring. Desired state: zero SOX control deficiencies. Actual state: 47 open findings from the last internal audit. Today, a compliance analyst manually reviews each finding, maps it to control evidence, identifies what’s missing, and drafts a remediation plan. An agent can do most of that: read the finding, pull relevant control documentation, identify the evidence gap, and draft the remediation. The reconciliation loop runs monthly instead of annually. The analyst’s role shifts from data gathering to judgment: is this remediation actually sufficient, or is the agent missing context about how this control operates in practice?

Customer onboarding. Desired state: three-day account opening with full KYC verification. Actual state: eleven days average, 40% manual rework. An agent can trace the process, identify where applications stall — usually at document verification or beneficial ownership resolution — and propose workflow changes. It can draft exception-handling procedures for the edge cases that cause rework. The human decides which changes are safe to implement and which need regulatory review first.

Vendor risk management. Desired state: all critical vendors assessed quarterly. Actual state: 60% assessed, the rest overdue because nobody had time. An agent pulls vendor financial data, pre-populates risk assessment templates, flags material changes since the last review, and routes urgent cases to the right analyst. The analyst focuses on the 15% of vendors that actually need human attention instead of spending 80% of their time on data entry for the 85% that are routine.

Same reconciliation loop in every case. Declare what “done” looks like. Measure reality. Close the gap. But the executor isn’t a Terraform provider creating an aws_instance. It’s reading documents, making inferences, drafting communications. That’s a different trust profile entirely.
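To show that the three scenarios share one shape, here is a rough sketch that expresses each as a desired value, an actual value, and a gap. The numbers come from the scenarios above. The `Target` class and `gap_report` function are hypothetical names, and reducing each scenario to a single number is a simplification I am making for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    name: str
    desired: float
    actual: float
    unit: str

    @property
    def gap(self) -> float:
        """Absolute distance between actual and desired state."""
        return abs(self.actual - self.desired)


targets = [
    Target("open SOX control findings", desired=0, actual=47, unit="findings"),
    Target("account opening time", desired=3, actual=11, unit="days"),
    Target("critical vendors assessed", desired=100, actual=60, unit="percent"),
]


def gap_report(items: list) -> list:
    """Observation only: describe each gap without proposing how to close it."""
    return [
        f"{t.name}: {t.gap:g} {t.unit} from desired state"
        for t in items
        if t.gap != 0
    ]


report = gap_report(targets)
```

Measuring the gap is the easy part. Nothing in this sketch says how to close it, and that is exactly where the probabilistic executor comes in.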

Defining that ideal state — deciding what “good” looks like for your specific organization, your specific risk appetite, your specific regulatory context — is pure judgment work. An agent can execute toward a defined ideal. It cannot define the ideal for you. That’s why architects and domain experts matter more in the agent era, not less.

The Banking Problem: When the Executor Needs Permission

In Terraform, when plan identifies drift, apply fixes it. No committee meeting. No change ticket. No evidence package for the examiner. The executor just runs.

In a bank, nothing just runs.

Here’s what the reconciliation loop actually looks like in a SOX-regulated environment:

Change Advisory Board. Every agent-driven change to a production system needs a change ticket, risk assessment, and approval chain. The agent can identify the gap and draft the remediation. It cannot execute without human sign-off. For anything touching financial data, that sign-off involves at minimum the system owner, a risk representative, and someone from technology operations. The loop runs, but it runs through a governance gauntlet.

Audit trail requirements. It’s not enough for the agent to close the gap. It needs to explain why it chose that remediation, what alternatives it considered, and how it verified the result. The compliance team needs documentation that makes sense to an OCC examiner who has never interacted with an AI agent and doesn’t care about your model architecture. The paper trail that a deterministic executor generates naturally — “applied resource X with config Y” — is trivial. The paper trail a probabilistic executor needs to generate is not.

Segregation of duties. The agent that identifies the gap cannot be the same agent that approves the remediation. This is a fundamental SOX control. If the reconciliation loop runs inside a single agent context, you’ve violated segregation of duties by design. The loop needs at least two separate gates, one after detection and one before execution, each with a different authorization context.

Model risk management. If the agent is making decisions — not just drafting recommendations — the model itself falls under SR 11-7 and OCC 2011-12 model risk guidance. That means the reconciliation engine needs initial validation, ongoing performance monitoring, and periodic re-validation. Your Terraform provider never needed a model risk assessment. Your AI agent does.
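Of these four constraints, segregation of duties is the easiest to enforce in code. The sketch below is one possible shape, not a reference to any real change-management product. `ChangeRequest`, `SegregationOfDutiesError`, the identities, and the sample finding are all made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


class SegregationOfDutiesError(Exception):
    """The identity that detected a gap tried to approve its own remediation."""


@dataclass
class ChangeRequest:
    finding: str
    remediation: str
    detected_by: str                   # identity that found the gap
    approved_by: Optional[str] = None  # set only by a different identity
    audit_log: list = field(default_factory=list)

    def approve(self, approver: str) -> None:
        if approver == self.detected_by:
            raise SegregationOfDutiesError(
                f"{approver} detected this gap and cannot approve its remediation"
            )
        self.approved_by = approver
        self.audit_log.append(f"approved by {approver}")

    def execute(self) -> None:
        # Nothing runs without an approval on record.
        if self.approved_by is None:
            raise PermissionError("no approval on record; refusing to execute")
        self.audit_log.append(f"executed: {self.remediation}")


# Hypothetical finding and identities.
change = ChangeRequest(
    finding="access review evidence missing",
    remediation="attach signed access review to control record",
    detected_by="detection-agent",
)
```

Calling `change.execute()` before any approval raises `PermissionError`, and `change.approve("detection-agent")` raises `SegregationOfDutiesError`. Only an approval from a different identity unlocks execution, and every step lands in `audit_log`.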

I’m not listing these to say the pattern doesn’t work in banking. It does. But the reconciliation loop runs slower here, with more friction, and with mandatory human checkpoints at every stage. That’s the compliance tax applied to a new type of executor — and recognizing it early is the difference between a realistic implementation and a demo that never survives contact with your risk team.

A Practical Path: The Supervised Reconciliation Loop

So how does a bank actually implement this? Not in theory — in practice, in a way that survives an audit.

The answer is a supervised version of the same loop, with human checkpoints calibrated to risk level:

- Define desired state. This is the human layer. Humans define what “good” looks like, encoded in reviewable artifacts: policy documents, control matrices, process specifications. In regulated environments, agents do not define ideal state. Humans do. The agent’s job starts after the ideal is defined.

- Observe actual state. This is where agents add immediate, low-risk value. Reading systems, pulling metrics, comparing against baselines, generating gap reports. Low autonomy, high leverage. This maps to Trust Ladder Level 1: agent observes and reports, human decides.

- Generate remediation plan. The agent drafts the fix. The human reviews. The agent shows its work: what it found, what it recommends, what alternatives it considered, why it picked this approach. Trust Ladder Level 2: agent drafts, human approves. No execution happens here.

- Execute with approval. Human approves the plan. Agent executes within scoped permissions: specific systems, specific change types, specific time windows. Every action logged, every decision traceable. Change ticket auto-generated with the agent’s reasoning attached. Trust Ladder Level 3 for low-risk, routine changes. Level 2 for anything touching production financial systems.

- Verify and attest. Agent runs verification checks: did the change achieve the desired state? Are there secondary effects? Compliance reviews the attestation package. The loop closes with evidence, not just execution.
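The five steps above can be sketched as a single function with a human gate in the middle. This is a toy under stated assumptions: `supervised_reconcile` and `LoopResult` are hypothetical names, the `approve` callback stands in for a real human decision, and the evidence list stands in for a real attestation package.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopResult:
    executed: bool
    verified: bool
    evidence: list = field(default_factory=list)


def supervised_reconcile(
    desired: dict,                               # defined by humans, not the agent
    observe: Callable[[], dict],                 # agent reads actual state
    draft_plan: Callable[[dict, dict], dict],    # agent drafts, never executes
    approve: Callable[[dict], bool],             # human checkpoint
    execute: Callable[[dict], None],             # runs only after approval
) -> LoopResult:
    evidence = []
    actual = observe()
    evidence.append(f"observed: {actual}")
    if actual == desired:
        evidence.append("no gap found")
        return LoopResult(executed=False, verified=True, evidence=evidence)

    plan = draft_plan(desired, actual)
    evidence.append(f"plan drafted: {plan}")
    if not approve(plan):
        evidence.append("plan rejected by reviewer")
        return LoopResult(executed=False, verified=False, evidence=evidence)
    evidence.append("plan approved by reviewer")

    execute(plan)
    after = observe()  # verify by observing again, not by trusting the executor
    evidence.append(f"verified: {after}")
    return LoopResult(executed=True, verified=after == desired, evidence=evidence)


# Hypothetical system state, borrowed from the vendor risk scenario.
system = {"vendors_assessed_percent": 60}
desired = {"vendors_assessed_percent": 100}

result = supervised_reconcile(
    desired,
    observe=lambda: dict(system),
    draft_plan=lambda want, have: {k: v for k, v in want.items() if have.get(k) != v},
    approve=lambda plan: True,  # stand-in for a human sign-off
    execute=system.update,
)
```

If `approve` returns `False`, the function returns before `execute` is ever called, and the evidence list still records what was observed and what was proposed.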

This is slower than terraform apply. It’s supposed to be. The reconciliation loop in regulated environments is optimized for defensibility, not speed. When the examiner asks “why did this change happen, who approved it, and how did you verify it worked?” — every answer needs to be documented, traceable, and human-reviewed. That’s a feature, not a bug.

The reconciliation loop is not a new concept. It’s the oldest pattern in infrastructure automation — declare what you want, measure what you have, close the gap. What changed is the ambition. We used to reconcile server counts and container states. Now we’re talking about reconciling entire business operations against an ideal.

The people who will do this well are not the ones who just discovered the pattern from a blog post or a video. They’re the ones who have been running it for a decade in Terraform and Kubernetes and GitOps — and who understand what breaks when the executor stops being deterministic. Infrastructure engineers. Platform teams. Enterprise architects who know that “close the gap” always sounds simpler than it is.

We have HCL for infrastructure desired state. Kubernetes manifests for container desired state. GitOps for deployment desired state. What we don’t have yet is the equivalent for business operations — a declarative format for expressing organizational ideal state in a way that AI agents can reconcile against. The “Terraform for organizational processes” that nobody has built yet.

That’s the next frontier. And the people who build it will be the ones who learned from infrastructure that desired state is only as good as the format it’s encoded in — and the executor you trust to act on it.

If this resonated, you might also enjoy The Trust Ladder — a framework for calibrating how much autonomy to give AI agents based on task risk.