Context Engineering Is Infrastructure, Not a Skill

I open a Claude session and the system already knows my project context. It knows my preferences, my architectural decisions, why I made them. It remembers that the last time I touched this service I broke the batch settlement process because of a timeout change — and it knows not to suggest the same approach. It loads my steering rules, my active tasks, my feedback from past sessions. Before I type a single character, there are thousands of tokens of context already in the window.

This isn’t magic. It’s scaffolding. Seven building blocks of scaffolding, specifically.

I described context engineering as one of four disciplines in Prompting Split Into Four Skills — the practice of curating the right tokens an LLM sees. That post was about the discipline. This one is the blueprint.

The discourse treats context engineering as a new skill to learn. It’s not. It’s the latest name for a problem that’s been partially solved and partially failed for fifty years. Expert systems, the semantic web, knowledge graphs, enterprise knowledge management, RAG — each generation got part of it right and part of it wrong. The building blocks are now clear enough to define as infrastructure. Here’s what they are, why each matters, and what happens when you skip one.

The Fifty-Year Pattern

Context engineering feels new. The problem isn’t.

Expert systems (1970s-80s). MYCIN diagnosed blood infections. Cyc tried to encode all common-sense knowledge. They solved capture — domain experts hand-crafted rules and knowledge bases. They failed at retrieval. Matching was brittle. The systems couldn’t generalize beyond their encoded rules. The lesson: human-authored knowledge doesn’t scale. It’s too expensive to create and too rigid to query.

The Semantic Web (2001). Tim Berners-Lee’s vision: machine-readable knowledge everywhere. RDF, OWL, SPARQL — the full stack for structured, interlinked data. Solved the schema beautifully. Failed at capture entirely. The authoring burden killed adoption. Nobody outside academia was going to annotate their web pages with RDF triples. The lesson: if capturing knowledge requires specialized tooling, nobody will do it.

Knowledge Graphs (2012+). Google Knowledge Graph, Wikidata, Facebook’s entity graph. Succeeded where the semantic web failed by being pragmatic — automated extraction from existing sources, probabilistic linking, “good enough” beats “perfect.” But they’re centralized, expensive to maintain, and optimized for structured factual queries, not the kind of fuzzy, contextual retrieval AI systems need.

Enterprise Knowledge Management (1990s-2010s). Lotus Notes. SharePoint. Confluence. Notion. Every enterprise bought the pitch: capture institutional knowledge in a shared system. And they did — they captured it everywhere. Retrieval was terrible. Search was keyword-based. The knowledge existed but was unfindable. Anyone who’s tried to find a specific runbook in a bank’s SharePoint knows the feeling. The lesson: capture without retrieval is a write-only database.

RAG (2020+). Lewis et al. flipped the script with Retrieval-Augmented Generation. Retrieval-first: find relevant documents, inject them into the prompt, let the model synthesize. This was the breakthrough — but naive RAG fails in production for reasons we’ll get to.

Here’s the pattern across all five generations: each one solves capture and fails at retrieval, or solves retrieval and fails at capture. None of them solved the identity problem — WHO needs WHAT context WHEN. None of them solved the budget problem — fitting the right context into a finite window while leaving room for the actual work.

This generation — context engineering as infrastructure — has the building blocks to solve both sides simultaneously. Not because AI is magic, but because the failure modes of every previous generation are now well-documented enough to design around.

Why “Just Use RAG” Doesn’t Work

The most common advice I hear: stand up a vector database, chunk your documents, embed them, retrieve the top-k results, stuff them into the prompt. It works for demos. It fails in production for predictable reasons.

Chunking destroys context. A paragraph about a timeout configuration loses meaning without the preceding paragraph explaining why the timeout exists. Fixed-size chunking at 512 tokens doesn’t care about semantic boundaries. You get fragments that are technically retrieved but semantically incomplete.

Vector similarity is not semantic relevance. The most similar chunk in embedding space isn’t always the most useful chunk for the current task. A chunk about “database connection timeouts” might be more similar to your query than a chunk about “why we set the batch window to 30 seconds” — but the second one is what you actually need.

No identity awareness. The same query from a junior engineer and a principal architect should surface different context. The junior needs the how. The principal needs the why and the trade-offs. Without knowing WHO is asking, retrieval is one-size-fits-none.

No budget management. Stuffing every retrieved chunk into the context window wastes tokens on low-value context and crowds out high-value context. Even with million-token windows, retrieval quality degrades as you add more — the model has to find signal in an ever-growing haystack.

No access control. The “confused deputy” problem: the AI retrieves context the user shouldn’t see. In a banking environment, a customer service agent’s AI assistant shouldn’t surface internal risk ratings or exam findings. Without identity-scoped retrieval, you’re either too open (security failure) or too closed (useless).

I wrote about identity resolution as the data problem banks can’t solve. It’s also the first building block of any context system — because WHO determines WHAT.

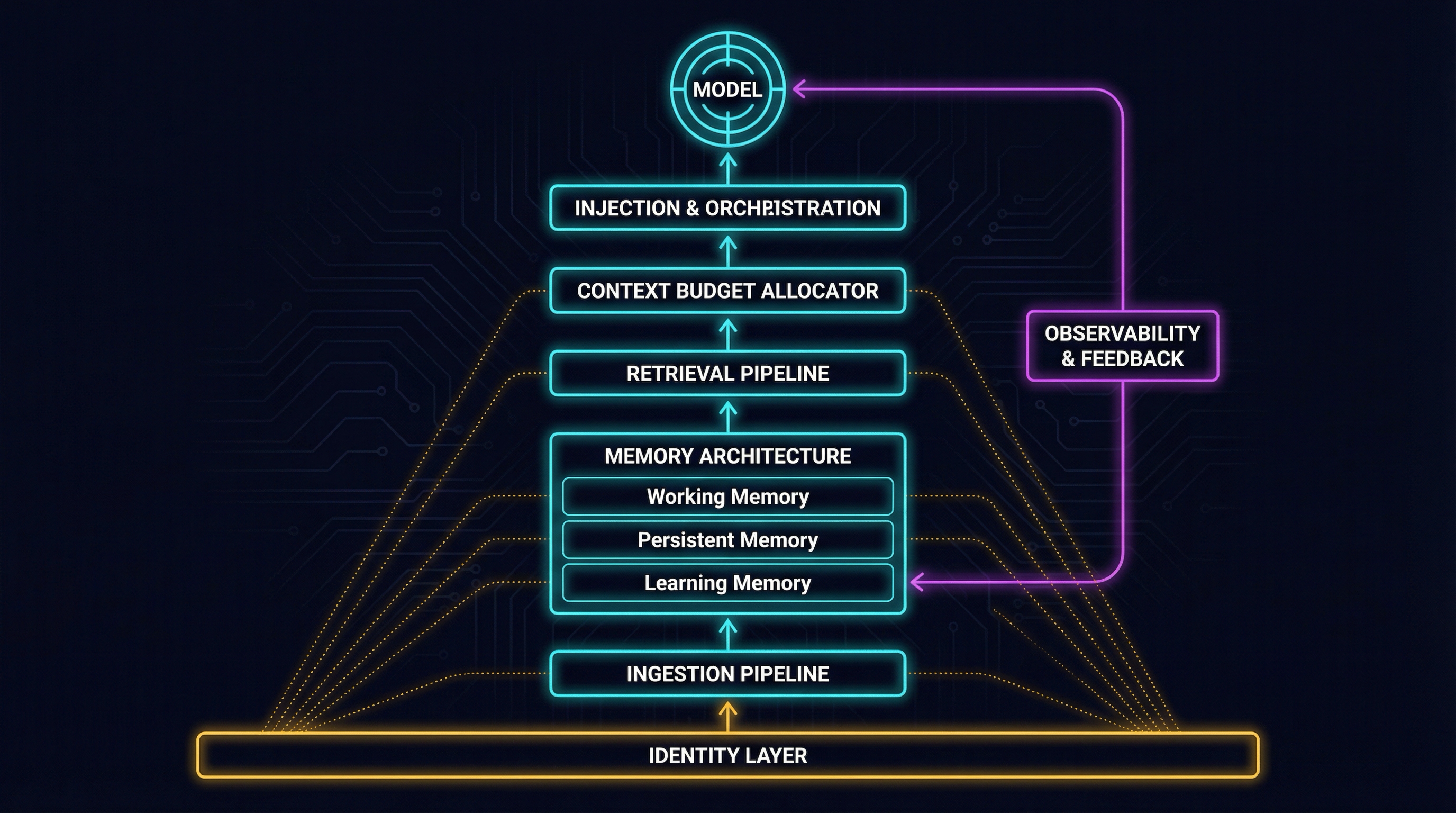

Seven Building Blocks

These are the building blocks I’ve identified from running a context system for months and studying why previous generations failed. Each one addresses a specific failure mode. Skip any one and the system degrades in a predictable way.

Building Block 1: Identity Layer. The system that determines WHO is requesting context and WHAT they’re authorized to see. Identity scopes retrieval. Without it, context systems are either too open or too closed. Session continuity across conversations depends on it. Progressive context enrichment — where the system gets more useful as it learns about you — depends on it. At the personal scale, this is a profile document loaded at session start. At the team scale, it’s RBAC on context stores. At the enterprise scale, it’s integration with identity providers and row-level security on vector databases. This is the problem I explored in Same Person, Five Systems — entity resolution determines which records belong to you across every system. Skip it, and your context system either surfaces everything to everyone or requires manual scoping every session.

Building Block 2: Ingestion Pipeline. The system that takes raw knowledge — documents, conversations, code, decisions, incidents — and transforms it into context-ready material. The semantic web failed because authoring was manual. Enterprise KM captured everything in formats retrieval couldn’t use. Ingestion must be automated and continuous. That means document-aware chunking (not fixed-size), where chunk boundaries respect semantic structure. It means contextual embeddings — Anthropic’s approach of prepending document-level context to each chunk before embedding, which reduced retrieval failures by 49%. It means metadata extraction: source, date, author, domain, confidence level. The documentation dividend pays out here — captured expertise becomes ingestion source material. Skip it, and it’s garbage in, garbage out. Bad chunking produces context that’s technically retrieved but semantically useless.

Building Block 3: Memory Architecture. Layered storage with different lifecycles, not a single vector store. Not all context has the same shelf life or access pattern.

- Working memory. Current session or task state. High churn, tight scope. What you’d hold in your head during a focused work block. Expires or archives when the task completes.

- Persistent memory. Project knowledge, architectural decisions, domain rules, preferences. Medium churn. Organized by topic, not chronology. Survives across sessions. This is where ADRs, design decisions, and domain conventions live.

- Learning memory. Reflections, feedback loops, pattern recognition across tasks. Low churn. The system’s accumulated wisdom — what worked, what failed, what to try differently. This is the ratchet that makes the system smarter over time.

Each layer can be a different store. Working memory in conversation state. Persistent in structured files or a knowledge base. Learning in a separate collection with temporal metadata. Skip it, and everything has the same priority — yesterday’s debugging session competes with last month’s architectural decision for context window space.

Building Block 4: Retrieval Pipeline. The multi-stage system that finds and ranks relevant context. Vector search alone is insufficient — the field moved from naive RAG to advanced RAG to modular RAG for a reason. The pipeline: query understanding (decompose intent into retrieval queries), hybrid retrieval (dense vector search plus sparse BM25 — Anthropic’s contextual retrieval paper showed hybrid outperforms either alone), re-ranking (cross-encoder models or LLM-as-judge to score actual relevance, not just embedding similarity), and deduplication (multiple retrieval paths surface overlapping content). For structured domains, GraphRAG adds knowledge graph structure that pure vector search misses — entities and relationships provide context that pairwise similarity cannot. Skip it, and low-relevance context wastes tokens, confuses the model, and produces worse outputs than no retrieval at all.

Building Block 5: Context Budget Allocator. The system that decides how to distribute finite context window tokens across competing sources. Even with million-token windows, you can’t and shouldn’t stuff everything in. Retrieval quality degrades with window size. Cost scales linearly. Latency increases. The budget problem is permanent. System prompt, retrieved context, conversation history, user input, and tool results all compete for space. Each has a minimum viable allocation and a diminishing-returns ceiling. Implementation: priority-based allocation where identity and steering rules get guaranteed slots, retrieved context gets budget proportional to query complexity, and history gets compressed when it exceeds its allocation. Claude Code’s auto-compaction — conversation compression when context grows too large — is this building block in action. MemGPT’s virtual context management — paging context in and out like an OS manages RAM — is the academic formalization. Skip it, and the model sees everything and prioritizes nothing.

Building Block 6: Injection and Orchestration. The system that assembles the final context payload and delivers it to the model. MCP (Model Context Protocol) lives here — it’s the emerging standard for delivering context to LLMs. But MCP is a transport protocol, not an architecture. You still need orchestration logic above it. Hook-based injection — firing at session start to load identity, steering rules, project context — is the pattern I’ve been running through PAI. The assembly order matters: system prompt first (identity, rules), then persistent context (project state, domain rules), then retrieved context (query-specific), then history (compressed), then user input. Priority decreases down the stack when budget is tight. Skip it, and context arrives unstructured — the model gets a wall of text with no signal about what matters most.

Building Block 7: Observability and Feedback. The system that monitors context quality, tracks what was retrieved, and feeds outcomes back into the pipeline. Without observability, you can’t debug bad outputs. Was the context wrong? Was it right but poorly ranked? Was the right context available but not retrieved? Log what was retrieved for each query. Track whether retrieved context was actually used in the output. Capture user corrections as negative feedback signals. Monitor retrieval latency, hit rates, and relevance scores. This building block feeds back into learning memory (Building Block 3) — the system improves because it tracks what works. Skip it, and the context system is a black box. When outputs degrade, you can’t tell whether the problem is ingestion, retrieval, ranking, budget, or injection.

The Honest Part

These building blocks are clear in theory. Implementation is where every generation before this one stalled.

The cold start problem. A context system with no content is useless. The ingestion investment is real — and it’s the same authoring burden that killed the semantic web. The difference is that LLMs can now assist with ingestion: auto-summarization, metadata extraction, chunk enrichment. But “LLMs help with ingestion” is still a hypothesis at scale, not a proven architecture. Someone has to curate the initial knowledge. Someone has to decide what’s worth ingesting.

Context poisoning is unsolved. If your knowledge base contains wrong information, the retrieval pipeline will faithfully surface wrong context. Adversarial content in shared knowledge bases — whether from a malicious actor or just an outdated document nobody archived — is a real attack vector. There is no production-grade solution for verifying context truthfulness at retrieval time. The best current approach is provenance tracking (knowing where context came from) and freshness scoring (knowing when it was last validated). Neither is sufficient.

The portability trap. I wrote about this in The AI Context Portability Problem — where context lives determines who controls it. Build your context system inside a vendor’s platform and your knowledge is locked in. Build it yourself and you carry the maintenance burden. Build it on open formats and you’ll spend time on integration that a managed platform would handle. This trade-off has no clean answer. The architecture should at least make the trade-off explicit rather than invisible.

Evaluation is primitive. Retrieval precision and recall metrics exist, but they don’t capture the question that actually matters: did the model produce better output because of this context? End-to-end evaluation of context quality — measuring whether the complete system produces more useful, more accurate, more contextually appropriate outputs — is still an open research problem. You can measure retrieval. You can’t easily measure whether retrieval made the final answer better.

Maintenance tax. Memory needs pruning — stale entries degrade retrieval quality. Embeddings need refreshing as models improve. Chunking strategies need revisiting as document types change. A context system that nobody maintains doesn’t stay neutral. It actively degrades output quality over time, as outdated context competes with current context for window space. Unmaintained context infrastructure is worse than no context infrastructure at all.

What I’m Building

The fifty-year pattern is clear. Expert systems, semantic web, knowledge graphs, enterprise KM, RAG — each generation got closer. This generation has the building blocks to build durable context infrastructure. Not because the problem is simpler, but because the failure modes are documented.

The seven building blocks are implementation-agnostic. You can build them with PAI and Claude, with LangChain and OpenAI, with a custom stack on Postgres and pgvector. The architecture matters more than the tooling. If you’re starting from scratch, here’s where I’d begin.

Start with memory architecture. Three layers is the minimum viable design. Most systems have one — the conversation buffer. Add persistent memory for project knowledge and learning memory for accumulated feedback. The difference between “the AI forgot what I told it last week” and “the AI remembers my architectural preferences” is this building block.

Add identity before scaling retrieval. WHO determines WHAT. Get identity scoping right before investing in retrieval infrastructure. A simple profile document loaded at session start — name, role, project context, preferences — already transforms the quality of AI interactions. Row-level security on your knowledge base can come later.

Instrument everything. You can’t improve what you can’t measure. Observability from day one, even if it’s just logging what context was retrieved and whether the output was useful. The feedback loop between observability and learning memory is what separates a static RAG pipeline from a system that gets smarter.

I’m building this. The seven building blocks are the architecture I’m implementing on top of PAI — Daniel Miessler’s Personal AI Infrastructure that I’ve been extending and customizing for months. The next post in this series will be the implementation journal: what I chose, what I built, what broke, and what I learned.

The engineers who treat context as infrastructure will build systems that get smarter over time. The ones who treat it as a skill will keep manually curating context every session and wondering why their AI doesn’t remember them.

Every generation thought they were building the knowledge system. The expert systems researchers. The semantic web architects. The enterprise KM vendors. The RAG pipeline builders. They all got pieces right. The pieces are finally clear enough to assemble. The question isn’t whether context infrastructure is worth building. It’s whether you’ll build it deliberately or stumble into something that partially works and quietly degrades.

I know which one I’m choosing.