Same Person, Five Systems: The Data Identity Problem Banks Still Can't Solve

A call center agent at a bank picks up a call. The ANI — automatic number identification, the caller ID the phone system feeds to the agent’s screen — matches one customer record. The caller’s name matches three. Their email matches a fourth record entirely. The last four of their SSN match two of those three — but not the one with the right phone number. The agent has thirty seconds to decide: is this one person or four?

Now consider the same problem happening silently across the rest of the bank. A lead form hits the CRM — is this a new prospect or an existing customer in a different line of business? The nightly ETL pipeline runs deduplication — did it merge two real people into one, or correctly collapse four records into a single entity? The AML system scans transactions — is it catching structuring across what it thinks are unrelated accounts, or missing it because the accounts are linked to name variants it doesn’t recognize as the same person?

I wrote about the authentication side of identity — OIDC, FAPI 2.0, consolidating dozens of portal logins into a single identity layer. That post was about proving you are who you claim to be. This one is about the other half: proving which records belong to you across every system that’s ever seen you. The data identity problem. And in the age of AI agents, these two worlds are about to collide.

$5.2 Billion in Proof

This isn’t a theoretical data quality discussion. US regulators have made the cost of fragmented customer identity brutally concrete.

TD Bank, $3.09 billion (2024). The largest Bank Secrecy Act penalty in US history. The core failure: 92% of the bank’s domestic transaction volume went unmonitored by its AML program. Not because the models were bad — because the identity data feeding those models was fragmented across systems that couldn’t agree on which transactions belonged to which customers. When FinCEN says “willful failure to maintain an adequate AML program,” that failure starts with identity resolution.

Citibank, $536 million (2020, amended 2024). The OCC’s consent order cited data governance as the primary violation — not a rogue trader, not a model failure. The bank couldn’t produce a reliable, consolidated view of its own risk exposures across business lines. The data existed. It just lived in systems that didn’t talk to each other, with customer records that didn’t link.

The industry pattern. Only 2 of 31 globally systemically important banks are fully compliant with BCBS 239 — the Basel standard for risk data aggregation — after more than a decade of trying. That standard essentially requires what MDM has always promised: a reliable, timely, accurate view of customer and counterparty data across the enterprise. The fact that 29 of the world’s largest banks still can’t deliver this tells you something about the difficulty of the problem.

FinCEN’s 2026 modifications to the Customer Due Diligence rule raise the bar further, requiring persistent tracking of customer relationships and beneficial ownership across the lifecycle — not just at onboarding. Regulators don’t care whether you call the program MDM, Customer 360, entity resolution, or something a consulting firm invented last quarter. They care whether you can answer one question: who is this person across all your systems?

The Problem That Gets Renamed Every Decade

The banking industry has been trying to answer that question since the mainframe era. The terminology changes every cycle. The underlying problem doesn’t.

In the 1970s through the 1990s, banks maintained a Customer Information File (CIF) — a COBOL-era construct sitting on IMS or VSAM that mapped account numbers to customer records. The CIF was account-centric. If you had a checking account, a savings account, and a mortgage, you might appear three times. The CIF tried to link them. It worked reasonably well when one branch served one geography and products were simple.

By the early 2000s, as banks consolidated through M&A and product lines multiplied, Gartner coined Customer Data Integration (CDI) and then Master Data Management (MDM). META Group published its first MDM definition in December 2004. Gartner’s first CDI Magic Quadrant appeared in April 2005. IBM acquired DWL (a Java-based MDM hub out of Toronto) in 2005 and Initiate Systems (probabilistic matching) in 2010. Informatica bought Siperian for $130 million in 2010. The pitch was the same as the CIF, just with Java instead of COBOL: create a “golden record” — a single, authoritative representation of each customer.

The mid-2010s brought Customer Data Platforms (CDPs), which repackaged identity resolution for marketing teams who didn’t want to wait for IT to finish the MDM project that had been running for three years. Then the data engineering community adopted entity resolution as the preferred term — cleaner, more precise, less burdened by the MDM vendor baggage.

Same problem rebranded every decade, tracking organizational power shifts: IT owned the CIF. Compliance drove the MDM investment. Marketing bought the CDP. Data engineering now runs entity resolution pipelines. Each generation acts like they invented the question. They didn’t.

The “golden record” sounds simple. In practice, it requires organizational consensus on what a customer is — and retail banking, commercial lending, and wealth management have legitimately different answers. A retail customer is a person with deposit accounts. A commercial customer is an entity with a credit facility, multiple authorized signers, and subsidiary relationships. A wealth client is a household with a portfolio allocation strategy. Forcing these into a single canonical record creates a lowest-common-denominator view that satisfies nobody.

M&A makes it worse. Every acquisition brings another customer master with different schemas, different quality standards, and different definitions of “active.” I’ve seen banks running five years post-merger that still haven’t consolidated customer data — not because they lack technology, but because the business lines can’t agree on survivorship rules for conflicting records.

Three architectural patterns have emerged for dealing with this:

Hub-Spoke (centralized). A physical golden record in a central MDM system. Highest data quality when it works. Twelve to twenty-four months to implement. Requires sustained organizational authority that most banks can’t maintain across leadership changes and budget cycles.

Registry (federated). A cross-reference layer that maps identifiers across source systems without physically moving data. Fastest to deploy — three to six months. But data quality depends entirely on the source systems, and you’re building an index over records you don’t control.

Coexistence (hybrid). Local masters in each business line with bidirectional synchronization to a central hub. Most realistic for large banks with legacy constraints. Also the most complex to operate — “synchronization” is the word where every MDM project stalls.

Gartner abandoned the Magic Quadrant for MDM entirely, switching to a Market Guide. The market is too fragmented to rank. That tells you something about the state of the problem.

The Architecture Nobody Draws on One Diagram

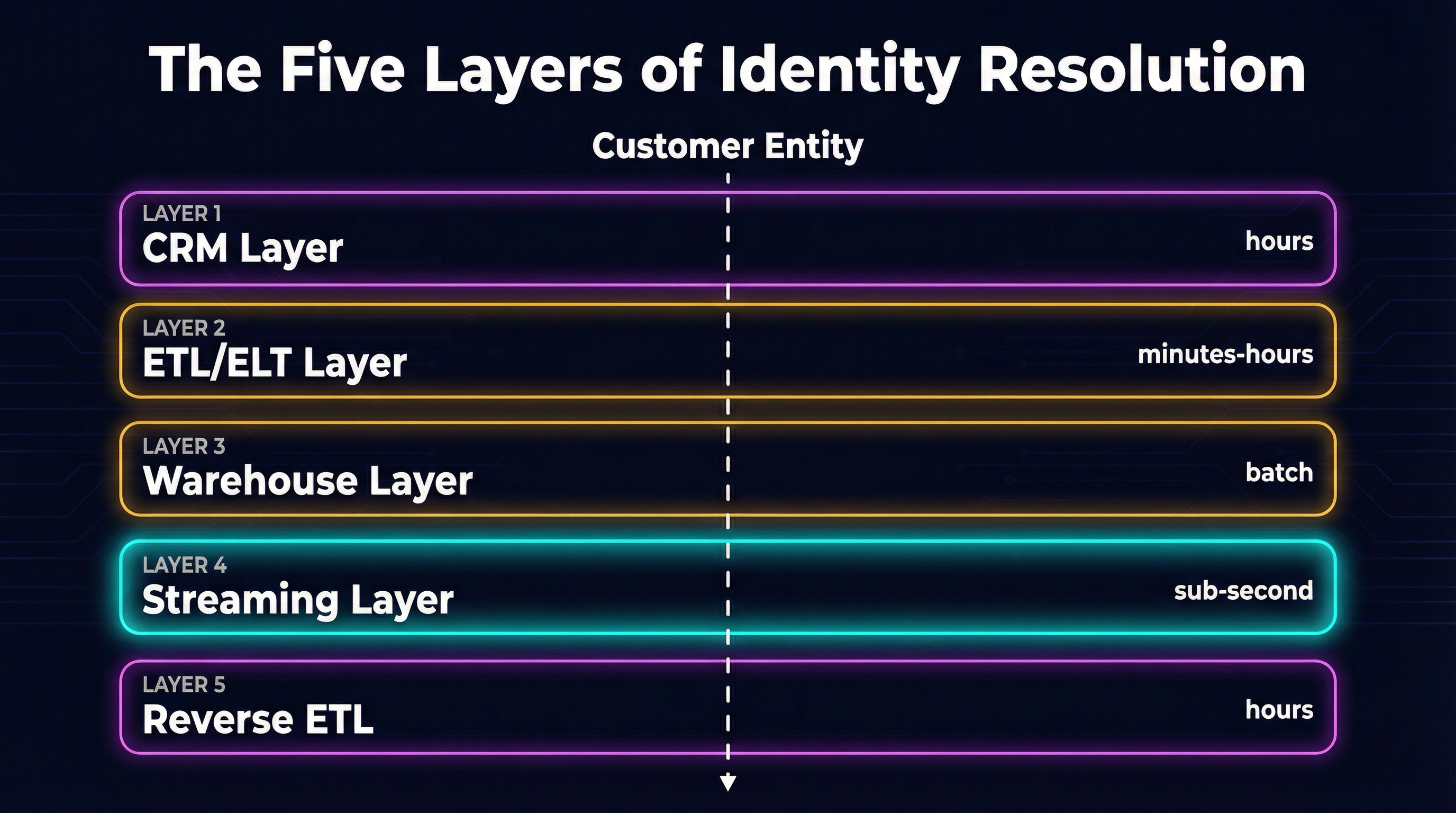

The reality in a large bank is not one of those three patterns. It’s all of them running concurrently at different layers of the data stack, maintained by different teams, with different latency characteristics and different definitions of “resolved.”

CRM layer. Salesforce Data Cloud does real-time profile unification using match and reconciliation rules. It works within Salesforce. It creates a CRM-centric identity silo that doesn’t flow cleanly to downstream systems. The industry-wide duplicate rate across Salesforce orgs is around 45%. That’s not an outlier — that’s the baseline when identity resolution is embedded in the application layer instead of treated as infrastructure.

ETL/ELT layer. dbt models or Spark jobs running batch matching — Splink for probabilistic linkage, Zingg for ML-powered deduplication. The output is only as fresh as the last batch run. A customer who opened an account at 9 AM and called the contact center at 10 AM may not be linked yet. The pipeline hasn’t run.

Warehouse/Lakehouse layer. Snowflake and Databricks are both positioning as the system of record for resolved identities — the “composable CDP” thesis where the warehouse is the customer platform. This is the current battleground. Snowpark and Databricks DLT give you the compute. What they don’t give you is the matching logic, the survivorship rules, or the governance framework. That’s still your problem.

Streaming layer. Kafka plus Flink for sub-millisecond identity matching on event streams. Required for real-time fraud detection where you need to link a transaction to a resolved identity before the authorization window closes. Operationally the most complex layer — most banks still enrich streaming events with pre-resolved identities from batch rather than resolving in-stream.

Reverse ETL layer. Hightouch, Census (acquired by Fivetran in 2025), and similar tools pushing resolved identities from the warehouse back to operational systems. This is the “last mile” that determines whether the identity resolution you did in Snowflake actually reaches the call center agent’s screen. Without it, you have a clean golden record in the warehouse and dirty data everywhere people actually work.

The vendor landscape reflects the fragmentation. The identity resolution market is roughly $1.5 billion in 2026, growing at about 11% CAGR, with the top five vendors holding only 49% share. Graph-based approaches are the real architectural differentiator — Reltio for graph-native MDM, Quantexa for graph analytics in financial crime. AWS Entity Resolution is essentially rule-based matching with partner pass-through. Google and Azure have no dedicated entity resolution service. Open source options like Zingg and Senzing exist but the “free” label is misleading — expect $300K to $800K over three years in engineering labor to operate them at banking scale.

Only 14% of organizations have achieved a true Customer 360. That’s not a technology gap. It’s a governance gap.

Every Layer Is Doing the Same Thing

Here’s what nobody draws on the architecture diagram: the call center agent, the CRM rep, the ETL pipeline, and the AML system are all solving the same problem. They just call it different things and operate under different latency constraints.

The call center agent authenticates a caller using ANI (automatic number identification), knowledge-based authentication, and increasingly voice biometrics. They’re doing entity resolution at the interaction layer — matching a live person to a known record, in real time, with a confidence threshold that determines whether to proceed or escalate.

The CRM rep identifies an incoming lead by matching email, fuzzy name comparison, and manual review against existing contacts. They’re doing entity resolution at the data layer — deciding whether this lead is a new person or an existing customer in a different context. The answer determines whether they create a new record or enrich an existing one.

The ETL pipeline runs deterministic and probabilistic matching across batch loads — exact SSN match, Jaro-Winkler string similarity on names, address standardization and comparison. Entity resolution at the integration layer, with confidence scores that determine automatic merge versus human review.

The AML system links transactions across accounts using graph analysis and pattern matching — connecting wire transfers, cash deposits, and ACH transactions that may involve the same beneficial owner under different names. Entity resolution at the compliance layer, where a missed link isn’t a duplicate record — it’s a regulatory violation.

They differ only in latency requirements and confidence thresholds. Sub-second for the streaming fraud check. Seconds for the call center agent. Hours for the batch pipeline. The problem is identical. The SLAs are not.

Knowledge-based authentication — the “what’s your mother’s maiden name?” approach — is failing across the board. Studies show 10-30% false reject rates for legitimate customers who can’t remember their own security answers, while criminals pass KBA challenges over 60% of the time using data purchased from breaches. The authentication world already knows deterministic identity verification is broken.

The data world is reaching the same conclusion. BioCatch, deployed across 280+ banks analyzing behavioral biometrics for 525 million users, stopped $3.7 billion in fraud in 2024 using passive, continuous, probabilistic identity signals — keystroke dynamics, mouse trajectory, cognitive patterns. That’s the direction: not “prove who you are once” but “continuously confirm you’re still who we think you are.” It’s exactly what data entity resolution has been evolving toward for decades — probabilistic matching that gets more confident over time rather than demanding a binary yes/no at a single point.

The AI agent is the forcing function that makes this convergence unavoidable. When an AI agent handles a customer interaction — whether it’s a chatbot, a voice agent, or an autonomous process — it must simultaneously authenticate the caller (IAM layer), resolve which customer record this is (MDM layer), check for fraud signals (compliance layer), and pull the right context from the right systems (data layer). It cannot do this if each layer maintains its own identity silo with its own matching logic and its own confidence model. The agent needs a unified identity resolution capability that spans all four layers.

This is where CIAM (Customer Identity and Access Management) becomes the natural convergence point. Authentication identity and data identity merge at the CIAM boundary — the same system that knows you logged in can also know which customer records are yours. The non-human identity framework I wrote about applies here too: AI agents acting on behalf of customers need both authentication credentials and resolved customer context, delivered through the same identity infrastructure.

The Tiered Resolution Architecture

The AI era doesn’t just force convergence — it provides a new architecture for the matching problem itself. The emerging pattern is a three-tier funnel that allocates compute based on match difficulty.

Tier 1: Deterministic matching. Exact SSN, exact email, exact phone number. Handles the easy cases — typically 60-70% of records — at near-zero cost and sub-millisecond latency. This is what CIF did in the 1970s. It still works for the same cases it worked for then.

Tier 2: Embedding similarity. Convert names, addresses, and other fuzzy fields into vector embeddings using transformer models. Compare embeddings using cosine similarity or approximate nearest neighbor search. Handles the middle tier — transliterated names, address variations, maiden-to-married name changes — that deterministic matching misses. AnyMatch, a 124-million parameter model fine-tuned from GPT-2, achieves 81.96% mean F1 on entity matching benchmarks at 3,899x lower cost than GPT-4. Zero-shot entity resolution is production-ready.

Tier 3: LLM adjudication. For the genuinely hard cases — cross-language names, complex corporate hierarchies, records with conflicting signals — route to an LLM for contextual reasoning. LLM-CER (presented at SIGMOD 2026) moves from pairwise comparison to in-context clustering, yielding 150% accuracy improvement and 5x cost reduction in API calls compared to pairwise LLM matching.

The architectural insight: you don’t need to choose between deterministic rules and AI. You tier them. The cheap, fast approach handles most records. The expensive, smart approach handles the tail. Graph neural networks — still underutilized in production but outperforming flat pairwise matching in research — add relationship context that resolves ambiguities no amount of field-level comparison can catch.

The identity data fabric pattern wins over physical consolidation. Instead of moving all customer data into one MDM hub, you build a resolution layer that queries across source systems with federated matching. It’s the registry MDM pattern reborn — but with AI-powered matching instead of rule-based cross-referencing, and with embeddings instead of phonetic codes.

The Honest Part

None of this is as clean as the architecture diagrams suggest.

MDM projects fail at notoriously high rates. The three-tier AI matching architecture doesn’t fix the governance gap — it can resolve records faster, but it can’t resolve the organizational argument about which business line’s data survives when two records conflict. That argument is political, not technical, and it stalls projects measured in years.

The “golden record” requires consensus on what a customer is. As long as retail banking, commercial lending, and wealth management have legitimately different customer models — and they do, for legitimate business reasons — a single canonical record will always be a compromise. The banks that acknowledge this and build resolution layers that serve multiple views of the same entity will do better than the ones chasing a single source of truth that satisfies nobody.

Data stewardship queues grow forever. Every probabilistic matching system produces uncertain cases that need human adjudication. AI shrinks the queue — maybe dramatically — but it does not eliminate it. And the queue backlog is where most MDM programs quietly die: not in a dramatic failure, but in the slow accumulation of unreviewed matches that erode confidence in the system.

The “boiling the ocean” anti-pattern kills more MDM programs than bad technology. The project that tries to resolve all entities across all systems in a single initiative always stalls. The banks that succeed start with one high-value use case — usually AML transaction monitoring or KYC onboarding — prove the model works, and expand from there.

The convergence thesis — that authentication identity and data identity will merge through AI agent requirements — is directionally correct but the timeline is uncertain. CIAM vendors today are not thinking about MDM. MDM vendors are not thinking about authentication. The convergence will likely happen bottom-up, driven by AI agent teams that need both capabilities and get tired of integrating two separate platforms, rather than top-down through some vendor’s grand unified product vision.

What I’d Do

If you’re an architect or engineering lead at a bank staring at this problem, here’s where I’d start.

Start with your AML/KYC use case. It has the clearest regulatory mandate, the highest executive sponsorship, and the most well-defined success criteria. Regulators are actively fining banks for identity resolution failures in this domain. That makes it the easiest business case to write and the hardest to deprioritize.

Inventory your identity layers. Map which systems perform entity resolution today — CRM, ETL, warehouse, streaming, operational tools. Document what matching logic each uses, what confidence thresholds they apply, and how stale their resolved identities are. You will find more resolution happening than you expected, and less coordination between the systems doing it than you’d like.

Pilot the tiered AI matching architecture on your hardest matching problem. Deterministic for exact matches, embeddings for the fuzzy middle, LLM for the ambiguous tail. Measure F1 against your current approach. If you’re running rule-based matching today, the improvement from embedding-based similarity alone will justify the investment.

Read the authentication identity post alongside this one. If your IAM consolidation program and your MDM program are run by different teams who never talk to each other, you’re building two halves of the same platform separately. The AI agents you deploy next year will need both. Plan accordingly.

The banks that solve data identity resolution won’t just satisfy regulators or deduplicate CRM records. They’ll be the ones whose AI agents can actually answer the question every customer interaction starts with: do you know who I am?

The ones that don’t will keep paying the cost — in nine-figure fines, in call center agents toggling between five screens to identify a caller, in AML alerts that fire on the wrong person, and in AI agents that confidently act on the wrong customer record. The CIF tried to solve this in 1975. We’re still trying. The difference now is that the matching technology has finally caught up. The organizational will is what’s still lagging behind.