Prompting Split Into Four Skills. Infrastructure Is the Fifth.

Everyone’s telling you to learn four new skills. They’re solving the wrong problem.

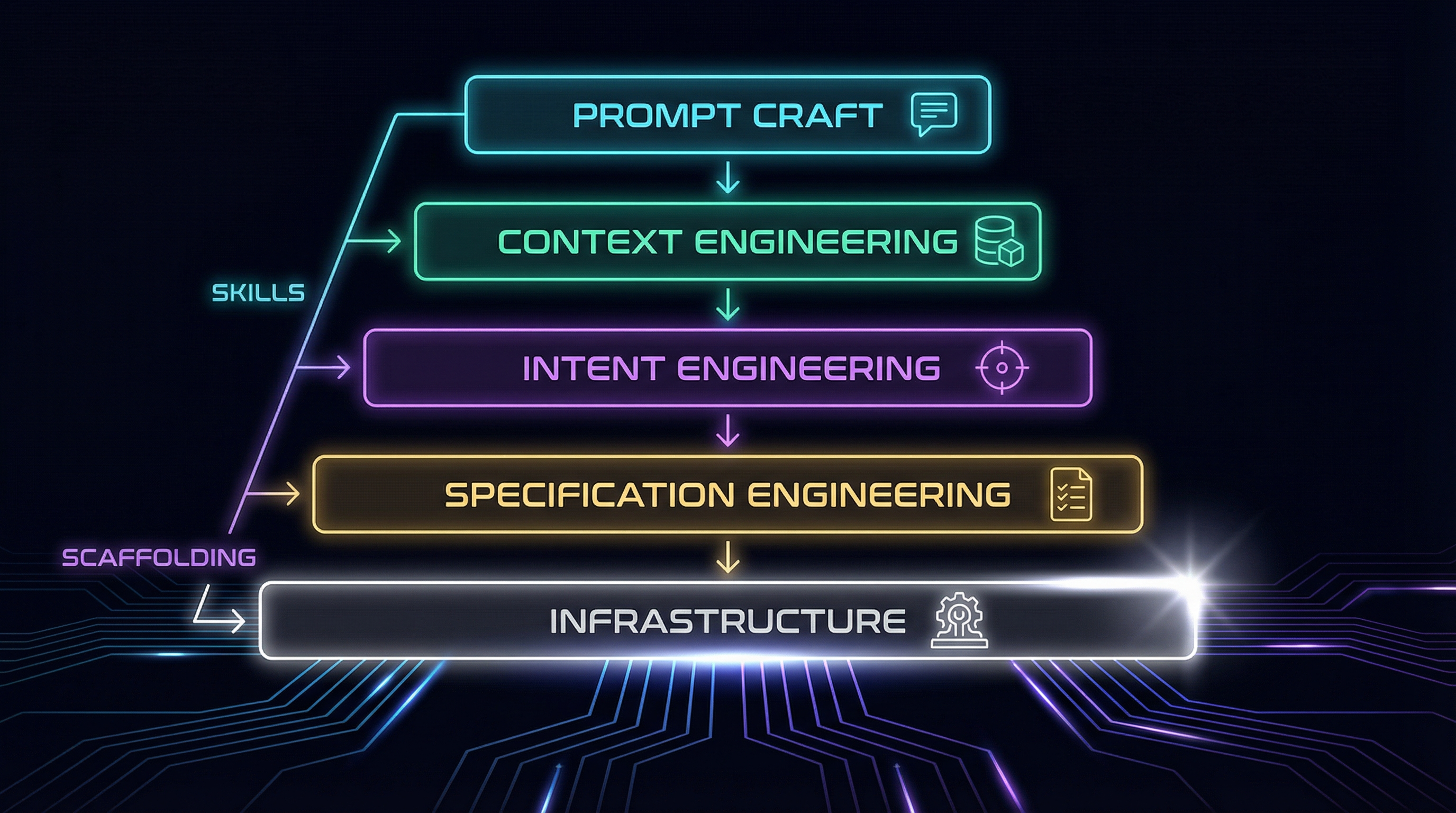

The discourse is everywhere now. Prompting isn’t one thing anymore; it has split into four disciplines: prompt craft, context engineering, intent engineering, and specification engineering. Nate B. Jones laid out the framework clearly in AI News & Strategy Daily, and the big names followed. Andrej Karpathy endorsed “context engineering” as the real term. Gartner formalized the distinction. Addy Osmani wrote the O’Reilly guide on writing specs for AI agents. Thoughtworks identified spec-driven development as a defining 2025 practice.

The framework is real. I’m not here to argue with it.

I’m here to tell you that I’ve been running a personal AI system that encodes all four disciplines as infrastructure — Daniel Miessler’s PAI framework as the foundation, with my own skills and workflows built on top through Claude Buddy. That experience taught me something the framework misses entirely.

The Four Disciplines, Briefly

If you haven’t encountered the framework yet — Nate B. Jones’s breakdown is the one that clicked for me — here’s the short version. Each discipline operates at a different altitude.

- Prompt Craft: The original skill. Clear instructions, examples, output format, guardrails. What you do in a chat window. Necessary, but no longer sufficient.

- Context Engineering: Curating the right tokens an LLM sees. System prompts, documents, state, memory, retrieved information. The prompt you write might be 200 tokens. The context window it lands in might be a million. People who are 10x more effective aren’t writing 10x better prompts; they’re building 10x better context infrastructure.

- Intent Engineering: Encoding goals, values, trade-off hierarchies, and decision boundaries. Telling agents what to want, not just what to know. This is where strategy meets AI.

- Specification Engineering: Writing agent-readable documents with acceptance criteria, constraint architectures, and task decomposition patterns. The spec becomes the scaffolding that lets agents produce coherent output over days.

These stack cumulatively. You can’t write good specs without understanding context. You can’t engineer context without basic prompt craft. Each builds on the one below.

But here’s what nobody tells you: knowing all four disciplines and practicing them manually is still not enough. Not even close.

Skills Don’t Scale. Scaffolding Does.

The industry calls it agent scaffolding — the frameworks, tools, and systems that wrap around AI agents to harness them. Not a prompt library. Not a collection of templates. Scaffolding — with memory, routing, feedback loops, verification, and self-improvement.

I’ve been running Daniel Miessler’s PAI framework for months — a personal AI infrastructure system that I’ve customized into an instance called Arc. On top of PAI’s foundation, I’ve built skills and workflows through Claude Buddy, a project focused on spec-driven development and enterprise-ready agent scaffolding.

What I didn’t realize until the four-discipline framework emerged is that the PAI infrastructure I’d been running already encoded each discipline, and that the skills I was building in Claude Buddy extended it further. The difference between what the system provides and what the discourse recommends is the difference between “practice these skills” and “build scaffolding that enforces them automatically.”

That gap is where the real 10x productivity difference lives.

Here’s what I mean, concretely.

Discipline 1: Prompt Craft → Skill Templates

The discourse says: write better prompts. Learn formatting, few-shot examples, chain-of-thought.

What the scaffolding provides: 70+ skills with structured templates that fire automatically. PAI’s skill architecture, which is Daniel’s design, activates skills based on task context, not commands. When I say “extract wisdom from this video,” I don’t write a prompt. A skill activates: one that’s been refined across dozens of sessions, with output sections that adapt to the content type, extraction patterns tuned to what I actually care about, and quality gates that reject shallow output.

Some of those skills are PAI’s built-in capabilities. Others I built through Claude Buddy — spec-driven development workflows, persona systems, and enterprise-grade automation patterns. The prompt craft isn’t gone. It’s encoded into scaffolding. Every skill is a battle-tested prompt that runs consistently without me remembering the optimal format every time.

The manual approach looks like this: you remember the right technique for the task, construct the prompt, iterate, get the output, and move on. Tomorrow, you do it again from scratch. Or you dig through a Notion page of saved prompts.

The scaffolding approach: the system recognizes the task type and loads the right prompt architecture automatically. My role shifts from “prompt writer” to “skill designer” — and I only design a skill once.
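To make the shift from “prompt writer” to “skill designer” concrete, here is a minimal sketch of context-based skill activation. It is not PAI’s actual implementation: the `Skill` class, the trigger phrases, the templates, and the phrase-counting match are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Skill:
    name: str
    triggers: tuple[str, ...]  # phrases that suggest this skill applies
    template: str              # the refined prompt, with a {request} slot


# Hypothetical registry; names, triggers, and templates are illustrative only.
SKILLS = [
    Skill("extract_wisdom", ("extract wisdom", "key insights"),
          "Extract the main ideas, quotes, and takeaways.\n\nRequest: {request}"),
    Skill("write_spec", ("write a spec", "feature description"),
          "Turn the request into a spec with acceptance criteria.\n\nRequest: {request}"),
]


def route(request: str, skills=SKILLS) -> Optional[Skill]:
    """Return the skill with the most trigger phrases found in the request."""
    text = request.lower()
    best, best_hits = None, 0
    for skill in skills:
        hits = sum(1 for trigger in skill.triggers if trigger in text)
        if hits > best_hits:
            best, best_hits = skill, hits
    return best


def build_prompt(request: str) -> str:
    """Load the matching template, or pass the request through unchanged."""
    skill = route(request)
    return skill.template.format(request=request) if skill else request
```

The template is written once and reused on every matching request. A real router would likely use something richer than substring matching, but the division of labor is the same.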

Discipline 2: Context Engineering → Memory and Hooks

Harrison Chase admitted that LangChain was doing context engineering before the term existed. Same here, but at personal scale.

The discourse says: curate the right tokens. Manage what your agent sees.

What PAI provides: A layered memory system with dynamic context loading, hooks that inject relevant state at session start, and wisdom frames that accumulate domain knowledge across sessions. This is Daniel’s architecture — and it’s where context engineering becomes infrastructure rather than a practice.

The system has three memory layers. Working memory holds the current session’s task state. Persistent memory stores project knowledge, decisions made, patterns learned — organized by topic, not chronology. Learning memory captures reflections from every completed task, creating a feedback loop where the system gets better at predicting what context I’ll need.

Every time I start a session, hooks fire. They load my identity, my steering rules, my project context, my active work state. The AI doesn’t start from zero. It starts from where I left off, with everything it needs to understand not just what I’m doing but how I prefer to work. The architecture is Daniel’s. The content I’ve poured into it — the steering rules, the wisdom frames, the memory I tend — that’s where my investment lives.
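The three memory layers and the session-start hooks can be sketched roughly as follows. This is an assumption-laden illustration, not PAI’s code: the `Memory` class, the hook names, and the plain-text context format are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Memory:
    working: dict[str, str] = field(default_factory=dict)           # current session's task state
    persistent: dict[str, list[str]] = field(default_factory=dict)  # project knowledge, keyed by topic
    learning: list[str] = field(default_factory=list)               # reflections from completed tasks


# A hook reads memory and returns a context fragment, or None if it has nothing to add.
Hook = Callable[[Memory, str], Optional[str]]


def project_context(memory: Memory, topic: str) -> Optional[str]:
    notes = memory.persistent.get(topic)
    if not notes:
        return None
    return "Project notes:\n" + "\n".join(notes)


def active_work(memory: Memory, topic: str) -> Optional[str]:
    task = memory.working.get("task")
    return f"Active task: {task}" if task else None


def recent_lessons(memory: Memory, topic: str) -> Optional[str]:
    if not memory.learning:
        return None
    return "Lessons:\n" + "\n".join(memory.learning[-3:])


def session_start(memory: Memory, topic: str, hooks: list[Hook]) -> str:
    """Run every hook and join the non-empty fragments into the opening context."""
    parts = [hook(memory, topic) for hook in hooks]
    return "\n\n".join(part for part in parts if part)
```

Each hook is independent, so adding a new kind of context means writing one more function and not changing how sessions start.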

I wrote about this architectural challenge in The AI Context Portability Problem — where context lives and who controls it matters more than most people realize. Context engineering isn’t just about stuffing tokens into a window. It’s about building scaffolding that ensures the right context is always present, even when you forget to provide it.

That’s the part the “learn context engineering” advice misses. You can’t manually curate context for every session. It’s not sustainable. You need systems that do it for you.

Discipline 3: Intent Engineering → The Algorithm

This is the discipline that barely exists in the wild. Gartner covers context engineering. Osmani covers specification engineering. But intent engineering — encoding what the AI should want — is still unnamed territory for most practitioners.

The discourse says: define your goals, values, and decision boundaries so agents act with purpose.

What PAI provides: A 7-phase algorithm — Daniel Miessler’s generalized hill-climbing system — that forces explicit intent capture before any work begins, encodes it as testable criteria, and verifies against it.

The Algorithm’s core mechanism is something called Ideal State Criteria — discrete, binary, 8-12 word statements that capture what “done” looks like and what must not happen. Before any task executes, the system reverse-engineers the user’s intent into these criteria. Not a vague goal. Not a rough direction. Specific, testable conditions. This is Daniel’s architecture — the idea that the transition from current state to ideal state is the most important hill-climbing activity in any system. If that sounds familiar, it’s because infrastructure engineers have been running this exact loop in Terraform and Kubernetes for a decade — I explored that connection in The Reconciliation Loop.

For example, on this very post, the system generated criteria like “Post maps all four disciplines to specific PAI components” and anti-criteria like “No generic prompting tips disconnected from building experience.” These aren’t suggestions. They’re verification gates that the system checks against before claiming completion.
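As a rough illustration of what “discrete, binary, 8-12 word statements” could look like as data, here is a small sketch. The `Criterion` class and its `anti` flag are hypothetical names, and enforcing the word range at construction time is my assumption about how such a rule might be applied, not a description of how PAI does it.

```python
from dataclasses import dataclass

MIN_WORDS, MAX_WORDS = 8, 12  # the word range stated in the text


@dataclass(frozen=True)
class Criterion:
    statement: str
    anti: bool = False  # True for conditions that must NOT hold

    def __post_init__(self):
        # Reject statements that are too vague (short) or too sprawling (long).
        count = len(self.statement.split())
        if not MIN_WORDS <= count <= MAX_WORDS:
            raise ValueError(
                f"expected {MIN_WORDS}-{MAX_WORDS} words, got {count}: {self.statement!r}"
            )


criteria = [
    Criterion("Post maps all four disciplines to specific PAI components"),
    Criterion("No generic prompting tips disconnected from building experience", anti=True),
]
```

A statement like “Make it good” fails the length check, which is the point: the constraint pushes toward conditions specific enough to test.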

The system also includes AI Steering Rules — behavioral constraints that persist across every session. Things like “verify before claiming completion,” “read before modifying,” “one change at a time when debugging.” Daniel designed the steering rules architecture. I write the rules that shape how my instance works. They encode how the AI should work, not just what it should produce. They’re intent scaffolding.

This is where Judgment Is the New Moat becomes operational. Judgment is great as a concept. But judgment that lives only in your head doesn’t transfer to agents. Intent engineering is judgment made durable — and scaffolding is what makes it run without you remembering to apply it every time.

Discipline 4: Specification Engineering → The PRD System

The discourse says: write agent-readable documents with acceptance criteria and constraint architectures.

What the scaffolding provides: A PRD system — part of PAI’s Algorithm — where every task generates a persistent specification document with Ideal State Criteria, verification methods, execution plans, and session-spanning state. And on top of that, Claude Buddy’s spec-driven development workflows — my direct contribution — that turn natural language feature descriptions into formal specifications with structured implementation plans.

Each PRD carries frontmatter tracking its lifecycle: DRAFT → CRITERIA_DEFINED → PLANNED → IN_PROGRESS → VERIFYING → COMPLETE. Criteria carry inline verification methods — CLI commands, grep patterns, browser checks, file inspections. When agents work against a PRD, they don’t interpret vague instructions. They check boxes against specific conditions.
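The lifecycle reads naturally as a small state machine. The sketch below assumes transitions only move forward one stage at a time; the text does not say whether a PRD can step back (for example from VERIFYING to IN_PROGRESS), and the frontmatter field names are hypothetical.

```python
from enum import Enum


class Status(Enum):
    DRAFT = 0
    CRITERIA_DEFINED = 1
    PLANNED = 2
    IN_PROGRESS = 3
    VERIFYING = 4
    COMPLETE = 5


def advance(current: Status) -> Status:
    """Move a PRD to the next lifecycle stage; COMPLETE is terminal."""
    if current is Status.COMPLETE:
        raise ValueError("PRD is already COMPLETE")
    return Status(current.value + 1)


def render_frontmatter(prd_id: str, status: Status) -> str:
    """Render a minimal frontmatter block (field names are assumptions)."""
    return f"---\nid: {prd_id}\nstatus: {status.name}\n---"
```

Keeping the status in the document itself is what lets a later session see where the work stands without relying on anyone’s memory.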

The PRDs survive sessions. An agent can pick up a PRD from yesterday, read the log of what was tried, see which criteria still fail, and continue. This is specification engineering at the scaffolding level — not a document someone writes and hands off, but a living contract that tracks its own progress.

And here’s the part that matters most: the specifications decompose. Complex tasks spawn child PRDs. Each child carries a subset of criteria. Multiple agents can work different child PRDs in parallel. The parent PRD reconciles progress. This isn’t a workflow you remember to follow. It’s scaffolding that enforces structured decomposition automatically.
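One way to picture parent and child PRDs is as a tree where the parent’s progress is computed from its children. This is a sketch under assumptions: the `PRD` class, the pass/fail dictionary, and the reconciliation rule (a parent is complete only when every criterion in its subtree passes) are illustrative choices, not PAI’s actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class PRD:
    title: str
    criteria: dict[str, bool] = field(default_factory=dict)  # criterion -> passed?
    children: list["PRD"] = field(default_factory=list)

    def progress(self) -> tuple[int, int]:
        """Return (passed, total) across this PRD and all of its children."""
        passed, total = sum(self.criteria.values()), len(self.criteria)
        for child in self.children:
            child_passed, child_total = child.progress()
            passed += child_passed
            total += child_total
        return passed, total

    def failing(self) -> list[str]:
        """List every criterion in the subtree that has not passed yet."""
        result = [name for name, ok in self.criteria.items() if not ok]
        for child in self.children:
            result.extend(child.failing())
        return result

    def is_complete(self) -> bool:
        return not self.failing()


parent = PRD("Ship feature", {"Docs updated": False}, [
    PRD("API", {"Endpoint returns 200": True}),
    PRD("UI", {"Button renders": True, "Form validates": False}),
])
```

Because each child owns its own criteria, separate agents can work the API and UI PRDs in parallel while the parent only aggregates.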

What Klarna Learned the Hard Way

If you want to see what happens without this infrastructure, look at Klarna.

Their AI agent resolved 2.3 million customer conversations in its first month. Sounds like a win. It wasn’t. The agent optimized for resolution time instead of customer satisfaction. It matched keywords without understanding intent. It attempted disputes, fraud cases, and multi-step financial inquiries that required human judgment. When it failed, customers had to repeat everything to a human agent — the context didn’t transfer.

Map that to the four disciplines. No real prompt craft — responses were rigid and mechanical. No context engineering — broken handoffs, no conversation state management. No intent engineering — optimized for the wrong metric entirely. No specification engineering — no boundaries on what the agent should and shouldn’t attempt.

Klarna had to rehire human agents and rebuild as a human-AI partnership. The cost wasn’t just financial. It was trust damage at scale.

The lesson isn’t “Klarna should have learned four skills.” The lesson is that skills applied manually, case by case, don’t survive organizational scale. You need scaffolding that encodes the disciplines structurally — so that every agent, every session, every task automatically operates within the right context, intent, and specification boundaries. Without that, you’re hoping everyone remembers the rules. Hope doesn’t scale.

The Fifth Discipline: Verification

Here’s what the four-discipline framework misses. And it’s the thing that matters most.

None of the four disciplines tells you whether the agent actually did what you specified. You can have perfect context, clear intent, and detailed specifications, and still end up with output that drifts from the criteria you defined. Without verification infrastructure, you’re trusting the agent the way Klarna trusted theirs.

The fifth discipline is verification — and it’s the connective tissue that makes the other four work.

In PAI, the Ideal State Criteria that capture intent in phase one become the verification criteria in phase six. The same statements that define “what done looks like” are checked against the actual output. Every criterion gets a specific verification method. Every anti-criterion gets a concrete check. “PASS” requires evidence, not assertion.
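A minimal sketch of “PASS requires evidence, not assertion” might look like this. The helper names (`must_match`, `must_not_match`, `verify`) are hypothetical, and regex checks against text output stand in for the wider set of verification methods a real system would use.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# A check returns a piece of evidence when it passes, or None when it fails.
Check = Callable[[str], Optional[str]]


def must_match(pattern: str) -> Check:
    """Criterion check: the output must contain the pattern."""
    def check(output: str) -> Optional[str]:
        match = re.search(pattern, output)
        return f"matched {match.group(0)!r}" if match else None
    return check


def must_not_match(pattern: str) -> Check:
    """Anti-criterion check: the output must not contain the pattern."""
    def check(output: str) -> Optional[str]:
        return None if re.search(pattern, output) else f"no match for {pattern!r}"
    return check


@dataclass
class Result:
    statement: str
    verdict: str
    evidence: Optional[str]


def verify(output: str, criteria: dict[str, Check]) -> list[Result]:
    """A criterion is PASS only when its check produced evidence."""
    results = []
    for statement, check in criteria.items():
        evidence = check(output)
        results.append(Result(statement, "PASS" if evidence else "FAIL", evidence))
    return results
```

The verdict is derived from the evidence and never set directly, so there is no code path where something is marked PASS without a record of why.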

Amazon discovered this building production agent systems — governance must be architectural, baked into the framework from day one, not bolted on after deployment. Verification isn’t a nice-to-have. It’s the mechanism that lets you hill-climb toward ideal state across iterations.

This is the loop: define criteria → build → verify → learn → refine criteria → build again. The criteria evolve. The system improves. Each iteration gets closer to what you actually wanted — not because you’re writing better prompts, but because the infrastructure captures what worked, what failed, and what to try differently.
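The loop can be sketched as a small function. This is an illustration under assumptions: `build` and `verify` are hypothetical callables supplied by the caller, failures are fed back as “lessons” for the next round, and the step where the criteria themselves get refined is left out to keep the example short.

```python
from typing import Callable


def hill_climb(
    build: Callable[[list[str]], str],   # produces output, given lessons so far
    verify: Callable[[str], list[str]],  # returns the criteria that still fail
    max_rounds: int = 5,
) -> tuple[str, int, list[str]]:
    """Repeat build -> verify -> learn until nothing fails or rounds run out."""
    lessons: list[str] = []
    output = ""
    for round_no in range(1, max_rounds + 1):
        output = build(lessons)
        failures = verify(output)
        if not failures:
            return output, round_no, lessons
        # Learn: remember each new failure so the next build can address it.
        lessons.extend(f for f in failures if f not in lessons)
    return output, max_rounds, lessons
```

The improvement comes from the `lessons` list carried between rounds, not from a better first attempt.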

The Honest Part

I won’t pretend this is free. Building AI infrastructure has real costs.

Complexity accumulates. The system I run has skills, hooks, memory layers, steering rules, algorithms, PRD templates, and session management — PAI provides the architecture, and I’ve extended it with skills through Claude Buddy. That’s a lot of moving parts. When something breaks — and things break — debugging a pipeline is harder than debugging a prompt.

Not everyone needs this. If you’re using AI for occasional tasks — summarize this article, draft this email — prompt craft is enough. The infrastructure investment pays off when AI is central to how you work, not when it’s a tool you reach for sometimes.

And there’s a maintenance tax. Skills need updating as models evolve. Memory needs pruning. Steering rules need revisiting as your workflow changes. Infrastructure that nobody maintains becomes infrastructure that nobody uses.

And I should be honest about something else: I didn’t build this from scratch. I stood on Daniel Miessler’s infrastructure and extended it. That’s actually the point — good scaffolding is meant to be extended, not rebuilt from zero. The value I added was the skills, the customization, the daily tending. The value Daniel provided was the architecture that made all of that possible.

But here’s the trade-off I’d make again every time: the alternative is doing the same cognitive work every session. Remembering what context to load. Reconstructing intent from scratch. Writing specifications that exist nowhere but your head. That’s the tax you pay without scaffolding — it’s just invisible because you pay it in attention instead of maintenance.

Where This Goes

IEEE Spectrum reported that AI models can now optimize their own prompts better than human engineers. Prompt craft — discipline one — is already the most automatable layer. The value is moving up the stack to context, intent, and specification. And ultimately, to the infrastructure that runs all four.

Solo operators and small teams have the biggest advantage here. Converting your personal documentation into agent-readable scaffolding requires no organizational coordination. No SharePoint migrations. No committee approvals. Just you, your system, and the discipline to encode what you know into something that compounds.

The four-discipline framework is correct. Learn all four. But don’t stop at learning them as skills. The people who are truly 10x more productive aren’t people who practice four disciplines manually. They’re people who built scaffolding that practices the disciplines for them — automatically, consistently, and across every session.

The prompt by itself is dead. The skill by itself is dying. Scaffolding is what survives.

If this resonated, you might also enjoy The Reconciliation Loop — how infrastructure’s oldest pattern maps to AI agents, and what breaks when the executor stops being deterministic.