The Stack Nobody Designed

Jensen Huang stood on the GTC 2026 stage and declared OpenClaw “the operating system for personal AI.” He compared it to Windows, Linux, and HTTP — foundational infrastructure for a new computing paradigm. He told every CEO in the audience to develop an OpenClaw strategy. NVIDIA’s stock moved. Cloudflare’s stock moved. The framing was bold and the market responded.

The framing is also wrong in an interesting way.

OpenClaw isn’t the operating system. It’s one distribution. The operating system — the actual architectural pattern — keeps getting rediscovered independently by builder after builder. And the fact that they all arrive at the same answer is a stronger signal than any keynote.

Convergent Evolution

In biology, convergent evolution is when unrelated species independently develop the same trait. Eyes evolved independently in vertebrates, cephalopods, and arthropods — not because they copied each other, but because the problem of navigating a light-filled environment has an optimal solution. The constraint space has an attractor.

Technology does this too. Five teams at five companies built message queues — RabbitMQ at Pivotal, Kafka at LinkedIn, ZeroMQ at iMatix, RocketMQ at Alibaba, ActiveMQ at Apache — all arriving at the same core abstraction: producers, consumers, topics, durable delivery. Nobody convened a standards body. The problem shaped the solution.

Something similar happened in personal AI infrastructure. Builder after builder — starting from completely different places, solving completely different problems — converged on the same three-pillar architecture. That convergence tells us something Jensen’s keynote didn’t.

The Two I Run

I’ve spent the most time with two projects that sit at opposite ends of the design spectrum.

Daniel Miessler started from philosophy. His Personal AI Infrastructure (PAI) project began with a question most builders skip: how should a human actually work with AI? Not which model is best. Not which features to ship. How should the relationship work? He built a framework — skills, memory, hooks, a structured algorithm for task execution — and open-sourced the pattern. PAI is a design philosophy that happens to have an implementation, not the other way around.

Peter Steinberger started from frustration. After exiting PSPDFKit, he wanted a local AI assistant that connected to his actual digital life — messages, calendars, workflows. He built OpenClaw, and the world showed up. 150,000+ GitHub stars. The fastest-growing open source project in history. A marketplace of community-contributed skills. His philosophy was explicit: “Build for models, not humans.” Speed over ceremony. Ship code you don’t read.

Different motivations. Different audiences. Different tech stacks. Same architecture.

The Pattern Keeps Showing Up

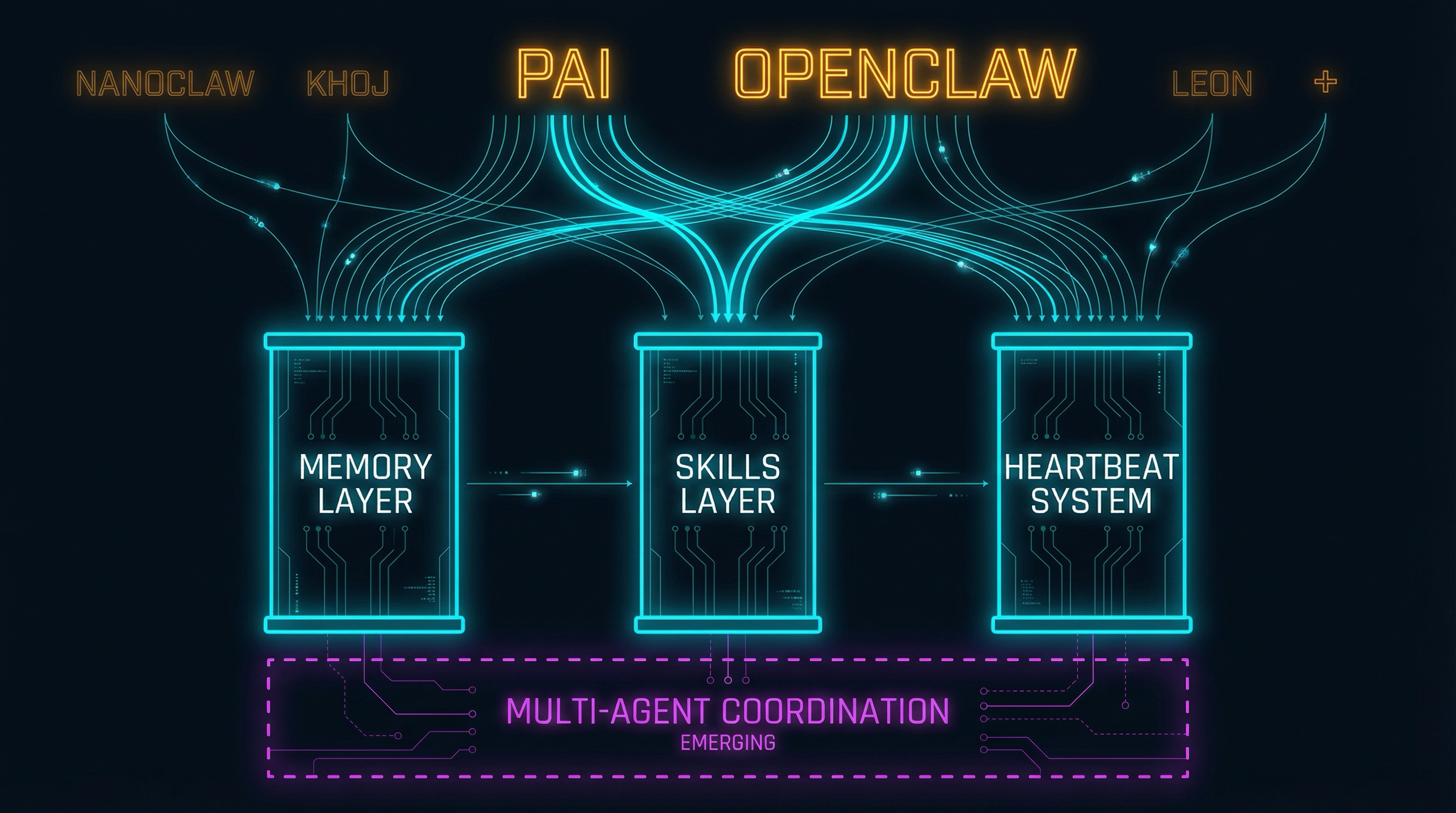

PAI and OpenClaw aren’t alone. The same three-pillar pattern appears across the personal AI landscape:

NanoClaw — a lightweight, container-isolated alternative built on Anthropic’s Agents SDK. ~500 lines of TypeScript, per-group memory files, scheduled jobs. 7,000+ stars in its first week.

Khoj — a self-hostable “AI second brain” with persistent memory across sessions, scheduled automations, and custom agent building. Indexes your docs and learns from them. Works with any LLM provider.

Leon — one of the earliest open-source personal assistants. Node.js and Python with a modular skills/packages architecture, a self-model memory system, and a “proactive pulse” for bounded autonomous behavior.

Cole Medin’s custom second brain — a developer who studied OpenClaw’s architecture, called it “genius,” then rebuilt it in a couple of days with Claude Code and a few thousand lines of Python. He documented the pattern as a teachable framework, proving the architecture is simple enough to replicate from scratch.

Each of these projects was built independently. Each landed on some combination of persistent memory, composable skills, and proactive automation. The specific implementations differ — but the architectural bones are the same.

Pillar 1: The Memory Layer

Every project in this space converges on markdown-based persistent memory loaded at session start.

OpenClaw uses soul.md (agent personality and behavioral guidelines), user.md (evolving user profile), and memory.md (key decisions, lessons learned, important facts). These files are the agent’s identity — who it is, who you are, and what it’s learned.

PAI uses CLAUDE.md for project rules, a MEMORY/ directory structure for accumulated learnings, steering rules for behavioral guidance, and learning signals for performance tracking. The architecture is more elaborate — hooks fire at session start to load the right context — but the core pattern is the same: persistent files that give the agent identity and accumulated knowledge.

The others followed suit. NanoClaw uses per-group CLAUDE.md files. Khoj indexes documents into persistent memory across sessions. Medin adopted OpenClaw’s three-file pattern directly, then added daily logs and a promotion workflow where a scheduled reflection process curates what’s worth keeping. Leon maintains a self-model memory system.

The convergence is striking: persistent identity files, evolving user profiles, accumulated learnings. Nearly all of them implemented it in plain markdown. Not a vector database. Not a knowledge graph. Markdown files that humans can read and machines can load. The memory layer maps directly to what I described as Building Blocks 1 (Identity), 3 (Memory Architecture), and 6 (Injection) in Context Engineering Is Infrastructure, Not a Skill — but none of these builders read that post before building. They discovered it.

The divergence is in compaction. Memory grows. How do you manage it? OpenClaw leaves it mostly manual. PAI uses hooks and a PRD system that captures work products automatically. Medin’s daily reflection process adds an automated curation layer. Different answers to the same inevitable problem.

Pillar 2: The Skills Layer

Every project converges on skills as the capability primitive — composable, file-based units that extend what the agent can do.

OpenClaw built ClawHub, a marketplace where anyone can publish skills. Over 700 skills covering everything from email management to code generation. The marketplace model scales fast. Steinberger’s instinct was right: community contribution accelerates capability growth. The problem is trust. As I covered in OpenClaw and the Trust Ladder, the ClawHub security audit found hundreds of malicious packages — the marketplace that scaled capability also scaled attack surface.

PAI takes the opposite approach: curated, personal skills organized in a structured directory. System skills for common patterns. Personal skills prefixed with underscores for private customizations. Three inference tiers (fast, standard, smart) for graduated reasoning. No marketplace. No community contribution. Complete control. The trade-off is real — you build everything yourself or your AI builds it for you, but nothing comes from strangers.

The same pattern shows up everywhere. Leon uses modular packages. NanoClaw extends through Claude Code skill files. Medin went a step further with self-referential skill creation — one of his agent’s skills is a meta-skill that teaches the agent how to create new skills. Describe a capability conversationally, and the agent generates a properly structured skill file.

The convergence: composable, file-based capability units that extend the agent. The divergence: the trust boundary. Marketplace (OpenClaw), curated/personal (PAI), self-referential (Medin), container-isolated (NanoClaw). The architectural pattern is settled. The security model is not.

Pillar 3: The Heartbeat

The third pillar is proactive, scheduled automation — agents that do things without being asked.

OpenClaw has a built-in heartbeat running on 30-minute cycles. The agent wakes up, assesses what needs attention based on accumulated context, takes action, and goes back to sleep. It manages email, drafts responses, triages notifications — the routine work of a digital life.

PAI achieves proactive behavior through its hooks system — lifecycle events that fire at session start, tool use, and other checkpoints — with emerging heartbeat capabilities through the Claude Agent SDK. The hooks pattern is event-driven rather than schedule-driven, but the intent is the same: the system does useful work without waiting to be asked.

The pattern repeats across the ecosystem. NanoClaw has scheduled jobs. Leon has its “proactive pulse.” Khoj runs scheduled automations. Medin calls the heartbeat “the single biggest time-saver in the entire second brain” — his cron-based implementation gathers context from Gmail, task management, GitHub, and Slack, then reasons about what needs attention. He reports saving at least twelve hours per week.

The convergence: agents that act proactively, using accumulated context to decide what’s worth doing. The divergence: scope of autonomy. OpenClaw gives the heartbeat broad authority. PAI constrains it through hooks and permission models. Others apply zero-trust with selective write access — the agent can read but not post, can draft but not send. Same idea, different risk tolerances.

The Emerging Fourth Pillar

The three pillars — memory, skills, heartbeat — are where the convergence is solid. But there’s a fourth pillar forming at the edges that none of the three have fully solved: multi-agent coordination.

A community member named cristbc published a triad setup guide — a comprehensive template for running PAI and OpenClaw together on separate machines as a coordinated system. The architecture is hub-and-spoke: PAI on a macOS workstation as the primary orchestrator, OpenClaw on a dedicated Linux machine for autonomous task execution, and a human principal directing both. Four communication channels: WebSocket for inter-agent messaging, Telegram for agent-to-human notifications, mobile Telegram for human-to-OpenClaw commands, and Syncthing for file exchange.

In PAI Discussion #542, the community reached an organic consensus: PAI and OpenClaw are complementary, not competitive. PAI excels at interactive, deep collaborative work with refined skills and structured reasoning. OpenClaw excels at autonomous, routine task execution across messaging platforms. One contributor proposed a tiered memory architecture — episodic, semantic, procedural — with episodic memory staying local per agent while semantic and procedural memory gets shared.

This is the CAP theorem applied to agent memory. You’re choosing between consistency and partition tolerance. Shared memory means both agents have the same context but introduces synchronization complexity. Partitioned memory means each agent maintains its own worldview but risks drift.

The unsettled questions are real: How do agents delegate work to each other? How does inter-agent trust work — does one agent blindly execute what another requests? What’s the communication protocol? What’s the security model for the channel between them? Nobody has standardized this. The triad guide is the closest thing to a reference implementation, and it’s a template with variables, not a product.

Jensen Got It Half Right

Back to the GTC stage. “OpenClaw is the operating system for personal AI.” “Probably the single most important release of software, probably ever.” “Every company needs an OpenClaw strategy.” Bold claims. The market believed them.

What Jensen got right: personal AI infrastructure IS as significant as he claims. The comparison to Linux and HTTP is directionally correct — this is foundational infrastructure, not a feature. His mandate to CEOs reflects genuine urgency. When he says “every SaaS company will become an Agent-as-a-Service company,” the convergence across a half-dozen independent projects supports the thesis. The pattern is real.

What he got wrong: calling OpenClaw “the OS” conflates one implementation with the underlying pattern. OpenClaw is Ubuntu, not Linux. The actual operating system — the architectural pattern that makes personal AI work — is memory plus skills plus heartbeat. And that pattern exists in PAI, in NanoClaw, in Khoj, in Leon, in every custom build that someone spins up in a weekend. It’ll exist in whatever comes next, because the constraint space has an attractor.

Jensen’s framing is top-down: a CEO declares the standard. The builder convergence is bottom-up: project after project discovers the pattern independently. When top-down and bottom-up point at the same thing, that’s the strongest possible signal. But the top-down framing overindexes on the product while the bottom-up evidence points at the pattern underneath.

NVIDIA shipped NemoClaw to address the security gap — kernel-level sandboxing, a privacy router, local inference. That’s necessary infrastructure. But as I wrote in that post, the sandbox solves Layer 1. The organizational governance, identity management, and coordination protocols that make agents actually useful at scale — those are still unsolved, and they’re the layers where the broader builder convergence offers more guidance than any keynote.

What the Convergence Tells Us

When project after project independently discovers the same architecture AND the CEO of the world’s most valuable company declares it foundational, the implications are clear.

The pattern is real. Not one person’s opinion, not one product’s architecture — emergent consensus validated from both directions. Memory, skills, and heartbeat are the personal AI stack the same way that compute, storage, and networking are the cloud stack. The abstractions are settling.

The stack is learnable. Medin rebuilt it in days. NanoClaw shipped in ~500 lines of TypeScript. The patterns are simple and composable — a few thousand lines of code and markdown. That’s the barrier to entry for building your own personal AI infrastructure. It’s low enough that “build your own” is a real option for anyone willing to follow a phased approach.

The security model is the differentiator. The three pillars are converged. How you secure them is where the implementations diverge sharply. Marketplace trust, curated trust, zero-trust. Broad autonomy, event-driven autonomy, read-heavy autonomy. The architecture is the same; the trust decisions are where your values show.

Multi-agent is next. The fourth pillar is forming but hasn’t converged yet. When it does — when shared memory protocols, delegation patterns, and inter-agent trust frameworks reach the same level of consensus as the three pillars — personal AI infrastructure takes another step from “impressive personal tool” to “distributed cognitive system.” The triad guide is early, but it’s directionally right.

How Real Standards Emerge

Nobody sat down and designed a standard for personal AI infrastructure. Jensen didn’t design it — he recognized it after OpenClaw proved the demand. Miessler didn’t design it — he discovered it while building a framework for human-AI collaboration. Steinberger didn’t design it — he built what he needed and the world showed up. A dozen other builders didn’t design it either — they each solved their own problem and landed in the same place.

That’s how real standards emerge. Not from committees. Not from keynotes. From convergent evolution — independent builders solving the same problem and arriving at the same answer because the problem itself constrains the solution space.

The memory layer, the skills layer, and the heartbeat. That’s the personal AI stack. The security model and the multi-agent coordination layer are still being written. I’m running PAI daily and watching the broader ecosystem — building on the pattern myself — to see which way those settle.

I wrote about the personal AI wars two months ago as a comparison of competing tools. That framing was useful but incomplete. The interesting story isn’t which tool wins. It’s that the architecture underneath them all converged before anyone noticed.

If the convergent architecture angle resonates, the companion piece is Context Engineering Is Infrastructure, Not a Skill — the seven building blocks that these three pillars map onto.

Find me on X @orestesgarcia or LinkedIn — I write about what happens when AI infrastructure meets regulated reality.