The Sandbox Isn't the Hard Part

Jensen Huang told every CEO at GTC 2026 to develop an OpenClaw strategy. He compared OpenClaw to Linux and HTTP — foundational infrastructure for a new computing paradigm. He called it “the most popular open source project in the history of humanity” and declared that “Claude Code and OpenClaw have sparked the agent inflection point — extending AI beyond generation and reasoning into action.” Then NVIDIA shipped the security infrastructure to back up the mandate: NemoClaw, an enterprise on-ramp that bundles OpenClaw with kernel-level sandboxing and local inference in a single-command install.

NVIDIA already had the sandbox. OpenShell — their kernel-level runtime for AI agents — works with Claude Code, Codex, OpenCode, and OpenClaw alike. It’s agent-agnostic by design. What’s new is NemoClaw as the enterprise integration layer, plus Jensen’s framing that this is no longer optional. Every company needs an agent strategy. NVIDIA built the security foundation. The mandate is clear.

The part nobody shipped is the organizational governance that determines whether any of this actually works. The sandbox exists. The mandate exists. The four layers between “isolated agent” and “governed agent in production” — identity, audit, change management, organizational readiness — those are still your problem. And if Sol Rashidi’s data holds, they’re 70% of the problem.

What NVIDIA Actually Built

Credit where credit is due: the engineering is genuinely impressive.

OpenShell implements three independent kernel-level isolation layers. Landlock confines filesystem access — agents can only read and write where policy explicitly allows. Seccomp filters system calls, blocking privilege escalation at the kernel boundary. Network namespaces give per-binary network control with default-deny egress. All three enforce simultaneously, and critically, enforcement happens out-of-process — the security constraints live on the environment, not inside the agent. A compromised agent can’t disable its own sandbox because it doesn’t control it.
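Landlock enforces filesystem rules inside the kernel, and nothing below resembles its actual API. As a user-space illustration of the idea only, this sketch shows why confinement has to be decided on normalized paths rather than on the raw strings an agent supplies: a naive prefix check lets `../` traversal and look-alike directory names escape. The allowed roots and function names are hypothetical.

```python
import posixpath
from pathlib import PurePosixPath

# Hypothetical writable roots; in a real sandbox these come from policy, not code.
ALLOWED_ROOTS = [PurePosixPath("/workspace"), PurePosixPath("/tmp/agent")]

def naive_is_allowed(path: str) -> bool:
    # Broken on purpose: a string prefix check accepts "/workspace/../etc/passwd"
    # and "/workspace-evil/x".
    return any(path.startswith(str(root)) for root in ALLOWED_ROOTS)

def is_allowed(path: str) -> bool:
    # Collapse "." and ".." lexically before comparing. A real enforcer must also
    # resolve symlinks, which is one reason this belongs in the kernel.
    normalized = PurePosixPath(posixpath.normpath(path))
    if not normalized.is_absolute():
        return False  # deny by default: relative paths are ambiguous
    return any(normalized == root or root in normalized.parents for root in ALLOWED_ROOTS)

print(naive_is_allowed("/workspace/../etc/passwd"))  # True
print(is_allowed("/workspace/../etc/passwd"))        # False
```

The same reasoning is why out-of-process enforcement matters: the check runs on the resolved target, in code the agent cannot modify.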

The policies are declarative YAML with deny-by-default semantics. This is the right design decision. If you’ve worked with infrastructure-as-code, the pattern is familiar: define what’s allowed, reject everything else, version the policies alongside the code. OpenShell applies that pattern to agent execution rather than cloud infrastructure. And because it’s agent-agnostic, Claude Code, Codex, and Cursor all run inside it unmodified. The sandbox doesn’t care which agent it’s containing.
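To make deny-by-default concrete, here is a minimal sketch of evaluating a declarative egress policy. A Python dict stands in for the YAML, and its schema (`network.allow`, `host`, `port`) is invented for illustration; it is not OpenShell's actual policy format. The property worth noticing is that an empty or missing rule set denies everything.

```python
from fnmatch import fnmatchcase

# Hypothetical policy document; OpenShell's real YAML schema will differ.
POLICY = {
    "network": {
        "allow": [
            {"host": "api.github.com", "port": 443},
            {"host": "*.internal.example.com", "port": 443},
        ]
    }
}

def egress_allowed(policy: dict, host: str, port: int) -> bool:
    """Return True only if some rule explicitly allows host:port."""
    rules = policy.get("network", {}).get("allow", [])
    for rule in rules:
        # Hostnames are case-insensitive, so compare a lowercased host.
        if fnmatchcase(host.lower(), rule["host"]) and port == rule["port"]:
            return True
    # Nothing matched: deny, rather than falling through to allow.
    return False

print(egress_allowed(POLICY, "api.github.com", 443))     # True
print(egress_allowed(POLICY, "exfil.example.net", 443))  # False
```

Because the policy is plain data, it can be versioned and reviewed alongside the code, which is the infrastructure-as-code pattern the paragraph describes.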

NemoClaw is the OpenClaw-specific layer on top. It’s a TypeScript CLI plugin that bundles OpenShell with Nemotron inference and a privacy router into a single-command install. Think of OpenShell as the runtime and NemoClaw as the opinionated distribution — the difference between Linux and Ubuntu. The enterprise partners — Adobe, Salesforce, SAP, CrowdStrike, Cisco, Dell — are betting on the distribution, not just the kernel.

Why OpenClaw Needed This Desperately

The mandate makes more sense when you look at what happened without it.

CVE-2026-25253 dropped with a CVSS score of 8.8 — a one-click remote code execution vulnerability in OpenClaw. The Particula security audit found that 20% of skills in the ClawHub marketplace were malicious — over 800 packages designed to exfiltrate data, escalate privileges, or establish persistence. Over 30,000 OpenClaw instances were exposed to the internet without authentication. Palo Alto Networks called it “the potential biggest insider threat of 2026.”

This is what happens when 250,000 developers adopt a tool faster than security can keep pace. OpenClaw isn’t a bad project — it’s a genuinely impressive piece of engineering that validated massive demand for personal AI agents. But it’s a case study in what I described in OpenClaw and the Trust Ladder: demand validation meets skipped governance. The market proved the demand. The security crisis proved the consequences of fulfilling that demand without infrastructure.

NemoClaw is NVIDIA’s answer to that crisis. The sandbox addresses the technical security gap — process isolation, filesystem confinement, network control. It’s a real solution to a real problem. But it’s a solution to the problem that was already visible. The problems that sink AI deployments in regulated environments are the ones you can’t see from a kernel trace.

The Five Layers of Agent Governance

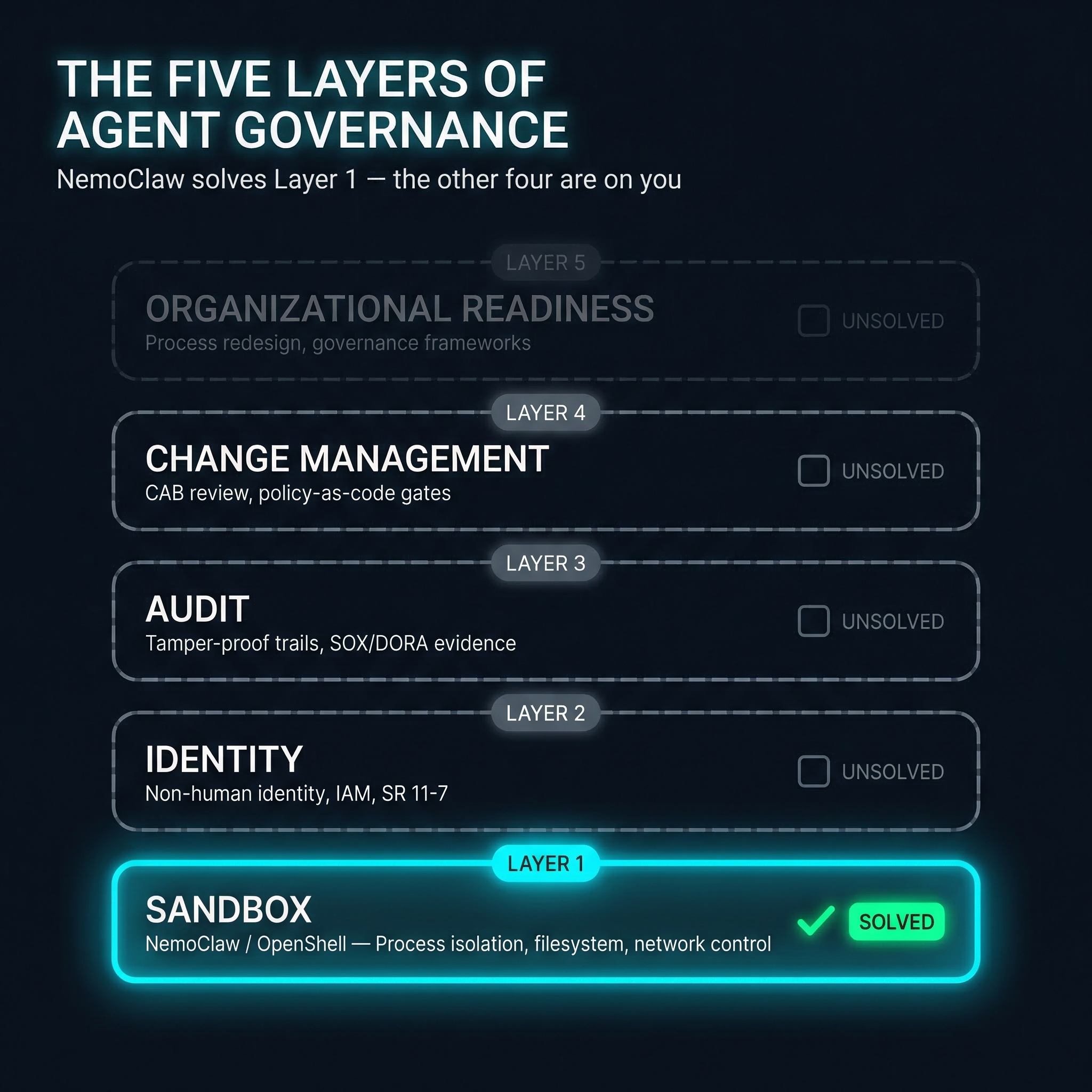

Here’s a framework for thinking about what “governed agent in production” actually requires. NemoClaw solves Layer 1. The other four are unsolved.

Layer 1: Sandbox — Process isolation, filesystem confinement, network control, inference routing. What NemoClaw and OpenShell deliver. Deny-by-default execution boundaries. This layer answers: what can the agent technically do? Status: solved.

Layer 2: Identity — Non-human identity management. Who is this agent acting as? What credentials does it hold? How does its identity map to your existing IAM framework? SR 11-7 model risk classification for agents making consequential decisions. This layer answers: who authorized this agent to act? Status: unsolved. Most organizations don’t have a non-human identity strategy, let alone one that covers AI agents.
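One way to make "who authorized this agent to act?" answerable is to give each agent its own non-human identity record that names an accountable human sponsor, carries narrowly scoped permissions, and expires. This is a hypothetical data model, not tied to any IAM product; the field names are illustrative, and mapping them onto an existing IAM framework or an SR 11-7 risk classification is left as an assumption.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str           # unique non-human principal, never a shared human login
    sponsor: str            # accountable human who authorized the agent
    scopes: frozenset[str]  # narrowly scoped permissions, e.g. "tickets:read"
    risk_tier: str          # hypothetical label from a model-risk classification
    expires_at: datetime

def authorize(identity: AgentIdentity, scope: str, now: datetime) -> tuple[bool, str]:
    """Return (decision, reason) so the reason can be written to an audit trail."""
    if now >= identity.expires_at:
        return False, f"{identity.agent_id}: credential expired"
    if scope not in identity.scopes:
        return False, f"{identity.agent_id}: scope {scope!r} not granted by {identity.sponsor}"
    return True, f"{identity.agent_id}: {scope!r} granted by {identity.sponsor}"

now = datetime(2026, 3, 20, 9, 0, tzinfo=timezone.utc)
triage_bot = AgentIdentity(
    agent_id="agent-ticket-triage",
    sponsor="jane.doe",
    scopes=frozenset({"tickets:read", "tickets:comment"}),
    risk_tier="low",
    expires_at=now + timedelta(hours=8),
)
print(authorize(triage_bot, "payments:write", now))
# (False, "agent-ticket-triage: scope 'payments:write' not granted by jane.doe")
```

Returning a reason alongside the decision is a deliberate choice: the identity check becomes the first link in the chain of authorization that compliance will ask about later.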

Layer 3: Audit — Tamper-proof trails where every action is traceable to an authorized decision. Not just logging what the sandbox blocked — logging what it allowed and why. The kind of evidence that satisfies examiners, not just engineers. This layer answers: can we prove what happened and why? Status: unsolved. OpenShell logs policy violations, but regulated environments need proof of what agents did within their allowed scope.
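A common building block for this layer is a hash-chained, append-only log. Each entry commits to the hash of the previous one, so editing or deleting a past entry breaks verification from that point forward. Strictly, this makes the trail tamper-evident rather than tamper-proof; anchoring the latest hash somewhere the agent cannot write is what turns it into evidence. The sketch records allowed actions together with the decision that authorized them. Its field names are hypothetical, and a production version would also add timestamps and signatures.

```python
import hashlib
import json

GENESIS = "0" * 64

def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON so the same record always produces the same hash.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

class AuditLog:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent_id: str, action: str, authorized_by: str, reason: str) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {
            "agent_id": agent_id,
            "action": action,
            "authorized_by": authorized_by,  # the decision that permitted the action
            "reason": reason,
        }
        entry_hash = _digest(prev_hash, record)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        # Recompute every link; any edited, reordered, or removed entry breaks the chain.
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or _digest(prev_hash, entry["record"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.append("agent-ticket-triage", "tickets:comment #4521", "jane.doe", "routine triage")
log.append("agent-ticket-triage", "tickets:close #4521", "jane.doe", "customer confirmed fix")
print(log.verify())  # True
log.entries[0]["record"]["action"] = "tickets:delete #4521"  # simulated tampering
print(log.verify())  # False
```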

Layer 4: Change Management — Agent actions flowing through change advisory board review. Policy-as-code gates that require approval before an agent modifies production systems. Rollback documentation. Risk scoring for agent-proposed changes. This layer answers: did a human approve this change before it happened? Status: unsolved. OpenShell’s operator approval pattern is a break-glass mechanism, not a CAB workflow.
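A policy-as-code gate for agent-proposed changes can be sketched as a function that refuses anything touching production unless a rollback plan is documented and a named human has approved it, with a risk score deciding when that review applies. The weights, thresholds, and field names are invented for illustration; a real deployment would take them from the change advisory board's own policy rather than hard-coding them.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ProposedChange:
    agent_id: str
    target_env: str                 # "dev", "staging", or "prod"
    description: str
    touches_customer_data: bool
    rollback_plan: Optional[str] = None
    approved_by: Optional[str] = None

def risk_score(change: ProposedChange) -> int:
    # Hypothetical weights; unknown environments are scored like prod.
    score = {"dev": 0, "staging": 1, "prod": 3}.get(change.target_env, 3)
    if change.touches_customer_data:
        score += 2
    return score

def gate(change: ProposedChange) -> tuple[bool, str]:
    score = risk_score(change)
    if change.target_env == "prod" or score >= 3:
        if not change.rollback_plan:
            return False, f"blocked: risk {score}, no rollback plan documented"
        if not change.approved_by:
            return False, f"blocked: risk {score}, awaiting human approval"
        return True, f"approved by {change.approved_by} at risk {score}"
    return True, f"auto-approved at risk {score}"

change = ProposedChange("agent-infra", "prod", "raise connection pool size", False,
                        rollback_plan="revert config commit")
print(gate(change))  # (False, 'blocked: risk 3, awaiting human approval')
change.approved_by = "j.smith"
print(gate(change))  # (True, 'approved by j.smith at risk 3')
```

The key property is ordering: the approval check runs before execution, which is what distinguishes this from a break-glass override applied after the fact.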

Layer 5: Organizational Readiness — The 70% from Sol Rashidi’s data. Process redesign. Training programs for teams that will work alongside agents. Governance frameworks that bridge the gap between “we have a sandbox” and “we have a governed deployment.” Cultural change. This layer answers: can the organization absorb autonomous agents? Status: unsolved. And if the data from Automating Chaos holds, this is where most failures actually live.

The Trust Ladder maps to these layers directly. The sandbox enables Level 1-2 trust: observe and advise, human decides. Organizational readiness enables Levels 3-5, the graduated autonomy that lets agents actually operate in production. The validation hooks that enable climbing the ladder must be agent-agnostic, because the governance infrastructure can't be welded to one vendor's runtime. And the policy-as-code bridge between AI-generated changes and production deployment applies to agent actions just as it applies to Terraform plans.
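The requirement that validation hooks stay agent-agnostic can be expressed as a small interface. Each runtime gets an adapter that translates its native events into one common action shape, and a single trust-level check runs on that shape no matter which agent produced it. Everything here is hypothetical, including the adapter and its event format. Only the rule that Levels 1-2 mean "the agent advises, a human decides" comes from the Trust Ladder; the rule for higher levels is a placeholder assumption.

```python
from dataclasses import dataclass
from typing import Protocol

@dataclass(frozen=True)
class Action:
    agent_id: str
    kind: str    # e.g. "shell", "http", "file_write"
    target: str

class AgentAdapter(Protocol):
    # Each runtime (Claude Code, Codex, OpenClaw, ...) would supply one of these.
    def to_action(self, native_event: dict) -> Action: ...

class ExampleAdapter:
    """Hypothetical adapter for a runtime whose events look like {"tool": ..., "arg": ...}."""
    def __init__(self, agent_id: str) -> None:
        self.agent_id = agent_id

    def to_action(self, native_event: dict) -> Action:
        return Action(self.agent_id, native_event["tool"], native_event["arg"])

def decide(action: Action, trust_level: int, autonomous_kinds: set[str]) -> str:
    # Levels 1-2: observe and advise; every action becomes a proposal for a human.
    if trust_level <= 2:
        return "propose"
    # Levels 3-5: placeholder for graduated autonomy (assumed, not specified by the text).
    return "execute" if action.kind in autonomous_kinds else "propose"

def validate(adapter: AgentAdapter, native_event: dict,
             trust_level: int, autonomous_kinds: set[str]) -> str:
    return decide(adapter.to_action(native_event), trust_level, autonomous_kinds)

adapter = ExampleAdapter("agent-x")
event = {"tool": "file_write", "arg": "/workspace/notes.txt"}
print(validate(adapter, event, 2, {"file_write"}))  # propose
print(validate(adapter, event, 3, {"file_write"}))  # execute
```

Swapping runtimes means writing a new adapter; the governance logic in `decide` does not change.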

Where the Engineering Stops and the Org Chart Starts

I genuinely want to deploy this. The engineering is right. The architecture decisions are sound. Out-of-process enforcement, declarative policies, deny-by-default — this is how you’d design it if you started from first principles. As a developer, I look at OpenShell and think: finally, someone built the security layer properly.

But I work in banking, and I know what happens next. You walk into the compliance review with a beautiful sandbox architecture and the first question is: “Where are the audit trails?” Not “how does Landlock work?” — nobody in that room cares. They want to know what the agent did, who approved it, and whether you can prove that chain of authorization six months from now when the examiners show up. The sandbox proves the agent couldn’t break out. It doesn’t prove what it did inside the walls.

Then the operational resilience questions start. If an agent hallucinates a trade, who gets paged? What’s the escalation path? What’s the recovery time objective? If your agent calls a cloud inference API, that’s a third-party dependency — and regulators want to know you’ve assessed the risk of that dependency going down during market hours. These aren’t unreasonable questions. They’re the same questions we answer for every other production system. AI agents don’t get a pass just because the technology is exciting.

This is the same governance gap I described with the Architect’s Dilemma — impressive technology on both sides, no unified governance in the middle. The technology is ready. The organizational scaffolding around it isn’t. And that gap is where deployments stall — not because the sandbox failed, but because nobody built Layers 2 through 5.

The Privacy Router Is the Sleeper Feature

If I had to bet on which part of NemoClaw matters most for enterprise adoption, it’s not the sandbox. It’s the privacy router.

Every AI agent proposal I’ve seen stall in a regulated environment hit the same question: “Where does the data go?” Not “is the model accurate?” Not “can we sandbox it?” — where does customer data end up when the agent calls an inference API?

If you’re already inside a cloud provider’s enterprise boundary — say, an Azure Enterprise Subscription running AI Foundry — the answer is straightforward. Your data stays within your tenant. Your BAA and enterprise agreements cover the inference endpoints. The “where does the data go?” question is already answered by your existing cloud architecture. For organizations in that position, the privacy router is less about solving a new problem and more about adding defense-in-depth to a problem you’ve already addressed at the platform level.

But not every agent call stays inside your tenant boundary. OpenClaw agents can invoke arbitrary skills, call external APIs, and chain together tools that reach outside your cloud perimeter. That's where OpenShell's privacy router earns its keep. It's model-agnostic, working the same whether your agents call Claude, GPT, Nemotron, or anything else. It strips PII from prompts before they reach any inference endpoint, tokenizes sensitive fields, and enforces policy-based routing decisions. The router doesn't care which model answers; it cares what data leaves your perimeter. As an engineer, I want to build on this kind of infrastructure: it solves the problem at the right layer without forcing you into a specific model ecosystem.
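The three router behaviors (stripping PII, tokenizing sensitive fields, routing by policy) can be sketched in a few lines. This is not OpenShell's implementation: the regex detectors are deliberately simplistic stand-ins for real PII detection, the token format is invented, and the endpoint names and routing rule are hypothetical. The design point it illustrates is reversible tokenization, where the vault mapping tokens back to real values never leaves the perimeter.

```python
import re
import secrets

# Deliberately simplistic detectors; production PII detection needs far more.
DETECTORS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ACCOUNT": re.compile(r"\b\d{10,16}\b"),  # bare account- or card-like numbers
}

def tokenize(prompt: str, vault: dict[str, str]) -> tuple[str, bool]:
    """Replace detected values with opaque tokens; the vault stays inside the perimeter."""
    found = False
    for label, pattern in DETECTORS.items():
        def _swap(match: re.Match) -> str:
            nonlocal found
            found = True
            token = f"<{label}_{secrets.token_hex(4)}>"
            vault[token] = match.group(0)
            return token
        prompt = pattern.sub(_swap, prompt)
    return prompt, found

def route(prompt: str, vault: dict[str, str]) -> tuple[str, str]:
    """Hypothetical policy: prompts that contained PII go to an in-perimeter endpoint."""
    clean, had_pii = tokenize(prompt, vault)
    endpoint = "local-inference" if had_pii else "cloud-inference"
    return endpoint, clean

def detokenize(text: str, vault: dict[str, str]) -> str:
    # Restore real values in the model's response, inside the perimeter only.
    for token, value in vault.items():
        text = text.replace(token, value)
    return text

vault: dict[str, str] = {}
endpoint, clean = route("Refund account 1234567890 for jane.doe@bank.example.com", vault)
print(endpoint)  # local-inference
```

Whether a tokenized prompt should still be kept in-perimeter is a policy choice; treating it that way is defense in depth against detectors that miss something.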

The realistic value for most of us isn’t replacing our existing cloud inference with local Nemotron — it’s catching the edge cases. The agent query that unexpectedly reaches a third-party API. The skill that phones home to an endpoint outside your tenant. The chained tool call that routes customer context through a service you haven’t vetted. A privacy router at the sandbox boundary catches what your cloud enterprise agreement doesn’t cover.

The on-premises Nemotron path exists for organizations that want everything local — NVIDIA’s NIM microservices make that possible, and the Palantir-NVIDIA sovereign AI architecture is the high-end version. But for most enterprises already invested in a cloud provider, the privacy router’s value is as a safety net for data flows that escape your existing controls, not as a replacement for them.

What I Don’t Have Figured Out Yet

I haven’t run OpenShell in a bank yet — but I want to. The engineering decisions are sound enough that I’d advocate for a proof of concept. This entire analysis is my assessment of the gap between what NemoClaw provides and what regulated environments require — informed by years of building banking infrastructure, but not field-tested against a specific deployment. The five-layer framework is a model I’m proposing, not something I’ve validated through implementation. The layers might be wrong. There might be six. There might be three that matter and two that don’t.

Jensen’s mandate — “every company needs an OpenClaw strategy” — assumes organizations can absorb autonomous agents. Most can’t absorb a ChatGPT usage policy. The gap between Jensen’s vision and organizational reality is the same gap Sol Rashidi catalogs with her 74% stall rate at MVP stage. His prediction that “every SaaS company will become an Agent-as-a-Service company” is probably directionally correct, but the timeline is aspirational for any industry with a compliance function. Regulated industries measure adoption in years, not quarters.

And there’s a question I keep circling back to: is NVIDIA’s real play the sandbox, or the gravitational pull toward Nemotron? The CUDA analogy keeps surfacing: own the infrastructure layer, make it indispensable, win the platform. OpenShell is Apache 2.0 and model-agnostic, which looks genuinely open. The privacy router works with any model. But NemoClaw, the opinionated distribution, defaults to Nemotron inference through NVIDIA’s cloud. Most enterprises will start with cloud frontier models because that’s what they already use. The question is whether the NemoClaw on-ramp gradually shifts inference toward NVIDIA’s stack. The lock-in isn’t contractual; it’s gravitational. It’s the Red Hat playbook: the platform is free, and the convenient distribution is where the revenue flows.

As I wrote in Automating Chaos, Jensen’s mandate without organizational readiness is automating chaos with better marketing. The sandbox doesn’t change the underlying dynamic.

The Layer That Matters Most

Jensen told every CEO to have an OpenClaw strategy. He’s right — the agent era is here, and the demand signal from 250,000 developers isn’t ambiguous. NVIDIA built the sandbox, and the sandbox is good. OpenShell’s kernel-level isolation is real engineering that addresses a real security crisis. NemoClaw makes it deployable. The privacy router solves the data residency problem that actually kills deployments. As an engineer, I’m genuinely excited about what this enables.

But the sandbox is Layer 1 of five. Identity, audit, change management, organizational readiness — those are on us. And if Sol Rashidi’s data holds, those four layers are where 70% of the failure happens. The good news is that Layer 1 used to be unsolved too, and NVIDIA just solved it with genuinely good engineering. The path forward is building the other four with the same rigor. That’s the work worth doing.

The NemoClaw repository and OpenShell are both Apache 2.0. If you’re evaluating agent infrastructure, start there. If the five-layer governance framework resonated, the Trust Ladder provides a model for calibrating agent autonomy — and Automating Chaos explores why the organizational layer is where most deployments actually fail.