Claude Buddy Stopped Being a Tool and Became an Extension

Four months ago I wrote that Claude Buddy was optimized for accountability, not learning. That sentence became the design brief for v5.

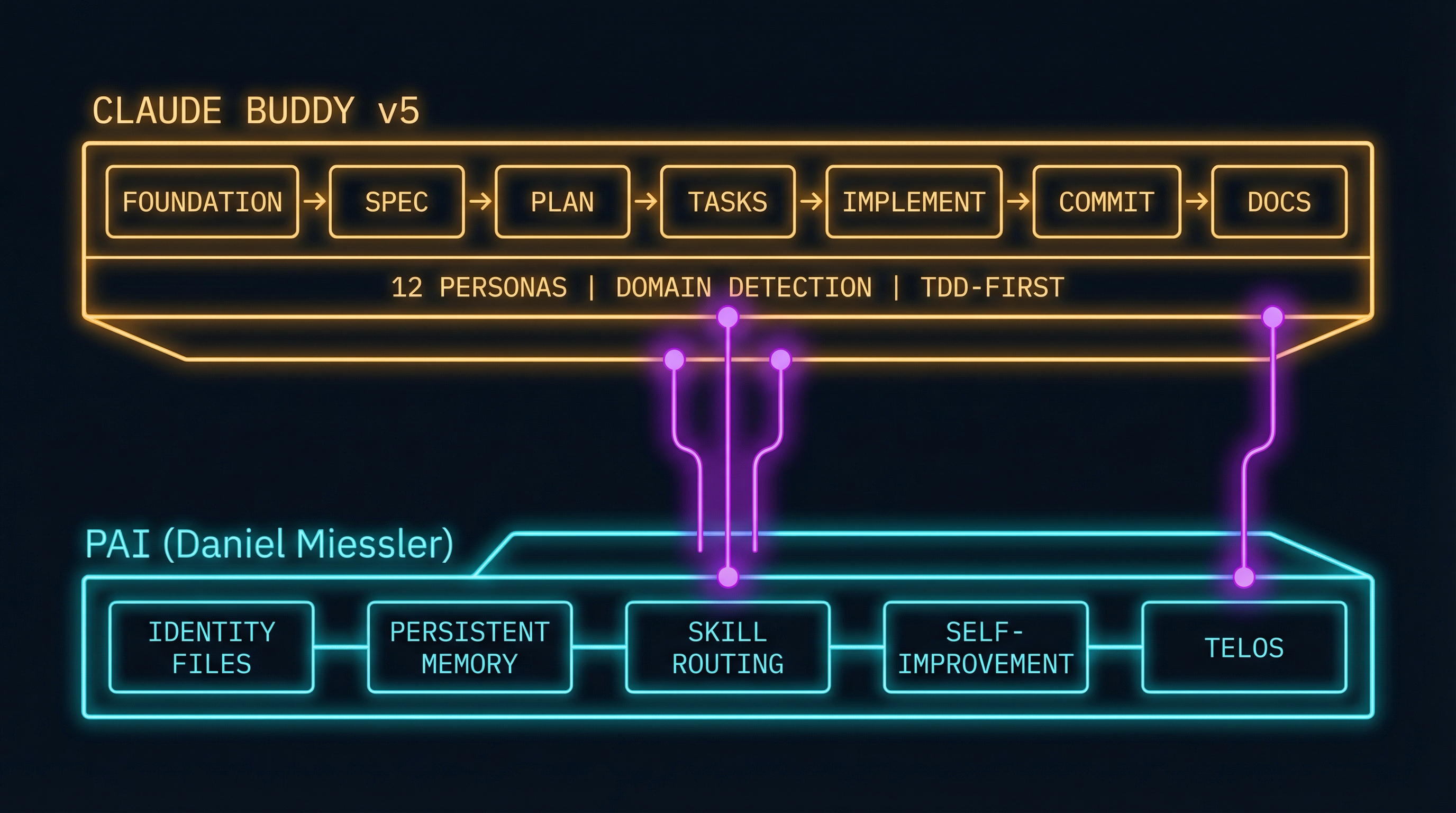

The original post mapped Claude Buddy’s workflow against Daniel Miessler’s Personal AI Infrastructure (PAI) and found a clean alignment across six of seven phases. Specification mapped to Observe and Think. Planning mapped directly. Tasks and Implementation mapped to Build and Execute. The QA persona covered Verify. But the seventh phase — Learn — had no counterpart. Claude Buddy captured audit trails. It didn’t capture insights.

I ended that post saying I was experimenting with learning hooks. Four months later, the experiment is done. Claude Buddy v5 doesn’t add learning hooks. It adds PAI as a dependency and inherits learning as infrastructure. The tool became an extension. Here’s what that means and why it matters.

The Gap That Wouldn’t Close

I ran two systems in parallel for months. On one terminal, PAI powered my personal workflow — persistent memory, identity files, a structured algorithm for task execution, and a self-improvement loop that captured what worked and what didn’t across every session. On another terminal, Claude Buddy powered development work — structured specifications, implementation plans, persona-guided coding, git workflows.

Both systems were good at what they did. Neither was complete.

Claude Buddy v4 had twelve specialist personas, seven focused skills, domain auto-detection, and a foundation document system that defined project principles and constraints. Every session produced documented specifications, auditable decisions, and clean commits. But every session also started cold. The security persona didn’t remember the vulnerability pattern it flagged last week. The architect persona didn’t know which design approach failed in the previous session. The foundation doc was static — it defined constraints, but it didn’t evolve with what the team learned.

PAI had the opposite profile. Daniel Miessler designed it with learning as a first-class concern — not a nice-to-have, but a core architectural decision. Identity files persist who you are. Memory persists what you’ve learned. Ratings and reflections persist what worked. The TELOS system connects daily work to longer-term goals. Every session builds on the last. But PAI is a general-purpose personal AI infrastructure. It doesn’t have opinions about how software should be specified, planned, or tested.

Three days ago I wrote about the stack nobody designed — how builder after builder independently converged on the same three-pillar architecture: memory, skills, and heartbeat. Claude Buddy had skills. It didn’t have memory or heartbeat. PAI had all three. The obvious architectural move was to stop rebuilding what Daniel Miessler already solved and start extending it.

Depend, Don’t Duplicate

The core design decision in v5 was simple: make PAI a dependency instead of reimplementing its capabilities.

This isn’t a loose integration or an optional plugin. Claude Buddy v5 ships with a PAI setup plugin that handles installation, version detection, and configuration. When you install Claude Buddy, you get PAI. The buddy plugin then extends PAI with development workflow capabilities — the skills, personas, and domain detection that Claude Buddy is known for.

The separation is clean. PAI lives at ~/.claude/ and owns the infrastructure layer:

- Identity files — ABOUTME, AI steering rules, opinions, writing style, assistant identity.

- Persistent memory — Learnings, feedback, project context that accumulates across sessions.

- Skill routing — Intelligent capability selection based on the current request.

- Self-improvement — Ratings, reflections, and learning signals feeding a continuous improvement cycle.

- TELOS — Deep goal documentation connecting daily tasks to life and career objectives.

Claude Buddy lives at ~/.buddy/ and owns the development workflow layer:

- Seven focused skills — Foundation, Specification, Planning, Tasks, Implementation, SourceControl, Documentation.

- Twelve specialist personas — Contextual expertise that activates at the right workflow stage.

- Domain auto-detection — Technology-specific templates for React, JHipster, MuleSoft, and custom domains.

- TDD-first task generation — Every task includes test requirements before implementation begins.

The storage separation matters. ~/.buddy/ is independent of ~/.claude/, so upgrades to either system never overwrite the other.

Why depend instead of duplicate? Because the convergent evolution argument from “The Stack Nobody Designed” applies to my own project. Every builder who tries to implement memory, identity, and learning from scratch is reimplementing what Daniel Miessler already designed, tested, and open-sourced. His core philosophy — “System > Intelligence” — means a well-designed system with average models outperforms brilliant models with poor architecture. Claude Buddy should be excellent at development workflows, not mediocre at everything. PAI handles the infrastructure. Claude Buddy handles the craft.

This is the same pattern that made Unix successful. Small tools that do one thing well, composed through clean interfaces. PAI is the operating system. Claude Buddy is the application.

What the Skills Actually Do

Claude Buddy’s seven skills form a pipeline from initial concept to published documentation. Each skill follows the Agent Skills open standard — the same format adopted by over 30 agent products including Cursor, VS Code, GitHub Copilot, and Gemini CLI. Composable, versionable, shareable.

Foundation defines project principles, patterns, and constraints — PAI’s identity files, but for the project instead of the person. It auto-detects your technology domain and loads domain-specific templates that shape every downstream skill.

Specification documents what you’re building and why, mapping to PAI’s Observe and Think phases. Requirements, constraints, acceptance criteria, and anti-requirements — what the feature explicitly should not do.

Planning designs the implementation approach. The Architect persona activates here, ensuring every decision aligns with the foundation’s constraints.

Tasks breaks the plan into executable, test-driven units — every task specifies what test to write before specifying what code to implement. Ordered by dependency, parallelizable where possible.

Implementation executes tasks with persona-guided expertise. Writing a React component? The Frontend persona brings accessibility patterns. Touching authentication? The Security persona reviews for vulnerabilities. The personas aren’t prompts — they’re structured skill files with domain knowledge and specific behavioral rules.

SourceControl generates conventional commit messages through the Scribe persona and integrates with existing branch and PR workflows.

Documentation generates technical docs from the artifacts produced during specification, planning, and implementation — reflecting what was actually built, not what was planned.

The twelve personas activate contextually across these skills:

| Group | Personas | When They Activate |

|---|---|---|

| Design | Architect, Product Owner | Specification, Planning |

| Build | Frontend, Backend, DevOps | Implementation |

| Quality | Security, QA, Performance | Implementation, Review |

| Improve | Refactorer, Analyzer | Debugging, Code Quality |

| Communicate | Mentor, Scribe | Documentation, Commits |

Each persona is a genuine specialist. The Security persona threat-models based on your project’s domain and stack. The QA persona designs test strategies covering the specific risk profile of your changes. And domain auto-detection is the multiplier — when Claude Buddy detects a JHipster project, every persona adjusts to JHipster’s layered architecture, Spring Boot conventions, and Angular Material patterns. Custom domains extend this with your own detection rules, workflows, and templates.

TDD-First Is Not Optional

This is an opinionated design decision and I’ll defend it.

Every task that Claude Buddy generates includes test requirements before implementation requirements. Red, green, refactor — in that order, every time. The AI writes the failing test first. The implementation makes the test pass. The QA persona verifies that the test actually validates the requirement and not just the implementation.

When I first built Claude Buddy, I described the core philosophy as “guided autonomy” — the AI should be incredibly capable, but it should operate within boundaries. TDD-first is the most concrete expression of that principle. The test is the boundary. It defines what “done” means before anyone writes a line of production code.

This matters more for AI-generated code than human-written code. AI coding agents are fast — they produce working code in seconds. But “working” and “correct” aren’t the same thing. A function can return the right value for test inputs while containing a subtle bug that surfaces in production. TDD-first catches this class of error because the test encodes the requirement, not the code.

For teams in regulated environments, there’s a secondary benefit: the test suite is the audit trail. Regulators can trace from business requirement to test case to production code. But I’d enforce TDD-first in a startup too. The audit trail is a side effect of good engineering practice. The primary benefit is fewer defects, caught earlier. That’s just better software.

Tasks also support parallel execution — independent tasks are explicitly flagged and can be distributed across sessions or developers while the test suite ensures integration.

What Learning Looks Like Now

This is the payoff.

The January post ended with three integration ideas: learning hooks, persona learning, and compliant UOCS. Here’s where each one landed.

Learning hooks shipped — but not the way I originally imagined. I was going to build a custom hook system that prompted for insights at session end. Instead, Claude Buddy inherits PAI’s native learning infrastructure. Daniel Miessler designed PAI with a multi-tier memory system — hot memory for the current session, warm memory for cross-session learnings, cold memory for long-term knowledge. When you rate a session, when you correct an approach, when something works unexpectedly well — PAI captures it. Claude Buddy sessions now feed into that same capture system automatically.

The practical difference is immediate. Last week, the Security persona flagged a dependency with a known vulnerability in a React project. That interaction was captured as a learning signal. Two days later, working on a different React project, the same dependency appeared — and the system already knew to flag it. Not because someone wrote a rule. Because the previous session’s learning persisted.

Persona learning partially shipped. The twelve personas don’t maintain their own isolated knowledge stores yet — that’s architecturally complex and raises questions about memory boundaries that I haven’t fully resolved. But they benefit from PAI’s persistent memory in a meaningful way. The Architect persona in session ten knows that a microservices approach was rejected in session three because the team size couldn’t support it. The Frontend persona knows that the team prefers composition over inheritance for React components because that feedback was captured five sessions ago. Context accumulates. Personas start warm, not cold.

Compliant UOCS remains an open design space. PAI’s Universal Output Capture System logs everything — session transcripts, decisions, learnings, research findings. In environments with data sensitivity requirements, “log everything” is a non-starter. I’ve sketched approaches for filtered capture — logging insights without logging content, capturing decision rationale without capturing the data that informed the decision — but I haven’t shipped it yet. This is genuinely hard to do right, and shipping a half-measure would be worse than shipping nothing. It’s the most interesting unsolved problem in the integration, and I expect it’ll be the focus of a future release.

The TELOS integration is the piece I didn’t anticipate when I wrote the original post. Daniel Miessler’s TELOS system captures deep goal documentation — mission, beliefs, strategies, challenges, projects. When Claude Buddy’s Foundation skill initializes a new project, it can connect project goals to TELOS goals. A refactoring initiative isn’t just “reduce technical debt” — it connects to a career goal of building maintainable systems, which connects to a belief about engineering craftsmanship. This sounds abstract until you experience it. The AI makes different decisions when it understands not just what you’re building, but why you’re building it in the context of what you’re trying to become.

The Convergence From the Inside

Three days ago I wrote about convergent evolution in personal AI infrastructure from the analyst’s chair. Today I have a data point from the builder’s chair.

Claude Buddy v5’s architecture maps directly onto the three-pillar pattern I described. Memory comes from PAI — persistent identity, accumulated learnings, cross-session context. Skills come from both layers — PAI’s general skill routing plus Claude Buddy’s seven development-specific skills and twelve personas. The heartbeat — proactive, scheduled automation — comes from PAI’s hooks system and its emerging capabilities through the Claude Agent SDK.

The decision to depend on PAI rather than reimplement its capabilities is itself a convergence signal. I needed memory, identity, and learning. Daniel Miessler had already solved memory, identity, and learning. Building my own version would have been architectural vanity. You don’t set out to validate a thesis — you make pragmatic engineering decisions, and when you step back, the architecture matches the pattern that a half-dozen independent builders discovered independently. The constraint space has an attractor.

The interesting question isn’t whether the three-pillar architecture is correct. The interesting question is what the fourth pillar looks like. In “The Stack Nobody Designed,” I flagged multi-agent coordination as the emerging pattern. Claude Buddy as a PAI extension is a building block toward that. When PAI instances can coordinate — and Daniel Miessler’s Lattice System sketches exactly this at organizational scale — Claude Buddy workflows could delegate across agents. An Architect persona on one machine. A Security persona on another. A QA persona running tests in a sandbox. Coordinated through standardized APIs broadcasting work specifications across teams.

That’s not built yet. It’s the frontier. But for the first time, Claude Buddy’s architecture doesn’t block it. When you’re an extension of a composable platform rather than a standalone monolith, composition is the default, not the exception.

What Ships Today

Claude Buddy v5 is available now on the Claude Buddy Marketplace. The installation handles PAI setup automatically — you don’t need to configure Daniel Miessler’s framework separately. The buddy plugin installs on top, and the complete workflow is available immediately: Foundation through Documentation, with persistent memory, learning, and identity from day one.

Four months ago I asked whether we’d build systems that actually get smarter over time, or just systems that document their mistakes really well. Claude Buddy v5 is the first version that does both. The accountability infrastructure that enterprise teams need — structured workflows, documented decisions, audit trails — now sits on a learning infrastructure that improves with every session. Governance and growth. Accountability and adaptability. The gap closed.

The narrative arc: Why I Built Claude Buddy → What PAI Taught Me About the Missing Piece → The Stack Nobody Designed → this post. The convergence thesis is here. The context engineering blueprint is here.

Find me on X or LinkedIn. I write about what happens when AI infrastructure meets regulated reality.