From Threads to Trust: A Framework for Measuring Your Agentic Engineering Growth

How do you know you’re actually improving at agentic engineering?

It’s a question I’ve been wrestling with. We’ve moved from writing code to directing AI that writes code, but the metrics haven’t caught up. Lines of code? Meaningless when Claude generates thousands in minutes. Features shipped? Doesn’t capture the nuance of how efficiently you’re leveraging your tools.

Two recent videos crystallized a framework that finally makes sense: IndyDevDan’s Thread-Based Engineering and Brian Casel’s “Claude Code Is All You Need”. Together, they offer both a measurement system and a counter-intuitive truth about simplification.

The Thread Mental Model

Andy Devdan (IndyDevDan) introduces a powerful abstraction: all agent-assisted work is threads.

A thread is a unit of engineering work where you, the human, show up at exactly two points:

- The Start: You provide the prompt or plan

- The End: You review and validate the output

Everything in between (the file reads, the code writes, the test runs) consists of tool calls performed by the agent.

[Human: Prompt] → [Agent: Tool Calls] → [Human: Review]

Here's the insight that reframes everything: tool calls roughly equal impact. Pre-2023, humans were the tool calls. We wrote the code, read the files, ran the commands. Now agents handle that middle portion. The metric isn't lines of code; it's the total number of tool calls your agents execute on your behalf.
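
To make the metric concrete, here is a minimal Python sketch. It assumes you already have some way to capture which tools each thread called (session transcripts or hooks, for example); that plumbing is left out. The `Thread` type and both helper functions are hypothetical, and tool names like `Read`, `Edit`, and `Bash` are only illustrative.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List


@dataclass
class Thread:
    """One unit of work: your prompt, the agent's tool calls, your review."""
    prompt: str
    tool_calls: List[str] = field(default_factory=list)  # e.g. "Read", "Edit", "Bash"


def total_tool_calls(threads: List[Thread]) -> int:
    """The thread-based productivity metric: tool calls executed on your behalf."""
    return sum(len(t.tool_calls) for t in threads)


def tool_call_mix(threads: List[Thread]) -> Counter:
    """How those tool calls break down by tool, across all threads."""
    return Counter(call for t in threads for call in t.tool_calls)


week = [
    Thread("Add input validation to the signup form", ["Read", "Read", "Edit", "Bash"]),
    Thread("Fix the flaky payment test", ["Read", "Bash", "Edit", "Edit", "Bash"]),
]
print(total_tool_calls(week))       # 9
print(tool_call_mix(week)["Edit"])  # 3
```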

The Thread Type Hierarchy

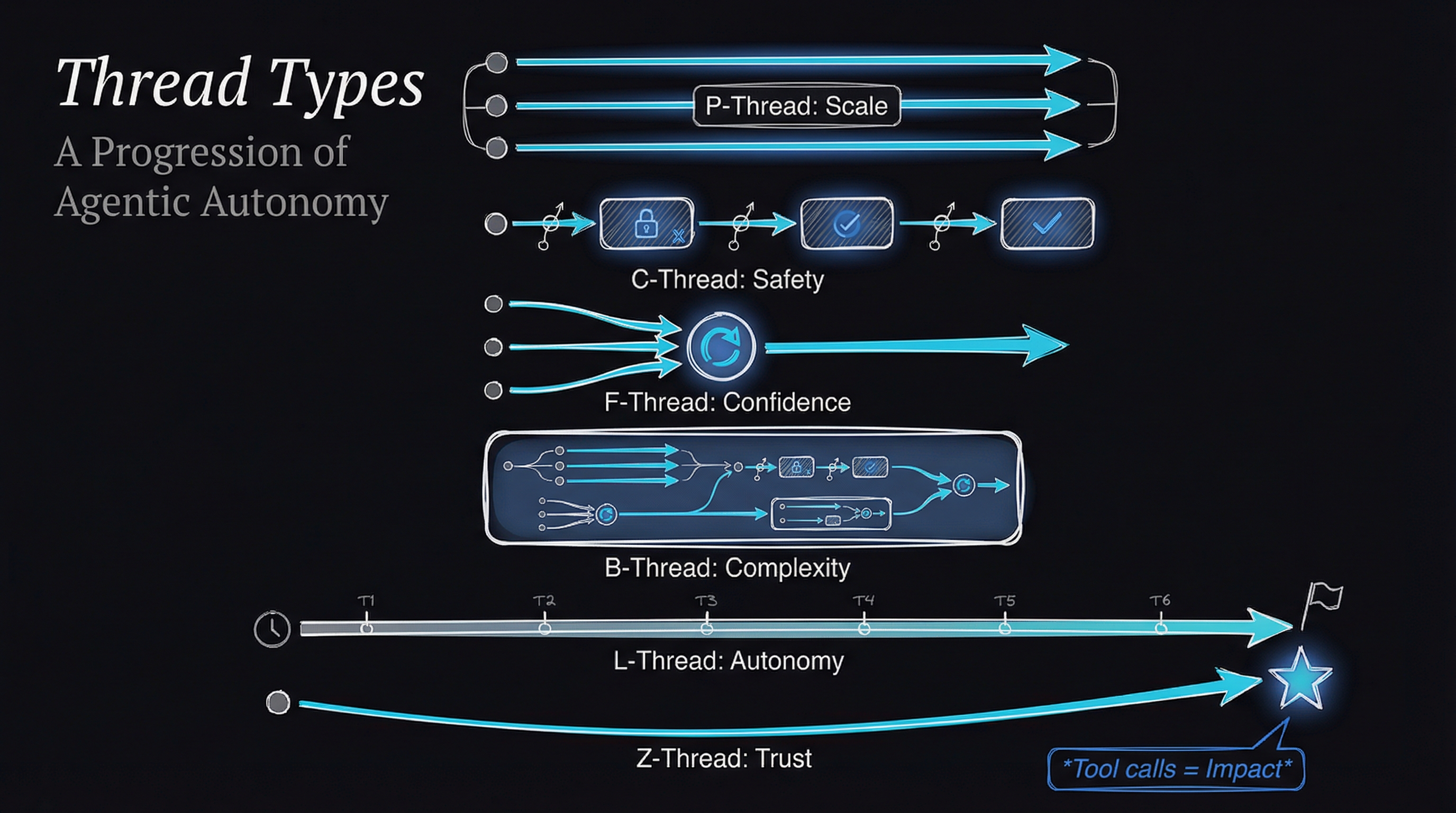

Not all threads are equal. Andy lays out a progression:

P-Threads (Parallel): Multiple agents running simultaneously. Boris Cherny, the creator of Claude Code, runs 5+ terminal instances plus 5-10 background web sessions. “Guess who’s getting more done? The engineer kicking off one agent or five?”

C-Threads (Chain): Sequential threads with human gates between phases. You sacrifice efficiency for safety—useful for production deployments or security-sensitive changes.

F-Threads (Fusion): Same prompt sent to multiple agents, results combined. Four agents reviewing the same code catch more issues than one. Confidence through redundancy.

B-Threads (Big): Nested threads where agents spawn agents. The orchestrator kicks off a planning agent, then a building agent, then review agents. You only see the outer shell.

L-Threads (Long): Extended-duration work, measured in hours instead of minutes and in thousands of tool calls. Boris has documented runs lasting more than a day of continuous execution.

Z-Threads (Zero): The endgame. Maximum trust where human review becomes unnecessary. As Andy puts it: “It isn’t that we don’t look at the code, it’s that we know we don’t have to.”
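
P-Threads and F-Threads differ mainly in what you fan out: different prompts versus the same prompt. A minimal Python sketch, assuming each agent is a plain function that takes a prompt and returns the set of issues it found (a hypothetical stand-in for launching a real agent session, which would mostly be I/O-bound waiting, so threads are adequate). Fusion here is a simple vote count, one of several reasonable ways to combine results.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Set

# Hypothetical stand-in for one agent session: prompt in, reported issues out.
Agent = Callable[[str], Set[str]]


def p_thread(agents: List[Agent], prompts: List[str]) -> List[Set[str]]:
    """P-Thread: a different prompt per agent, all running at the same time."""
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        return list(pool.map(lambda agent, prompt: agent(prompt), agents, prompts))


def f_thread(agents: List[Agent], prompt: str) -> Counter:
    """F-Thread: the same prompt to every agent, results fused by vote count."""
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        results = list(pool.map(lambda agent: agent(prompt), agents))
    # The union of keys catches more issues than any single agent;
    # each count says how many agents independently reported that issue.
    return Counter(issue for found in results for issue in found)
```

Filtering the fused result to issues with a count of two or more is one way to trade recall for confidence.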

The Four Ways to Improve

This framework gives us concrete improvement vectors:

- More Threads — Run additional parallel agents

- Longer Threads — Increase duration before review

- Thicker Threads — Add nested sub-threads

- Fewer Checkpoints — Reduce human intervention

If you want to scale your impact, you must scale your compute. It’s that simple.
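
One way to track the four vectors over time is to log a few numbers per thread and compare periods. The sketch below assumes a hypothetical `ThreadRecord` you fill in yourself; the field names and the choice of averages are illustrative, not part of Andy's framework.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ThreadRecord:
    """Hypothetical log entry for one thread you ran."""
    tool_calls: int   # agent actions between your prompt and your review
    minutes: float    # how long the thread ran before you reviewed it
    sub_threads: int  # nested agents it spawned (0 = flat thread)
    checkpoints: int  # times you had to step in mid-thread


def growth_snapshot(threads: List[ThreadRecord]) -> Dict[str, float]:
    """Summarize one period (e.g. a week) on the four improvement vectors."""
    n = len(threads)
    if n == 0:
        return {"threads": 0, "avg_minutes": 0.0, "avg_sub_threads": 0.0,
                "checkpoints_per_thread": 0.0, "tool_calls": 0}
    return {
        "threads": n,                                                       # more
        "avg_minutes": sum(t.minutes for t in threads) / n,                 # longer
        "avg_sub_threads": sum(t.sub_threads for t in threads) / n,         # thicker
        "checkpoints_per_thread": sum(t.checkpoints for t in threads) / n,  # fewer
        "tool_calls": sum(t.tool_calls for t in threads),
    }


last_week = growth_snapshot([ThreadRecord(40, 10, 0, 2)])
this_week = growth_snapshot([ThreadRecord(120, 45, 2, 1), ThreadRecord(80, 30, 1, 0)])
```

Comparing `last_week` and `this_week` key by key shows which vector actually moved, rather than relying on a general sense of being more productive.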

The Core Four Framework

Andy distills agentic engineering to four elements:

- Context: Information available to the agent

- Model: The AI model you’re using

- Prompt: Instructions given

- Tools: Capabilities available

“If you understand these four, you understand agents and therefore agentic engineering.”

Better context enables longer threads. Better models enable more autonomy. Better prompts yield better results. Better tools unlock more capabilities. Every improvement technique maps back to optimizing one of these four.

The Simplification Counter-Movement

Here’s where Brian Casel throws a curveball.

Brian created Agent OS, one of the most popular frameworks for structured AI development. He built the spec-driven development methodology, the standards-as-code approach, the elaborate planning workflows. And his message in 2026?

“Pure vanilla Claude Code with Opus 4.5 is all I actually need for 90% of my daily work.”

Wait, what?

The frameworks of 2025 (Agent OS, custom Cursor rules, MCP servers, elaborate prompt swipe files) solved real gaps in early AI tools. Those gaps largely no longer exist. Claude Code now has native plan mode, clarifying questions, sub-agent orchestration, skills, and task tracking. The features we built externally got absorbed into the core product.

Brian's 90/10 rule: 90% of work needs only vanilla Claude Code. Only the remaining 10% (greenfield projects without an existing codebase, legacy systems with established conventions, and genuine friction points) benefits from additional structure.

The New Bottleneck

This leads to perhaps the most counter-intuitive insight:

“The bottleneck isn’t writing code anymore. It’s knowing what to build and how to structure it.”

Models can implement any pattern we describe. They can execute complex multi-file changes, maintain context across long sessions, explore codebases and analyze architecture. What they can’t do:

- Choose which pattern is right

- Understand your users

- Make strategic calls about what to build

- Distinguish what customers say they want from what they actually need

- Apply product thinking and taste

Your years of experience aren’t obsolete—they’re your multiplier. Channel them into scope decisions, architecture choices, user understanding, and strategic prioritization. That’s the craft now.

Practical Implementation

So how do you actually improve? Andy offers concrete techniques:

Stop Hooks: Deterministic validation before the agent completes. When the agent tries to stop, a hook runs and validates the work; if validation fails, the agent keeps working, otherwise it completes. This enables L-threads without blind trust.
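
A minimal Python sketch of a stop hook, assuming Claude Code's Stop-hook contract as I understand it from the hooks documentation: the hook receives JSON on stdin (including a `stop_hook_active` flag), and printing `{"decision": "block", "reason": ...}` tells the agent to keep working, with `reason` as its guidance. Verify that contract against the current docs before relying on it. Running `pytest -q` as the validation step is an assumption; substitute whatever deterministic check your project has.

```python
import json
import subprocess
import sys
from typing import Callable, Optional, Tuple


def run_tests() -> Tuple[bool, str]:
    """Deterministic validation. Assumption: the project's tests run with `pytest -q`."""
    proc = subprocess.run(["pytest", "-q"], capture_output=True, text=True)
    # Keep only the tail of the output so the feedback stays short.
    return proc.returncode == 0, proc.stdout[-2000:]


def decide(hook_input: dict,
           check: Callable[[], Tuple[bool, str]] = run_tests) -> Optional[dict]:
    """Return a 'block' decision when validation fails, or None to let the agent stop."""
    # Loop guard: if this stop already follows a forced continuation, let it through.
    if hook_input.get("stop_hook_active"):
        return None
    passed, output = check()
    if passed:
        return None
    return {
        "decision": "block",
        "reason": f"Validation failed. Fix these issues before finishing:\n{output}",
    }


def main() -> None:
    """Entry point when the script runs as the Stop hook command."""
    decision = decide(json.load(sys.stdin))
    if decision is not None:
        print(json.dumps(decision))
```

To use it, call `main()` at the bottom of the file and register the script as a command under the `Stop` hook event in your Claude Code settings. The `stop_hook_active` guard is the simplest way to avoid an endless stop/continue cycle, at the cost of allowing only one forced retry per stop.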

The Ralph Wiggum Pattern: Run agents in a loop, driven by code, for a specific piece of work. Agents plus code outperform agents alone, so build systems that orchestrate agents rather than prompting them by hand.
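
The core of the pattern fits in a few lines. A minimal Python sketch, assuming `run_agent` wraps one fresh, non-interactive agent session (for instance a subprocess call to Claude Code's `claude -p` print mode) and `is_done` is an ordinary deterministic check such as "no unchecked items left in the TODO file". Both are hypothetical parameters, and the iteration cap is an added safety assumption.

```python
from typing import Callable


def ralph_loop(run_agent: Callable[[str], str], prompt: str,
               is_done: Callable[[], bool], max_iterations: int = 20) -> int:
    """Re-run a fresh agent on the same prompt until plain code says the work is done.

    Returns the number of iterations it took.
    """
    for i in range(1, max_iterations + 1):
        run_agent(prompt)  # fresh session each time; progress lives on disk, not in context
        if is_done():      # the stopping decision belongs to code, not to the agent
            return i
    raise RuntimeError(f"not done after {max_iterations} iterations")
```

Keeping the prompt fixed and each session fresh means no single context window has to hold the whole job; the file system carries the state between iterations.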

Closed Loop Prompts: Self-correcting systems with strategic feedback loops. The agent validates its own work before completing.
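
A closed loop differs from simply re-running an agent: the validator's findings are fed back into the next prompt. A minimal Python sketch, assuming `generate` is a hypothetical wrapper around one agent call and `validate` returns `None` on success or an error message on failure. The round limit and the compile-based validator are illustrative choices.

```python
from typing import Callable, Optional


def closed_loop(generate: Callable[[str], str],
                validate: Callable[[str], Optional[str]],
                task: str, max_rounds: int = 3) -> str:
    """Generate, validate, and feed any failure back into the next prompt."""
    prompt = task
    error: Optional[str] = None
    for _ in range(max_rounds):
        output = generate(prompt)
        error = validate(output)
        if error is None:
            return output
        # Close the loop: the next attempt sees exactly what went wrong.
        prompt = f"{task}\n\nYour previous attempt failed validation:\n{error}\nFix it."
    raise RuntimeError(f"still failing after {max_rounds} rounds: {error}")


def syntax_check(code: str) -> Optional[str]:
    """Example validator: generated Python must at least compile."""
    try:
        compile(code, "<agent-output>", "exec")
        return None
    except SyntaxError as exc:
        return str(exc)
```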

Agent Experts Pattern: Act → Learn → Reuse. Agents retain knowledge across sessions, getting smarter about your specific codebase over time.
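
One simple way to get the Learn and Reuse steps is a per-codebase memory file that the agent appends to and that gets prepended to future prompts. A minimal Python sketch, assuming a JSON file of plain-text notes; the `ExpertMemory` class is hypothetical, and in Claude Code a `CLAUDE.md` file plays a broadly similar role.

```python
import json
from pathlib import Path
from typing import List


class ExpertMemory:
    """Hypothetical per-codebase memory: notes persist in a JSON file across sessions."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.notes: List[str] = json.loads(path.read_text()) if path.exists() else []

    def learn(self, note: str) -> None:
        """Learn: save something discovered while acting, skipping duplicates."""
        if note not in self.notes:
            self.notes.append(note)
            self.path.write_text(json.dumps(self.notes, indent=2))

    def build_prompt(self, task: str) -> str:
        """Reuse: put accumulated knowledge in front of the next task."""
        if not self.notes:
            return task
        lessons = "\n".join(f"- {note}" for note in self.notes)
        return f"What you already know about this codebase:\n{lessons}\n\nTask: {task}"
```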

What This Means for Claude Buddy

Mapping these insights to Claude Buddy, I see alignment and gaps.

Good alignment: Claude Buddy’s spec → plan → tasks → implement workflow is essentially a C-Thread. Sequential phases with human gates. Safety through structure.
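
A C-Thread can be sketched as a loop over phases with an approval gate after each. A minimal Python sketch, assuming hypothetical `run_phase` (one agent run per phase) and `approve` (the human gate) callables; the phase names mirror the workflow above, but this is not Claude Buddy's actual implementation.

```python
from typing import Callable, Dict

PHASES = ["spec", "plan", "tasks", "implement"]


def c_thread(run_phase: Callable[[str, str], str],
             approve: Callable[[str, str], bool],
             request: str) -> Dict[str, str]:
    """Run phases in order; a human gate must approve each output before the next."""
    approved: Dict[str, str] = {}
    previous = request
    for phase in PHASES:
        output = run_phase(phase, previous)  # agent does this phase's work
        if not approve(phase, output):       # human gate: halt the chain here
            break
        approved[phase] = output
        previous = output                    # approved output feeds the next phase
    return approved
```

Returning only the approved artifacts makes a rejected gate an ordinary outcome rather than an error, so the caller can see exactly how far the chain got.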

Gaps to address:

- No P-Thread support for parallel task execution

- No F-Thread fusion workflows for multi-agent review

- No L-Thread optimization (stop hooks, validation loops)

- No Z-Thread confidence scoring for graduated trust

The roadmap for Claude Buddy 5.0 is becoming clearer: thread-aware development workflows, confidence scoring, and validation systems that enable longer autonomous runs while maintaining the governance enterprise environments require.

Building Toward Z-Threads

Andy’s vision stuck with me:

“I want to build living software that works for us while we sleep.”

That’s the north star. Not vibe coding—careful, systematic trust-building. Specialized agents for specialized codebases. Robust validation systems. High-trust tooling. We’re not abandoning review; we’re building systems where we know we don’t have to review.

The measurement framework is clear: run more threads, longer threads, thicker threads, with fewer checkpoints. The simplification principle is clear: start with vanilla Claude Code, add complexity only when you feel genuine friction.

The question isn’t whether you’re using the right framework. It’s whether you’re running enough threads, long enough, deep enough, with the right balance of trust and verification.

Because tool calls are the new productivity metric. And the engineer running longer threads of useful work is outperforming the others.

Sources & Credits

This article synthesizes insights from:

- IndyDevDan (Andy Devdan) — Thread-Based Engineering framework, Core Four, thread type hierarchy

- Brian Casel — Agent OS creator, 90/10 simplification principle, spec-driven development

- Boris Cherny — Claude Code creator, vanilla setup insights, validation philosophy

- Andrej Karpathy — “I’ve never felt this behind as a programmer”

Working on thread-based workflows or building AI governance systems? I’d love to hear your approach. Find me on X or LinkedIn.