Python Won't Run Your Bank: The Language Stack Reckoning for Enterprise AI

Rod Johnson told a room full of enterprise developers to stop letting data scientists architect their AI strategy. He’s right — and the conversation he started deserves to go further.

The Air Canada Moment

An Air Canada chatbot hallucinated a refund policy that didn’t exist. A customer relied on it. A tribunal ruled the airline liable. One chatbot, one hallucinated answer, one legal precedent.

Every regulated enterprise is one hallucinated policy away from the same headline. And when the postmortem lands, nobody asks “what model did you use?” They ask: “What production discipline was around this thing? Who reviewed it? Where’s the audit trail?”

That’s the real question underneath “which language stack.” It’s not about syntax preferences. It’s about which ecosystem brings production discipline to AI — and which one optimizes for something else entirely. I wrote about this dynamic in the Trust Ladder — the Air Canada bot was operating at a trust level it hadn’t earned. The language stack question is a proxy for whether you have the infrastructure to calibrate that trust at all.

The Skills Gap Isn’t About Syntax

At the Advantage conference on March 4th, Johnson — creator of the Spring Framework and someone whose work shaped how a generation of us thinks about enterprise architecture — laid out a provocation: the people who are best at training models are not the same people who should be building the production systems that run them. Data science and enterprise application development require genuinely different skills. Different failure mode awareness. Different operational instincts.

He’s right, and the gap is wider than most organizations admit. Getting a model to perform well in a Jupyter notebook is real work. Getting that same capability to survive a SOX audit, handle concurrent users, produce audit trails, and degrade gracefully under load — that’s a fundamentally different discipline. The architectural decisions between those two worlds aren’t about lines of code. They’re about what you optimize for.
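To make "degrade gracefully under load" concrete, here is a minimal sketch of one common technique, a circuit breaker, in TypeScript. The class name, the thresholds, and the synchronous call signature are all assumptions chosen for brevity; a real model call would be asynchronous, and nothing here comes from Johnson's talk or from any particular framework.

```typescript
// Minimal circuit breaker sketch. All names and thresholds are hypothetical.
type BreakerState = "closed" | "open" | "half-open";

class CircuitBreaker {
  private failures = 0;
  private openedAt = 0;
  private state: BreakerState = "closed";
  private readonly failureThreshold: number; // consecutive failures before opening
  private readonly cooldownMs: number;       // time to stay open before a trial call

  constructor(failureThreshold: number, cooldownMs: number) {
    this.failureThreshold = failureThreshold;
    this.cooldownMs = cooldownMs;
  }

  // Runs `primary` unless the breaker is open; returns the fallback instead of throwing.
  call<T>(primary: () => T, fallback: () => T, nowMs: number = Date.now()): T {
    if (this.state === "open") {
      if (nowMs - this.openedAt < this.cooldownMs) {
        return fallback(); // shed load: leave the failing dependency alone
      }
      this.state = "half-open"; // cooldown elapsed: allow one trial call
    }
    try {
      const result = primary();
      this.failures = 0;
      this.state = "closed";
      return result;
    } catch {
      this.failures += 1;
      if (this.state === "half-open" || this.failures >= this.failureThreshold) {
        this.state = "open";
        this.openedAt = nowMs;
      }
      return fallback();
    }
  }

  currentState(): BreakerState {
    return this.state;
  }
}
```

The design choice worth noticing is that the caller always gets an answer: either the model's result or a deliberately boring fallback, such as routing the user to a human agent. A notebook prototype rarely needs that path, but a production service usually does.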

I explored a version of this in The Browser Is Not an API — the tools you use for research shape how you think about production. When your primary interface is a notebook designed for experimentation, you build for experimentation. When your primary interface is a framework designed for production services, you build for production services. The tooling isn’t neutral.

The Python Paradox

Python deserves its due. Johnson’s talk focused on the enterprise side, so let me fill in the other half of the picture.

PyPI, the Python Package Index, hosts over 300,000 packages. Every major AI SDK ships Python first. The innovation velocity is unmatched: when Anthropic or OpenAI releases a new capability, the Python SDK typically gets it days or weeks before anything else. The research community thinks in Python. The training pipelines run in Python. The evaluation frameworks are Python. If you’re doing model fine-tuning, embeddings research, or evaluation pipelines, Python isn’t just a good choice; it’s the only serious one.

The paradox: the language that produces the best research prototypes also produces the worst regulated production systems. Not because Python can’t do production — it obviously can. Django and FastAPI run plenty of serious applications. The problem is that the AI framework ecosystem built on top of Python optimizes for experimentation speed over operational stability.

LangChain is the canonical example. “Academically interesting but operationally fragile” is the kindest honest assessment. Deep abstraction layers that obscure what’s actually happening. Versioning instability that breaks production deployments. Error messages that require reading framework source code to diagnose. This isn’t a Python problem — it’s a framework ecosystem problem. But the framework ecosystem is where enterprises make their stack decisions, and right now, the Python AI framework ecosystem optimizes for the demo, not the deployment.

The Java Case: Boring Is a Feature

Johnson’s solution, naturally, is Java. And the ecosystem is more mature than most AI practitioners realize.

Spring AI hit 1.0 GA. LangChain4j — backed by Red Hat and Microsoft, with hundreds of production deployments — provides a Java-native approach to the same agent patterns. Johnson’s own project, Embabel, takes a genuinely interesting approach using Goal-Oriented Action Planning (GOAP) from game AI to compose agent workflows. It’s too early to evaluate Embabel for production, but the architectural thinking behind it — planning over imperative chains — is worth watching.

Java’s superpower here isn’t innovation speed. It’s production maturity. For a bank already running Spring Boot microservices, adding AI capabilities through Spring AI means: same observability pipeline, same deployment model, same team, same runbooks, same 3 AM incident response. No new operational surface area. No new monitoring stack. No retraining operations engineers.

That’s not exciting. That’s the point. In my Reference Architecture, Java fits naturally at Layers 1 and 2 — the infrastructure and integration layers where operational maturity matters more than framework novelty.

TypeScript: The Language Nobody Expected

Here’s where I want to build on Johnson’s argument, because there’s a dark horse in this race that he acknowledged but didn’t fully explore: TypeScript.

I built my entire personal AI assistant on top of PAI (Daniel Miessler’s open-source Personal AI Infrastructure framework), extending it with dozens of custom skills, agent compositions, and workflow automations that handle everything from research to code generation. All of it runs in TypeScript on Claude Code. Not because I set out to make a statement about language choice, but because TypeScript offered the best combination of type safety, first-class async/await, and deployment reach of any language in the stack.

The numbers tell the story. The Vercel AI SDK, the most popular TypeScript AI framework, has over 20 million monthly npm downloads. Mastra, built by the team behind Gatsby, is bringing opinionated agent orchestration patterns to TypeScript. LangChain.js brings the core abstractions of the Python original to TypeScript. The TypeScript AI ecosystem isn’t catching up; it has already arrived.

Johnson himself said TypeScript is his favorite language and that he plans to bring Embabel to TypeScript. This is part of what makes his talk so worth engaging with — he’s not dogmatic. He sees the same polyglot future and is already moving toward it.

If your integration layer is already TypeScript — and for many modern enterprises, it is — then AI orchestration in TypeScript extends your existing stack rather than introducing a new one. Same argument Java makes, different starting point. I explored this dynamic in Claude Code vs Copilot CLI and the agentic engineering maturity framework — the tooling you choose shapes the operational patterns you develop.

The Three-Language Architecture

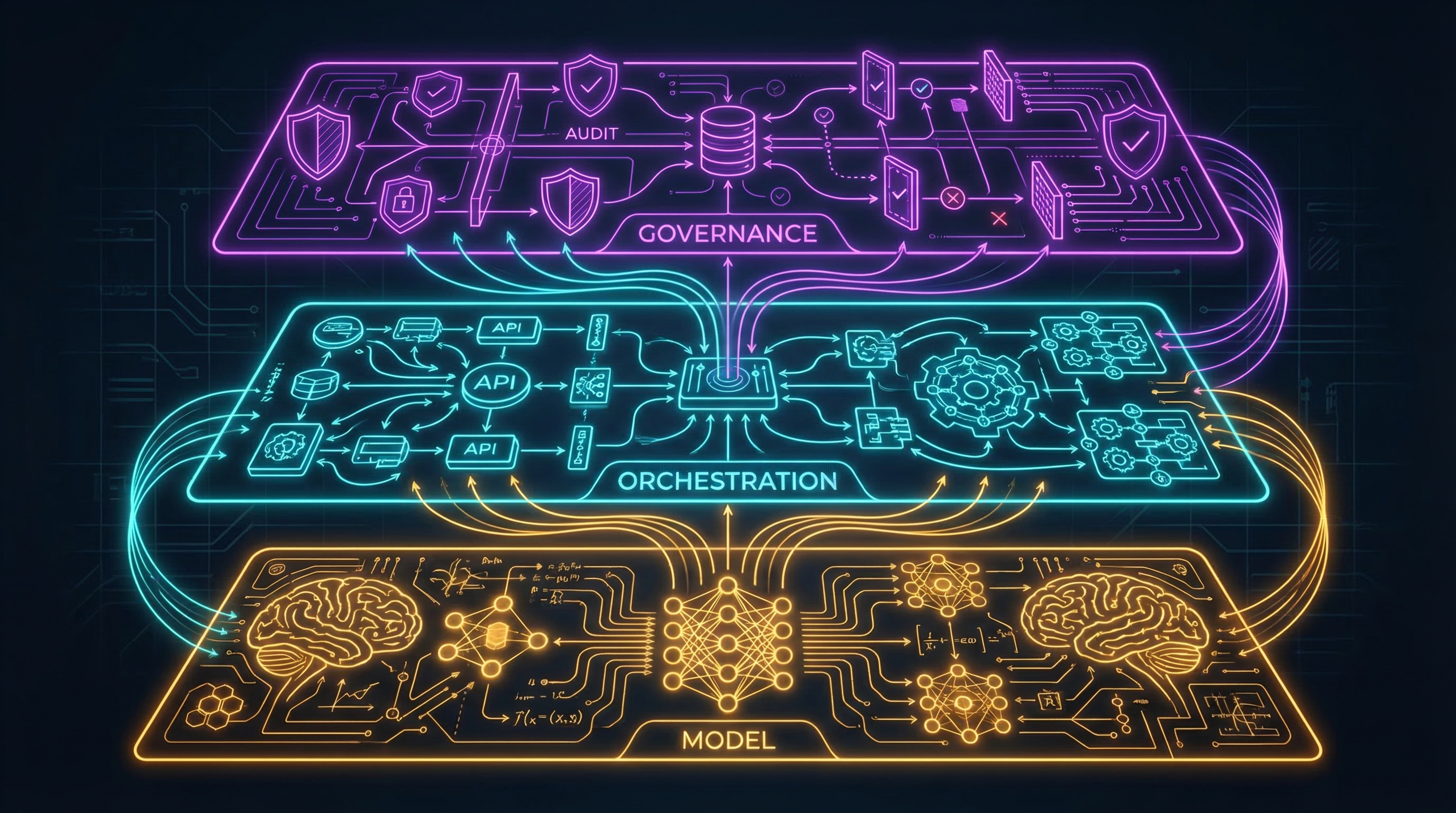

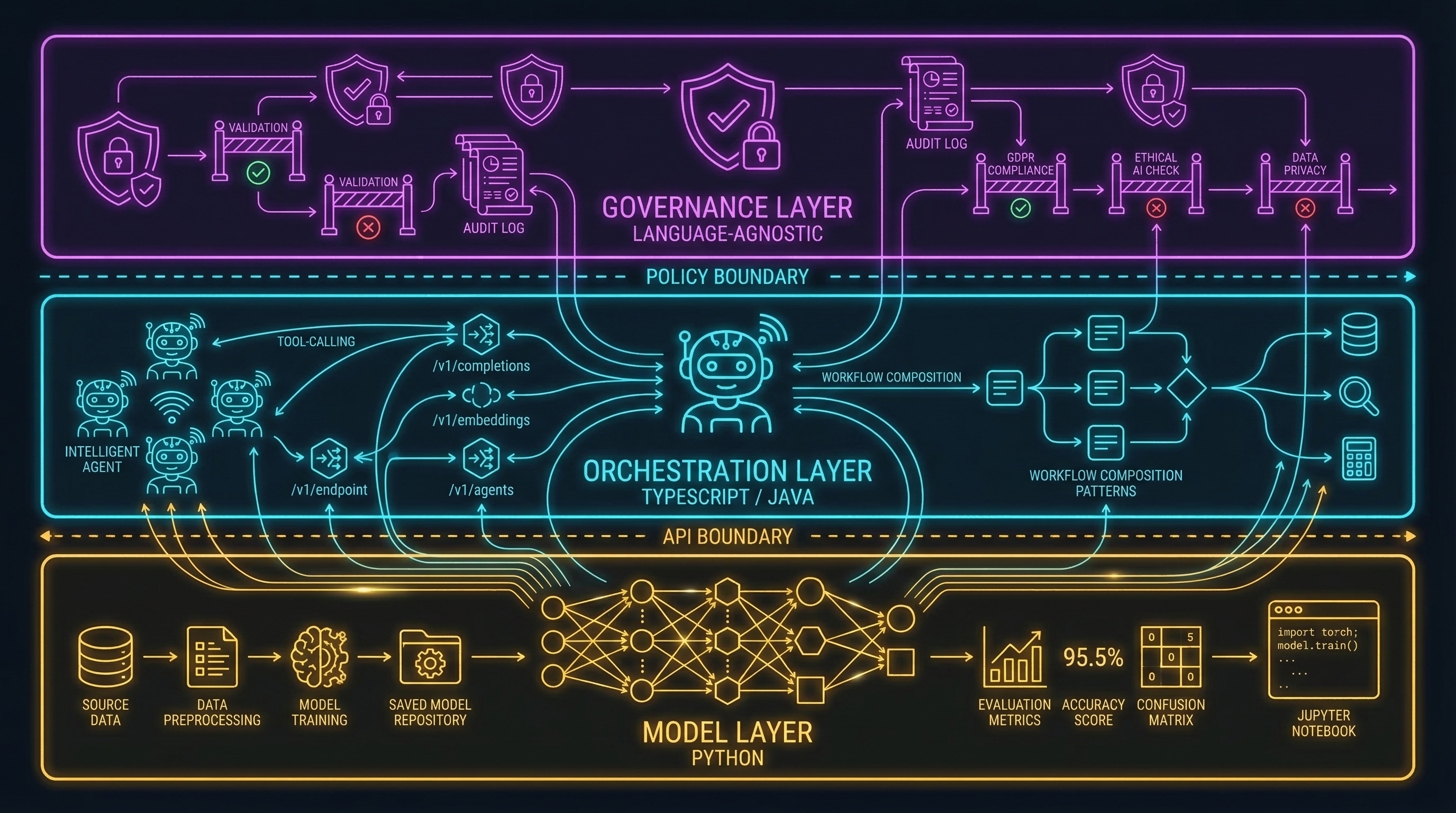

Here’s my proposed resolution. Not “pick one,” but recognize three layers with different optimization targets:

1. Model layer — Python. Fine-tuning, embeddings, evaluation pipelines, research prototyping. Python owns this completely. Don’t fight it. Don’t let it leak into production orchestration. Keep it behind an API boundary.

2. Orchestration and integration layer — TypeScript or Java. Agent composition, tool calling, API integration, workflow management. Use whatever your enterprise already uses for its integration tier. If you’re a Spring Boot shop, use Spring AI or LangChain4j. If your integration layer is Node/TypeScript, use the Vercel AI SDK or Mastra. The point is operational continuity — your AI orchestration should be operated by the same team, with the same tools, using the same patterns as the rest of your integration infrastructure.

3. Governance and infrastructure layer — language-agnostic. Validation hooks, trust calibration, audit trails, compliance gates. This layer should work regardless of which language your agents run in. I built validation hooks to be deliberately language-agnostic for exactly this reason — the governance layer can’t be coupled to the orchestration layer’s language choice, because the governance layer needs to survive the orchestration layer being swapped out.
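One way to picture a governance layer that does not care which language the agents run in is a gate that only ever sees serialized JSON. The sketch below is a hypothetical illustration, not my actual validation hooks: the envelope fields, the `quote_refund_policy` action name, the single rule, and the in-memory audit log are all assumptions made up for this example.

```typescript
// Hypothetical JSON envelope. Any orchestrator (Java, TypeScript, Python)
// would serialize agent output to this shape before it reaches the gate.
interface AgentOutputEnvelope {
  agentId: string;
  action: string;         // e.g. "quote_refund_policy"
  output: string;         // text the agent wants to send to the user
  citedSources: string[]; // identifiers of documents backing the output
}

interface GateDecision {
  allowed: boolean;
  reasons: string[];
}

interface AuditRecord {
  timestamp: string;
  agentId: string | null; // null when the envelope could not be parsed
  action: string | null;
  decision: GateDecision;
}

// Illustrative rule: these actions must cite at least one source document.
const ACTIONS_REQUIRING_SOURCES = new Set<string>(["quote_refund_policy"]);

// In-memory stand-in for an append-only audit store.
const auditLog: AuditRecord[] = [];

// Check untrusted JSON structurally instead of trusting the sender's types.
function parseEnvelope(json: string): AgentOutputEnvelope | null {
  let value: unknown;
  try {
    value = JSON.parse(json);
  } catch {
    return null;
  }
  if (typeof value !== "object" || value === null) return null;
  const r = value as Record<string, unknown>;
  const agentId = r["agentId"];
  const action = r["action"];
  const output = r["output"];
  const sources = r["citedSources"];
  if (typeof agentId !== "string" || typeof action !== "string" || typeof output !== "string") return null;
  if (!Array.isArray(sources) || !sources.every((s) => typeof s === "string")) return null;
  return { agentId, action, output, citedSources: sources as string[] };
}

// Evaluates one envelope and records the decision, whether allowed or blocked.
function evaluate(json: string, now: Date = new Date()): GateDecision {
  const envelope = parseEnvelope(json);
  const reasons: string[] = [];
  if (envelope === null) {
    reasons.push("malformed envelope");
  } else {
    if (envelope.output.trim().length === 0) reasons.push("empty output");
    if (ACTIONS_REQUIRING_SOURCES.has(envelope.action) && envelope.citedSources.length === 0) {
      reasons.push("policy statement without a cited source");
    }
  }
  const decision: GateDecision = { allowed: reasons.length === 0, reasons };
  auditLog.push({
    timestamp: now.toISOString(),
    agentId: envelope === null ? null : envelope.agentId,
    action: envelope === null ? null : envelope.action,
    decision,
  });
  return decision;
}
```

Because the gate's only input is a JSON string, a Spring AI service and a Vercel AI SDK service could both call it without sharing any code. Every decision lands in the audit log, including the blocked ones, which is the part a postmortem would ask about.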

The key insight: these aren’t competing choices. They’re complementary layers. Python does what Python does best; nobody is writing CUDA kernels in Java. Java and TypeScript do what they do best; nobody is running a SOX-audited production service on a Jupyter notebook. The governance layer sits above all of it.
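The API boundary between the model layer and the orchestration layer can be sketched as a small versioned contract. This is an assumption-heavy illustration: the `ClassifyRequest` and `ClassifyResponse` shapes, the `"v1"` version string, and the injected `Transport` function are hypothetical names invented here, standing in for whatever your Python model service actually exposes.

```typescript
// Hypothetical wire contract for a Python-hosted model service.
// Field names and the version string are assumptions for illustration.
interface ClassifyRequest {
  contractVersion: "v1";
  text: string;
}

interface ClassifyResponse {
  contractVersion: "v1";
  label: string;
  confidence: number; // expected range: 0 to 1
  modelId: string;    // recorded so audits can tie an answer to a model build
}

// The orchestration layer depends on this interface, not on Python.
interface Classifier {
  classify(text: string): Promise<ClassifyResponse>;
}

// Injected transport: sends a JSON body, resolves with the raw JSON reply.
type Transport = (body: string) => Promise<string>;

// Static types stop at the network edge, so the payload is checked at runtime.
function parseClassifyResponse(raw: string): ClassifyResponse {
  const value: unknown = JSON.parse(raw);
  if (typeof value !== "object" || value === null) {
    throw new Error("response is not a JSON object");
  }
  const r = value as Record<string, unknown>;
  const label = r["label"];
  const confidence = r["confidence"];
  const modelId = r["modelId"];
  if (r["contractVersion"] !== "v1") {
    throw new Error("unsupported contract version");
  }
  if (typeof label !== "string" || typeof modelId !== "string") {
    throw new Error("missing label or modelId");
  }
  if (typeof confidence !== "number" || confidence < 0 || confidence > 1) {
    throw new Error("confidence out of range");
  }
  return { contractVersion: "v1", label, confidence, modelId };
}

// Builds a Classifier on top of any transport (HTTP, queue, in-process stub).
function createClassifier(transport: Transport): Classifier {
  return {
    async classify(text: string): Promise<ClassifyResponse> {
      const request: ClassifyRequest = { contractVersion: "v1", text };
      const raw = await transport(JSON.stringify(request));
      return parseClassifyResponse(raw);
    },
  };
}
```

In a test, the transport can be a stub such as `async () => JSON.stringify({ contractVersion: "v1", label: "refund", confidence: 0.9, modelId: "stub" })`, so the orchestration code is exercised without a Python process running. The data science team can then retrain or replace the model freely, as long as the contract version holds.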

Who Decides Matters More Than What They Choose

Johnson’s strongest argument isn’t about Java. It’s about organizational authority.

The architect who understands failure modes, operational overhead, and regulatory requirements should choose the production framework. The data scientist who understands model behavior, evaluation methodology, and training dynamics should choose the model-layer tooling. These are different decisions requiring different expertise, and they should have different owners.

The dysfunction Johnson is calling out — and he’s right to call it out — is when one person’s expertise is applied to both decisions. When the data scientist who’s brilliant at model evaluation also gets to choose the production orchestration framework, you get LangChain in production. When the enterprise architect who’s brilliant at production systems also gets to choose the model evaluation tooling, you get someone trying to do ML research in Java. Both are bad outcomes.

This is the judgment argument applied to language stacks. The moat isn’t knowing Python or Java or TypeScript. It’s knowing which one to apply where, and having the organizational structure to let the right people make the right decisions.

What I Don’t Have Figured Out Yet

Full disclosure: I’m a Java enterprise architect who extended an open-source AI framework with extensive custom infrastructure in TypeScript. I grew up on Spring — Johnson’s work fundamentally shaped how I think about enterprise software, and I owe a real debt to the design philosophy he pioneered. So I’m predisposed to agree with him on the production discipline argument, and predisposed to extend it with the TypeScript angle, because that’s where I’ve been most productive with AI tooling.

My PAI setup is personal infrastructure. It handles my workflows, my research, my content pipeline. It’s not battle-tested enterprise AI running under SOX audit constraints in a regulated financial institution. The patterns I’ve found productive at personal scale may not survive the operational demands of enterprise scale. That distinction matters, and I don’t want to gloss over it.

The three-language architecture I’m proposing is a framework for thinking, not a proven enterprise pattern. It needs to be pressure-tested by teams running real production AI workloads in regulated environments. I’m confident in the conceptual separation. I’m less confident in the specific boundary placements.

The Question That Will Matter in 18 Months

The language stack question will matter less over time. Tool integration is converging on the Model Context Protocol (MCP) across languages. Frameworks are borrowing patterns from each other. The model layer APIs are standardizing. The operational gap between “Python AI framework” and “Java AI framework” will narrow as the ecosystem matures.

What won’t change is the skills gap. The difference between someone who understands model behavior and someone who understands production systems isn’t going away. If anything, it’s widening — as AI systems become more autonomous, the production discipline required to operate them safely increases, not decreases.

The real question isn’t which language runs your agents. It’s whether the person choosing the language has ever been woken up at 3 AM by a production incident. That’s the instinct that separates a demo from a deployment.

If the three-layer architecture resonated, the Reference Architecture goes deeper on the 4-layer model for organizing AI tooling in regulated environments. And if you’re thinking about how to calibrate trust for AI systems regardless of language stack, the Trust Ladder provides a framework for that.